The narrative of human progress has long been anchored in two distinct yet converging quests: the desire to understand our biological past and the ambition to engineer our technological future. However, recent developments in both evolutionary genetics and artificial intelligence are dismantling long-held assumptions. From a provocative new theory that challenges the existence of "inner Neanderthal" DNA to the growing realization that "human-in-the-loop" safeguards in AI warfare may be little more than a comforting myth, the foundations of our modern identity are being recalibrated. As the White House navigates a complex relationship with AI pioneers like Anthropic and the global supply chain for rare earth elements enters a period of intense volatility, the intersection of science, security, and industry has never been more fraught with uncertainty.

For over a decade, the prevailing consensus in paleoanthropology has been that modern humans carry a small but significant percentage of Neanderthal DNA, the result of ancient interbreeding events between Homo sapiens and our extinct cousins. This "inner Neanderthal" concept became a cornerstone of 21st-century evolutionary science, offering a tangible link to a vanished lineage. Yet, in a bold departure from this established view, a team of French geneticists has proposed a radical alternative. Their research suggests that the genetic signatures we have interpreted as evidence of interbreeding could instead be the result of complex population structures within ancient Africa.

This hypothesis posits that genetic variants can become concentrated in small, isolated groups over millennia, creating patterns that mimic cross-species hybridization when analyzed with modern genomic tools. If the theory gains traction, it would fundamentally alter our understanding of human migration and development, suggesting that our "cousins" may have been more distant than we cared to admit. The implications extend beyond academia: they touch on what makes us human and raise the question of whether our history is one of integration or of isolated divergence.

While scientists look backward to redefine our origins, military strategists are looking forward to a battlefield increasingly governed by algorithms. The concept of "human-in-the-loop" (HITL) has become the ethical and legal bedrock of autonomous warfare. The theory is simple: as long as a human operator remains the final arbiter of lethal force, the machine remains a tool rather than an autonomous agent. However, experts are increasingly warning that this oversight is an illusion. In high-velocity combat environments, where AI systems process vast datasets and identify targets in milliseconds, the human "overseer" lacks the cognitive bandwidth to understand the machine’s rationale.

When a human is presented with a target recommendation from a "black box" system, they are rarely providing "accountability" or "context"; instead, they are often merely rubber-stamping a decision they lack the time or information to challenge. This cognitive offloading creates a dangerous gap in responsibility. As AI plays a more central role in global conflicts, from drone swarms in Eastern Europe to surveillance systems in the Middle East, the need for new safeguards has shifted from a question of ethics to a matter of national security. The danger is not necessarily a "rogue" AI, but a human operator who is functionally obsolete while technically "in control."

This tension between innovation and safety is nowhere more apparent than in the current relationship between the U.S. government and the AI industry. Despite public friction and a perceived "culture war" between certain political factions and safety-focused firms like Anthropic, the strategic value of high-level intelligence models remains undeniable. Reports indicate that the White House is actively negotiating access to Anthropic’s "Mythos" model, a system the company itself deemed too dangerous for general public release.

The paradox is striking: the state is blacklisting the very entities it relies upon for the next generation of national defense. Anthropic’s decision to withhold Mythos highlights a growing trend of "capability-based" gatekeeping, where the developers of AI are more cautious than the regulators. While Anthropic has released more stable, less risky versions like Claude Opus 4.7, the government’s hunger for the "most powerful" model suggests a burgeoning AI arms race where the threshold for "too dangerous" is constantly being shifted by geopolitical necessity.

Parallel to these security concerns is a crisis of corporate governance within the private sector. OpenAI, once a non-profit dedicated to the democratic distribution of AI benefits, is now embroiled in legal and ethical battles that threaten to overshadow its technical achievements. The leadership of Sam Altman has come under intense scrutiny, with critics pointing to a web of opaque personal investments that may create significant conflicts of interest. As OpenAI pivots toward more "agentic" systems—AI that can autonomously write code and execute complex tasks—the stakes for the company’s "founding mission" have never been higher. A looming jury trial will soon determine whether the organization has strayed too far from its original altruistic goals, a case that could set a precedent for how all major AI labs are governed in the future.

The physical infrastructure required to sustain this digital revolution is also facing a reckoning. The expansion of AI is currently being choked by two primary factors: power and land. Recent data suggests that nearly 40% of planned data center projects are at risk of significant delays. This bottleneck is driven by a combination of grid instability and local opposition—the "NIMBY" (Not In My Back Yard) phenomenon—as communities push back against the massive energy consumption and environmental footprint of these facilities. Without the hardware to house the models, the software revolution risks stalling, creating a strategic vulnerability for nations that cannot scale their infrastructure quickly enough.

This vulnerability is compounded by the military’s growing reliance on private satellite networks. A recent Starlink outage during Navy drone tests exposed the fragility of modern defense systems that depend on commercial infrastructure. As the Pentagon taps legacy automakers like Ford and General Motors for military innovation, the integration of private-sector agility with public-sector security remains a work in progress. The reliance on a single provider like SpaceX for global connectivity is increasingly seen as a single point of failure that adversaries could exploit.

The global competition for AI supremacy is also seeing a surge from the East. Alibaba’s release of its "Happy Oyster" world model represents a significant attempt by Chinese tech giants to bridge the gap in "physical reality comprehension." These models aim to go beyond text and images, attempting to understand cause and effect within the physical world—a prerequisite for advanced robotics and autonomous manufacturing. Meanwhile, Google is doubling down on personalization, integrating its Gemini AI into the deepest recesses of user data to generate hyper-tailored content. While this promises a more seamless user experience, it raises profound privacy concerns as the line between "personal assistant" and "personal surveillance" continues to blur.

As we navigate these transitions, the cultural impact of technology is being felt in unexpected ways. In South Korea, AI-powered smartglasses are being used to provide real-time translations in theaters, potentially giving traditional performing arts a "K-Pop moment" on the global stage. Conversely, the creative community is pushing back against the very tools that might expand their reach. Global voice actors are currently in a standoff with Hollywood studios, fighting against the use of their own voices to train the AI models that are designed to replace them. It is a microcosm of the broader labor struggle in the age of automation: the creators are being used to build the engines of their own obsolescence.

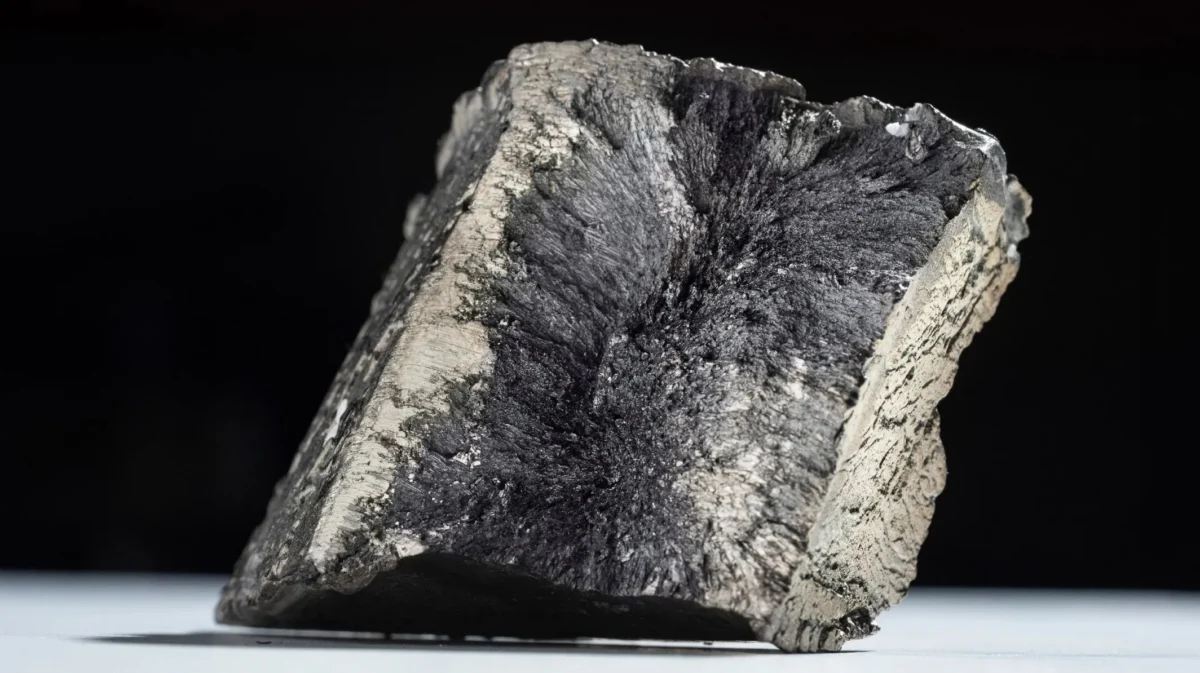

Finally, the transition to a high-tech, low-carbon future is being dictated by the geography of rare earth elements. These minerals are the lifeblood of everything from electric vehicle motors to advanced missile guidance systems. With China currently dominating the extraction and processing of these materials, Western nations are in a frantic race to find unconventional sources. From "urban mining" (recycling old electronics) to extracting minerals from coal ash, the search for critical minerals has become a matter of sovereign survival. The race for these elements will determine which nations can actually meet their climate goals and which will remain beholden to foreign supply chains.

In conclusion, the "Download" of our current era is one of profound reassessment. We are discovering that our biological past may be less integrated than we thought, while our technological future is becoming more entangled than we are prepared for. The illusion of human control in warfare, the volatility of AI governance, and the physical constraints of our digital ambitions all point toward a decade of radical transformation. As we move through what former NSA officials describe as a "dark period" where offensive AI holds the advantage, the need for transparency, ethical clarity, and strategic foresight has never been more urgent. The path forward requires us to look clearly at the machines we are building—and the humans we used to be.