The rapid proliferation of generative artificial intelligence has moved beyond the stage of mere novelty, embedding itself in the foundational architecture of global commerce, national security, and public health. While the public release of chatbots built on Large Language Models (LLMs), most visibly ChatGPT in late 2022, signaled a shift in how humans interact with machines, the years since have revealed a more complex reality. We are now witnessing the "second wave" of the AI revolution: a period defined not by the wonder of discovery but by the systemic challenges of implementation, the weaponization of the technology by bad actors, and growing skepticism about the actual utility of AI in high-stakes environments such as healthcare.

The Industrialization of AI-Driven Cybercrime

The democratization of capable language models has inadvertently handed cybercriminals a sophisticated toolkit. Before generative AI, the effectiveness of phishing and social engineering was often limited by a "human bottleneck": the time required to craft convincing narratives, and the linguistic tells that frequently gave away scammers writing in a language other than their own. Today, that bottleneck has largely disappeared.

Cybercriminals are now using LLMs to run what security analysts call "turbocharged phishing." These are not the generic, error-ridden emails of the past; they are highly personalized, context-aware messages that mimic the tone and style of trusted colleagues or institutions with startling accuracy. Beyond text, hyperrealistic deepfakes, both audio and video, have introduced a new dimension of risk. A growing variant of "CEO fraud" uses AI-generated voices to authorize fraudulent wire transfers during live phone calls.

The threat is also becoming automated. Malicious actors are deploying AI to run automated vulnerability scans, identifying weaknesses in corporate networks faster than human IT teams can respond. This creates an asymmetric battlefield: defenders must secure every possible entry point, while an AI-powered attacker needs to find only one flaw and can now search for it through round-the-clock automated probing. As the cost of launching a sophisticated, large-scale cyberattack plummets, organizations are being forced to rethink their security postures from the ground up. Many are moving toward "Zero Trust" architectures, in which identity is never assumed and every request must be verified, even when the voice on the other end of the line sounds familiar.

The Clinical Paradox: Accuracy vs. Outcomes in Healthcare

As AI moves into the hospital ward, a different set of challenges emerges. On the surface, its integration into healthcare looks like a resounding success. Doctors increasingly rely on AI for administrative work, particularly automated notetaking and the summarization of patient histories. In diagnostic settings, AI models have shown a striking ability to interpret X-rays, MRIs, and pathology slides, in some studies matching or exceeding the accuracy of human specialists at identifying specific anomalies.

However, a critical gap has appeared between technical accuracy and clinical efficacy. While a model may be 99% accurate at flagging a potential nodule on a lung scan, the medical community is still grappling with whether this leads to better patient outcomes. There is a risk of "over-diagnosis," where AI identifies minor abnormalities that would never have progressed to clinical significance, leading to unnecessary biopsies, surgeries, and patient anxiety.

The industry is currently facing a "clinical paradox": we have tools that can see more than ever before, but we lack the longitudinal data to prove that acting on this information actually saves lives or improves the quality of care. This has led to a call for more rigorous, peer-reviewed studies that move beyond bench testing and into real-world clinical trials. Until we can bridge the gap between "accurate detection" and "improved survival rates," the true value of healthcare AI remains an open question.

Geopolitical Friction and the Open-Source Frontier

The global race for AI supremacy has shifted into a higher gear, marked by both technological breakthroughs and escalating geopolitical tensions. The recent unveiling of DeepSeek-V4, the new flagship model from the Chinese AI firm DeepSeek, represents a significant milestone for the open-source movement. By releasing a model that rivals leading closed-source systems such as OpenAI’s GPT-4 and Google’s Gemini, DeepSeek is challenging Western dominance of the AI landscape.

Crucially, DeepSeek-V4 has been optimized for Huawei’s chip technology, signaling China’s growing resilience against Western semiconductor export restrictions. This "hardware-software co-design" allows Chinese firms to squeeze maximum performance out of domestic silicon, potentially bypassing the need for high-end Western GPUs.

This technological progress is occurring against a backdrop of intense diplomatic friction. The U.S. government has recently escalated its rhetoric, accusing Chinese entities of "industrial-scale" AI theft. A recent White House memorandum alleges that Chinese firms are systematically exploiting American models and intellectual property to accelerate their own development. Beijing has dismissed these claims as "slander," but the accusations highlight the degree to which AI has become a primary theater of what is often described as a 21st-century "Cold War." The battle is no longer only about who has the best algorithms, but about who controls the data, the talent, and the underlying silicon.

The Economic Recalibration of Silicon Valley

The "move fast and break things" era of AI development is giving way to a more sober, profit-driven reality. For years, the major AI labs heavily subsidized their products, offering advanced tools for free to capture market share. That strategy is coming to an abrupt end, as investors demand a return on the billions of dollars poured into GPU clusters and data centers.

This pressure is manifesting in several ways. First, we are seeing a "monetization pivot," where previously free tiers of advanced AI are being gated behind increasingly expensive subscriptions. Second, the massive capital expenditure (CapEx) required to stay at the cutting edge is forcing even the largest tech giants to trim their workforces. Meta’s recent announcement of a 10% staff reduction—roughly 8,000 employees—is a direct result of this shift. The company is effectively reallocating its budget from human capital to AI infrastructure, a move that has sparked growing "anti-AI" protests among tech workers who fear they are being automated out of their own industry.

The industry is also becoming more litigious. The ongoing feud between Elon Musk and Sam Altman, which has now moved into the discovery phase of a high-profile lawsuit, is exposing internal details of OpenAI’s transition from a non-profit research lab to a commercial powerhouse. The case is about more than personal animosity; it reflects a fundamental debate over the soul of AI development, namely whether AI should be a transparent, "open" resource for humanity or a proprietary, profit-making engine.

The Human Response: Digital Sobriety and Regulation

As AI and algorithms become more pervasive, a counter-movement is beginning to take root among the general public and policymakers. There is a growing sense of "algorithmic fatigue"—a realization that the constant mediation of life through screens and AI-driven feeds may be harming social cohesion and mental health.

In Norway, the government is moving to enforce some of the world’s strictest social media age restrictions, aiming to ban access for children under 16. Prime Minister Jonas Gahr Støre’s assertion that "childhood must not be taken over by algorithms" reflects a broader European trend toward protecting minors online. Related movements are gaining traction in the Philippines and the United States, where some parents and educators are pushing to keep generative AI out of the classroom until its long-term psychological effects are better understood.

This push for "digital sobriety" is also manifesting in the "Month Offline" movement—a grassroots initiative encouraging adults to ditch their smartphones for 30 days. Much like "Dry January" for alcohol, this movement aims to reset the human relationship with technology, prioritizing face-to-face interaction over algorithmic engagement.

The Extraterrestrial Perspective

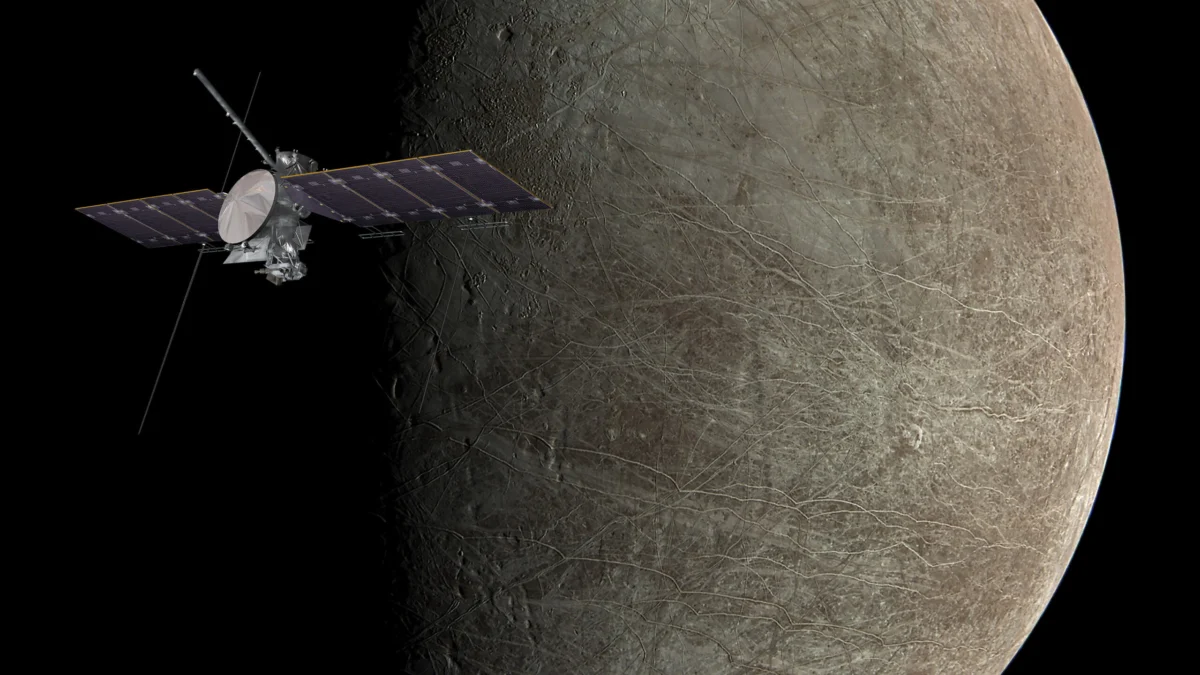

While the world remains fixated on the digital and geopolitical challenges of AI on Earth, the frontier of exploration continues to expand outward. NASA’s Europa Clipper mission to Europa, Jupiter’s icy moon, serves as a poignant reminder of scientific goals that transcend terrestrial squabbles.

Europa is widely considered one of the most promising places to search for extraterrestrial life in our solar system. Scientists believe that beneath its thick icy crust lies a vast saltwater ocean, kept liquid by heat generated as Jupiter’s gravity tidally flexes the moon. The search for organic molecules and energy sources in Europa’s depths represents the pinnacle of human curiosity and scientific endeavor. While we struggle to manage the "artificial" intelligence we have created, the search for "alien" life, and for a fundamental understanding of life itself, remains one of our most profound callings.

As we navigate this new era, the challenge will be to harness the undeniable power of AI for genuine progress while mitigating the systemic risks it poses to our security, our economy, and our very sense of what it means to be human. The generative shift is here, and its consequences will be felt for generations to come.