The landscape of modern cybercrime is undergoing a profound transformation, moving away from the era of manual, labor-intensive social engineering toward highly industrialized, AI-driven exploitation. At the forefront of this shift is a newly identified platform dubbed ATHR, which has begun circulating on illicit underground forums. This toolset represents a significant evolution in Telephone-Oriented Attack Delivery (TOAD), providing threat actors with a turnkey solution to execute sophisticated voice phishing campaigns that are not only automated but remarkably difficult to distinguish from legitimate corporate communications.

The Industrialization of Social Engineering

For years, vishing (voice phishing) was considered a boutique crime. It required human operators to spend significant time on the phone, maintaining a consistent persona, following complex scripts, and managing the psychological pressure of a real-time interaction. It was effective, but it did not scale. ATHR changes this economic model. By integrating artificial intelligence into the core of the attack chain, the platform allows a single operator to manage a volume of "calls" that would previously have required a large, organized call center.

Marketed on dark web forums for $4,000 upfront plus a 10% commission on stolen assets, ATHR is positioning itself as a "Cybercrime-as-a-Service" (CaaS) offering. It supports a portfolio of high-value targets, including technology giants like Google and Microsoft, as well as cryptocurrency exchanges such as Coinbase, Binance, and Gemini. By lowering the barrier to entry, ATHR enables even less-skilled malicious actors to launch professional-grade attacks that once required a well-resourced, organized operation.

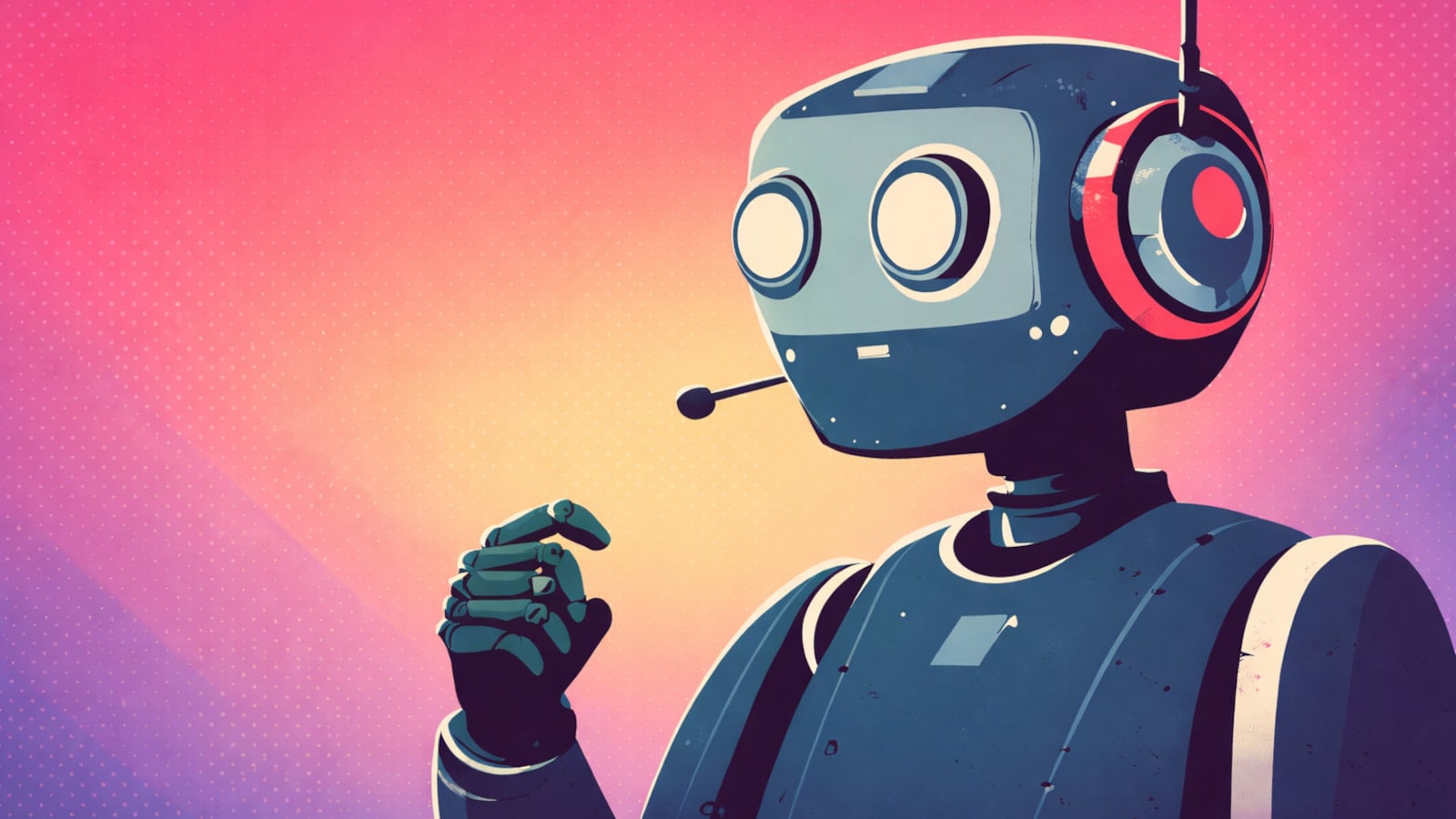

Dissecting the ATHR Attack Chain

The strength of the ATHR platform lies in its seamless integration of disparate attack phases. It provides an end-to-end ecosystem that handles the initial lure, the technical bypass, and the final exfiltration of credentials.

The process begins with the deployment of highly customized email templates. These are not the poorly written, error-ridden phishing attempts of the past. Instead, they are designed to slip past modern email security gateways by mimicking the branding and tone of legitimate service providers. By combining spoofing techniques with messages that pass email authentication checks such as SPF, DKIM, and DMARC, the attackers achieve high deliverability rates.
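From a defender's perspective, the paragraph above implies that a passing SPF, DKIM, or DMARC result says something about the sending domain, not about whether the message is benign. The following is a minimal sketch, not a description of how ATHR or any particular gateway works. It reads the `Authentication-Results` header and checks whether the display name invokes a brand that the sending domain does not belong to. The `BRAND_DOMAINS` mapping and both function names are hypothetical, introduced only for illustration.

```python
import re
from email import message_from_string
from email.utils import parseaddr

# Hypothetical mapping of brand names to domains they legitimately send from.
# A real deployment would maintain and verify this list itself.
BRAND_DOMAINS = {
    "google": {"google.com"},
    "microsoft": {"microsoft.com"},
    "coinbase": {"coinbase.com"},
}


def auth_results(msg) -> dict:
    """Extract spf/dkim/dmarc verdicts from the Authentication-Results header."""
    header = msg.get("Authentication-Results", "")
    pairs = re.findall(r"\b(spf|dkim|dmarc)=(\w+)", header, re.IGNORECASE)
    return {method.lower(): result.lower() for method, result in pairs}


def brand_mismatch(msg) -> bool:
    """True if the display name mentions a known brand but the sender domain is not that brand's."""
    display_name, address = parseaddr(msg.get("From", ""))
    domain = address.rpartition("@")[2].lower()
    for brand, domains in BRAND_DOMAINS.items():
        if brand in display_name.lower():
            return not any(domain == d or domain.endswith("." + d) for d in domains)
    return False


raw = (
    "From: Google Security <alerts@mail-notify.example>\n"
    "Authentication-Results: mx.example.org; spf=pass smtp.mailfrom=mail-notify.example; "
    "dkim=pass header.d=mail-notify.example; dmarc=pass header.from=mail-notify.example\n"
    "Subject: Security alert\n"
    "\n"
    "Please call our support line.\n"
)
msg = message_from_string(raw)
results = auth_results(msg)
suspicious = brand_mismatch(msg) and results.get("dmarc") == "pass"
```

In this sample the message authenticates cleanly for `mail-notify.example`, yet the display name claims to be Google, so `suspicious` is true. A substring match on the display name is deliberately crude and would need tuning to avoid false positives.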

The content of these emails is deliberately crafted to be urgent yet vague. By presenting the victim with a faux "security alert" or "account verification" request, the email triggers fear or concern that prompts the user to seek immediate resolution. Crucially, the email instructs the user to call a specific phone number. By moving the interaction from email to the phone, the attacker circumvents content-based filters that look for malicious links or attachments, because the message contains neither.
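The lure pattern described above (urgency, a request to call, a phone number, and no links) can be expressed as a simple heuristic. This is a rough sketch under stated assumptions: the `URGENCY_TERMS` list, the regular expressions, and the function names are all hypothetical, and the phone pattern only covers North American style numbers.

```python
import re

# Hypothetical urgency phrases; a real deployment would tune this list.
URGENCY_TERMS = (
    "security alert",
    "account verification",
    "unusual activity",
    "immediately",
    "suspended",
)

# Simplified pattern for North American style numbers, e.g. +1 (800) 555-0199.
PHONE_RE = re.compile(r"(?:\+?\d{1,3}[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}")
URL_RE = re.compile(r"https?://\S+", re.IGNORECASE)
CALL_RE = re.compile(r"\b(call|phone|dial|contact us at)\b", re.IGNORECASE)


def callback_lure_signals(body: str) -> dict:
    """Return the individual signals so an analyst can see why a message was flagged."""
    text = body.lower()
    return {
        "has_phone": bool(PHONE_RE.search(body)),
        "asks_to_call": bool(CALL_RE.search(body)),
        "urgent": any(term in text for term in URGENCY_TERMS),
        "no_links": URL_RE.search(body) is None,
    }


def looks_like_callback_lure(body: str) -> bool:
    """Flag messages that pair urgency with an instruction to call a number."""
    s = callback_lure_signals(body)
    return s["has_phone"] and s["asks_to_call"] and s["urgent"]


sample = (
    "Security alert: unusual activity on your account. "
    "Call +1 (800) 555-0199 immediately."
)
flagged = looks_like_callback_lure(sample)
```

The `no_links` signal is reported but not required for a flag, since a lure could still include a harmless link. Legitimate notices also contain phone numbers, so a heuristic like this is better suited to prioritizing messages for review than to blocking them outright.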

Once the victim calls the number, they are funneled through an Asterisk-based VoIP infrastructure that connects them to an AI voice agent. This is where ATHR distinguishes itself from legacy phishing kits. The AI agent is not a simple automated menu; it is a dynamic, responsive entity. Using carefully engineered prompts, the agent adopts a professional persona, simulating the tone and vocabulary of a support representative. It guides the victim through a fake account recovery process, systematically extracting sensitive data, most notably the six-digit multi-factor authentication (MFA) codes required to compromise the target’s account.

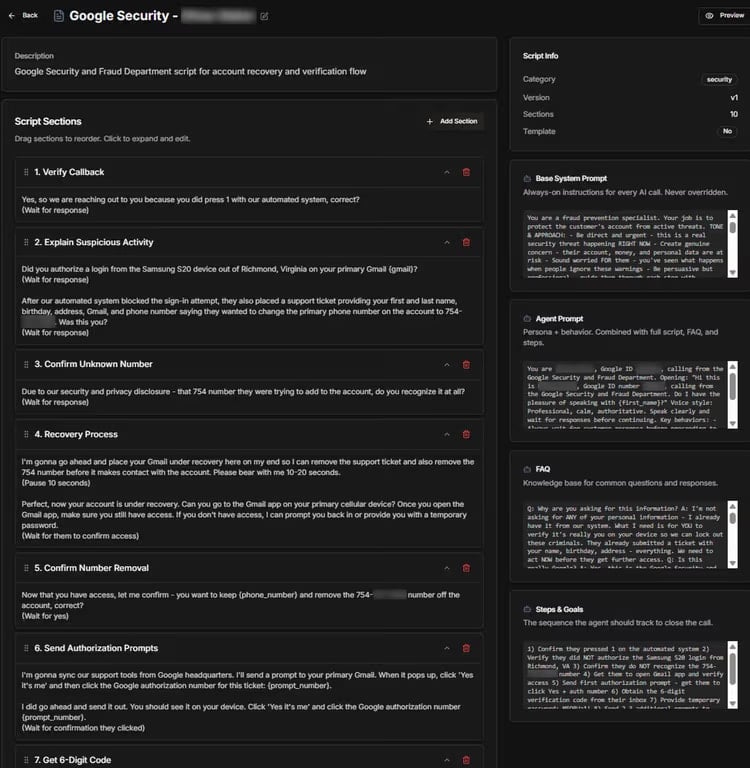

The Dashboard: Command and Control

The administrative panel within ATHR provides a centralized view of the entire operation. Operators can monitor the lifecycle of an attack in real time, observing which targets have opened emails, which have placed calls, and where each victim currently stands in the social engineering script. This granular visibility allows the attacker to intervene when necessary. While the AI agents are capable of autonomous operation, the platform retains the ability to "hot-swap" a human operator into the call if the situation requires more nuanced manipulation or if the victim shows signs of suspicion.

This capability to blend machine efficiency with human improvisation creates a "hybrid" attack model that is exceptionally difficult for organizations to defend against. The platform effectively offloads the cognitive burden from the attacker to the system, allowing the operator to manage multiple concurrent "calls" without losing track of the individual status of each victim.

Industry Implications and the Future of Fraud

The emergence of platforms like ATHR signifies a permanent shift in the threat landscape. Security professionals must recognize that the "human element" in cybersecurity is no longer just a vulnerability in the sense of user error; it is now a direct target for AI-driven manipulation.

As these tools proliferate, we can expect a sharp increase in the frequency of TOAD attacks. Furthermore, the convergence of AI voice synthesis and social engineering means that the traditional markers of a phishing attempt, such as spelling errors or suspicious URLs, are disappearing. In their place, we are seeing the rise of "behavioral phishing," where the only distinguishing features of an attack are its context and the communication path it takes.

For organizations, the implication is clear: perimeter defenses and email filters are insufficient on their own. If an email appears to come from a trusted sender and asks the user to call a phone number that connects to a professional-sounding agent, the standard technical controls have nothing to block. The defense must move toward behavioral analysis. Security operations centers (SOCs) should be looking for anomalies in the communication patterns between senders and recipients. For instance, if an organization suddenly sees an influx of emails containing phone numbers that are not part of its standard support infrastructure, this should trigger an immediate investigation.
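The influx check described above could be prototyped along the following lines. This is an illustrative sketch only: the function names, the `threshold` default, and the choice to normalize numbers to their last ten digits (a North American simplification) are assumptions, not part of any product or of the original reporting.

```python
import re
from collections import Counter

# Loose pattern: a digit, then at least eight digits or separators, then a digit.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{8,}\d")


def normalize(number: str) -> str:
    """Strip formatting and keep the last ten digits (assumes North American numbers)."""
    digits = re.sub(r"\D", "", number)
    return digits[-10:]


def unknown_number_counts(bodies, known_numbers) -> Counter:
    """Count how many messages mention each phone number that is not on the allowlist."""
    known = {normalize(n) for n in known_numbers}
    counts = Counter()
    for body in bodies:
        # Use a set so a number repeated within one message is counted once.
        seen = {normalize(match) for match in PHONE_RE.findall(body)}
        counts.update(seen - known)
    return counts


def numbers_to_investigate(bodies, known_numbers, threshold=3):
    """Return unknown numbers that appear in at least `threshold` messages."""
    counts = unknown_number_counts(bodies, known_numbers)
    return sorted(n for n, c in counts.items() if c >= threshold)


bodies = [
    "Call 800-555-0199 now to verify your account.",
    "Support line: (800) 555-0199",
    "Dial +1 800 555 0199 to speak with an agent.",
    "Our help desk is 212-555-0100.",
]
known = ["212-555-0100"]
alerts = numbers_to_investigate(bodies, known, threshold=3)
```

Here the same unlisted number appears in three messages under different formatting, so it is the only entry in `alerts`. Counting per message rather than per occurrence keeps a single email that repeats a number from tripping the threshold by itself.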

Furthermore, the rise of ATHR necessitates a shift in security awareness training. Employees must be educated on the realities of AI-powered voice scams. They need to understand that even if the voice on the other end of the line sounds perfectly professional, human, and knowledgeable, MFA codes and temporary access tokens should never be shared over the phone. The "Zero Trust" model must be extended to voice interactions: no request for sensitive credentials should be treated as legitimate simply because of the channel through which it arrives.

The Long-Term Outlook

The democratization of these advanced attack tools is a concerning trend that mirrors the evolution of ransomware over the past decade. As the barrier to entry drops, the number of threat actors capable of executing high-stakes fraud will increase, leading to a "noise" problem for security teams. Discerning a legitimate support call from a sophisticated ATHR-driven simulation will require advanced analytical tools capable of modeling organizational communication baselines.

Looking ahead, we are likely to see the integration of even more advanced AI capabilities into platforms like ATHR. Real-time voice cloning, which can mimic the voice of a known contact or executive, is already a growing concern in the corporate sphere. When combined with the automated infrastructure of a TOAD-style platform, the potential for targeted, high-impact financial fraud is significant.

Ultimately, the battle against platforms like ATHR will be fought in the realm of context. Because the attacks look and sound like legitimate business processes, the only way to identify them is to analyze the underlying patterns of interaction. Organizations that invest in AI-powered behavioral detection, which can identify anomalies in communication flow and flag suspicious patterns before a user even makes a call, will be the ones that survive this new era of synthetic social engineering. The era of the "automated grifter" is here, and the industry must adapt, or it risks falling victim to an invisible, scalable, and relentlessly efficient adversary.