The digital advertising landscape has reached a critical inflection point where the sheer velocity of content creation has outpaced traditional moderation methods. As cybercriminals increasingly weaponize generative AI to synthesize deceptive ad campaigns, platform operators are finding themselves locked in an automated arms race. Google has recently signaled a major shift in its enforcement strategy, pivoting toward its proprietary Gemini AI models to identify and neutralize malicious advertising at the point of submission. This evolution marks a transition from reactive, keyword-based filtering to a proactive, context-aware intelligence system capable of parsing intent across billions of daily signals.

The Escalation of the Malvertising Crisis

For years, malvertising (the practice of embedding malicious code or fraudulent links within legitimate advertising networks) has been a persistent challenge for internet giants. Historically, these attacks relied on manual effort: threat actors would craft a landing page, purchase an ad, and hope to snare unsuspecting users before the advertiser account was flagged and banned. The integration of generative AI into the cybercriminal toolkit, however, has fundamentally altered the economics of this threat.

Attackers now use AI to automate the creation of high-fidelity phishing sites, write hyper-personalized ad copy, and generate cloaking scripts that show reviewers a benign page while serving victims a malicious one, bypassing rudimentary security checks. These campaigns often mimic reputable software brands, financial services, and even Google's own authentication portals. By rotating through thousands of domains and chaining URL redirects, these actors can sustain high-impact campaigns that siphon cryptocurrency, deploy trojanized software, or harvest enterprise credentials.

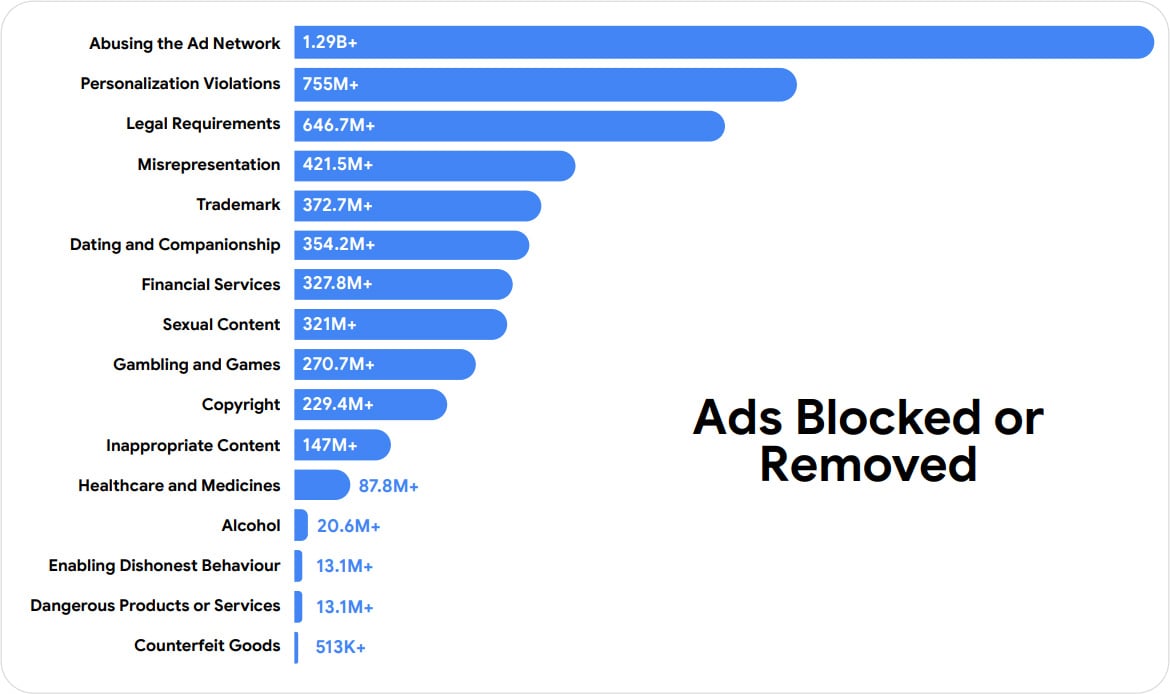

The scope of the challenge is reflected in the volume of enforcement actions. In 2025 alone, Google removed 8.3 billion ads and suspended 24.9 million advertiser accounts. Approximately 602 million of the removed ads were specifically identified as scams. These figures underscore not just the prevalence of the issue but the industrial scale at which threat actors now operate.

From Keywords to Context: The Gemini Advantage

The move to integrate Gemini into the ad-review pipeline represents a departure from legacy detection systems. Previous iterations of Google’s safety infrastructure relied heavily on structured data analysis—looking for blacklisted keywords, suspicious domains, or patterns of policy violations that had been observed in past campaigns. While effective against static threats, this approach often struggled to identify novel, "zero-day" ad campaigns that lacked a historical precedent.
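The weakness of keyword matching is easy to demonstrate. The minimal Python sketch below uses a hypothetical three-phrase blocklist (the phrases and the `keyword_filter` name are illustrative assumptions, not drawn from any real Google system). It catches an ad that reuses a known scam phrase but misses a paraphrase with the same intent, which is exactly the gap context-aware review aims to close.

```python
import re

# Hypothetical blocklist of phrases "observed in past campaigns".
# Illustrative only; real systems use far larger, curated signal sets.
BLOCKLIST = ["free crypto", "guaranteed returns", "verify your wallet"]


def keyword_filter(ad_text: str) -> bool:
    """Return True if the ad text contains any blocklisted phrase (case-insensitive)."""
    # Lowercase and collapse runs of whitespace so trivial spacing tricks don't evade the match.
    normalized = re.sub(r"\s+", " ", ad_text.lower())
    return any(phrase in normalized for phrase in BLOCKLIST)


# A known phrasing is caught...
print(keyword_filter("Claim your FREE   crypto today!"))  # True
# ...but a paraphrase with identical intent slips through.
print(keyword_filter("Complimentary Bitcoin for new members, returns assured"))  # False
```

Adding the paraphrase to the list would fix this one case, but attackers can generate new wordings faster than a list can grow, which is the core limitation the paragraph above describes.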

Gemini's capacity for multimodal reasoning and contextual understanding allows for more granular scrutiny. Rather than evaluating an advertisement in isolation, the system analyzes a holistic web of data points:

- Behavioral Signals: Monitoring the sequence of clicks and landing page interactions to detect suspicious redirection chains.

- Account Provenance: Analyzing the historical behavior of an advertiser, their financial footprint, and the longevity of their linked accounts.

- Intent Synthesis: Evaluating the ad content and its destination URL to determine whether the promise made in the ad matches the reality of the landing page, a process essential for flagging "misrepresentation" violations.
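To make the idea of combining these signal categories concrete, here is a toy Python sketch of a single at-submission decision. Everything in it is hypothetical: the `AdSubmission` fields, the weights, and the 0.5 threshold are placeholders chosen for illustration, and the `claim_matches_landing` flag stands in for the kind of ad-versus-landing-page judgment a model such as Gemini might supply. It is not a description of Google's actual pipeline.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class AdSubmission:
    # Hypothetical schema for illustration only.
    redirect_chain: list[str]    # URLs from the ad click to the final landing page
    account_age_days: int        # how long the advertiser account has existed
    prior_violations: int        # past policy strikes on the account
    claim_matches_landing: bool  # assumed output of an upstream ad-vs-page consistency check


def distinct_hosts(chain: list[str]) -> int:
    """Count distinct hostnames in a redirect chain (a crude proxy for cross-domain hops)."""
    return len({urlparse(url).hostname for url in chain})


def risk_score(ad: AdSubmission) -> float:
    """Combine behavioral, provenance, and intent signals into a score in [0, 1].

    All weights are arbitrary placeholders.
    """
    # Behavioral: each extra domain beyond the first adds risk, capped at 3 extra hops.
    behavioral = min(max(distinct_hosts(ad.redirect_chain) - 1, 0) / 3, 1.0)
    # Provenance: brand-new accounts and repeat offenders score higher (max 1.0).
    new_account = 0.5 if ad.account_age_days < 30 else 0.0
    provenance = new_account + 0.25 * min(ad.prior_violations, 2)
    # Intent: a mismatch between the ad's promise and the landing page is the strongest signal.
    intent = 0.0 if ad.claim_matches_landing else 1.0
    return 0.3 * behavioral + 0.3 * provenance + 0.4 * intent


def decide(ad: AdSubmission, threshold: float = 0.5) -> str:
    """Gate the ad at submission: block it before it is ever served if the score crosses the threshold."""
    return "block" if risk_score(ad) >= threshold else "allow"


benign = AdSubmission(["https://shop.example.com/sale"], 900, 0, True)
suspicious = AdSubmission(
    ["https://ad.example.net/c", "https://trk.example.org/r", "https://login-verify.example.com/"],
    account_age_days=3,
    prior_violations=1,
    claim_matches_landing=False,
)
print(decide(benign))      # allow
print(decide(suspicious))  # block
```

The point of the design is that no single signal decides the outcome: a long redirect chain from a long-standing, clean account whose landing page matches its ad stays under the threshold, while the same chain combined with a new account and a mismatched landing page does not.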

This shift to AI-driven, real-time assessment has enabled a significant increase in "at-submission" blocking. By the close of the previous year, the majority of Responsive Search Ads were being reviewed instantly, with malicious content being intercepted before it ever had the opportunity to reach a single user’s screen. This is a critical development, as the window of opportunity for an attacker is often measured in seconds; preventing the initial impression is the most effective way to neutralize a threat.

Industry Implications and the AI Arms Race

The reliance on advanced AI models for platform security has profound implications for the broader digital economy. As Google continues to refine its defensive capabilities, the barrier to entry for threat actors rises. This, however, creates a dynamic of "adversarial adaptation": as the platform becomes better at detecting AI-generated scams, attackers are forced to experiment with more nuanced tactics, such as subtle brand impersonation or "living off the land" techniques, in which they abuse legitimate hosting services to slip past security filters.

Automated enforcement also introduces the risk of false positives. Legitimate businesses, particularly smaller ones or those in highly regulated industries, often face mistaken suspensions due to overly aggressive automated policies. Google reports that its AI-driven improvements have reduced incorrect advertiser suspensions by 80%, which suggests that the model's greater accuracy benefits honest advertisers, not only the users it is meant to protect.

Industry analysts expect this trend to continue across all major ad networks, with "autonomous enforcement" becoming the gold standard. Companies that cannot afford to build or license large-scale AI models for content moderation will be increasingly vulnerable to having their networks used as infrastructure for malicious campaigns. This could drive consolidation in the digital advertising market, with security becoming a primary differentiator.

The Future of Ad-Tech Security

Looking ahead, the focus for safety teams is likely to shift toward "predictive moderation." Rather than simply identifying a malicious ad, the objective is to model how a threat actor's behavior evolves. By identifying the infrastructure and tactics associated with specific malicious syndicates before they launch a campaign, platforms can pre-empt the threat.

Extending Gemini to additional ad formats, including video and display banners, will be the next major hurdle. These formats are inherently harder to analyze than text-based ads because they involve dynamic visual content, audio, and interactive elements. The challenge for developers will be balancing the computational overhead of these models against the need for near-instantaneous decisions.

The regulatory landscape is also likely to evolve alongside these technological advances. As AI plays a larger role in controlling what the public sees, there will be increasing demand for transparency about how these systems make decisions. Large models may be effective for security, but their "black box" nature poses challenges for accountability.

Conclusion

The battle against malvertising is no longer a human-to-human conflict; it is a battle of algorithms. By leveraging its Gemini models, Google has shifted the terrain of this fight, forcing threat actors to confront a system that is as rapid and adaptable as the AI they use to perpetrate their schemes. While the threat of malvertising will never be fully eradicated, the transition to intelligent, context-aware moderation offers a more resilient framework for protecting users.

As we move further into this era of AI-driven security, the efficacy of these systems will determine the integrity of the digital advertising ecosystem. The goal is a frictionless experience for users and legitimate advertisers, achieved through a sophisticated, invisible, and highly accurate layer of intelligence. The progress reported in 2025 suggests that the platform's immune system is finally beginning to keep pace with the pathogens seeking to exploit it. The true test will be whether this proactive posture can hold up as offensive cyber tools become more accessible and widespread.