Artificial intelligence tools have transitioned from nascent technology to indispensable infrastructure, deeply embedding themselves across the digital landscape. Applications ranging from sophisticated code generation and complex data analysis to routine content drafting and enterprise workflow automation now rely heavily on platforms like OpenAI’s GPT models, Anthropic’s Claude, and specialized offerings from Google and Microsoft. For many organizations, these tools are no longer mere conveniences; they represent mission-critical components whose smooth operation is essential for maintaining productivity and competitive edge. This rapid integration, however, has inadvertently created a lucrative new asset class for malicious actors: premium, paid AI access credentials.

The growing dependency on these models tracks closely with their perceived value within the cybercriminal ecosystem. Advanced AI capabilities (high-quality output, faster drafting, and shorter development cycles) act as a significant force multiplier for threat actors. They lower the skill required for complex operations while boosting the efficiency and sophistication of attacks.

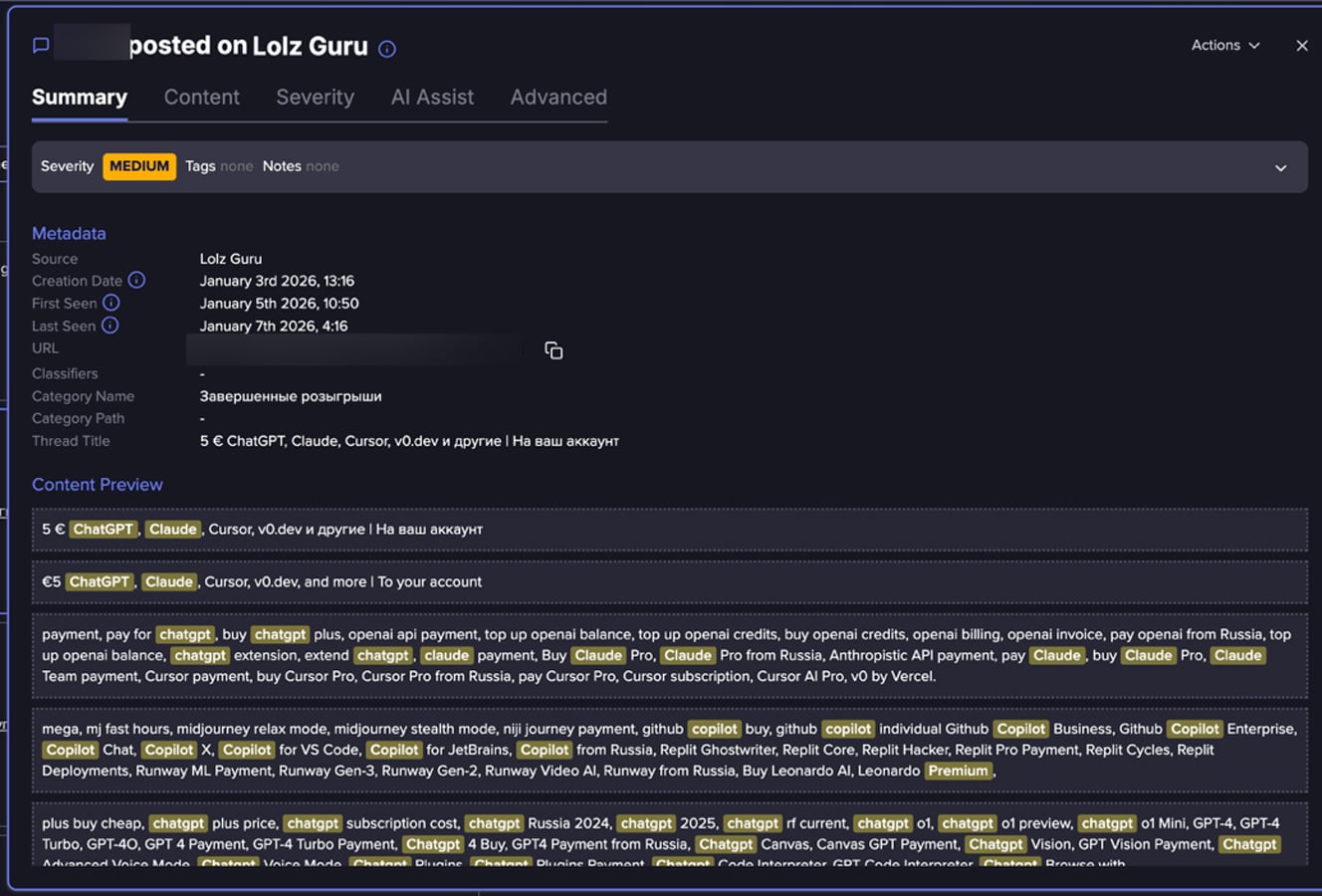

Recent in-depth monitoring of hundreds of postings across dark web forums and fraud-centric digital communities, spearheaded by analysts at Flare, reveals a burgeoning, systematic market dedicated to the trade of premium AI platform access. This is not characterized by sporadic, isolated incidents of credential theft, but rather by a formalized, recurring pattern of advertising and redistributing authenticated access. These listings frequently tout heavily discounted monthly subscriptions, offer bundled access spanning multiple high-demand AI services, or promise usage models that allegedly bypass the rate limits and content restrictions imposed by official service providers. This marketplace dynamic mirrors broader trends in underground economies where digital service entitlements are repackaged, resold, and commodified for a wider base of less technically adept buyers.

The Genesis of Compromised AI Access

While the data analyzed, drawn from open and closed fraud forums, gives a clear picture of the supply side, how the accounts are acquired can only be inferred from circumstantial evidence and known attack vectors. The evidence points to established compromise techniques being reapplied to modern SaaS platforms:

- Credential Stuffing and Phishing Campaigns: Threat actors leverage massive databases of stolen credentials harvested from previous breaches across unrelated services. If a user reuses their organizational or personal password for an AI platform subscription, a simple credential stuffing attempt can yield immediate, high-value access. Sophisticated spear-phishing campaigns targeting employees who utilize corporate-sponsored AI accounts are also a primary vector for harvesting session cookies or direct login details.

- Malware and Session Hijacking: Information-stealing malware, commonly deployed via drive-by downloads or malicious attachments, is proficient at exfiltrating browser session tokens and stored credentials, granting direct, authenticated access without needing to crack passwords. This is particularly effective against high-value targets who maintain persistent login states.

- Supply Chain and Insider Threats: Compromise of smaller, specialized development firms or third-party vendors who have high-volume enterprise access to AI APIs can result in the theft of bulk access tokens or entire organizational tenant credentials. Furthermore, disgruntled or compromised employees with authorized access may monetize their entitlements directly.

- Abuse of Free Trials and Provisioning Errors: Threat actors can exploit temporary access rights such as free trials, or provision accounts using stolen payment methods, and quickly resell the access before the inevitable chargeback or cancellation occurs. In large enterprise deployments, misconfigurations or inadequate provisioning controls create similar openings.

Collectively, these pathways indicate that the underground trade in AI accounts is a parasitic outgrowth of existing cybercrime infrastructure, exploiting the standard authentication mechanisms of legitimate cloud services.
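From the defender's side, the credential stuffing pattern described above tends to leave a recognizable trace in login logs: one source trying many different accounts and mostly failing. The following is a minimal sketch of that heuristic, not a production detector. The event shape `(source_ip, username, success)`, the function name `flag_stuffing_sources`, and both thresholds are assumptions made for illustration.

```python
from collections import defaultdict


def flag_stuffing_sources(events, min_accounts=5, min_failure_rate=0.8):
    """Return source IPs that tried many distinct accounts and mostly failed.

    events: iterable of (source_ip, username, success) tuples (assumed shape).
    The thresholds are illustrative defaults, not recommended values.
    """
    accounts = defaultdict(set)   # distinct usernames tried per source
    attempts = defaultdict(int)   # total login attempts per source
    failures = defaultdict(int)   # failed login attempts per source

    for ip, user, ok in events:
        accounts[ip].add(user)
        attempts[ip] += 1
        if not ok:
            failures[ip] += 1

    flagged = []
    for ip in accounts:
        failure_rate = failures[ip] / attempts[ip]
        if len(accounts[ip]) >= min_accounts and failure_rate >= min_failure_rate:
            flagged.append(ip)
    return sorted(flagged)
```

A heuristic like this would miss attackers who spread attempts across many proxies, so it is best read as one signal among several rather than a complete control.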

The Lure: Why Premium AI Access is In Demand

The appeal of these illicitly obtained accounts for buyers within the criminal ecosystem is multifaceted, rooted in efficiency, cost reduction, and enhanced operational security.

- Cost Efficiency and Accessibility: The primary driver is financial. Premium tiers of leading AI models often come with significant monthly fees, especially for high-volume API usage. Access sold on the underground market is typically offered at a steep discount, sometimes 50% to 80% below retail, making advanced capabilities accessible to actors who cannot justify the official expense or who lack the necessary verifiable payment instruments.

- Circumvention of Usage Controls: Premium accounts, particularly those offering API access or enterprise tiers, usually come with higher rate limits than free or basic consumer tiers, and sellers often advertise them as less restricted. Cybercriminals seek this "unrestricted" access to run large-scale generation tasks with less risk of immediate service throttling or of account suspension for policy violations (e.g., generating malware code or creating large volumes of disinformation).

- Operational Camouflage: Utilizing a pre-authenticated, legitimate account associated with a genuine user or organization provides a degree of operational cover. Traffic from an established, seemingly legitimate account is often subjected to less scrutiny by platform security systems than traffic from newly created accounts or from suspicious or known-bad IP ranges.

- Toolchain Integration: Sophisticated actors often require AI capabilities integrated directly into their automated toolchains. Access purchased via API keys or shared session credentials allows for seamless integration into botnets or automated phishing deployment scripts, speeding up the entire malicious workflow.

Industry Implications: AI as an Attack Enabler

The normalization of this underground trade has severe implications, transforming the capabilities of the average threat actor. Generative AI tools are not merely used for drafting emails; they are actively weaponized across several domains:

Advanced Social Engineering and Fraud:

The synthesis of highly convincing, context-aware, and multilingual fraudulent communications represents the most immediate threat. Europol’s 2025 threat assessment highlighted the acceleration of phishing and fraud automation driven by generative AI, noting that the speed and sophistication now achievable surpass what manual methods allowed. The telltale grammatical errors of earlier phishing are no longer a reliable signal; attackers can now generate narratives that mimic specific corporate jargon or personal communication styles. Palo Alto Networks’ Unit 42 analysis further corroborates this, detailing how AI facilitates hyper-personalized social engineering attacks that tailor pretext and context to the individual target, which dramatically increases success rates for scams, Business Email Compromise (BEC), and credential harvesting.

Malware Development and Exploit Crafting:

While major model providers implement guardrails against direct malicious code generation, actors with access to less-filtered or older models, or those skilled in prompt engineering to bypass safety layers, can leverage AI for:

- Generating obfuscated or polymorphic malware code segments.

- Rapidly developing proof-of-concept exploits for newly disclosed vulnerabilities (n-days).

- Automating the reverse engineering of defensive code snippets.

Espionage and Information Operations:

As evidenced by reports from companies like Anthropic detailing AI misuse in orchestrated cyber espionage, these tools lower the barrier for both espionage and large-scale influence operations. AI can rapidly produce vast amounts of seemingly authentic content across multiple platforms, enabling coordinated disinformation campaigns or the generation of synthetic identities for long-term reconnaissance missions. The capability to generate realistic synthetic media (deepfakes in audio or video) further complicates forensic analysis and verification processes.

The Commodification of Digital Identity

The trend observed by Flare demonstrates that AI access is being assimilated into the established infrastructure of the underground digital economy. These listings do not appear in isolation; they are frequently cross-sold alongside other high-demand commodities such as Remote Desktop Protocol (RDP) access, Virtual Private Server (VPS) credentials, validated email lists, and identity verification infrastructure (e.g., SIM-swapped phone numbers).

This bundling signifies a maturing market where a threat actor can acquire a full "IT stack" for a specific operation from a single vendor or marketplace channel. The offerings themselves often use technical, product-oriented terminology:

- "Uncapped Monthly Usage" or "Full API Access": Signifying a desire to avoid the usage quotas that plague shared or throttled accounts.

- "Lifetime Access Guarantee" (often contingent on seller longevity): Suggesting a desire for sustained tooling without repeated monthly purchases.

- "Bundles" (e.g., GPT-4 + Claude Opus + Perplexity Pro): Catering to actors who require multi-model AI capabilities for complex tasks like cross-referencing proprietary data against public knowledge bases while maintaining writing quality.

This commercial packaging reduces friction for buyers, allowing even actors with minimal technical sophistication to immediately deploy high-end computational resources for malicious ends.

Expert Analysis and Future Trajectory

The primary challenge for platform providers and enterprise security teams lies in the abstract nature of the asset being traded. Unlike stolen financial data, which can be flagged upon use, a stolen AI account credential might be used subtly for months before a pattern of abuse triggers a ban. Furthermore, the sheer volume of legitimate API calls made by a paying customer makes identifying a compromised credential based purely on volume difficult without sophisticated behavioral analysis.
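To illustrate why raw volume alone is a weak signal, the sketch below compares an account's activity against its own history rather than a global limit. It is a simplified illustration under stated assumptions, not a description of any provider's detection logic. The function name `usage_anomalies`, the daily token history input, the business-hours window, and the z-score threshold of 3.0 are all hypothetical choices.

```python
from statistics import mean, stdev


def usage_anomalies(history, today_tokens, today_hour,
                    business_hours=(8, 18), z_threshold=3.0):
    """Return a list of reasons why today's usage looks unusual for this account.

    history: past daily token counts for the same account (assumed input).
    today_hour: local hour (0-23) of the activity being evaluated.
    business_hours and z_threshold are illustrative, not tuned values.
    """
    reasons = []

    # Compare against the account's own baseline, not a fixed global cap.
    if len(history) >= 2:
        baseline = mean(history)
        spread = stdev(history)
        if spread > 0 and (today_tokens - baseline) / spread > z_threshold:
            reasons.append("token_spike")

    # Activity outside the configured working window is a second, weaker signal.
    start, end = business_hours
    if not (start <= today_hour < end):
        reasons.append("off_hours")

    return reasons
```

For example, an account that normally uses about 11,000 tokens a day and suddenly consumes 60,000 at 03:00 would return both reasons, while a heavy but consistent API customer would return none. Analyzing prompt content, which the volume check does not cover, would need a separate classifier.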

Industry Response and Mitigation Strategies:

For organizations integrating AI into sensitive workflows, mitigation requires a multi-layered approach that extends beyond standard Identity and Access Management (IAM):

- Behavioral Anomaly Detection (BAD) for AI Usage: Security teams must move beyond simple login monitoring. They need tools capable of analyzing the content and rate of prompts being submitted against a user’s historical baseline. A sudden shift from drafting marketing copy to generating complex SQL injection payloads, or a drastic increase in token usage outside of business hours, must trigger immediate review and potential session termination.

- Contextual Access Control: Implementing Zero Trust principles specifically for AI platforms is crucial. Access to premium models should be strictly tied to the user’s role, geographical location (if applicable), and the sensitivity of the data being processed. Corporate VPNs or secure workspaces should be mandatory for accessing enterprise-level AI tools.

- Strict API Key Management: For development environments, API keys must be treated as highly sensitive secrets, subjected to regular rotation, scope limitation, and immediate revocation upon employee departure or signs of compromise. Centralized secret management systems are non-negotiable for AI-integrated applications.

- User Education on Credential Hygiene: While vendors work on stronger technical controls, organizational awareness campaigns must stress the unique value of AI credentials. Employees must understand that their access to tools like GPT-4 is now a high-value target, equivalent to credentials for a primary corporate cloud storage service.
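The API key practices listed above (rotation, scope limitation, and revocation on departure) can be checked mechanically against an inventory of keys. The following is a minimal sketch that assumes such an inventory already exists. The `ApiKeyRecord` fields, the `keys_needing_action` function, and the 90-day rotation window are hypothetical and do not correspond to any specific provider's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ApiKeyRecord:
    """Hypothetical inventory entry for one AI platform API key."""
    key_id: str
    owner: str
    created_at: datetime
    scopes: frozenset


def keys_needing_action(keys, active_owners, allowed_scopes, now,
                        max_age=timedelta(days=90)):
    """Map key_id to a list of required actions; keys with no issues are omitted.

    max_age of 90 days is an assumed rotation policy, not a standard.
    """
    findings = {}
    for key in keys:
        issues = []
        if key.owner not in active_owners:
            issues.append("revoke: owner departed")
        if now - key.created_at > max_age:
            issues.append("rotate: older than max_age")
        extra_scopes = key.scopes - allowed_scopes
        if extra_scopes:
            issues.append("narrow scope: " + ", ".join(sorted(extra_scopes)))
        if issues:
            findings[key.key_id] = issues
    return findings


if __name__ == "__main__":
    now = datetime(2025, 6, 1, tzinfo=timezone.utc)
    inventory = [
        ApiKeyRecord("k1", "alice", datetime(2025, 5, 1, tzinfo=timezone.utc),
                     frozenset({"chat"})),
        ApiKeyRecord("k2", "bob", datetime(2025, 1, 1, tzinfo=timezone.utc),
                     frozenset({"chat", "admin"})),
    ]
    print(keys_needing_action(inventory, {"alice"}, frozenset({"chat"}), now))
```

In practice the inventory would come from a centralized secret manager, and the findings would feed an automated revocation or rotation job rather than a printout.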

The trajectory suggests that as AI models become more integrated—potentially moving toward multimodal reasoning that incorporates real-time data feeds—the underground value of compromised access will only escalate. Threat actors are effectively outsourcing their intellectual heavy lifting to these stolen licenses. Consequently, the security industry must rapidly evolve its monitoring frameworks to treat AI platform usage not as a benign productivity metric, but as a critical security perimeter that requires continuous, context-aware defense. The fight for digital resources is now also a fight for access to computational intelligence itself.