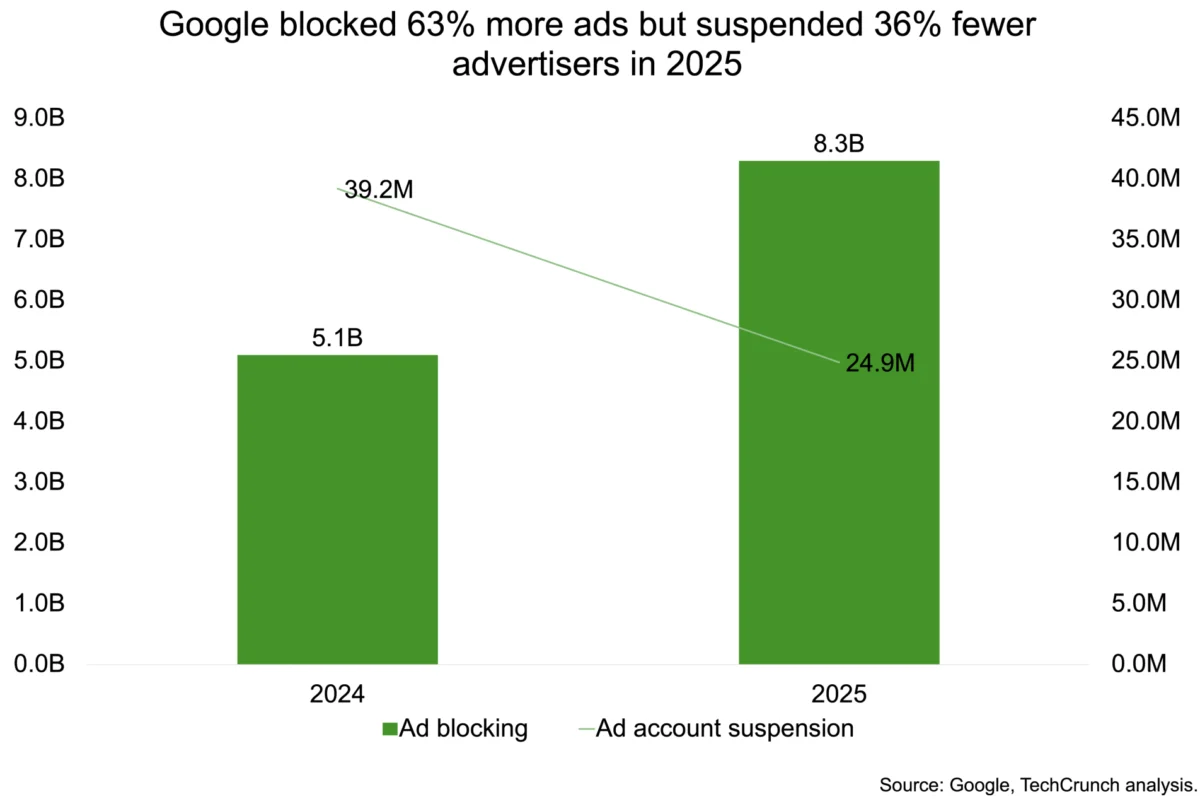

The scale of digital advertising has reached a point where human oversight is no longer a primary line of defense, but rather a secondary check on a massive, automated ecosystem. In its 2025 Ads Safety Report, Google revealed a staggering leap in its enforcement capabilities, blocking a record-breaking 8.3 billion ads globally over the course of the year. This figure represents a nearly 63% increase from the 5.1 billion ads neutralized in the previous year, signaling a dramatic escalation in the "arms race" between platform defenders and malicious actors. However, the most significant takeaway from this data is not just the sheer volume of removals, but a fundamental shift in strategy: Google is moving away from the "blunt instrument" of account-wide suspensions in favor of surgical, creative-level interventions powered by its Gemini generative AI models.
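The headline growth figure can be checked directly from the two reported totals (in billions of ads blocked):

$$
\frac{8.3 - 5.1}{5.1} = \frac{3.2}{5.1} \approx 0.627 \quad\Rightarrow\quad \text{an increase of about } 62.7\%
$$

This matches the "nearly 63%" figure.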

This evolution in digital governance marks a transition from targeting "bad actors" to targeting "bad ads." Historically, a common tactic for platform safety was the wholesale deplatforming of accounts suspected of fraud or policy violations. While effective in the short term, this approach often resulted in high rates of "collateral damage," where legitimate small businesses were caught in automated dragnets, leading to lost revenue and arduous appeal processes. By integrating the Gemini family of AI systems directly into its enforcement pipeline, Google claims to have reduced incorrect suspensions by a remarkable 80% year-over-year. The new methodology focuses on the "creative level"—the specific image, text, or video of an individual ad—allowing the platform to neutralize harmful content without necessarily terminating the entire advertiser relationship unless a systemic pattern of malice is established.
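The creative-first approach can be illustrated with a minimal sketch. Google has not published its escalation rules, so the `Account` type, the `enforce` function, and the `SYSTEMIC_VIOLATION_THRESHOLD` value below are all hypothetical. The sketch only shows the general shape of the idea: block individual ads first, and suspend the account only once repeated violations suggest a systemic pattern.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: how many distinct violating creatives an account may
# accumulate before the pattern is treated as systemic. Google has not
# published such a number; 3 is purely illustrative.
SYSTEMIC_VIOLATION_THRESHOLD = 3


@dataclass
class Account:
    account_id: str
    violating_creatives: set[str] = field(default_factory=set)
    suspended: bool = False


def enforce(account: Account, creative_id: str, violates_policy: bool) -> str:
    """Return the action taken for one creative under a creative-first policy."""
    if account.suspended:
        return "already_suspended"
    if not violates_policy:
        return "serve"
    # Block only the offending creative first.
    account.violating_creatives.add(creative_id)
    # Escalate to the whole account only once a repeated pattern is established.
    if len(account.violating_creatives) >= SYSTEMIC_VIOLATION_THRESHOLD:
        account.suspended = True
        return "suspend_account"
    return "block_creative"


acct = Account("acct-1")
print(enforce(acct, "c1", True))   # first violation: creative blocked
print(enforce(acct, "c2", False))  # compliant creative still serves
```

Counting distinct creatives (a set) rather than individual reviews means a single bad ad that is re-reviewed several times does not by itself push an account toward suspension.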

The Gemini Factor: AI vs. AI in the Scam Ecosystem

The surge in blocked ads is not merely a reflection of better detection; it is a response to the democratization of generative AI tools among scammers. Malicious actors are now using large language models (LLMs) and image generators to produce deceptive content at a scale and level of polish that was previously out of reach. Scammers can now generate thousands of unique, high-quality ad variations in minutes, each designed to bypass traditional keyword-based filters. These campaigns often involve "malvertising," financial fraud, and misrepresentation tactics that mimic legitimate brands with uncanny accuracy.
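A toy example shows why exact-match keyword filters are easy to sidestep. The phrase list and the `keyword_filter` function are hypothetical and far simpler than any production system. The point is only that trivial rewording or character substitution defeats a verbatim match.

```python
# Hypothetical blocklist of scam phrases, for illustration only.
BLOCKED_PHRASES = {"guaranteed returns", "double your money"}


def keyword_filter(ad_text: str) -> bool:
    """Return True if the ad contains a blocked phrase verbatim (case-insensitive)."""
    text = ad_text.lower()
    return any(phrase in text for phrase in BLOCKED_PHRASES)


variants = [
    "Guaranteed returns on every trade!",      # exact phrase: caught
    "Guaranteed r3turns on every trade!",      # character swap: missed
    "Returns are guaranteed on every trade!",  # reworded: missed
]
for ad in variants:
    print(keyword_filter(ad), ad)
```

Because generating such variants is cheap, detection has to reason about meaning rather than exact strings.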

To counter this, Google has deployed Gemini to perform real-time, deep-content analysis. Unlike older machine learning models that relied on static signals, Gemini can, according to Google, understand context, intent, and subtle patterns across massive datasets. The company says these AI-driven systems now catch more than 99% of policy-violating ads before they are ever served to a user. This "pre-emptive strike" capability is crucial in a landscape where a single fraudulent ad can cause significant financial harm within minutes of going live. Gemini’s ability to match patterns across disparate campaigns allows Google to identify a single scammer operating across hundreds of seemingly unrelated accounts and neutralize the threat at the source.
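Google has not described how Gemini links accounts, but the underlying idea of grouping accounts that share infrastructure can be sketched with a classic union-find (disjoint-set) structure. This is a swapped-in, non-AI technique chosen for clarity, and the signal strings (a payment fingerprint, a landing domain, an IP address) are hypothetical placeholders.

```python
from collections import defaultdict


def link_accounts(signals: dict[str, set[str]]) -> list[set[str]]:
    """Group accounts that share at least one signal, directly or transitively."""
    parent = {acct: acct for acct in signals}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    # Merge every account with the first account seen using the same signal.
    first_seen: dict[str, str] = {}
    for acct, sigs in signals.items():
        for sig in sigs:
            if sig in first_seen:
                union(first_seen[sig], acct)
            else:
                first_seen[sig] = acct

    groups: dict[str, set[str]] = defaultdict(set)
    for acct in signals:
        groups[find(acct)].add(acct)
    return list(groups.values())


example = {
    "a": {"pay:123", "dom:x.example"},
    "b": {"dom:x.example"},
    "c": {"pay:999"},
    "d": {"pay:123", "ip:203.0.113.7"},
    "e": {"ip:203.0.113.7"},
}
print(link_accounts(example))
```

Accounts `b` and `e` share no signal with each other, yet they end up in the same cluster through `a` and `d`; that transitive linking is what lets one operator be traced across many "unrelated" accounts.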

Geographic Divergence: Analyzing the US and Indian Markets

The 2025 data highlights significant regional variations in how digital threats manifest and how they are policed. In the United States, Google removed over 1.7 billion ads and suspended 3.3 million advertiser accounts. The primary drivers of these enforcement actions were ad network abuse, misrepresentation, and the proliferation of unauthorized sexual content. The U.S. market remains a high-value target for sophisticated fraud rings that attempt to exploit the high cost-per-click (CPC) rates advertisers pay to reach American consumers.

In contrast, India, now Google’s largest market by user volume, showed a different trend. The number of blocked ads in India nearly doubled to 483.7 million, yet account suspensions actually fell from 2.9 million to 1.7 million. This divergence perfectly encapsulates the "precision pivot." In a rapidly digitizing economy like India’s, trademark misuse, financial services fraud, and copyright infringement are the dominant concerns. By focusing on blocking specific ads rather than nuking accounts, Google is attempting to protect the integrity of its ad auction while allowing the burgeoning Indian digital entrepreneur class to continue operating without the fear of accidental deplatforming.
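The two account-suspension figures for India imply the following relative decline:

$$
\frac{2.9 - 1.7}{2.9} = \frac{1.2}{2.9} \approx 0.414 \quad\Rightarrow\quad \text{a drop of about } 41\%
$$

Suspensions therefore fell by roughly two-fifths in the same year that blocked ads nearly doubled.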

The Anatomy of Modern Ad Fraud

Among the billions of blocked ads, 602 million were explicitly linked to scams, and 4 million accounts were suspended for scam-related activity. These figures represent the "hard core" of digital criminality. Modern scams have moved far beyond the "Nigerian Prince" emails of the past. Today, they involve:

- Deepfake Endorsements: Using AI-generated likenesses of celebrities or public figures to sell fraudulent investment schemes or health products.

- Cloaking: A technique where the ad shown to Google’s reviewers is benign, but the ad shown to the end-user leads to a malicious site.

- Regulatory Arbitrage: Scammers target jurisdictions with weaker consumer protection laws or exploit specific cultural events (like elections or holidays) to create a sense of urgency.
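Cloaking is usually caught by comparing what different visitors see. The sketch below assumes the landing page has already been fetched twice, once from a reviewer-like vantage point and once from a user-like one (the fetching itself is out of scope), and flags the ad if the final domain or the normalized page content differs. The `Fetch` type and the `looks_cloaked` function are hypothetical and not part of any Google API.

```python
import hashlib
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Fetch:
    """One hypothetical fetch of an ad's landing page from a given vantage point."""
    final_url: str  # URL after all redirects
    body: str       # page content as text


def _fingerprint(text: str) -> str:
    # Collapse whitespace and case so trivial formatting changes don't count.
    normalized = " ".join(text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def looks_cloaked(reviewer_view: Fetch, user_view: Fetch) -> bool:
    """Flag an ad when reviewer and user land on different domains or content."""
    if urlparse(reviewer_view.final_url).hostname != urlparse(user_view.final_url).hostname:
        return True
    return _fingerprint(reviewer_view.body) != _fingerprint(user_view.body)


benign = Fetch("https://shop.example/offer", "<h1>Running Shoes</h1>")
phish = Fetch("https://phish.example/login", "<h1>Verify your bank</h1>")
print(looks_cloaked(benign, phish))
```

An exact content hash is deliberately strict. Real pages contain dynamic elements, so a practical system would compare similarity rather than identity, and cloakers also key on signals such as IP ranges and user agents that a two-fetch check may not cover.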

Google’s layered defense strategy aims to stop these actors before they even place their first bid. The "Advertiser Verification" program, which requires businesses to provide legal documentation and identity confirmation, acts as a high-friction barrier to entry. While some have criticized this process as cumbersome, Google’s leadership, including Keerat Sharma, VP and General Manager of Ads Privacy and Safety, argues that this friction is a necessary deterrent. The decline in account suspensions is partly attributed to these "pre-account" hurdles, which filter out low-effort bad actors before they can pollute the ecosystem.

Industry Implications and the False Positive Problem

For the legitimate advertising industry, Google’s shift toward granular enforcement is a double-edged sword. On one hand, the 80% reduction in "false positive" suspensions is a massive win for brands and agencies. An accidental suspension on a platform as dominant as Google can be a "death sentence" for a small e-commerce business or a specialized service provider. By shifting the focus to the "creative," Google allows for a more nuanced dialogue between the platform and the advertiser. If an ad is blocked, the advertiser can often simply fix the specific violation rather than fighting to regain access to their entire marketing infrastructure.

On the other hand, the increasing reliance on black-box AI models like Gemini raises questions about transparency and accountability. As Google integrates Gemini more deeply into its core infrastructure—from Workspace to Search and Advertising—the "rules of the road" are increasingly written in code that few outsiders can audit. If an AI decides an ad is "misrepresentative" based on a subtle linguistic pattern, the advertiser may struggle to understand the "why" behind the rejection. This necessitates a robust appeals process that, while increasingly automated, must still provide a path to human intervention for complex cases.

Future Trends: The Road to 2026 and Beyond

As we look toward the 2026 reporting cycle, several trends are likely to define the next phase of digital ad safety. First, the "Generative AI Arms Race" will only intensify. As scammers gain access to even more powerful open-source LLMs, the complexity of fraudulent content will increase. We can expect to see "adversarial AI" specifically designed to probe Google’s defenses and find the "blind spots" in Gemini’s detection logic.

Second, the regulatory environment is tightening. Platforms are no longer viewed as neutral conduits but as active gatekeepers responsible for the content they monetize. Legislation like the EU’s Digital Services Act (DSA) and evolving privacy laws in the U.S. and India will force Google to be even more transparent about its enforcement metrics. The 2025 Ads Safety Report is, in many ways, a preemptive strike against regulators, demonstrating that the company is taking its "duty of care" seriously through massive technological investment.

Finally, the concept of "identity" in advertising will undergo a revolution. We are likely moving toward a "zero-trust" model for digital ads, where every creative element must be digitally signed and verified back to a known entity. Google’s emphasis on "Advertiser Verification" is just the beginning. In the future, blockchain-based verification or cryptographically signed metadata attached to every image and video could become the standard for ensuring that a "Nike" ad actually comes from Nike.
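The "signed and verified back to a known entity" idea can be sketched in a few lines. This example uses HMAC-SHA256 only because it ships with Python's standard library. A real zero-trust scheme would more likely use public-key signatures, so that anyone could verify a creative without holding the advertiser's secret. The `ADVERTISER_KEYS` registry, the advertiser ID, and both functions are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical registry of verified advertisers. With HMAC the verifier must
# hold the same secret; a public-key scheme would store public keys instead.
ADVERTISER_KEYS = {"brand-123": b"demo-secret-not-for-production"}


def _canonical(creative: dict) -> bytes:
    # Stable serialization so the same creative always produces the same bytes.
    return json.dumps(creative, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_creative(advertiser_id: str, creative: dict) -> str:
    key = ADVERTISER_KEYS[advertiser_id]
    return hmac.new(key, _canonical(creative), hashlib.sha256).hexdigest()


def verify_creative(advertiser_id: str, creative: dict, signature: str) -> bool:
    key = ADVERTISER_KEYS.get(advertiser_id)
    if key is None:
        return False  # unknown entity: nothing to verify against
    expected = hmac.new(key, _canonical(creative), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature)


creative = {"headline": "New running shoes", "image_sha256": "abc123"}
sig = sign_creative("brand-123", creative)
print(verify_creative("brand-123", creative, sig))
```

Any edit to the creative after signing, such as swapping the headline, changes the canonical bytes and makes verification fail.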

The 2025 data suggests that while the volume of digital noise is increasing, the tools to filter that noise are becoming more surgical. By leveraging Gemini to move from a "blunt instrument" to a "scalpel," Google is attempting to balance the conflicting needs of scale, safety, and economic growth. The success of this pivot will determine whether advertising, the internet’s primary funding mechanism, remains a viable and trusted medium in an era defined by synthetic content and automated deception.