The digital landscape is undergoing a seismic shift: identity verification is moving out of individual applications and into the core operating systems of personal devices. For years, users have navigated a patchwork of age checks, such as a pop-up on YouTube, a prompt on ChatGPT, or restrictions on Discord, with each platform handling its own gatekeeping. That era of fragmented verification is giving way to a centralized, system-level approach in which mobile device makers take the lead in enforcing age requirements. Recent developments in the United Kingdom illustrate this change in how online access is managed, which offers advantages for regulatory compliance but raises significant new privacy questions.

This transition is not happening in a vacuum. It follows intense global regulatory pressure to protect minors from age-inappropriate content, particularly on video-sharing platforms and emerging generative AI services. Regulators worldwide have grown increasingly skeptical of self-attestation and simple third-party verification, judging them insufficient to protect younger users. The consensus appears to be that verification must be robust, tamper-resistant and, crucially, performed at the point of access: the device itself.

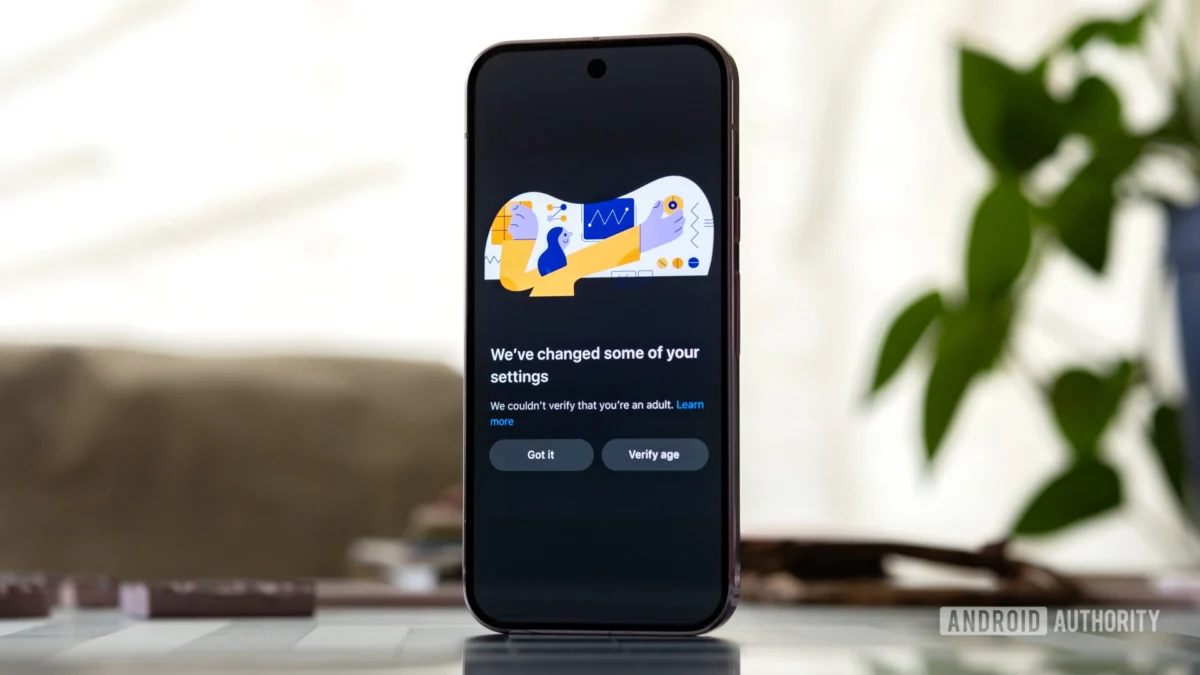

The first significant deployment of this strategy is on Apple's iOS in the UK. Following a recent software update, iPhone users there are encountering mandatory age confirmation to access a range of features and content deemed sensitive. The initiative goes well beyond restricting downloads of mature-rated apps from the App Store. Reports indicate that verification status can also limit general web browsing and enable stricter "Communication Safety" protections in native services such as Messages and FaceTime, which are designed to detect and flag explicit imagery. Apple's official documentation, while vague on the full scope, confirms that failing to verify one's age results in feature restrictions, creating a tiered system of access based on confirmed age.

Apple reportedly uses several methods to establish this verified "age signal," reflecting the difficulty of building a high-assurance check without unduly compromising user anonymity. One option draws on financial data already tied to the account, such as a credit card on file. This carries inherent trust because cards are issued by regulated financial institutions, and in the UK credit cards are issued only to applicants aged 18 or over. A more invasive option may require scanning government-issued identification, such as a passport or driver's license, directly linking physical identity documents to the digital profile. A third pathway relies on accumulated behavioral signals, such as the age and activity history of the user's Apple ID, from which a minimum age can be inferred.
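To make the three pathways concrete, here is a minimal Python sketch of how an OS might reduce any one piece of evidence to a single "adult confirmed" result. It is hypothetical throughout and is not Apple's implementation: the `Evidence` type, `confirms_adult`, and `derive_age_signal` are illustrative names; treating a credit card on file as proof of adulthood is an assumption; and the `MIN_SIGNUP_AGE` of 13, used to infer a lower bound on age from account longevity, is an assumed value.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional

ADULT_AGE = 18
# Hypothetical: assumed minimum age at which the account could have been created.
MIN_SIGNUP_AGE = 13


class Method(Enum):
    ID_DOCUMENT = "id_document"
    CREDIT_CARD = "credit_card"
    ACCOUNT_HISTORY = "account_history"


@dataclass
class Evidence:
    method: Method
    birth_date: Optional[date] = None       # set only for ID document scans
    account_created: Optional[date] = None  # set only for account-history signals


def years_between(start: date, end: date) -> int:
    """Whole years elapsed from start to end (birthday-style arithmetic)."""
    years = end.year - start.year
    if (end.month, end.day) < (start.month, start.day):
        years -= 1
    return years


def confirms_adult(ev: Evidence, today: date) -> bool:
    if ev.method is Method.ID_DOCUMENT and ev.birth_date is not None:
        # Direct proof: compute age from the document's date of birth.
        return years_between(ev.birth_date, today) >= ADULT_AGE
    if ev.method is Method.CREDIT_CARD:
        # Assumption: a credit card on file implies an adult cardholder.
        return True
    if ev.method is Method.ACCOUNT_HISTORY and ev.account_created is not None:
        # Inference: the holder was at least MIN_SIGNUP_AGE at signup, so their
        # age now is at least that plus the account's age in whole years.
        min_age = MIN_SIGNUP_AGE + years_between(ev.account_created, today)
        return min_age >= ADULT_AGE
    return False


def derive_age_signal(evidence: list[Evidence], today: date) -> Optional[Method]:
    """Return the first method that confirms adulthood, or None if none does."""
    for ev in evidence:
        if confirms_adult(ev, today):
            return ev.method
    return None


today = date(2025, 6, 1)
young_account = Evidence(Method.ACCOUNT_HISTORY, account_created=date(2022, 1, 1))
print(derive_age_signal([young_account], today))  # None: 13 + 3 = 16 < 18
```

The account-history path yields only a lower bound, which is why a young account cannot confirm adulthood on its own even if the holder is in fact an adult; in that case the sketch simply falls through to whatever other evidence is supplied.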

This move toward operating system (OS) level verification resonates strongly with policy debates currently unfolding in jurisdictions like the United States, particularly in states such as California and Colorado. These legislative efforts have championed the concept of a standardized, shared "age signal." The logic is efficiency and consistency: instead of every streaming service, social media site, and AI chatbot building and maintaining its own costly, potentially error-prone age verification infrastructure, the OS vendor—the entity controlling the device—would perform the check once. This verified status would then be communicated securely to compliant applications, which would use this signal to tailor the user experience accordingly. The smartphone, in essence, transforms from a neutral conduit into an active digital gatekeeper, enforcing policy dictated by external mandates.
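The "verify once, share with many apps" model can be sketched as an OS-side service that stores the outcome of a single check and answers app queries with a coarse age bracket instead of raw identity data. This is a minimal Python illustration, not any vendor's or statute's actual API: `AgeSignalService`, `record_verification`, `register_app`, `query`, and the bracket boundaries (under 13, 13 to 15, 16 to 17, 18 and over) are all hypothetical choices.

```python
from datetime import date
from enum import Enum
from typing import Optional


class AgeBracket(Enum):
    UNDER_13 = "under_13"
    AGE_13_15 = "13_15"
    AGE_16_17 = "16_17"
    ADULT = "18_plus"


def bracket_for(age: int) -> AgeBracket:
    # Hypothetical bracket boundaries chosen for illustration.
    if age < 13:
        return AgeBracket.UNDER_13
    if age < 16:
        return AgeBracket.AGE_13_15
    if age < 18:
        return AgeBracket.AGE_16_17
    return AgeBracket.ADULT


class AgeSignalService:
    """Hypothetical OS-side service: verifies once, answers many app queries."""

    def __init__(self) -> None:
        self._birth_date: Optional[date] = None  # kept inside the OS, never returned
        self._compliant_apps: set[str] = set()

    def record_verification(self, birth_date: date) -> None:
        # Called once, after the OS's own verification flow succeeds.
        self._birth_date = birth_date

    def register_app(self, app_id: str) -> None:
        self._compliant_apps.add(app_id)

    def query(self, app_id: str, today: date) -> Optional[AgeBracket]:
        # Unregistered apps, or a device with no verification, get no signal.
        if app_id not in self._compliant_apps or self._birth_date is None:
            return None
        b = self._birth_date
        age = today.year - b.year - int((today.month, today.day) < (b.month, b.day))
        return bracket_for(age)


os_signal = AgeSignalService()
os_signal.record_verification(date(2010, 3, 15))
os_signal.register_app("com.example.video")
print(os_signal.query("com.example.video", date(2025, 6, 1)))         # AgeBracket.AGE_13_15
print(os_signal.query("com.example.unregistered", date(2025, 6, 1)))  # None
```

The design point is that each app receives only a bracket, and only if it is registered; the birth date never leaves the service. Whether real OS implementations restrict disclosure in this way is not established by the reports described here.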

The implications for user privacy and digital autonomy are substantial and have prompted immediate, vocal backlash from privacy advocates and parts of the user base. In online discussions of the UK rollout, concern centered on the demand for highly sensitive personal data, such as government IDs or financial details, simply to keep access to baseline functionality. Critics argue that centralizing age verification in the OS concentrates an unprecedented amount of personal data under the control of a single technology giant. This raises critical questions about data security, potential government access, and the scope creep of corporate surveillance. If the OS knows the user's age and can share it with every app that requests it, the potential for micro-targeting, content suppression, or even state-mandated access controls expands dramatically.

From an industry perspective, this development could redefine competitive advantage and the burden of regulatory compliance. For smaller platforms and startups, relying on an OS-level signal could sharply reduce the cost of complying with stringent age-related laws, shifting that cost and complexity onto platform owners such as Apple and Google. For the platform owners themselves, however, it introduces immense liability. They become de facto identity verification agencies for vast populations, taking on the legal and technical obligations of data protection regulations such as the GDPR while also enforcing content policy.

Apple's decision to begin the rollout in the UK, where new digital safety legislation (the Online Safety Act 2023) mandates specific protections for minors, looks calculated. Although Apple maintains that current UK law does not compel this specific OS-level mechanism, the UK appears to be serving as a crucial proving ground. If the system proves technically viable, scalable, and effective at satisfying regulators in one major market, it sets a powerful precedent for global deployment, potentially forcing the hand of competitors and other governments.

Expert analysis suggests this represents a maturing of the "walled garden" approach to digital safety. Previously, safety measures were applied at the application layer, so users could bypass restrictions by switching to a different app or browser. By embedding verification in core OS services, the manufacturer establishes a hard boundary. This architecture inherently favors control over user freedom in the pursuit of regulatory compliance and corporate risk mitigation. The crucial technical question is the design of the "age signal" itself: is it a zero-knowledge proof that confirms only "over 18" without revealing the actual birthdate, or does it pass along more granular data? The level of data abstraction will determine the ultimate privacy impact.
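The difference between the two designs can be shown with a small Python sketch that contrasts a granular payload with a predicate-only one. Note that this illustrates data minimization, not a zero-knowledge proof: a genuine zero-knowledge scheme would also let the app verify the "over 18" claim cryptographically against an issuer's signed credential, which this sketch does not attempt. The function names are hypothetical.

```python
from datetime import date


def age_on(birth_date: date, today: date) -> int:
    """Age in whole years on the given day."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def granular_payload(birth_date: date) -> dict:
    # Reveals the full date of birth: the app can derive exact age, birthday, and more.
    return {"birth_date": birth_date.isoformat()}


def minimal_payload(birth_date: date, threshold: int, today: date) -> dict:
    # Reveals only a yes/no answer to "is the user at least `threshold` years old?"
    return {f"over_{threshold}": age_on(birth_date, today) >= threshold}


dob = date(2000, 7, 4)
today = date(2025, 6, 1)
print(granular_payload(dob))            # {'birth_date': '2000-07-04'}
print(minimal_payload(dob, 18, today))  # {'over_18': True}
```

Both payloads answer the app's question, but only the first lets the recipient learn anything beyond it, which is the privacy difference the paragraph above turns on.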

Looking ahead, the implications extend beyond smartphone makers to chip designers and cloud service providers. For OS-level verification to work across ecosystems (for instance, so that an age status verified on an Android device is honored by a service the user also reaches from an iPhone, or vice versa), a universal, industry-standard protocol for secure identity assertion would need to emerge. That would likely require collaboration among major technology companies, potentially brokered or mandated by international regulators.

Furthermore, the concept of an "age signal" could be applied to areas beyond content restriction. Imagine age-gated access to financial services, mandatory time limits based on age profiles, or even differential access to data collection based on verified user maturity. The technology deployed for content safety today could easily morph into a universal digital ID attribute, managed by the device manufacturer. This potential for feature creep is precisely what fuels skepticism among civil liberties groups, who warn of creating a persistent, device-bound digital identity ledger that tracks user behavior across the entire digital sphere.

The long-term trend points toward a convergence of identity management and operating system functions. As society demands greater accountability for the spread of harmful content, and as AI tools come under pressure for stronger safeguards, the mechanisms for confirming age and identity are likely to become more pervasive. Whether users ultimately accept this trade-off, exchanging a measure of privacy and digital freedom for perceived safety, remains the defining tension of this technological shift. For now, the battleground has moved from the application layer to the very foundation of the devices we rely on daily, establishing a new paradigm in which the phone itself demands credentials before granting access.

The success or failure of this initial deployment in markets like the UK will shape the architecture of digital access for the next decade. The balance struck between robust identity proofing (such as ID scanning) and privacy-preserving methods (such as cryptographic proofs) will determine whether this new era is viewed as a necessary safeguard or an overreaching infringement on personal digital sovereignty. The current iteration, with its diverse and sometimes intrusive verification options, suggests a strong bias toward assurance over anonymity, signaling a significant shift in the digital contract between user and device.