The narrative surrounding artificial intelligence has reached a point of dizzying volatility, characterized by a constant oscillation between extreme optimism and dire warnings of systemic collapse. To some, we are in the midst of a generational gold rush that will redefine the human condition; to others, the industry is a speculative bubble teetering on the edge of a correction. Amidst these conflicting headlines, the 2026 AI Index provides a necessary empirical anchor, offering a comprehensive "report card" that evaluates the technology’s progress, its environmental toll, and its profound impact on the global labor market. The data suggests that while the industry is sprinting at an unprecedented pace, the infrastructure, regulatory frameworks, and societal safety nets are struggling to keep their footing.

Contrary to recent skepticism suggesting that large language models (LLMs) might be hitting a "data wall" or a plateau in scaling laws, the latest performance metrics indicate that top-tier models continue to improve. AI is being adopted faster than either the personal computer or the internet was. AI-driven companies are also growing revenue faster than firms in any previous technology cycle. That growth is not cheap. Sustaining it requires staggering capital expenditure, with hundreds of billions of dollars flowing into specialized semiconductors and massive data centers. The result is a "sprint" mentality in which the technology advances faster than our ability to comprehend, much less govern, its implications.

The most immediate, and perhaps most overlooked, cost of this acceleration is the physical footprint of the digital mind. Training and deploying frontier models now consumes energy on a scale that rivals the electricity use of medium-sized nations. Global AI data centers draw approximately 29.6 gigawatts of power, roughly equivalent to the peak demand of the entire state of New York. The environmental impact extends beyond electricity to water, which is used primarily to cool these massive server clusters. Estimates suggest that the annual water consumption required to run a single high-end model such as GPT-4o could exceed the drinking water needs of 12 million people. The supply chain behind this growth is also perilously narrow. While the United States leads in data center density, nearly every leading AI chip is fabricated by a single company in Taiwan: TSMC. This creates a single point of failure that ties the future of global AI to the geopolitical stability of the Taiwan Strait.

On the geopolitical stage, the long-anticipated rivalry between the United States and China has reached near-parity. Data from Arena, a community-driven ranking platform, shows that the performance gap between American and Chinese models has all but closed. OpenAI held a decisive lead in early 2023, but the field leveled significantly through 2024 as Google and Anthropic released competitive models. By February 2025, the Chinese lab DeepSeek had disrupted the market with its R1 model, which briefly matched the performance of the world’s leading models. As of early 2026, the rankings form a crowded field in which Anthropic, xAI, Google, and OpenAI lead by the thinnest of margins, closely followed by Chinese giants such as Alibaba.

The competition has shifted from raw capability toward a more nuanced contest over reliability, cost-efficiency, and practical utility, and the two superpowers are playing to different strengths. The U.S. holds a commanding lead in capital and computing infrastructure, hosting over 5,400 data centers, roughly ten times as many as its nearest competitor. China, by contrast, dominates the "physical" side of AI, leading the world in robotics, and it also leads in AI-related patent filings and the sheer volume of research publications. This divergence suggests a future in which the U.S. may lead in the "brains" of AI while China leads in its "hands" and "eyes."

As the stakes rise, the open scientific inquiry that once defined the AI field is giving way to "black box" secrecy. Major labs such as OpenAI, Anthropic, and Google have largely stopped disclosing their training methods, parameter counts, or the composition of their datasets. This opacity is a significant obstacle for academia: without access to the models’ underlying mechanics, independent researchers find it increasingly difficult to study model behavior or develop standardized safety protocols. We are building ever more powerful systems while knowing less about their internal logic, a trend that experts warn could lead to unpredictable and unmanageable behavior.

Technical benchmarks, once the gold standard for measuring progress, face a crisis of credibility of their own. As models saturate existing tests, those tests become obsolete or, worse, misleading. Many popular benchmarks are now "contaminated," meaning the test questions have leaked into models’ training data, allowing them to "memorize" answers rather than "reason" through them. One widely used math benchmark was recently found to have a 42% error rate in its own answer key, yet it is still used to rank multi-billion-dollar systems. The unevenness of AI capability, often called "jagged intelligence," is starkest in the physical world: AI can now pass PhD-level science exams, yet humanoid robots succeed at only about 12% of basic household tasks. This gap between digital reasoning and physical dexterity remains one of the industry’s biggest unsolved problems.

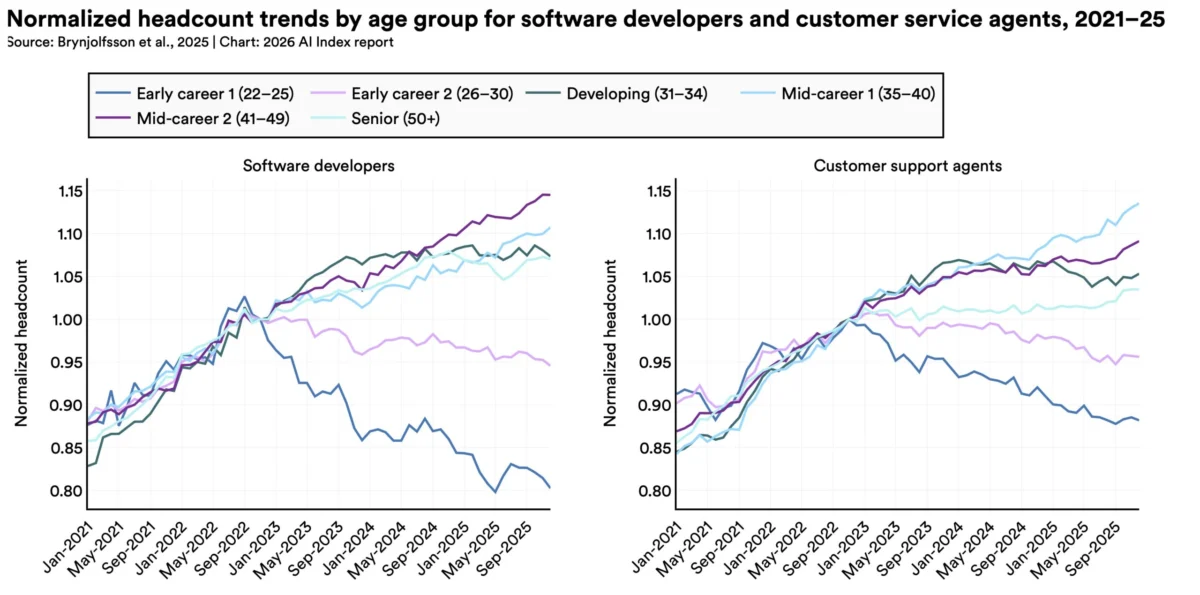

The economic consequences of this "jagged intelligence" are beginning to show up in the labor market, particularly for entry-level professionals. AI adoption is nearing saturation: 88% of organizations report using the technology in some capacity. The broader economic impact remains difficult to quantify, but early indicators are concerning for the next generation of workers. In software development, employment among workers aged 22 to 25 has fallen by nearly 20% since 2022. Macroeconomic factors play a role, but there is a growing consensus that AI is acting as a "force multiplier" for senior staff, reducing demand for junior developers and entry-level support staff.

The productivity gains are real, with improvements of 14% in customer service and 26% in software engineering, but they are concentrated in routine or data-heavy tasks. In roles requiring high-level judgment, nuance, or physical presence, the impact is less pronounced. Employer sentiment is nonetheless shifting: a McKinsey survey indicates that a third of organizations expect AI to produce a net reduction in their workforce over the next year. Meanwhile, a profound "optimism gap" has opened between those who build AI and those who live with it. While over 70% of AI experts believe the technology will improve the future of work, only 23% of the general public agrees.

The public’s anxiety is mirrored in a fractured and often contradictory regulatory environment. Globally, there have been some milestones, such as the EU AI Act’s implementation of bans on certain uses of predictive policing and emotion recognition. Japan, South Korea, and Italy have also codified national AI frameworks. In the United States, however, regulation is defined by a clash between federal and state authority. At the federal level, an executive order from the Trump administration has sought to limit states’ ability to impose restrictive regulations, favoring a deregulatory stance aimed at maintaining American competitiveness against China.

Despite this federal pressure, U.S. states have become hotbeds of legislative activity. In 2025 alone, state legislatures passed a record 150 AI-related bills into law. California’s SB 53 and New York’s RAISE Act are significant attempts to impose transparency on AI developers, mandating safety disclosures and whistleblower protections. This "patchwork" of regulations creates a complex legal landscape for tech companies to navigate, but it also reflects deep-seated public mistrust. Americans, in particular, report the lowest levels of trust in their government’s ability to regulate AI appropriately, with many fearing that regulation will be either too slow to catch the risks or too weak to hold developers accountable.

As we look toward the latter half of the decade, the trajectory of artificial intelligence remains a study in contradictions. The models are getting smarter, yet our ability to measure that intelligence is breaking down. The industry is generating immense wealth, yet it is consuming resources at an unsustainable rate. The technology is being woven into daily life with unprecedented speed, yet the social contract around work and privacy is fraying. The 2026 data confirms that AI is no longer a futuristic prospect; it is a present reality that is outrunning our traditional methods of oversight. The challenge for the coming years will be not just making AI more capable, but making it more legible, more sustainable, and more aligned with the human workforce it is increasingly designed to augment, or even replace.