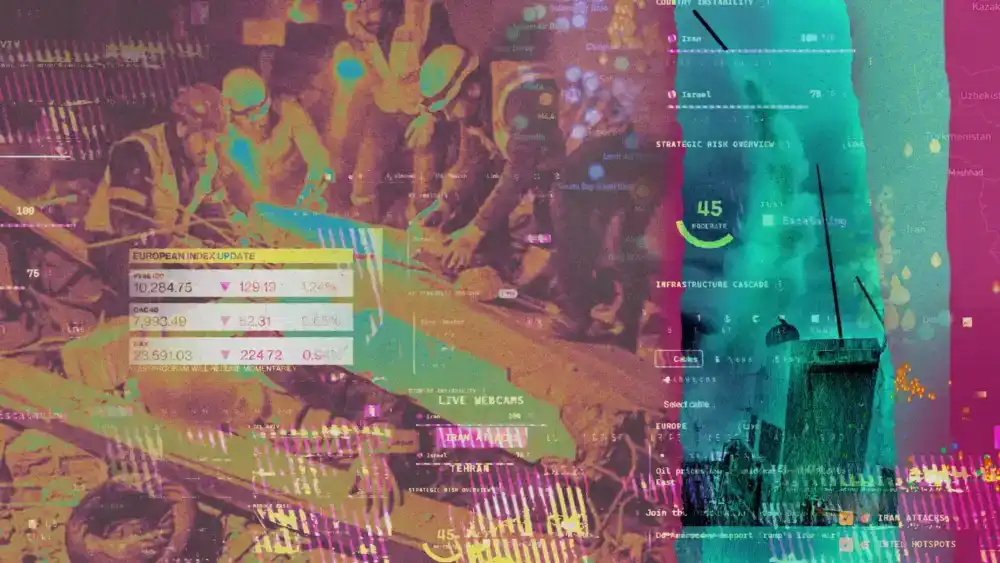

The digital landscape of modern warfare has shifted from the curated broadcasts of 24-hour news cycles to a fragmented, high-velocity ecosystem of real-time data visualizations and algorithmic analysis. As tensions between the United States, Israel, and Iran reached a fever pitch in recent months, a new phenomenon emerged on the screens of millions: the democratization of intelligence. Through the lens of "vibe-coded" dashboards and AI-integrated prediction markets, the grim realities of kinetic conflict are being transformed into a high-stakes digital spectacle, blurring the lines between informed observation and perverse entertainment.

At the heart of this transformation is the rise of the intelligence dashboard. Once the exclusive domain of state actors and elite security firms, these platforms now offer civilians access to a dizzying array of open-source intelligence (OSINT). A notable example, developed by partners at the venture capital firm Andreessen Horowitz, serves as a microcosm of this trend. By synthesizing satellite imagery, maritime tracking data, and real-time news feeds into a single interface, such tools provide a God’s-eye view of the battlefield. Yet they go a step further by integrating a financial layer: direct links to prediction markets like Kalshi and Polymarket. Here, users don’t just watch the news; they bet on it. Payouts are triggered by events as grave as the timing of a missile strike or the selection of a new Iranian Supreme Leader, as seen with the recent elevation of Mojtaba Khamenei.

The speed at which these tools are being deployed is a testament to the power of generative AI in software development. The term "vibe-coding" has entered the lexicon to describe the process by which individuals with minimal technical expertise use large language models (LLMs) to assemble complex web applications in a matter of days. These AI-assisted builds have gained such prominence that they have caught the eye of industry titans like the founders of Palantir, a company that serves as a primary conduit for the U.S. military’s own AI integration. This creates a strange feedback loop: the same AI models that the Department of Defense uses to assist in strategic decision-making, such as Anthropic’s Claude, are also being used by the public to monitor and gamify those very actions.

This technological convergence has fueled a narrative of media obsolescence. Creators of these dashboards often market them as a "truth-seeking" alternative to traditional journalism, which they characterize as slow, biased, or overly sanitized. On platforms like LinkedIn and X, testimonials from users claim they "learned more in 30 seconds" from a live-updating map of Iranian airspace than from hours of traditional reporting. This sentiment reflects a growing public hunger for "raw" data, driven by a profound distrust in institutional gatekeepers. However, this shift toward unmediated data feeds introduces a paradox: while information is more accessible than ever, true understanding may be more elusive.

The core issue lies in the distinction between information and intelligence. As digital investigation experts like Craig Silverman note, the sheer volume of signals provided by these dashboards can create an "illusion of control." Having access to a map of strike locations, real-time cryptocurrency price fluctuations, and AI-generated news summaries does not equate to having the historical context or analytical expertise required to interpret them. In a traditional intelligence agency, data is vetted by subject matter experts who understand the nuances of regional politics and the potential for deception. In the world of the AI dashboard, the data is often uncurated and presented with a "flat" hierarchy, where a minor maritime anomaly is given the same visual weight as a major diplomatic shift.

Furthermore, the integration of AI-generated summaries introduces a significant risk of "hallucination" or inaccuracy. When an LLM is tasked with summarizing complex, rapidly evolving events, it may conflate details or miss critical subtleties. Because these dashboards are built for speed, the rigorous fact-checking protocols of traditional newsrooms are often sacrificed for the sake of real-time updates. This creates fertile ground for the "synthetic fog of war," where AI-mediated information distorts the reality of the conflict as much as it clarifies it.

The most alarming aspect of this new information environment is the rise of synthetic media, particularly AI-generated satellite imagery. For decades, satellite photography was considered the "gold standard" of objective truth in conflict monitoring. It provided undeniable visual evidence of troop movements, site damage, and military posturing. However, recent reports from the Financial Times and other investigative outlets have highlighted a surge in sophisticated AI fakes circulating on social media. These manipulated images can show "damage" to facilities that remain untouched or "movements" that never occurred. When these fakes find their way into public intelligence dashboards, they can trigger massive swings in prediction markets and influence public opinion before they can be debunked. This erosion of visual evidence threatens to undermine one of the few remaining tools for objective accountability in wartime.

The financialization of these insights through prediction markets adds another layer of complexity. When war becomes a betting event, the incentives for information accuracy are warped. While some argue that prediction markets are more accurate than pundits because participants have "skin in the game," the reality is that these markets can be manipulated by those with the power to create "noise." If a false report of a nuclear strike or a leadership change can trigger a payout, the motivation to spread disinformation becomes a financial one. This turns the tragedy of war into a "circus," where the suffering of those on the ground is abstracted into data points on a profit-and-loss statement.

The military’s own reliance on these tools lends institutional legitimacy to the chaos. The use of Anthropic’s Claude by the U.S. military, despite official concerns regarding supply chain risks and the inherent unpredictability of AI models, signals to the public that AI is the "pro" tool for intelligence. This creates a trickle-down effect in which members of the public feel they must use similar tools to stay competitive or informed. Yet the military uses these models within highly controlled, classified environments with layers of human oversight. The civilian equivalent lacks these safeguards, leading to a dangerous "intelligence gap" in which the public believes it is seeing what the generals see, without understanding the filters through which that information is being processed.

Looking toward the future, the trend of AI-mediated conflict observation appears irreversible. We are entering an era of "epistemic fragmentation," where different audiences will follow different dashboards, each offering a slightly different version of "the truth" based on their underlying algorithms and data sources. The role of the journalist is becoming increasingly vital, not as a provider of raw data, but as a provider of context, verification, and ethical grounding. While AI is undeniably proficient at assembling vast quantities of information, it lacks the moral compass and the nuanced judgment required to report on the human cost of war.

The "theater" of the Iran conflict, as seen through these digital interfaces, serves as a warning. When we view war through a screen that combines live strikes with betting odds and AI-generated "vibes," we risk losing our capacity for empathy and our ability to distinguish fact from fiction. The democratization of information is a noble goal, but without the rigorous application of human intelligence, it risks becoming a tool for mass confusion. As these technologies continue to evolve, the challenge for society will be to harness the power of AI to clarify the world, rather than allowing it to turn the world’s most dangerous conflicts into a high-tech game of chance. The synthetic fog of war is not just a technological hurdle; it is a cognitive one, demanding a renewed commitment to the slow, difficult work of true understanding in an age of instant, automated "intel."