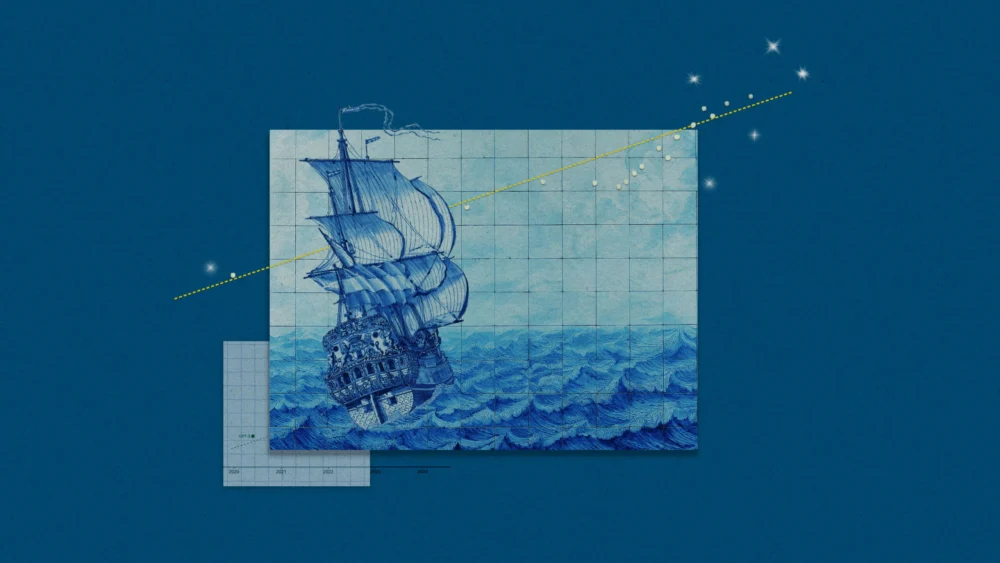

The release of a new large language model (LLM) from major development labs—be it OpenAI, Google DeepMind, or Anthropic—no longer triggers mere technical interest; it initiates a collective, global intake of breath. The industry awaits validation, or perhaps confirmation of impending disruption, often sourced from a single, compelling visualization: the Time Horizon plot produced by the research nonprofit Model Evaluation & Threat Research (METR). Since its debut, this graph has solidified its position as the definitive, if highly contested, barometer of rapid AI advancement, powerfully suggesting that fundamental AI capabilities are developing at an exponential clip.

This iconic graph, tracking the progress of frontier models over time, serves as a flashpoint for intense debate surrounding both the potential existential risks of superintelligence and the near-term economic implications of radical automation. When Anthropic released Claude Opus 4.5, for instance, the model’s performance on METR’s evaluation immediately exceeded the already dramatic exponential curve. METR’s subsequent announcement that Opus 4.5 demonstrated a capability level corresponding to a five-hour human-equivalent task sparked viral reactions. An Anthropic safety researcher publicly noted the need to pivot their research focus, while another employee’s visceral reaction—a widely shared, semi-ironic expression of alarm—perfectly captured the mix of awe and anxiety now inherent in frontier AI development.

Yet, this breathless reception often obscures the complex, nuanced reality of the underlying data. The very dramatic nature of the graph demands a deeper, more critical examination of its methodology, scope, and, crucially, its limitations. As Sydney Von Arx, a member of METR’s technical staff, cautions, the public discourse frequently "reads too much into the graph." The estimates for high-performing models like Opus 4.5, for example, are presented with substantial error bars. While five hours represents the central estimate, the true capability might range widely—from tasks requiring only two hours of human effort to those demanding 20 hours. This enormous variance, often stripped away in popular dissemination, highlights the inherent uncertainties in measuring novel, rapidly evolving capabilities.

Deconstructing the Time Horizon Metric

To understand why the METR plot generates such intense and polarized reactions, one must move beyond the visual spectacle of the upward-sweeping curve and scrutinize the ‘Time Horizon’ metric itself. This metric, plotted on the graph's Y-axis, is a novel construct developed by METR researchers to quantify the operational reach of LLMs in a specific, high-stakes domain.

The process begins with the creation of an extensive task suite, heavily weighted toward software engineering and coding challenges, ranging from simple diagnostic questions to multi-step, multi-hour development problems. Human expert coders are then enlisted to complete these tasks, establishing a "human baseline time" for each challenge. This baseline time, spanning seconds to several hours, is the linchpin of the entire metric.

When LLMs are then tested against this suite, researchers observe a predictable pattern: models ace the quick, simple tasks, but accuracy falls off sharply on challenges that take humans progressively longer to complete. The ‘Time Horizon’ is defined statistically as the point on this human task-length scale at which the tested model achieves a 50% success rate.
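
To make the 50% definition concrete, here is a minimal sketch. METR's paper, as we understand it, fits a logistic curve of success probability against the logarithm of human completion time and reads off where that curve crosses 50%. The code below assumes that approach but uses a plain gradient-descent fit; the function names `fit_logistic` and `time_horizon`, the `(human_minutes, succeeded)` input format, and the data are all hypothetical illustrations, not METR's code or results.

```python
import math


def fit_logistic(xs, ys, lr=0.1, steps=20_000):
    """Fit P(success) = sigmoid(a*x + b) by batch gradient descent on mean log-loss."""
    a = b = 0.0
    n = len(xs)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(a * x + b)))
            grad_a += (p - y) * x
            grad_b += p - y
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a, b


def time_horizon(runs):
    """Return the human task length (minutes) where fitted success crosses 50%.

    runs: list of (human_minutes, succeeded) pairs for one model (hypothetical format).
    """
    xs = [math.log2(minutes) for minutes, _ in runs]  # work in log2(human time)
    ys = [1.0 if ok else 0.0 for _, ok in runs]
    a, b = fit_logistic(xs, ys)
    if a >= 0:
        raise ValueError("success rate does not fall as tasks get longer")
    # sigmoid(a*x + b) equals 0.5 exactly where a*x + b == 0, i.e. x = -b / a
    return 2 ** (-b / a)


# Hypothetical data: 4 attempts per task length; successes fall as human time grows.
successes_out_of_4 = {1: 4, 4: 3, 16: 2, 64: 1, 256: 0}
runs = [(m, i < k) for m, k in successes_out_of_4.items() for i in range(4)]
print(f"50% time horizon: {time_horizon(runs):.1f} human-minutes")
```

Because the made-up results are symmetric around 16 minutes on a log scale, the fitted horizon should land at or very near 16 human-minutes. Note that the output is a length of *human* work, which is exactly the distinction the next paragraph stresses.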

The most persistent and consequential public misinterpretation stems directly from the nomenclature. As Thomas Kwa, a lead author on the original paper, has repeatedly stressed, a five-hour time horizon does not mean the model can operate independently for five continuous hours. It means the model succeeds about half the time on tasks that would typically take a human expert five hours to complete. This distinction between the human time a task requires and the time the model spends working is essential, yet routinely lost in translation. Kwa has suggested that every popular presentation of the graph should include the word "human" to qualify the time metric, a small fix that illustrates the organization's struggle against the simplifying force of technological hype.

Methodological Constraints and Real-World Drift

Outside researchers generally respect the rigor of METR's methodology. Daniel Kang, an assistant professor of computer science at the University of Illinois Urbana-Champaign, praised the METR study as "one of the most carefully designed studies in the literature." However, even the most meticulous measurement tools have boundaries, and the Time Horizon plot is no exception.

The primary limitation is domain specificity. The progress illustrated is overwhelmingly confined to structured coding and software engineering tasks. Advancements in this domain are profound and imply radical shifts in the software development lifecycle, but they do not necessarily extrapolate across the entire spectrum of human professional capabilities. As Kang notes, "A model can get better at coding, but it’s not going to magically get better at anything else." METR has explored less formal evaluations in other domains, yet the headline exponential trend is rooted firmly in the logic and structure of programming challenges.

Furthermore, the benchmark environment often fails to capture the chaotic reality of professional work, a factor METR researchers themselves attempted to quantify under the term "messiness." Messy tasks are those where the scoring criteria are ambiguous, where external conditions change mid-task, or where starting over after an error is not trivial. Models consistently perform worse on messy tasks than on clean, predefined challenges, even though the overall exponential pattern of improvement holds. A model achieving a one-hour time horizon in a controlled test environment cannot automatically replace one hour of work in a typical, unstructured corporate setting rife with ambiguity, interpersonal communication, and shifting objectives.

The reliance on human time as a proxy for task difficulty also invites expert skepticism. Inioluwa Deborah Raji, a Ph.D. student at UC Berkeley specializing in model evaluation, questions the fundamental assumption: "I don’t think it’s necessarily a given fact that because something takes longer, it’s going to be a harder task." Difficulty is multifaceted; some tasks are long and repetitive, while others are short but require extreme creativity or abstract reasoning. By prioritizing duration, the metric risks overstating the difficulty of lengthy but fundamentally routine computational challenges that LLMs excel at, while potentially overlooking complex judgments that humans make quickly.

Industry Implications: The Polarization of Progress

Despite the researchers’ nuanced caveats, the exponential nature of the METR trend has rendered it a powerful engine for both existential alarm and venture capital exuberance.

The growth is striking: METR's analysis found that the Time Horizon of top-tier models has doubled roughly every seven months. Over that period, models went from completing tasks requiring nine seconds of human effort in mid-2020 to tasks requiring forty minutes of human effort in late 2024. That pace outstrips many historical technological trends, including Moore's Law in its prime, which doubled transistor counts roughly every two years, and it gives concrete data to those who forecast a rapid, perhaps dangerous, approach to Artificial General Intelligence (AGI).
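
The figures above can be sanity-checked with a little arithmetic. The sketch below uses only numbers from the text plus two loudly labeled assumptions: that "mid-2020 to late 2024" spans about 53 months, and that Moore's Law is represented by a 24-month doubling time. The helper names are hypothetical.

```python
import math


def doublings(start, end):
    """Number of doublings needed to grow from start to end (same units)."""
    return math.log2(end / start)


def implied_doubling_months(start, end, elapsed_months):
    """Average doubling time implied by growing from start to end over elapsed_months."""
    return elapsed_months / doublings(start, end)


def growth_factor(elapsed_months, doubling_months):
    """Multiplicative growth over elapsed_months at a fixed doubling time."""
    return 2 ** (elapsed_months / doubling_months)


start_s, end_s = 9, 40 * 60  # 9 seconds -> 40 minutes of human effort, in seconds
elapsed = 53  # ASSUMPTION: ~mid-2020 to ~late 2024, taken as about 53 months

print(f"{doublings(start_s, end_s):.2f} doublings")  # prints "8.06 doublings"
print(f"~{implied_doubling_months(start_s, end_s, elapsed):.1f} months per doubling")  # ~6.6
# Over two years: a 7-month doubling time vs. an ASSUMED 24-month (Moore's Law) pace
print(f"x{growth_factor(24, 7):.1f} vs x{growth_factor(24, 24):.1f}")  # prints "x10.8 vs x2.0"
```

Roughly eight doublings in about four and a half years works out to a doubling every six to seven months, broadly in line with the seven-month figure; the exact value shifts with the months assumed for each endpoint.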

On one end of the spectrum, the graph fuels the existential risk (x-risk) community. It provided a key quantitative anchor for speculative narratives, such as the viral "AI 2027" forecast, which extrapolated the METR curve to predict the emergence of superintelligent AI capable of profoundly altering or destroying human civilization by 2030. For this group, the graph is not merely a scientific measurement; it is a ticking clock, reinforcing the need for immediate, drastic regulatory and safety interventions.

Conversely, the same exponential curve is viewed by investors as a definitive signal of unprecedented economic transformation. High-profile venture capital firms, such as Sequoia Capital, have leveraged the METR data to argue for the imminent arrival of AGI capable of acting as an effective "employee or contractor." As Sonya Huang, a general partner at Sequoia, noted, this trend challenges established business planning models, provoking firms to ask: "What will you do when your plans are measured in centuries?" The implication is clear: the speed of progress necessitates a radical restructuring of investment strategies to capture the value unlocked by increasingly autonomous AI agents.

This dual interpretation underscores the plot’s complicated reputation. METR was established with the explicit mission of assessing the risks posed by frontier AI systems, yet its signature finding has been co-opted into diametrically opposed narratives—one predicting doom, the other forecasting utopian abundance.

The Trajectory of Quantification

The researchers at METR find themselves in the unenviable position of having created a scientifically sound, yet perpetually misinterpreted, instrument. They continue to try to steer the narrative back toward methodological rigor, working on extensive FAQ documents and public clarifications. However, the lead researchers remain pessimistic about the efficacy of these efforts, recognizing the magnetic pull of the exponential trend. As Thomas Kwa acknowledged, the "hype machine will basically, whatever we do, just strip out all the caveats."

Despite the pervasive misuse, the METR team stands by the core utility of their work. Sydney Von Arx, while advising caution—"You should absolutely not tie your life to this graph"—also expresses confidence in the underlying phenomenon: "I bet that this trend is gonna hold."

This paradoxical stance defines the current state of AI measurement. The Time Horizon plot is an imperfect instrument, constrained by its domain, limited by measurement uncertainty, and continuously distorted by public sensationalism. Nevertheless, in a field moving at breakneck speed, where intuitions about rapid progress rarely rest on concrete numbers, the METR plot offers one of the few rigorously constructed tools for quantifying that progress.

The real value of the Time Horizon metric is not its predictive certainty for AGI timelines, but its function as a calibrated scientific mirror, reflecting the focused, profound advances occurring in automated knowledge work, specifically software engineering. By translating raw model performance into human-equivalent workload capacity, it provides a vital, tangible metric for businesses and regulators attempting to grasp the pace of change. It forces stakeholders to confront the reality that AI is not just incrementally improving, but accelerating in its ability to take on complex, multi-step tasks. In the absence of a perfect, generalized metric for intelligence, this imperfect, domain-specific tool remains indispensable for navigating the technological turbulence ahead.