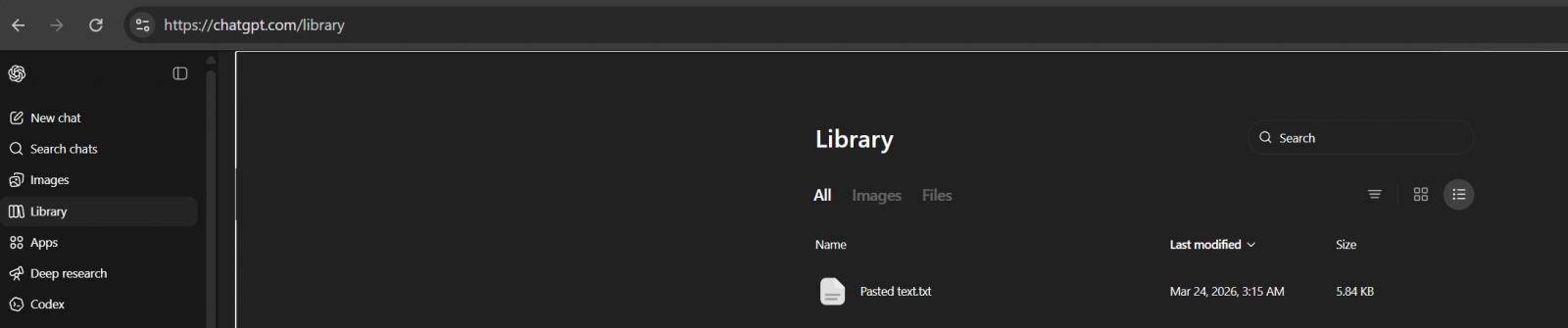

OpenAI is systematically integrating a new capability within its flagship conversational AI platform, ChatGPT, dubbed the "Library." This feature functions as a dedicated, persistent cloud repository, allowing subscribers to centralize and manage personal files, documents, and images uploaded during interactions with the model. This move signifies a crucial evolution in how users interface with large language models (LLMs), transitioning from ephemeral session-based data handling to a more structured, archival approach reminiscent of traditional personal cloud storage solutions, albeit intrinsically linked to the AI’s operational context.

The rollout is tiered and currently restricted to paying customers on the ChatGPT Plus, Pro, and Business plans. Geographically, the initial deployment excludes users in the European Economic Area (EEA), Switzerland, and the United Kingdom, suggesting a phased launch potentially influenced by stringent regional data sovereignty and privacy regulations such as the GDPR. For eligible users, the Library appears automatically in the persistent sidebar navigation of the ChatGPT web interface once the update arrives, with no setup required.

A notable initial observation for users is the feature’s pre-populated state. Even upon first launch, the Library often contains artifacts from recent prior sessions—specifically, files uploaded by the user over the preceding weeks. This is not a system anomaly but a deliberate design choice. OpenAI has confirmed that, by default, ChatGPT is configured to automatically archive uploaded and generated files—including documents (like PDFs or spreadsheets), presentations, and visual assets—into this secure, dedicated location. The explicit purpose is to facilitate easy retrieval and contextual referencing in subsequent, unrelated chat sessions. As stated in their supporting documentation, this mechanism ensures that valuable contextual data, previously transient, becomes persistently available for future queries, enhancing the continuity and depth of AI assistance.

It is important to delineate the scope of the Library versus other content areas. Uploaded documents and static images reside here, but AI-generated imagery produced with ChatGPT’s image tools (such as the DALL-E integration) will continue to be managed in the dedicated ‘Images’ tab or gallery view. The platform therefore keeps model-generated images separate from the files held in the Library, which serves as the primary home for user-controlled source material.

The Mechanics of Persistent Context and Data Sovereignty

The fundamental shift underpinning the Library feature relates to the concept of persistent context. In earlier iterations of ChatGPT, context was largely session-bound; once a chat thread concluded or timed out, the specific files referenced often required re-uploading for subsequent discussions. The Library bypasses this friction. By storing files securely—and importantly, associating them directly with the user’s account—OpenAI is creating a personalized knowledge base that the underlying LLM can draw upon, provided the user explicitly permits its use within a new interaction. This transforms ChatGPT from a stateless query engine into a stateful, personalized digital assistant anchored by the user’s proprietary data corpus.

Data retention policies within this new structure are explicit yet carry significant implications for users concerned about data lifecycles. Files saved to the Library remain stored on OpenAI’s infrastructure indefinitely until the user takes manual action to remove them. Crucially, the system distinguishes between deleting a chat thread and deleting the source file itself. Deleting a conversation that utilized an uploaded file will not automatically purge that file from the Library. Users must navigate to the Library interface and execute a specific deletion command for the file to be marked for removal.

Actual erasure follows within 30 days of deletion: OpenAI indicates that files marked for deletion will be purged from its active servers within this period. Any rationale for the window is speculative, but it is likely rooted in established industry practices around data redundancy and backup protocols and, potentially, in legal or regulatory requirements that call for a grace period for data recovery or auditing. For enterprise users accustomed to immediate data destruction upon request, this 30-day buffer warrants careful consideration during operational deployment planning.
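
The retention rules described above can be sketched as a small model. This is an illustrative toy, not OpenAI's implementation: the `Account` and `LibraryFile` classes and their methods are hypothetical names, and only the 30-day figure and the chat-versus-file distinction come from the article.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict, List, Optional

# The 30-day figure is the one reported in the article.
PURGE_WINDOW = timedelta(days=30)


@dataclass
class LibraryFile:
    name: str
    deleted_at: Optional[datetime] = None  # None means the file is still retained


@dataclass
class Account:
    library: Dict[str, LibraryFile] = field(default_factory=dict)  # file name -> file
    chats: Dict[str, List[str]] = field(default_factory=dict)      # chat id -> file names

    def upload(self, chat_id: str, name: str) -> None:
        # Uploads are archived to the Library by default.
        self.library[name] = LibraryFile(name)
        self.chats.setdefault(chat_id, []).append(name)

    def delete_chat(self, chat_id: str) -> None:
        # Deleting a conversation leaves the Library untouched.
        self.chats.pop(chat_id, None)

    def delete_file(self, name: str, now: datetime) -> None:
        # Only an explicit Library deletion marks the file for removal.
        self.library[name].deleted_at = now

    def purge_deadline(self, name: str) -> Optional[datetime]:
        # Latest date by which a deleted file should be purged, or None if retained.
        f = self.library[name]
        return None if f.deleted_at is None else f.deleted_at + PURGE_WINDOW
```

In this model, calling `delete_chat` after an upload leaves the file in `library` with no purge deadline; a deadline exists only after `delete_file` is called.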

Industry Implications: The Commoditization of Context

The introduction of a structured file library carries profound implications for the broader generative AI ecosystem and enterprise adoption. Historically, a major hurdle for businesses integrating LLMs was ensuring that proprietary, domain-specific knowledge—internal policies, financial reports, technical schematics—could be reliably referenced without constant re-ingestion. The Library offers a formalized, subscription-gated solution to this challenge.

1. Enhanced Enterprise Suitability: For organizations utilizing ChatGPT Business, the Library transforms the platform into a more robust knowledge management tool. Instead of relying solely on custom fine-tuning or complex Retrieval-Augmented Generation (RAG) pipelines built externally, core documents can now reside within the platform’s secure perimeter (relative to the user’s account), ready for instant recall by the AI for summarization, analysis, or Q&A. This lowers the barrier to entry for sophisticated internal use cases.

2. Competitive Differentiation: As the LLM market matures, features that enhance personalization and context retention become critical differentiators. Competitors offering similar conversational interfaces must now match or exceed this persistent storage capability. The Library forces a standardization around the expectation that advanced AI services should not treat user data as disposable after a single interaction.

3. The Cloud Storage Paradigm Shift: This feature blurs the lines between AI service providers and traditional cloud storage platforms. Users are effectively entrusting OpenAI not just with processing power, but with the secure hosting of their digital assets. This demands an elevated level of scrutiny regarding OpenAI’s security architecture, encryption standards, and adherence to data residency promises—especially given the tiered global rollout strategy that currently excludes key regulatory zones. The trust calculus shifts: users are trusting the platform not only for accuracy but for archival integrity.

Expert Analysis: Security, Scalability, and Architectural Choices

From an architectural perspective, managing a persistent library of diverse file types—from small text documents to potentially large spreadsheets or high-resolution images—presents significant engineering challenges related to storage optimization, indexing, and rapid retrieval latency.

Indexing and Vectorization: For the files to be useful in a chat context, they must be indexed and likely converted into vector embeddings that the LLM can semantically query. The Library is not merely a file dump; it is a pre-processed repository. The efficiency with which OpenAI can vectorize, store, and retrieve these embeddings, ensuring they are contextually linked to the user’s profile, will dictate the feature’s ultimate utility. Slow retrieval would negate the benefit of persistence.
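
To make the idea of a pre-processed, per-user index concrete, here is a minimal sketch. It assumes nothing about OpenAI's actual pipeline: the `LibraryIndex` class is hypothetical, and a bag-of-words count vector stands in for a learned embedding so the example runs with the standard library alone.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def embed(text: str) -> Counter:
    # Toy stand-in for a learned embedding: a bag-of-words count vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class LibraryIndex:
    """Hypothetical index: every vector is keyed by its owner and file name."""

    def __init__(self) -> None:
        self._vectors: Dict[Tuple[str, str], Counter] = {}

    def add(self, user_id: str, file_name: str, text: str) -> None:
        # Vectorize once at upload time so later queries only compare vectors.
        self._vectors[(user_id, file_name)] = embed(text)

    def search(self, user_id: str, query: str, top_k: int = 3) -> List[str]:
        q = embed(query)
        # Only files owned by the querying user are ever scored.
        scored = [
            (cosine(q, vec), name)
            for (owner, name), vec in self._vectors.items()
            if owner == user_id
        ]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: (-s[0], s[1]))
        return [name for _, name in scored[:top_k]]
```

The owner check inside `search` is the part that ties results to the user's profile; a production system would presumably use dense embeddings and an approximate nearest-neighbour store, but the filtering principle would be the same.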

Security Posture: The designation of a "dedicated, secure location" is paramount. For enterprise clients, the security boundary around these stored files must be absolute. If a file uploaded by Company A is mistakenly indexed or accessible by Company B, the ramifications—ranging from data leakage lawsuits to the immediate termination of contracts—would be catastrophic. OpenAI must demonstrate robust logical separation between customer data sets, even within shared cloud infrastructure. The 30-day deletion window, while potentially legally prudent, suggests that data cleanup operations are integrated into scheduled maintenance cycles rather than being instantaneous, which warrants transparency for security-conscious consumers.

The Opt-Out Dilemma: That files are saved by default deserves scrutiny. While OpenAI provides mechanisms for manual deletion, the initial configuration biases toward retention. In the current regulatory climate, where explicit consent is often preferred over implied consent (especially for data storage), this default places the onus of proactive data hygiene entirely on the user. Future iterations may benefit from allowing users to set a default preference: "Never save uploads to Library unless explicitly instructed."
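
The suggested preference amounts to a single account-level setting. The sketch below is purely hypothetical: `UploadSavePolicy` and `should_save_upload` are invented names for illustration, and no such setting is described in the source.

```python
from enum import Enum


class UploadSavePolicy(Enum):
    ALWAYS = "always"          # save every upload (the default the article describes)
    ON_REQUEST = "on_request"  # save only when the user explicitly asks


def should_save_upload(policy: UploadSavePolicy, user_asked_to_save: bool = False) -> bool:
    # Under ALWAYS, retention needs no consent; under ON_REQUEST it is opt-in per file.
    if policy is UploadSavePolicy.ALWAYS:
        return True
    return user_asked_to_save
```

With `ON_REQUEST` as the account default, an upload would stay out of the Library unless the user confirmed the save, which moves the burden from deletion to consent.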

Future Trajectories: Beyond Simple Storage

The Library is likely the foundational layer for more sophisticated, proactive AI agents. Looking ahead, this persistent data store can fuel several advanced capabilities:

1. Proactive Contextual Agents: In the near future, the Library could enable ChatGPT agents to perform tasks without explicit initiation. For example, if the Library contains quarterly financial reports and an associated project roadmap, the AI could proactively alert the user to potential timeline conflicts based on data trends observed across those documents, without waiting for a direct prompt.

2. Cross-Modal Integration: Currently, the Library seems focused on supporting text-based analysis of uploaded files. Future development will almost certainly focus on deeper cross-modal integration. For instance, an uploaded CAD file might be analyzed by a specialized model, and the resulting summary or modified parameters stored back into the Library, ready for the primary LLM to integrate into a narrative report.

3. Personalized Model Anchoring: For high-tier business users, the Library might evolve into a mechanism for "anchoring" specialized instances of the LLM. Instead of fine-tuning the model weights (which is resource-intensive), the persistent Library could act as a massive, dynamically queried external memory block, allowing the model to exhibit hyper-specific knowledge of that organization’s history, jargon, and data structure on demand.

In summary, the introduction of the ChatGPT Library represents a significant infrastructural enhancement, transforming the user experience from episodic interaction to continuous, context-aware partnership. While the immediate benefits are convenience and continuity for paying subscribers outside restricted European zones, the long-term implications concern the architecture of personalized AI, the competitive landscape of cloud-integrated intelligence, and the critical importance of transparent data lifecycle management policies in an era where our digital assets are increasingly managed by artificial intelligence.