The digital infrastructure underpinning global business operations experienced significant turbulence today as a major service disruption crippled access to Microsoft Exchange Online, effectively locking users out of their email and calendars. The incident, which Microsoft formally acknowledged shortly after 06:42 UTC, underscores how heavily modern enterprises depend on cloud-native productivity suites, a dependence that turns even brief service interruptions into high-stakes operational emergencies. The failure was broad in scope, affecting virtually every established connection method for Exchange Online.

According to initial advisories published through Microsoft’s official service health channels, the outage affected the core mechanisms of Exchange Online access. These included, but were not limited to, Outlook on the web (OWA), installed Outlook desktop clients using cloud connections, and synchronization protocols such as Exchange ActiveSync (EAS). The reference code EX1253275, flagged within the Microsoft 365 admin center, became the identifier for this widespread connectivity breakdown. For organizations running on the cloud-hosted version of the venerable mail server, the outage translated directly into halted workflows, missed appointments, and an inability to send or receive critical correspondence.

Microsoft responded with a rapid investigation, triaging the situation against its service monitoring telemetry. The vendor reported that initial indicators suggested the degradation was easing for some impacted users and that engineers were validating whether the recovery would hold, yet real-world user reports painted a picture of ongoing paralysis. This gap between internal telemetry suggesting improvement and user experience indicating continued failure is a common flashpoint in major cloud incidents. It often signals that the primary fault has been addressed while propagation delays or residual session issues persist across a global client base.

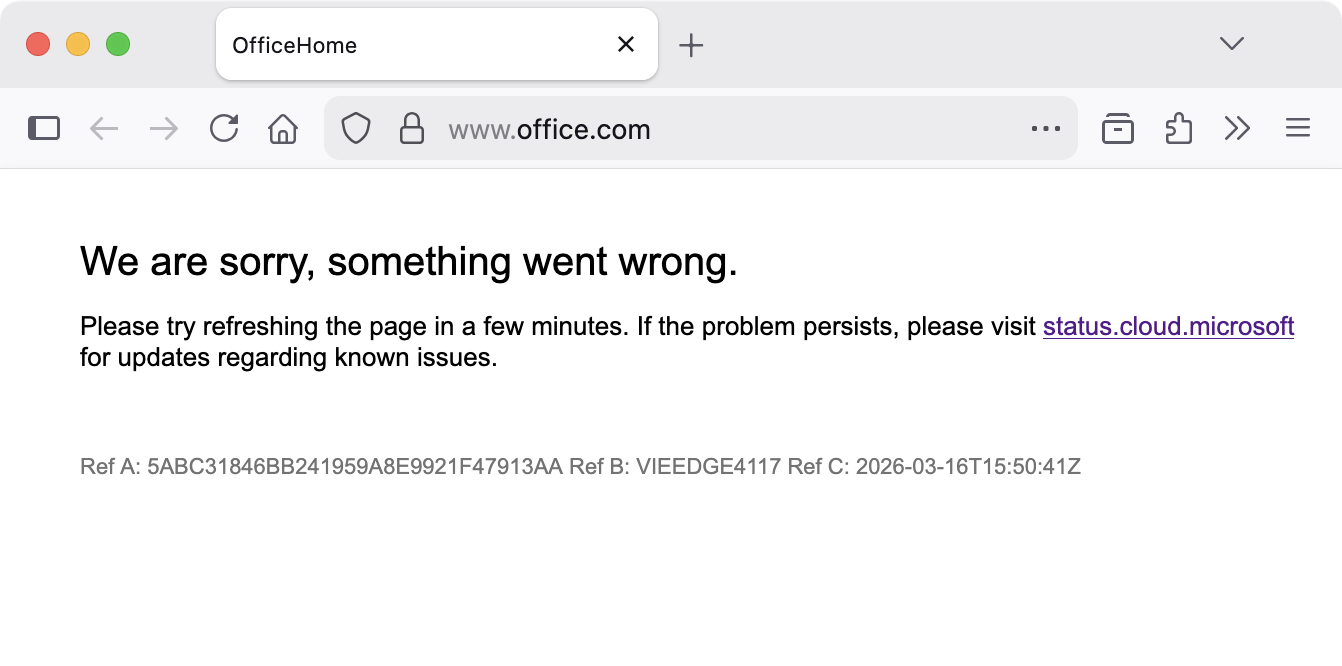

The scope of the disruption extended beyond traditional email access, bleeding into the broader Microsoft 365 portal functionality. At the time of peak disruption, attempts to access the primary Office.com web portal returned a generic, though telling, error message: "We are sorry, something went wrong. Please try refreshing the page in a few minutes." This suggests the underlying network or authentication fabric supporting the M365 suite, rather than just the specific Exchange service layer, was under duress.

Compounding the communication crisis, Microsoft concurrently began investigating a parallel but distinct infrastructure issue tracked under service alert MO1253428. This secondary problem affected the relatively new but rapidly adopted Microsoft 365 Copilot ecosystem. Web-based access points for the generative AI assistant, including the main Copilot sign-in pages and the chat interfaces hosted at office.com/chat, m365.cloud.microsoft, and copilot.cloud.microsoft, were rendered inaccessible. As a workaround, Microsoft advised affected enterprise users to switch to application-embedded instances of Copilot, such as those integrated into Teams or the local Office applications, temporarily bypassing the problematic web infrastructure. The root cause of this failure was tentatively identified as inefficient traffic processing within a specific segment of the service infrastructure, prompting immediate configuration adjustments.

Industry Implications and the Erosion of Resilience Perception

The recurring nature of these high-profile outages within the Microsoft ecosystem presents a complex challenge to the prevailing narrative surrounding cloud migration. For years, the promise of Software as a Service (SaaS) has been intrinsically linked to superior uptime, resilience, and scalability compared to on-premises infrastructure management. Major events like this erode that confidence.

From an enterprise risk management perspective, the dependence on a single vendor for mission-critical services like email and collaboration introduces a significant single point of failure. When Exchange Online falters, it is not merely an inconvenience; it is a direct impediment to business continuity. For sectors operating under strict regulatory mandates—such as finance, healthcare, and government—the inability to guarantee email availability can trigger compliance concerns and immediate contractual liabilities related to Service Level Agreements (SLAs).
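To make the SLA exposure concrete, the arithmetic can be sketched as follows. This is a minimal illustration, not a statement of any vendor's contract terms: the 99.9% target, the 30-day billing period, and the three-hour outage are all assumed figures chosen for the example, and the function names are hypothetical.

```python
from datetime import timedelta


def downtime_budget(availability_pct: float, period: timedelta) -> timedelta:
    """Maximum downtime permitted within `period` at the given availability target."""
    if not 0 <= availability_pct <= 100:
        raise ValueError("availability_pct must be between 0 and 100")
    return period * (1 - availability_pct / 100)


def achieved_availability(outage: timedelta, period: timedelta) -> float:
    """Availability percentage actually delivered, given total outage time in `period`."""
    return 100 * (1 - outage / period)


if __name__ == "__main__":
    month = timedelta(days=30)            # assumed billing period
    target = 99.9                         # assumed SLA target, in percent
    outage = timedelta(hours=3)           # assumed outage duration

    print("Downtime budget:", downtime_budget(target, month))
    print("Achieved availability: %.3f%%" % achieved_availability(outage, month))
```

Under these assumptions the monthly budget is roughly 43 minutes, so a single multi-hour outage would exhaust it several times over. Actual thresholds and the way downtime is measured depend on the specific agreement.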

The impact analysis must extend beyond direct productivity loss. During an email outage, secondary systems relying on Exchange hooks—such as ticketing systems, CRM platforms utilizing email notifications, and workflow automation tools—also experience cascading failures. This forces IT departments into reactive firefighting mode, often scrambling to deploy manual workarounds that introduce new vectors for human error, rather than focusing on strategic initiatives.

Expert Analysis: The Network Layer Vulnerability

The initial update regarding the Exchange Online degradation pointed toward an "underlying issue involving the supporting network infrastructure." This diagnostic detail is critical. Unlike application-level bugs that might manifest as feature failures or data corruption, a network infrastructure failure suggests a deeper, potentially systemic problem related to load balancing, routing, or core service mesh communication within Microsoft’s vast global data centers.

When core networking components falter, the remediation process becomes inherently complex. Fixing an application requires patching code; fixing a network component often requires careful configuration changes, rollbacks, or traffic re-routing across vast geographical distances, all while attempting to maintain minimal service for unaffected segments. The alert MO1253428, referencing inefficient traffic processing, strongly implies that the systems designed to distribute user load across available servers failed to perform their function correctly. This could stem from outdated load balancer configurations, failures in health check monitoring that incorrectly marked healthy nodes as unhealthy, or issues with Software-Defined Networking (SDN) overlays managing cross-datacenter communication.
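One of the failure modes named above, health checks that wrongly mark healthy nodes as unhealthy, is commonly guarded against by requiring several consecutive probe results before changing a node's state. The sketch below illustrates that general technique only; it does not describe Microsoft's load balancers, and the class name and threshold values are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class NodeHealth:
    """Tracks one backend node's state using consecutive-result thresholds."""
    fail_threshold: int = 3   # consecutive failures needed to mark unhealthy (assumed)
    pass_threshold: int = 2   # consecutive successes needed to mark healthy (assumed)
    healthy: bool = True
    _fails: int = 0
    _passes: int = 0

    def record(self, probe_ok: bool) -> bool:
        """Record one probe result and return the node's resulting state."""
        if probe_ok:
            self._passes += 1
            self._fails = 0
            if not self.healthy and self._passes >= self.pass_threshold:
                self.healthy = True
        else:
            self._fails += 1
            self._passes = 0
            if self.healthy and self._fails >= self.fail_threshold:
                self.healthy = False
        return self.healthy


if __name__ == "__main__":
    node = NodeHealth()
    # A single dropped probe does not remove the node from rotation.
    for ok in [True, False, True, False, False, False, True, True]:
        print(ok, "->", "healthy" if node.record(ok) else "unhealthy")
```

The trade-off is detection latency: higher thresholds tolerate transient probe loss but take longer to pull a node that has genuinely failed.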

Furthermore, the simultaneous, though separately tracked, issue affecting the Copilot web clients suggests a potential cross-service dependency on the same core network fabric. If a transport layer or authentication gateway shared by Exchange Online and the Microsoft 365 web front ends experienced congestion or failure, that would explain how disparate symptoms could arise from a shared systemic weakness. This highlights the architectural reality of hyper-scale cloud providers: services are deeply interconnected, and a fault in one foundational layer can ripple unexpectedly across seemingly unrelated front-end applications.

Historical Context and the Trend of Recurrence

This event is not isolated. Microsoft’s cloud offerings show a pattern of recurring connectivity problems. A significant incident in January intermittently blocked access via the Internet Message Access Protocol version 4 (IMAP4), a protocol critical for third-party mail clients, and a similar event last November disrupted access via the classic Outlook desktop client.

These repetitions suggest that while Microsoft is adept at implementing short-term fixes, the root causes may lie in systemic architectural debt or insufficient failover mechanisms in specific, perhaps legacy, components of the Exchange Online infrastructure that interact with modern authentication or connection protocols. Each incident prompts a targeted fix, but the underlying fragility remains exposed until a comprehensive, infrastructure-wide overhaul is completed, a monumental task in an environment serving hundreds of millions of users.

The evolution of access methods (from desktop clients to web portals to integrated AI interfaces) adds layers of complexity. What worked reliably five years ago may now introduce a new vulnerability when interfacing with modern APIs or authentication flows required by tools like Copilot. The migration path for enterprises is rarely linear, meaning Microsoft must simultaneously support mature protocols (like EAS) while engineering stability for cutting-edge features.

Future Impact and Mitigation Strategies

The immediate aftermath of today’s outage will see increased scrutiny from enterprise customers regarding Microsoft’s transparency and future preventative measures. The commitment to providing a Post-Incident Report (PIR) is standard, but the content of that report will dictate the long-term confidence level.

For organizations utilizing Microsoft 365, the necessity for robust business continuity planning that acknowledges cloud vendor dependency is paramount. This extends beyond simple data backup to include strategic planning for communication continuity:

- Diversified Communication Channels: Maintaining active, tested failover channels (e.g., secondary, non-M365 based communication platforms for executive teams or critical response units) ensures that essential coordination can continue during a major platform outage.

- Protocol Resilience Testing: IT teams should regularly test access via diverse connection methods (web, desktop, mobile) to ensure they are not overly reliant on a single protocol that might be vulnerable during a targeted service degradation.

- Vendor Accountability Review: Contractual reviews of SLA thresholds and penalty structures become more critical following repeated incidents. While vendors rarely offer substantial financial compensation beyond service credits, the reputational cost and the pressure exerted by large customers serve as necessary market correction mechanisms.
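The protocol resilience testing described in the list above could start from something as simple as the following sketch. It only checks whether a TCP connection can be opened on each access path, which says nothing about authentication or mailbox access, so it is a first-line reachability signal rather than a full test. The hostnames are placeholders, and the function and variable names are hypothetical; substitute the endpoints your own clients actually use.

```python
import socket
from typing import Callable, Dict, Tuple

Endpoint = Tuple[str, int]

# Placeholder endpoints (assumptions for illustration only).
ACCESS_PATHS: Dict[str, Endpoint] = {
    "web": ("webmail.example.com", 443),
    "mobile sync": ("sync.example.com", 443),
    "imap": ("imap.example.com", 993),
}


def tcp_probe(endpoint: Endpoint, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to (host, port) opens within `timeout` seconds."""
    try:
        with socket.create_connection(endpoint, timeout=timeout):
            return True
    except OSError:
        return False


def check_access_paths(
    paths: Dict[str, Endpoint],
    probe: Callable[[Endpoint], bool] = tcp_probe,
) -> Dict[str, bool]:
    """Probe every access path and report which ones are reachable."""
    return {name: probe(endpoint) for name, endpoint in paths.items()}


def all_paths_down(results: Dict[str, bool]) -> bool:
    """True when at least one path was checked and none of them responded."""
    return bool(results) and not any(results.values())


if __name__ == "__main__":
    results = check_access_paths(ACCESS_PATHS)
    for name, ok in results.items():
        print(f"{name}: {'reachable' if ok else 'UNREACHABLE'}")
    if all_paths_down(results):
        print("All access paths are down; consider activating the failover channel.")
```

Passing the probe as a parameter keeps the check testable without network access and leaves room to swap in a deeper probe, such as an authenticated request, later.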

Looking forward, the trend toward deeply integrated AI assistants like Copilot means that future outages might impair cognitive workflows rather than just messaging. If the network infrastructure supporting Copilot’s reasoning engine fails, employees who rely on it to synthesize information, draft complex documents, or analyze data lose that capability at once, an operational slowdown potentially more profound than simply being unable to send an email.

The mitigation update confirmed that the core Exchange Online availability degradation had been addressed and attributed it to the supporting network infrastructure. However, the investigation into the MO1253428 issue affecting Microsoft 365 Copilot web access continued. This phased resolution illustrates the complexity of cloud dependency chains: resolving one issue does not automatically resolve related symptoms unless the shared foundational component is fully stabilized. As the digital economy continues its migration to the cloud, the stability of global service providers like Microsoft remains one of the most critical variables in maintaining enterprise productivity.