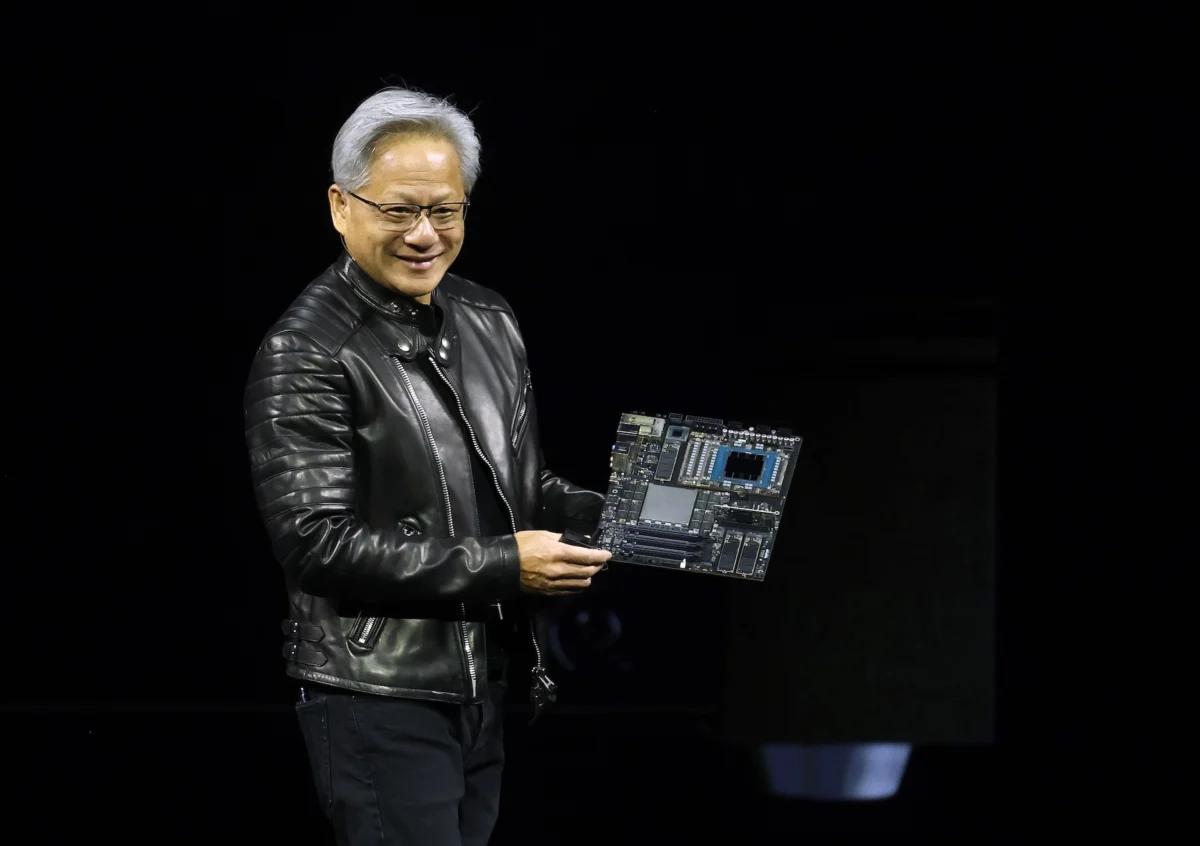

Nvidia is preparing to convene its annual GPU Technology Conference (GTC) in San Jose, California, at a critical moment for the technology industry. Scheduled to run from March 16 through March 19, the event has grown from a niche gathering of graphics hardware enthusiasts into the definitive summit of the artificial intelligence era. At its center is CEO Jensen Huang, whose keynote address, set for Monday at 11 a.m. PT at the SAP Center, is expected to serve as a manifesto for the next phase of the "Industrial Revolution of AI." As the company seeks to defend its multi-trillion-dollar valuation and its near-monopoly on high-end AI silicon, the 2026 keynote represents more than a product launch; it is a strategic repositioning of Nvidia as the foundational layer of the global digital economy.

The backdrop of this year’s GTC is one of unprecedented expectations and intensifying competition. While Nvidia currently commands an estimated 80% share of the AI training market, the industry is shifting its gaze toward the "last mile" of AI deployment: inference. This pivot, combined with the rise of autonomous software agents and the integration of AI into physical robotics, forms the core of the anticipated announcements. Huang’s two-hour address will likely attempt to bridge the gap between abstract computational power and practical, industry-specific utility, signaling a future where AI is not merely a tool for generation, but an autonomous participant in the workforce.

The Software Gambit: NemoClaw and the Rise of Agentic AI

One of the most significant rumors circulating ahead of GTC 2026 concerns Nvidia’s aggressive move into the software layer. Industry insiders suggest the company is poised to unveil NemoClaw, an open-source platform designed for the creation and management of enterprise-grade AI agents. While Nvidia has long provided the "shovels" for the AI gold rush in the form of GPUs, NemoClaw represents a move toward providing the "blueprints" for how that gold is extracted.

AI agents differ from standard large language models (LLMs) in their ability to execute multi-step tasks autonomously. While a chatbot might write an email, an agent can research a client’s history, draft a personalized proposal, schedule a follow-up meeting, and update a CRM database without requiring human intervention at every step. By offering an open-source platform for these agents, Nvidia is positioning itself as a direct competitor to software-centric players such as OpenAI and Microsoft. The open-source approach is strategically significant: it gives developers an incentive to build on Nvidia’s stack, creating a "moat" of software compatibility that makes it harder for enterprises to switch to rival hardware providers.

This move toward "Agentic AI" reflects a broader trend in the tech industry where the focus is moving from model size to model agency. For enterprises, the primary barrier to AI adoption has been the complexity of integrating models into existing workflows. NemoClaw aims to solve this by providing a structured framework that handles the orchestration of these complex tasks, potentially democratizing the deployment of sophisticated AI systems across sectors like finance, legal services, and supply chain management.

Solving the Inference Bottleneck: The New Hardware Paradigm

While software is the new frontier, hardware remains Nvidia’s fortress. At GTC 2026, the company is expected to address the most pressing economic challenge in the AI sector: the cost and speed of inference. Inference, the process by which a pre-trained model generates a response or makes a decision, is expected to account for the vast majority of computational work as AI applications scale. Unlike training, which is a large but largely one-time expense for each model, inference is a recurring operational cost incurred with every query.

Rumors point to the debut of a dedicated inference chip designed to undercut the cost per query of current general-purpose GPUs. This is a defensive necessity. Hyperscalers such as Google, Amazon, and Meta have been aggressively developing their own custom silicon (Google’s TPUs, Amazon’s Inferentia, and Meta’s MTIA) to reduce their reliance on Nvidia’s premium-priced hardware. By releasing a purpose-built inference processor, Nvidia hopes to prove that its architecture can outperform these in-house designs on both latency and energy efficiency.

The technical specifications of this rumored chip are likely to focus on high-bandwidth memory and reduced-precision arithmetic, both of which are essential for running large models economically at scale. If Nvidia can successfully lower the "inference tax," it will unlock a new wave of real-time AI applications, such as near-instantaneous language translation, complex real-time fraud detection, and more responsive autonomous systems. This hardware evolution is critical for maintaining Nvidia’s dominance as the market transitions from a "build phase" (buying chips for training) to a "utilization phase" (running chips for daily operations).

The $20 Billion Integration: Groq and the Future of Speed

Perhaps the most anticipated segment of the keynote involves Nvidia’s relationship with Groq, the high-speed inference startup. Following reports that Nvidia paid roughly $20 billion to license Groq’s technology and bring key leaders, including founder Jonathan Ross and president Sunny Madra, into the fold, the industry is eager to see the fruits of this collaboration.

Groq’s Language Processing Unit (LPU) architecture gained attention for its very fast token generation, which reduces the "lag" often associated with LLMs. Integrating Groq’s deterministic processing capabilities into the broader Nvidia ecosystem could therefore be significant. Market analysts, including those from Zacks Investment Research, suggest that the integration of Groq’s technology could lead to a new category of "Ultra-Low Latency" compute clusters. This would be particularly transformative for high-frequency trading, real-time interactive gaming, and the control systems of autonomous vehicles, where every millisecond of delay can have significant consequences.

The licensing deal and the hiring of Groq’s leadership also signal a shift in Nvidia’s strategy. They demonstrate a willingness to look beyond the company’s traditional GPU architecture for specialized solutions to the unique demands of generative AI. By absorbing one of its most potent challengers, Nvidia is effectively consolidating the "speed" segment of the market before competitors can gain a foothold.

Industry Verticals: From Healthcare to Humanoids

Beyond core compute and software, GTC 2026 will showcase Nvidia’s expansion into vertical industries, with a heavy emphasis on healthcare, robotics, and autonomous transport. In healthcare, the focus is expected to be on "Digital Biology": the use of generative AI to simulate protein folding and accelerate drug discovery. By providing both the compute and specialized models (such as BioNeMo) to pharmaceutical companies, Nvidia is positioning itself as an essential partner in the next decade of medical breakthroughs.

Robotics, however, may be the most visually striking part of the conference. Following the introduction of Project GR00T, Nvidia’s foundation model for humanoid robots, the company is expected to demonstrate advancements in how AI interacts with the physical world. The goal is to move robotics from rigid, pre-programmed movements to "generalist" behaviors, where a robot can learn tasks through observation and simulation within Nvidia’s Omniverse platform. This "digital twin" technology allows for millions of hours of training to occur in a virtual environment before a single physical motor is turned on, drastically reducing the cost and risk of developing autonomous hardware.

Economic and Geopolitical Implications

The stakes of Jensen Huang’s keynote extend far beyond the walls of the SAP Center. As Nvidia’s chips have become a form of "digital oil," the company sits at the heart of global geopolitical tensions. The 2026 conference will likely touch upon the company’s efforts to navigate export controls and diversify its supply chain. Furthermore, as the energy consumption of AI data centers becomes a political and environmental flashpoint, Huang is expected to emphasize the "performance-per-watt" improvements of new hardware, framing Nvidia’s technology as a tool for efficiency rather than just raw power.

The financial community will be watching closely for signs of sustainable growth. The massive capital expenditures by big tech companies on AI infrastructure have led some to worry about a "bubble." Huang’s task is to prove that the ROI on this hardware is real and that the shift to an AI-first economy is permanent. By focusing on inference and autonomous agents, he is attempting to show that the industry is moving into a phase of revenue generation, where AI starts paying for itself through increased productivity and new service models.

Conclusion: The Vision of an AI-Native World

As GTC 2026 kicks off, the narrative surrounding Nvidia is no longer just about chips; it is about the architecture of a new society. From the rumored NemoClaw platform to the next generation of inference-optimized silicon and the integration of Groq’s lightning-fast technology, Nvidia is building a comprehensive ecosystem that spans from the data center to the edge.

Jensen Huang’s keynote is likely to conclude with a vision of a world where AI is ubiquitous, invisible, and indispensable. Whether through the autonomous agents that manage our schedules or the humanoid robots that assist in manufacturing, the technologies debuted this week in San Jose will define the boundaries of the possible for years to come. For competitors, the message is clear: Nvidia is not content with leading the race; it intends to own the track, the cars, and the fuel. As the conference unfolds, the tech world will be watching to see if this ambitious roadmap can withstand the pressures of a rapidly maturing market and the relentless pace of innovation.