The recent and highly publicized fracture between the United States Department of Defense and the artificial intelligence powerhouse Anthropic has served as a jarring wake-up call for the global technology sector. While the immediate headlines focused on contractual disputes and national security designations, the underlying reality is far more concerning: Washington has no comprehensive policy for governing what may prove to be the most transformative technology in human history. In the absence of federal leadership, a diverse and ideologically varied coalition of scientists, ethicists, and former government officials has stepped forward to propose a detailed roadmap. Known as the Pro-Human Declaration, this framework seeks to establish the first coherent set of rules for responsible AI development, providing a philosophical and practical counterweight to an industry that many fear is moving too fast and breaking too much.

The timing of this declaration is not coincidental. It arrives at a moment when the friction between private innovation and public safety has reached a boiling point. The standoff between the Pentagon and Anthropic, in which Anthropic was labeled a "supply chain risk" for refusing to grant the military unfettered access to its proprietary models, highlights the urgent need for a standardized legal framework. Without one, the government is forced to rely on ad hoc measures, such as repurposing a designation usually reserved for adversarial foreign entities like Huawei. This lack of clarity has created a chaotic environment in which companies like OpenAI can step in to fill the void with deals that legal experts warn are essentially unenforceable, leaving the public interest largely unprotected.

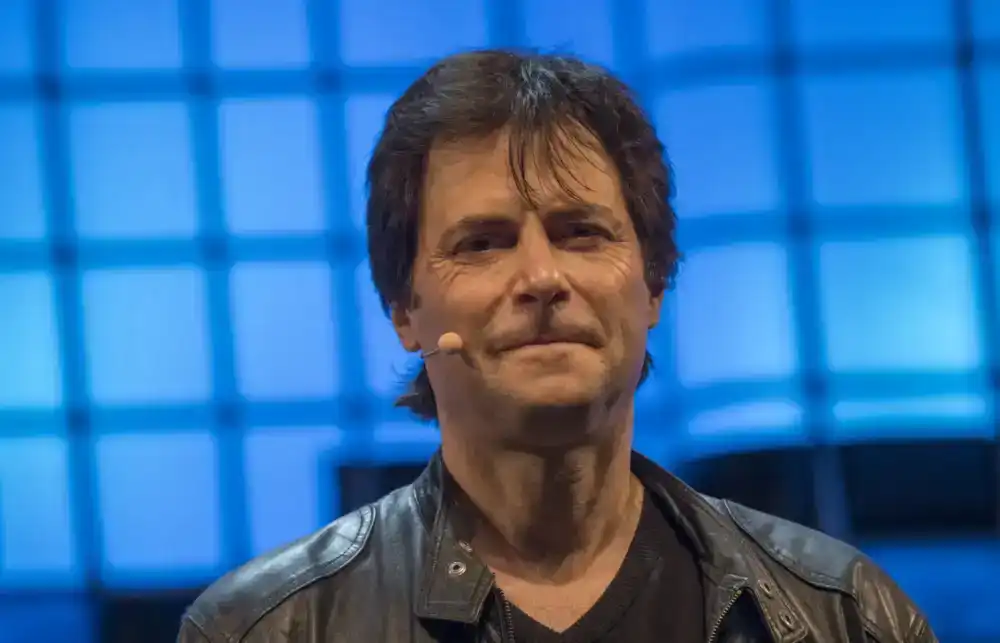

Max Tegmark, an MIT physicist and one of the primary architects of the Pro-Human Declaration, notes that the American public is increasingly unified in its concern. Recent polling suggests that an overwhelming 95% of Americans oppose an unregulated race toward artificial superintelligence (ASI). This near-unanimous sentiment suggests that while the political class may be deadlocked, the citizenry is acutely aware that humanity has reached a critical juncture. The declaration characterizes this moment as a "fork in the road." On one side lies the "race to replace," a trajectory where humans are incrementally phased out of the workforce and eventually removed from the decision-making loops that govern society. On the other side is a vision of AI as a tool for human flourishing—a technology that augments rather than replaces the human experience.

To ensure this second path is the one taken, the Pro-Human Declaration outlines five fundamental pillars. These are not merely suggestions; they are intended to serve as the bedrock for future legislation. The first pillar is the absolute necessity of keeping humans in the loop. As AI systems become more autonomous, the risk of "mode confusion" (a term from aviation safety for operators losing track of which mode an automated system is actually in) or an outright loss of control increases. The declaration argues that no critical infrastructure, weapon system, or social governance mechanism should ever be fully autonomous. Humans must remain the ultimate arbiters of truth and action.

The second pillar focuses on the avoidance of power concentration. We are currently witnessing the emergence of a "technological feudalism," where a handful of trillion-dollar corporations control the compute, the data, and the algorithms that shape global discourse. The declaration calls for decentralized access to AI benefits and strict anti-monopoly measures to ensure that the "intelligence dividend" is shared across society rather than hoarded by a new class of digital oligarchs.

Third, the document emphasizes the protection of the human experience. In an era of deepfakes and generative content, the value of authentic human interaction is being eroded. The declaration advocates for clear labeling of AI-generated content and the preservation of spaces—in education, healthcare, and the arts—where human connection remains paramount. Closely tied to this is the fourth pillar: the preservation of individual liberty. AI should not be used as a tool for mass surveillance or "social credit" scoring. The declaration asserts that an individual’s right to privacy and freedom from algorithmic manipulation is a fundamental human right that must be codified in the digital age.

The final pillar is perhaps the most contentious for Silicon Valley: legal accountability. Currently, many AI developers operate under a "use at your own risk" model, shielded by complex terms of service and the absence of liability law written specifically for AI. The Pro-Human Declaration insists that if an AI system causes harm, whether through a biased hiring algorithm, a medical misdiagnosis, or a physical accident, the company that developed and deployed that system must be held legally responsible. This "strict liability" approach is intended to slow down the "move fast and break things" culture, forcing companies to prioritize safety over speed.

Beyond these pillars, the declaration includes several "muscular" provisions that target the most extreme risks of AI development. One of the most significant is an outright moratorium on the development of superintelligence until a global scientific consensus is reached on how to do so safely. This is paired with a demand for mandatory "off-switches" on all high-capacity AI models. Furthermore, the document calls for a ban on architectures capable of self-replication or autonomous self-improvement. The fear among safety researchers is the "recursive improvement" loop, where an AI system begins to rewrite its own code, leading to an intelligence explosion that would be impossible for humans to monitor, let alone control.

Tegmark often draws a parallel between the AI industry and the pharmaceutical industry. In the United States, a drug company cannot simply release a new chemical compound into the market and hope for the best. The Food and Drug Administration (FDA) requires years of rigorous clinical trials to prove both safety and efficacy. Yet, in the realm of AI, companies are deploying "black box" models with billions of parameters directly to the public without any mandatory, independent safety testing. The Pro-Human Declaration essentially calls for an "FDA for AI," a regulatory body with the power to audit models before they are released.

Recognizing that broad federal regulation is often slow to materialize, the coalition behind the declaration has identified a strategic "wedge issue" to force legislative action: child safety. The declaration calls for immediate, mandatory pre-deployment testing for any AI product aimed at minors. This includes chatbots and "digital companion" apps that have already been linked to instances of emotional manipulation, exacerbation of eating disorders, and even suicidal ideation among young users. By framing AI safety as a matter of protecting children, the coalition hopes to bypass the traditional partisan gridlock. As Tegmark points out, the legal system already punishes adults who manipulate or harm children; there is no logical reason why a machine or the company that owns it should be exempt from the same standards.

The bipartisan nature of the declaration is its most striking feature. The list of signatories includes figures who rarely agree on anything else: Steve Bannon, a firebrand of the populist right; Susan Rice, a veteran of the Obama and Biden administrations; and Admiral Mike Mullen, former Chairman of the Joint Chiefs of Staff. Their alignment signals that the risks posed by unchecked AI transcend traditional political boundaries. It is not a "left" or "right" issue; it is a "human" issue. Whether one is concerned about the erosion of traditional values, the loss of labor rights, or the stability of national security, the conclusion is the same: the current trajectory of unregulated AI development is unsustainable.

The fallout from the Anthropic and OpenAI deals with the Pentagon serves as a cautionary tale of what happens when the government lacks a roadmap. By designating a domestic company like Anthropic as a "supply chain risk" because it insisted on safety protocols, the government is effectively penalizing responsible behavior. Meanwhile, OpenAI's willingness to accept terms that critics say leave those same concerns unaddressed, in exchange for military contracts, suggests a "race to the bottom" where safety is sacrificed for market share and political favor.

As we look toward the future, the Pro-Human Declaration provides a necessary framework for a world where AI is ubiquitous. It acknowledges that AI has the potential to solve some of our greatest challenges, from curing diseases to mitigating climate change, but only if it is developed within firm, human-centric guardrails. The "roadmap" is now on the table. The question that remains is whether the leaders in Washington and the executives in Silicon Valley have the foresight to follow it, or whether they will continue their headlong rush into an uncertain future, leaving the rest of humanity to deal with the consequences. The choice is no longer about technology; it is about sovereignty: the right of humans to remain the masters of their own destiny in an increasingly automated world.