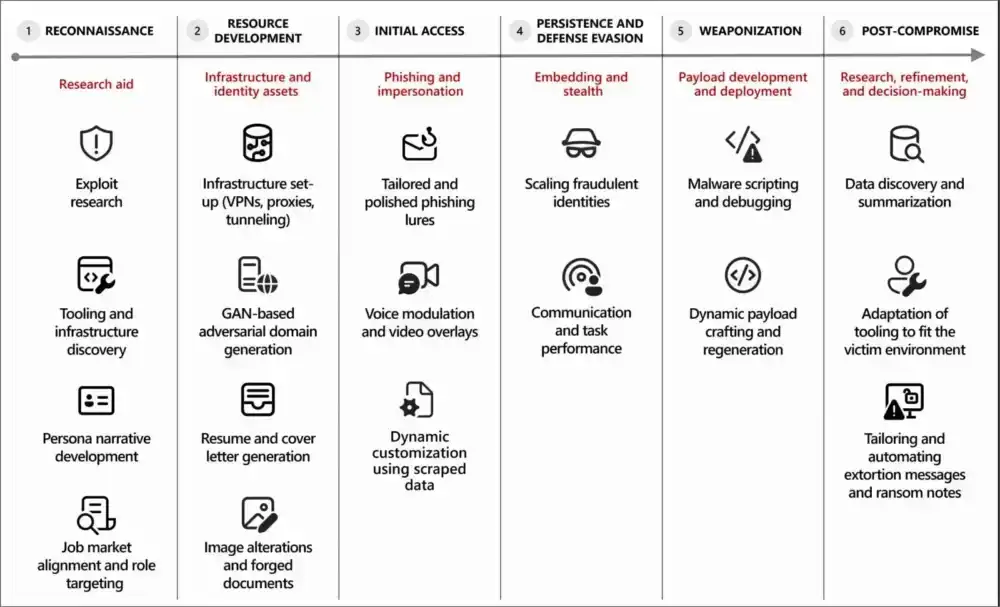

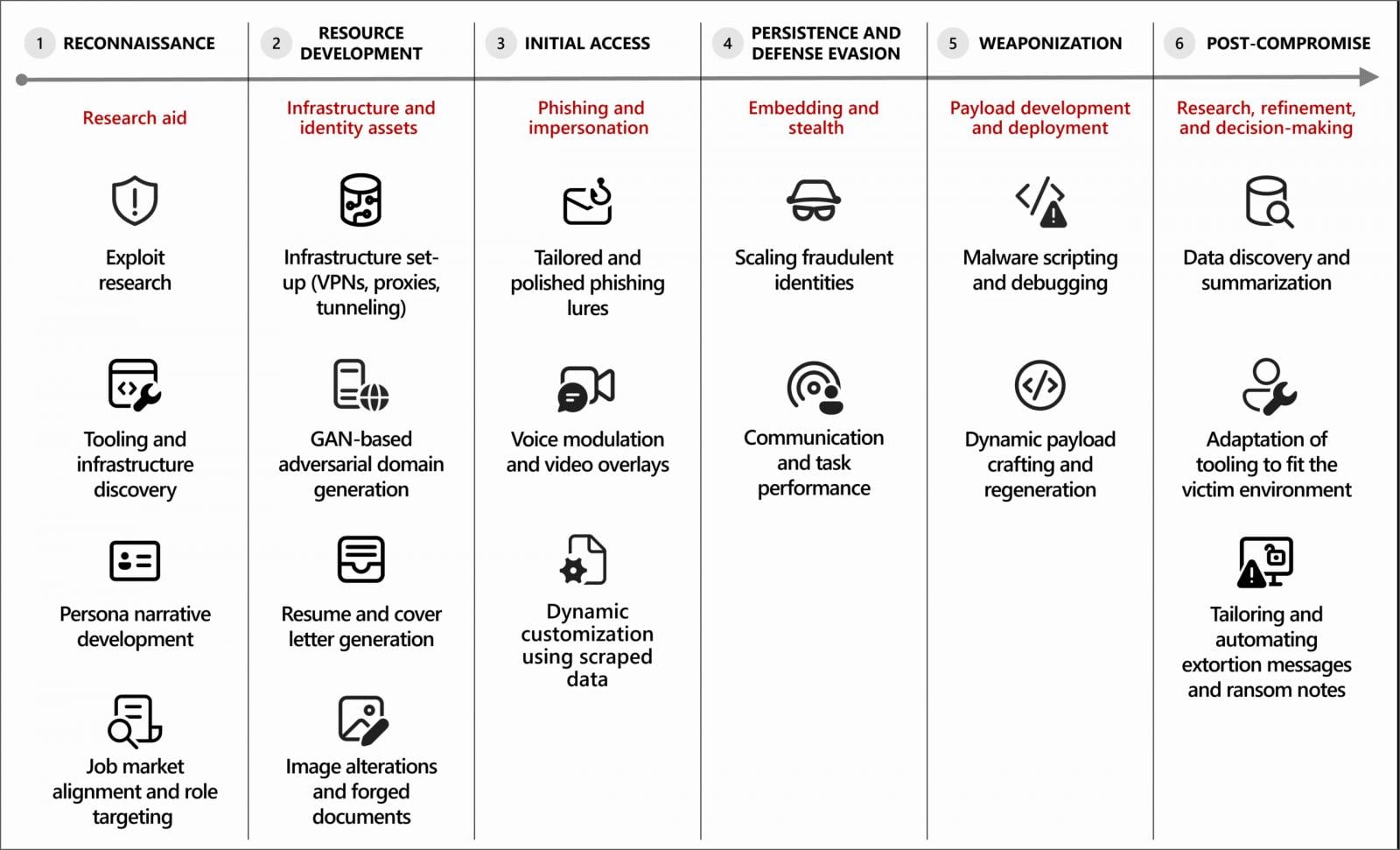

The integration of artificial intelligence, particularly large language models (LLMs) and generative AI tools, is reshaping cyber offense. An analysis from Microsoft Threat Intelligence finds that malicious actors are embedding AI capabilities across every phase of the cyberattack lifecycle. This adoption is more than a technological novelty. AI acts as a force multiplier that speeds up operations, widens their reach, and, crucially, lowers the technical skill needed to launch sophisticated attacks.

The spread of accessible, powerful AI services has democratized cybercrime. Complex scripting, nuanced social engineering, and deep knowledge of obscure programming languages were once prerequisites for operating at the level of advanced persistent threat (APT) actors or organized cybercriminal groups. Those barriers are now eroding quickly. Threat actors hand mundane but time-consuming tasks to these tools, which frees human operators to focus on strategic objectives such as targeting, lateral movement, and exfiltration planning.

The observed malicious use centers on generating text, code, and synthetic media. According to the findings, threat actors use generative AI extensively to craft convincing phishing lures, translate malicious content for global distribution, summarize large volumes of exfiltrated sensitive data, and generate or refine malicious code. AI assistants also help scaffold infrastructure by producing configuration scripts and boilerplate code for command-and-control (C2) frameworks, which streamlines deployment.

Microsoft’s warning draws an important distinction: AI is augmenting the tactical execution of attacks, but humans remain in charge. The report notes that the technology "reduces technical friction and accelerates execution, while human operators retain control over objectives, targeting, and deployment decisions." In other words, AI is currently being used for operational efficiency rather than for independent, goal-directed adversarial action, although this distinction is expected to blur over time.

AI-Enabled Statecraft and Impersonation

AI's role is not confined to building digital weapons. It is closely tied to the initial stages of infiltration, particularly in espionage and financially motivated campaigns that target high-value intellectual property or access.

Microsoft has specifically highlighted the activities of state-sponsored groups, including North Korean entities tracked as Jasper Sleet (Storm-0287) and Coral Sleet (Storm-1877). These groups are exploiting AI to enhance long-term infiltration strategies, most notably through remote IT worker schemes designed to secure legitimate employment within targeted Western organizations.

These schemes depend on fabricating digital identities at industrial scale. Jasper Sleet actors use generative AI platforms to craft these personas carefully. They use prompts to generate culturally specific lists of names and standardized but realistic email address formats that match those identities. Prompts such as "Create a list of 100 Greek names" or "Generate email address formats using the name Jane Doe" are early steps toward believable digital footprints designed to survive initial scrutiny during background checks or credential verification.

The research also shows AI being used to mine publicly available professional data. Threat actors feed job postings for highly technical roles, such as software development or specialized IT infrastructure positions, into LLMs. The model extracts and summarizes the required skills and produces a profile. The human operator then uses that profile to tailor the persona's fabricated resume and professional narrative to the target role, which raises the odds of being hired and gaining persistent access.

Malware Evolution and Infrastructure Automation

Beyond social engineering, AI is having a significant impact on the development pipeline for offensive tools. Threat actors increasingly use AI coding assistants to speed up the malware development lifecycle. They use these assistants to generate new malicious code snippets, find and fix bugs in existing exploits, and port core malware components from one programming language to another, a task that has traditionally demanded considerable expertise and time.

There are signs that this is pushing malware toward more dynamic, adaptive designs. Researchers have observed experimental variants with apparent AI-enabled functionality. These variants can generate new execution scripts on the fly or modify their behavior at runtime in response to the environment they land in. Such adaptability poses a serious challenge for signature-based detection, which relies on matching known, static artifacts.

Coral Sleet illustrates the efficiency AI brings to infrastructure management. The group has been observed using these tools to quickly generate convincing, legitimate-looking corporate websites and provision the underlying cloud infrastructure. It then tests and troubleshoots the entire deployment before launching an active campaign. Automating setup at this speed sharply shortens the lead time between planning and execution.

Bypassing Defenses: Jailbreaking and Autonomous Agents

LLM developers build safeguards to stop their models from producing harmful code or instructions, and threat actors are proving inventive at getting around them. The report highlights growing use of "jailbreaking," which relies on carefully constructed prompts and conversational manipulation. These techniques trick an LLM into ignoring its safety constraints and producing malicious content or working exploit code.

Looking forward, the research points toward the nascent exploration of agentic AI within threat actor circles. While current malicious applications largely rely on generative AI for task assistance (AI as a co-pilot), agentic AI implies systems capable of performing multi-step tasks autonomously, adapting their strategies based on feedback loops from the environment. Microsoft cautions that while this technology is still primarily experimental in offensive contexts, the shift from mere acceleration to autonomous execution represents the next major inflection point in cyber threat evolution.

Industry Implications and Defensive Imperatives

The findings have serious implications for enterprise security. Some schemes rely on legitimate access, such as the fraudulent IT worker placements, and these require organizations to rethink how they view insider threats. Security teams should treat sophisticated, AI-enhanced social engineering of this kind as a form of insider risk, even when the initial access was obtained through deception rather than coercion.

Defenders need to shift their focus toward detecting anomalies in access patterns. That means closely monitoring credential usage for deviations from established baselines, no matter how legitimate the account appears. LLMs now make phishing hyper-personalized and contextually accurate, so hardening identity systems against it is non-negotiable. Multi-factor authentication (MFA) must be enforced universally, and behavioral analytics on authentication events must be carefully tuned.
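To make the idea of baseline deviation concrete, here is a minimal Python sketch of per-user checks on sign-in events. It assumes a hypothetical `AuthEvent` record containing a user, a UTC hour, a source country, and an MFA outcome. The field names, the two-hour tolerance, and the helper functions are illustrative inventions. They are not part of Microsoft's report or any product's API. Real behavioral analytics weigh far richer signals statistically rather than applying a few fixed rules.

```python
from collections import defaultdict
from collections.abc import Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthEvent:
    """Hypothetical sign-in record; real identity logs carry many more fields."""
    user: str
    hour: int          # hour of day in UTC, 0-23
    country: str       # source country as a two-letter code
    mfa_passed: bool   # whether the sign-in satisfied MFA


# Per-user baseline: the hours and countries seen in historical sign-ins.
Baseline = tuple[dict[str, set[int]], dict[str, set[str]]]


def hour_distance(a: int, b: int) -> int:
    """Distance between two hours on a 24-hour clock, wrapping at midnight."""
    d = abs(a - b) % 24
    return min(d, 24 - d)


def build_baseline(history: Iterable[AuthEvent]) -> Baseline:
    """Record which hours and countries each user normally signs in from."""
    hours: dict[str, set[int]] = defaultdict(set)
    countries: dict[str, set[str]] = defaultdict(set)
    for event in history:
        hours[event.user].add(event.hour)
        countries[event.user].add(event.country)
    # Convert to plain dicts so lookups for unknown users do not create entries.
    return dict(hours), dict(countries)


def score_event(event: AuthEvent, baseline: Baseline, hour_tolerance: int = 2) -> list[str]:
    """Return the reasons an event deviates from its user's baseline (empty if none)."""
    hours, countries = baseline
    if event.user not in hours:
        # No history: surface for review rather than silently trusting the account.
        return ["no baseline for user"]
    reasons = []
    if event.country not in countries[event.user]:
        reasons.append(f"new country {event.country}")
    nearest = min(hour_distance(event.hour, h) for h in hours[event.user])
    if nearest > hour_tolerance:
        reasons.append(f"unusual hour {event.hour}")
    if not event.mfa_passed:
        reasons.append("MFA not satisfied")
    return reasons


history = [AuthEvent("alice", h, "US", True) for h in (8, 9, 10, 17)]
baseline = build_baseline(history)
print(score_event(AuthEvent("alice", 9, "US", True), baseline))  # []
print(score_event(AuthEvent("alice", 3, "BR", True), baseline))  # ['new country BR', 'unusual hour 3']
```

The hour comparison wraps at midnight, so a 23:00 sign-in counts as close to a 01:00 baseline. The returned reasons are meant to feed an analyst queue or a risk score. They are not meant to block access on their own.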

Furthermore, as the tools themselves become targets, organizations utilizing AI in their own operations must secure those platforms with the same rigor applied to core network infrastructure. An AI system compromised via prompt injection or data poisoning becomes a potent tool for the adversary.

A Broadening Threat Ecosystem

This trend is systemic, not an artifact of one vendor's findings, and other major technology companies have corroborated Microsoft’s observations. Google recently detailed how threat actors are abusing its Gemini AI models across the entire attack lifecycle, which mirrors Microsoft's findings on the reconnaissance and exploitation stages. Reports on other campaigns show the tangible, high-impact results adversaries achieve when they fold these technologies into their established methods. In one such campaign, attackers used multiple generative AI services to breach more than 600 FortiGate firewalls within weeks.

This AI arms race will shape the future of cybersecurity. Defenders must rapidly mature their own AI-driven capabilities to keep pace with the speed and scale that generative tools give adversaries. These capabilities include machine learning for anomaly detection, proactive threat hunting, and automated response. In the current phase, AI is a force multiplier for efficiency. The next phase threatens to bring genuine AI-driven autonomy to the threat landscape.