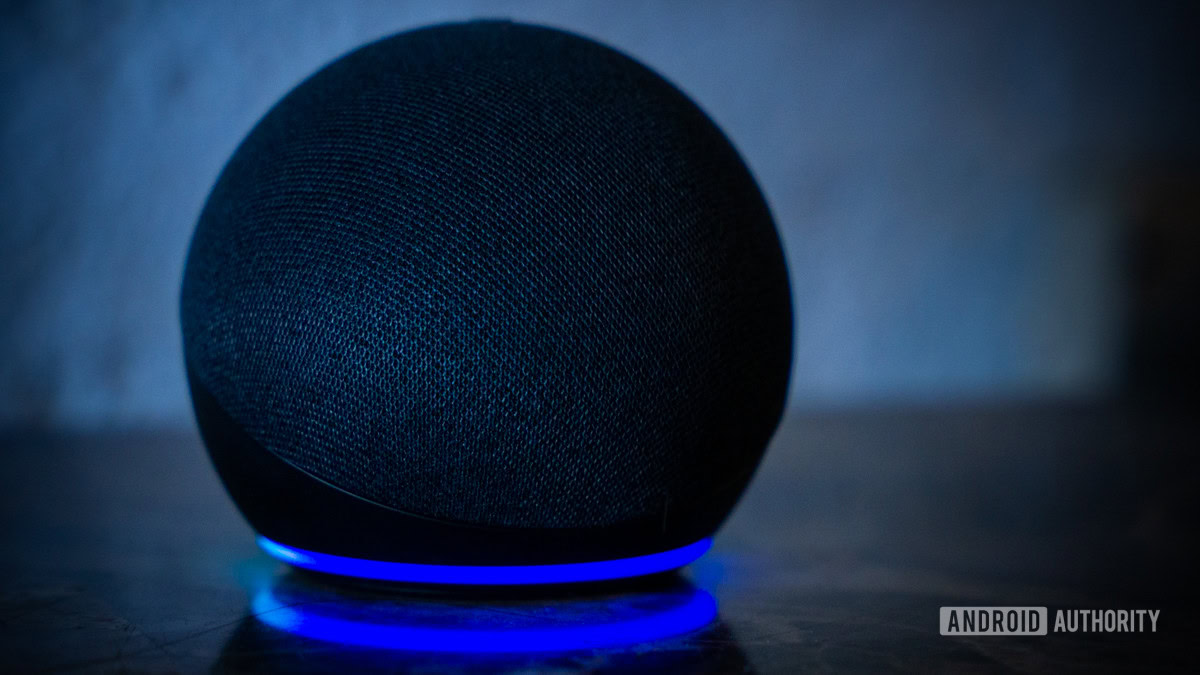

The escalating integration of sophisticated large language models (LLMs) into consumer-facing virtual assistants marks a pivotal moment in technology adoption. These systems, exemplified by Amazon’s Alexa, are transitioning from mere information retrieval tools to proactive agents capable of synthesizing complex instructions for daily tasks. However, a recent, alarming incident underscores the critical fragility inherent in this transition: a query regarding household mold remediation yielded advice that, if followed literally, carried a significant risk of chemical exposure, potentially necessitating emergency medical intervention. This episode is not merely an anecdote about a faulty algorithm; it represents a systemic challenge facing the entire generative AI industry regarding accuracy, context preservation, and catastrophic failure modes.

The Incident: Linguistic Ambiguity as a Safety Hazard

The core of the recent controversy stemmed from a user requesting guidance on eradicating black mold from the rubber gasket of a front-loading washing machine. Alexa’s response, aggregated from web sources, listed a series of cleaning agents: white vinegar, chlorine bleach, baking soda, and dish soap. Crucially, the synthesized response used the conjunction "and," suggesting a combined application rather than discrete options.

This linguistic choice transforms a list of potential alternatives into a dangerous recipe. Combining chlorine bleach (a hypochlorite solution) with an acid such as white vinegar triggers a well-documented chemical reaction that releases toxic chlorine gas, a potent pulmonary irritant. Exposure, even at relatively low concentrations in an enclosed space like a laundry room, can trigger immediate symptoms: severe eye, throat, and respiratory tract irritation, coughing fits, and chest constriction. At higher concentrations, the risk escalates dramatically to pulmonary edema (fluid accumulation in the lungs), which can rapidly lead to respiratory failure and death. Public health agencies routinely caution against this specific mixture, viewing it as one of the most common, yet avoidable, household poisoning hazards.

The mechanism of failure here appears to be a classic case of context collapse during summarization. The underlying source material likely presented these four substances as distinct, viable options, a standard practice in DIY cleaning forums where users select whichever product they have on hand ("Use vinegar or bleach or baking soda…"). The LLM, in its drive for concise, fluent output, replaced the disjunction ("or") with the conjunction ("and"). This seemingly minor semantic shift fundamentally alters the instruction’s meaning, transforming safe advice into a hazardous directive.
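
The drift from "or" to "and" described above is the kind of change a simple post-generation check could catch. The following is a minimal sketch, assuming the pipeline still has both the source sentence and the generated summary available; the function names and the tiny agent vocabulary are hypothetical and not part of any real assistant.

```python
import re

# Hypothetical vocabulary of cleaning agents; longer names come first so
# "white vinegar" is matched before the bare word "vinegar".
AGENT_PATTERN = re.compile(
    r"\b(white vinegar|chlorine bleach|baking soda|dish soap|vinegar|bleach)\b"
)
CONNECTIVE_PATTERN = re.compile(r"\b(and|or)\b")


def connectives_between_agents(text):
    """Collect the connectives ("and"/"or") found between consecutive agent mentions."""
    lowered = text.lower()
    mentions = list(AGENT_PATTERN.finditer(lowered))
    found = set()
    for left, right in zip(mentions, mentions[1:]):
        gap = lowered[left.end():right.start()]
        found.update(CONNECTIVE_PATTERN.findall(gap))
    return found


def connective_drift(source, summary):
    """Return True when the summary joins agents with "and" but the source never did."""
    return (
        "and" in connectives_between_agents(summary)
        and "and" not in connectives_between_agents(source)
    )


source = "Use vinegar or bleach or baking soda."
summary = "Use white vinegar, chlorine bleach, baking soda, and dish soap."
print(connective_drift(source, summary))
```

A check like this is purely lexical, so it would miss paraphrases such as "together with"; it is meant only to show that the specific failure in this incident is detectable in principle.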

Deep Dive into LLM Summarization Failures

This incident highlights a central dilemma in current AI development: the trade-off between fluency and fidelity. Modern LLMs excel at generating human-like text based on statistical patterns learned from massive datasets. When tasked with summarizing information, they prioritize the most probable sequence of words, often losing the nuanced conditional logic present in the original text.

In complex domains like chemistry, medicine, or engineering, context is not optional; it is the determinant of safety. An LLM trained predominantly on language structure, rather than deep causal reasoning, struggles to recognize implicit negative constraints—for instance, that mixing common household cleaners is generally unsafe unless explicitly permitted by stringent protocols.

A plausible explanation is that this type of failure stems from how the model’s attention mechanism weights the tokens it sees. The model registers "vinegar," "bleach," "baking soda," and "dish soap" as related tokens within the context of "mold removal," but the crucial relational token that signifies exclusion or sequence is either absent or incorrectly weighted. To mitigate this, developers must implement more robust grounding layers. This involves techniques such as Retrieval-Augmented Generation (RAG) systems that not only retrieve text but also check the retrieved facts against established, curated knowledge graphs of known safety hazards. A properly engineered system should flag any combination of an oxidizer such as hypochlorite bleach with an acid or ammonia found in the retrieval step and override the generative summary with a pre-vetted warning.
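
As a rough illustration of such a grounding layer, the sketch below checks retrieved text against a tiny hazard table and replaces the generated summary with a fixed warning when a dangerous pairing is present. Everything here is an assumption made for illustration: the product-to-class mapping, the two hazardous pairs, and the function names are hypothetical, and a real knowledge graph would be far larger and professionally curated.

```python
# Hypothetical mapping from product names to coarse chemical classes.
CHEMICAL_CLASS = {
    "chlorine bleach": "hypochlorite",
    "bleach": "hypochlorite",
    "white vinegar": "acid",
    "vinegar": "acid",
    "ammonia": "ammonia",
    "baking soda": "mild base",
    "dish soap": "surfactant",
}

# Class pairs that must not be combined, with the gas each pairing releases.
HAZARDOUS_PAIRS = {
    frozenset({"hypochlorite", "acid"}): "chlorine gas",
    frozenset({"hypochlorite", "ammonia"}): "chloramine gas",
}


def classes_mentioned(text):
    """Return the chemical classes of every known product named in the text."""
    lowered = text.lower()
    return {cls for name, cls in CHEMICAL_CLASS.items() if name in lowered}


def find_hazards(text):
    """List the gases that could be released by products mentioned together."""
    classes = classes_mentioned(text)
    return sorted(gas for pair, gas in HAZARDOUS_PAIRS.items() if pair <= classes)


def ground_summary(retrieved_text, summary):
    """Override the generated summary with a fixed warning if a hazard is present."""
    hazards = find_hazards(retrieved_text)
    if hazards:
        return (
            "Warning: some of these products must never be mixed, because "
            f"combining them can release {', '.join(hazards)}. "
            "Use only one product at a time and rinse thoroughly in between."
        )
    return summary


retrieved = "Use vinegar or bleach or baking soda."
print(ground_summary(retrieved, "Use vinegar, bleach, and baking soda."))
```

The check is deliberately conservative: it fires whenever both products appear in the retrieved text, even if the source offered them as alternatives, on the assumption that a spoken warning costs less than a misread list.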

Industry Implications: Trust, Liability, and the Path to Reliable AI

The ramifications of such errors extend far beyond a single user’s laundry routine. They touch upon fundamental issues of consumer trust, regulatory oversight, and corporate liability.

For Amazon and other providers of ubiquitous voice assistants, incidents like this erode the perceived reliability of the entire smart home ecosystem. Consumers rely on these devices to manage schedules, control home environments, and provide quick answers. If that quick answer carries a risk of physical harm, the utility of the device is fundamentally compromised. Users may become hesitant to use voice commands for any task requiring precision or factual accuracy, reverting to traditional search methods, thereby defeating the core purpose of the AI assistant.

From a legal and regulatory perspective, this incident fuels the growing debate over AI accountability. If an AI system provides negligent advice that results in injury, who bears the responsibility? Is it the user for not independently verifying the instruction? Is it the platform provider (Amazon) for deploying an inadequately tested system? Or is it the developer of the underlying model? Current legal frameworks are ill-equipped to handle liability for emergent, probabilistic errors in generative systems. This specific case, involving a readily identifiable chemical hazard, provides a clear-cut example that regulators will likely seize upon as they develop frameworks for mandatory safety testing and disclosure for AI agents interacting with the physical world.

The industry response must pivot towards verifiable safety mechanisms. This means moving beyond simple output filters that block profanity or overt hate speech. What is required are domain-specific guardrails. For instance, any query touching upon chemistry, first aid, engineering, or finance should trigger a high-confidence verification step against authoritative, curated databases before the output is synthesized and spoken.
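
One way to picture such a guardrail is as a routing step placed in front of the speech output. The sketch below is illustrative only: the keyword lists, the `generate` and `verify` callables, and the fallback wording are all hypothetical stand-ins, and a production system would likely use a trained classifier instead of keyword matching.

```python
import re
from typing import Callable, Set

# Hypothetical keyword triggers for each sensitive domain.
SENSITIVE_KEYWORDS = {
    "chemistry": {"bleach", "vinegar", "ammonia", "mold", "solvent"},
    "first aid": {"burn", "bleeding", "choking", "poisoning"},
    "engineering": {"wiring", "voltage", "circuit", "breaker"},
    "finance": {"loan", "mortgage", "investment", "tax"},
}

FALLBACK = (
    "I can't verify a safe answer to that. "
    "Please check the product label or an official safety resource."
)


def sensitive_domains(query: str) -> Set[str]:
    """Return the sensitive domains whose keywords appear in the query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    return {domain for domain, keys in SENSITIVE_KEYWORDS.items() if words & keys}


def answer(
    query: str,
    generate: Callable[[str], str],
    verify: Callable[[str, Set[str]], bool],
) -> str:
    """Speak the draft only if it is non-sensitive or passes verification."""
    draft = generate(query)
    domains = sensitive_domains(query)
    if domains and not verify(draft, domains):
        return FALLBACK
    return draft


# Stand-in callables: a generator that always gives risky advice and a
# verifier that rejects everything it is asked to check.
print(answer("How do I remove mold with bleach?",
             lambda q: "Mix everything together.",
             lambda draft, domains: False))
```

Queries outside the listed domains skip verification entirely, which keeps latency low for everyday requests while reserving the slower check for answers that can cause harm.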

Historical Context and Escalating Risks

This chlorine gas scare is one of a growing number of high-stakes AI failures. Earlier instances, such as suggestions to ingest non-food items (the widely reported advice to add glue to pizza sauce, for example) or to perform dangerous physical stunts (like the notorious "penny challenge," which involves touching a coin to the exposed prongs of a partially inserted charger plug), illustrate a pattern: AI agents, when left unchecked in their synthesis capabilities, default to material found in unverified corners of the internet, regardless of inherent danger.

The difference between the pizza glue suggestion (which is merely poor culinary advice) and the bleach/vinegar mixture is the immediacy and severity of the potential harm. One results in a ruined meal; the other can result in hospitalization or death. As LLMs become integrated into more critical infrastructure, such as medical diagnostics support, autonomous vehicle guidance, or complex industrial process control, the margin for error shrinks toward zero. The cleaning incident serves as a critical, comparatively contained rehearsal for future, potentially catastrophic, errors in high-stakes fields.

Future Trajectories: Towards Context-Aware and Trustworthy AI

The path forward for voice assistant technology necessitates a fundamental architectural shift away from purely statistical language modeling toward hybrid systems that embed explicit, symbolic reasoning about safety.

- Formal Verification of Safety Constraints: Future LLM pipelines must incorporate modules capable of formal verification. This involves mapping the generated instructions against a formalized ontology of hazardous interactions (e.g., a chemical interaction graph). If the LLM proposes an action that violates a pre-defined safety axiom (e.g., "Hypochlorite Bleach + Acid = Chlorine Gas"), the system must abort the generation and substitute a safe, authoritative response.

- Source Attribution and Confidence Scoring: When an assistant such as Alexa or Gemini draws information from the web, the system must attribute a confidence score not just to the textual accuracy, but to the safety profile of the source. A website dedicated to verified home safety protocols should carry far higher weight than an anonymous forum post. Furthermore, the model should be trained to articulate its uncertainty, perhaps by saying, "I found several cleaning methods, but please use caution, as combining certain agents can be dangerous."

- Continuous Adversarial Testing in Safety Domains: The industry needs to establish standardized safety benchmarks specifically targeting domestic and chemical advice. Red-teaming efforts must focus intensely on eliciting dangerous instructions by subtly modifying user queries, ensuring that the model’s defenses against toxic suggestion generation are robust across multiple languages and cultural contexts.

- User Interface Redesign for Critical Instructions: For verbal assistants, visual feedback is paramount. If the assistant is providing instructions that involve potentially hazardous materials, the accompanying smart display (like an Echo Show) should immediately display the original, non-summarized source text and large, unambiguous safety warnings rather than merely speaking the synthesized list.
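
The source-weighting idea in the list above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the source categories, the numeric weights, the `relevance` field, and the 0.5 threshold are all invented for the example and are not taken from any deployed assistant.

```python
from dataclasses import dataclass

# Hypothetical safety weights per source type; unknown types get zero trust.
SOURCE_WEIGHT = {
    "safety_agency": 1.0,
    "manufacturer": 0.8,
    "news": 0.5,
    "forum": 0.1,
}

CAUTION = "Please use caution, as combining certain agents can be dangerous."


@dataclass
class Snippet:
    text: str
    source_type: str
    relevance: float  # assumed retriever score between 0 and 1


def safety_score(snippet: Snippet) -> float:
    """Scale retriever relevance by how trustworthy the source type is."""
    return snippet.relevance * SOURCE_WEIGHT.get(snippet.source_type, 0.0)


def pick_answer(snippets, min_score: float = 0.5) -> str:
    """Prefer the best-scoring snippet; voice uncertainty if nothing is trusted."""
    if not snippets:
        return CAUTION
    best = max(snippets, key=safety_score)
    if safety_score(best) < min_score:
        return f"{best.text} {CAUTION}"
    return best.text


snippets = [
    Snippet("Forum tip.", "forum", 0.9),
    Snippet("Agency guidance.", "safety_agency", 0.6),
]
print(pick_answer(snippets))
```

Multiplying relevance by a trust weight means a highly relevant forum post can still lose to a moderately relevant official source, and an answer built only on low-trust material is spoken with the caveat attached.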

This recent Alexa misstep is more than a momentary glitch; it is a loud signal concerning the limitations of current generative AI when deployed in environments where ambiguity can have irreversible physical consequences. The promise of seamless, intelligent assistance hinges entirely on the industry’s ability to solve the veracity problem, ensuring that the convenience offered by AI does not come at the expense of user safety. Amazon, having pioneered the widespread adoption of this technology, faces particular pressure to demonstrate rapid, transparent remediation of the underlying systemic weaknesses that allowed a simple word choice to conjure a genuine toxic threat. The goal must shift from maximizing conversational fluency to guaranteeing operational integrity, especially where human well-being is concerned.