The recent guilty plea of Yurii Nazarenko, a 27-year-old Ukrainian national, for operating "OnlyFake" exposes a fundamental challenge to digital identity verification. This sophisticated, subscription-based online service used generative artificial intelligence to produce and distribute over 10,000 hyper-realistic counterfeit identification documents to a global clientele. Nazarenko, who operated under several aliases including "John Wick," "Tor Ford," and "Uriel Septimberus," has admitted to facilitating an operation that directly undermines the security mechanisms designed to protect financial systems and national borders.

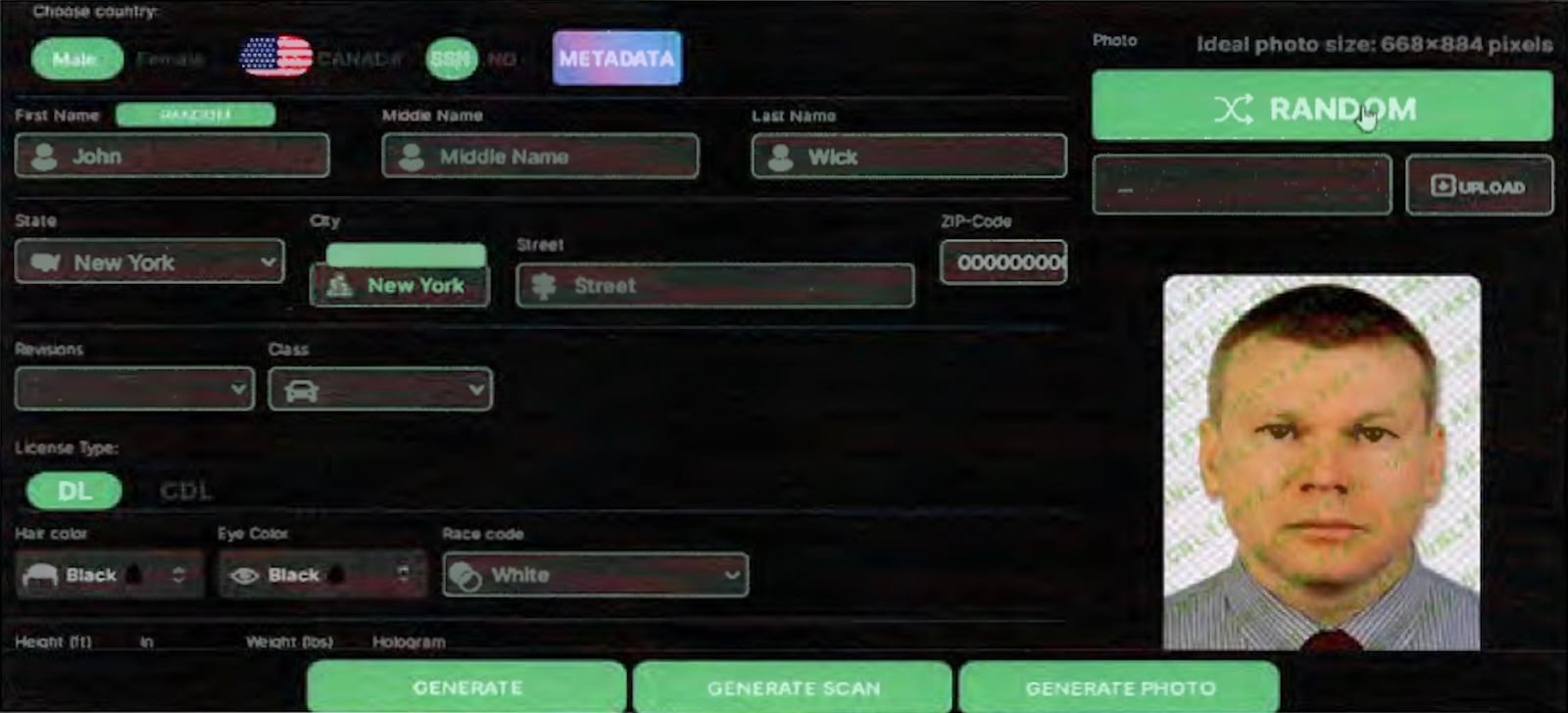

The scale and technological sophistication of the OnlyFake platform distinguish this case from traditional document forgery. Instead of relying on physical printing presses or manual template manipulation, Nazarenko’s service leveraged AI models (likely deep learning systems trained on large datasets of genuine identification formats) to create digital forgeries of U.S. driver’s licenses covering all fifty states, U.S. passports, passport cards, and equivalent identity documents for approximately 56 other jurisdictions. Customers could enter specific personal data or request randomized information, and could choose the output format: a clean digital scan or a more contextually convincing tabletop photograph. This level of customization gave criminals highly adaptable tools for identity concealment.

U.S. Attorney Jay Clayton underscored the gravity of this technological transgression during his recent statement, emphasizing that government-issued identification serves as a critical bulwark against a spectrum of transnational illicit activities, including terrorism financing, human trafficking, money laundering, and various forms of high-level fraud. "The manufacture of fraudulent IDs and other documents by operations like OnlyFake places every citizen and institution at risk, and decisive action against these threats is imperative," Clayton noted, framing the prosecution as a necessary defense of public trust and systemic integrity.

Background: The Rise of Synthetic Identity Fraud

The genesis of the OnlyFake operation coincides with a significant acceleration in the capabilities of publicly accessible generative AI tools. While large language models (LLMs) have captured public attention for text generation, a related family of generative techniques, specifically Generative Adversarial Networks (GANs) and diffusion models, has proven exceptionally adept at image synthesis. For identity fraud, this moves the threat beyond digitally altered JPEGs, whose template errors were often easy to detect.

Historically, creating high-quality synthetic IDs required specialized skills in graphic design, knowledge of security features (like watermarks or micro-printing, though less relevant for purely digital copies), and access to specialized software. OnlyFake democratized this capability. By packaging advanced image generation technology into an accessible, user-friendly web portal, Nazarenko effectively lowered the barrier to entry for identity-based fraud, transforming a niche criminal activity into a scalable, e-commerce venture.

The indictment details how undercover operations conducted by the FBI between May and June 2024 successfully procured various fake documents, including New York State IDs, U.S. passports, and Social Security cards, validating the efficacy of the platform’s output.

Industry Implications: Targeting KYC and AML Frameworks

The primary vector for the illicit use of these AI-generated documents, as highlighted by New York federal prosecutors, was the systematic circumvention of Know Your Customer (KYC) and Anti-Money Laundering (AML) protocols. KYC requirements, formalized in the U.S. largely through the USA PATRIOT Act, oblige financial institutions (including traditional banks and, increasingly, cryptocurrency exchanges) to verify the true identity of their clients before opening accounts or enabling large transactions.

Cryptocurrency exchanges, due to their pseudonymous nature and rapid transaction speeds, have become a prime target for illicit actors seeking to "on-ramp" or "off-ramp" laundered funds. When a service like OnlyFake provides near-perfect digital IDs, bad actors can create numerous synthetic identities, open multiple accounts across different exchanges, and split and shuffle funds among them to obscure their source. This combines two classic money laundering techniques: "smurfing" (structuring funds into many small transactions) and layering (moving funds through successive transfers). The availability of these synthetic documents directly attacks the integrity of the digital identity verification layer upon which modern financial compliance rests.
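The multi-account pattern described above can be made concrete with a small detection sketch. It is a toy heuristic, not a description of any exchange's actual monitoring: the threshold, the margin, the `device_id` linking attribute, and the function name `flag_structuring` are all assumptions chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

# Illustrative values only; real compliance programs tune these per jurisdiction.
REPORTING_THRESHOLD = 10_000.0
NEAR_MARGIN = 0.10  # deposits within 10% below the threshold count as "near"


@dataclass(frozen=True)
class Deposit:
    account_id: str
    device_id: str  # hypothetical attribute that links otherwise separate accounts
    amount: float


def flag_structuring(deposits, min_accounts=3):
    """Return device_ids tied to several accounts that each deposit just under the threshold."""
    floor = REPORTING_THRESHOLD * (1 - NEAR_MARGIN)

    # Group deposits by the shared linking attribute.
    by_device = defaultdict(list)
    for deposit in deposits:
        by_device[deposit.device_id].append(deposit)

    flagged = []
    for device_id, group in by_device.items():
        # Distinct accounts with at least one near-threshold deposit.
        near_accounts = {
            d.account_id for d in group if floor <= d.amount < REPORTING_THRESHOLD
        }
        if len(near_accounts) >= min_accounts:
            flagged.append(device_id)
    return sorted(flagged)
```

The sketch shows why synthetic IDs matter here: each fake identity yields a separate account, so any single account looks unremarkable, and the pattern only appears once accounts are linked by some shared signal.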

Furthermore, OnlyFake’s policy of accepting only cryptocurrency payments was a deliberate obfuscation tactic. Nazarenko routed transactions through multiple digital wallets to complicate tracing efforts. This combination of AI-generated synthetic documents and multi-wallet cryptocurrency routing represents a highly evolved threat model that challenges traditional forensic accounting methods.

The law enforcement response, evidenced by Nazarenko’s extradition from Romania in September 2025, signals a commitment to cross-border enforcement against AI-enabled cybercrime infrastructure. Nazarenko’s agreement to forfeit $1.2 million underscores the significant financial motive behind the scheme, though the true cost of the compromised trust may be incalculable.

Expert Analysis: The Arms Race in Digital Verification

From a cybersecurity and identity verification (IDV) industry perspective, the OnlyFake case serves as a stark validation of the ongoing technological arms race. IDV providers, which utilize automated checks against government databases or deploy complex liveness detection for biometric verification, must now contend with synthetic identities that are not merely fabricated but are computationally generated to mimic reality.

The core challenge lies in distinguishing between a photograph of a real document and a statistically perfect AI rendering. Traditional checks often look for artifacts associated with Photoshop manipulation (e.g., pixel inconsistencies, blurred edges around text fields). Generative models, however, are designed to produce outputs that exist within the statistical manifold of real-world data, meaning they often pass basic artifact detection tests.

"We are moving beyond detecting forgery into the realm of detecting synthetic creation," notes Dr. Evelyn Reed, a computational forensics specialist. "The fact that these documents were designed to look like scans or tabletop photos suggests an awareness of how KYC platforms often process visual evidence. The next generation of attackers will pivot to exploiting biometric checks, perhaps using deepfake video profiles matched to these synthetic IDs."

The case highlights the insufficiency of relying solely on visual inspection or simple database lookups. Robust IDV solutions are now compelled to integrate machine learning detectors specifically trained to recognize the subtle statistical patterns that GAN- and diffusion-generated imagery can carry: patterns that are invisible to the human eye but can betray a synthetic origin to a specialized algorithm.

Future Impact and Emerging Trends

The guilty plea marks a significant victory, but the underlying technology remains accessible. The future impact of this incident will likely drive three major trends in cybersecurity and regulatory compliance:

1. Hardening of Biometric and Liveness Checks: Since visual document forgery is increasingly trivialized by AI, the industry will pivot aggressively toward robust liveness detection for remote onboarding. This involves sophisticated analysis of micro-movements, eye tracking, and 3D mapping during video verification to ensure the person presenting the ID is physically present and not a sophisticated digital overlay.

2. Decentralized Identity Solutions (DID): The vulnerability of centralized ID repositories and verification checkpoints incentivizes the exploration of decentralized identity frameworks. If identity credentials can be cryptographically verified without relying on the visual presentation of a single document, the utility of synthetic visual IDs diminishes significantly. DID seeks to give individuals sovereign control over their verified attributes, making mass creation of synthetic profiles less effective against networked verification protocols.

3. International Cooperation on AI Crime: The cross-border nature of the operation—Ukrainian operator, international clientele, extradition from Romania, prosecution in the U.S.—sets a precedent for future collaborative efforts. As AI tools become globally distributed, law enforcement agencies will increasingly need standardized protocols for seizing digital assets, analyzing cross-jurisdictional cryptocurrency flows, and sharing threat intelligence related to generative fraud techniques.
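The idea in the second trend above, verifying a credential cryptographically rather than by its appearance, can be sketched in a few lines. This is a simplified stand-in: it uses an HMAC with a shared secret from the Python standard library, whereas real decentralized identity systems rely on public-key signatures so that verifiers never hold the issuer's secret. The function names and the claim fields are assumptions made for illustration.

```python
import hashlib
import hmac
import json


def sign_credential(claims: dict, issuer_key: bytes) -> str:
    """Issuer side: compute an integrity tag over a canonical encoding of the claims."""
    payload = json.dumps(claims, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(issuer_key, payload, hashlib.sha256).hexdigest()


def verify_credential(claims: dict, tag: str, issuer_key: bytes) -> bool:
    """Verifier side: recompute the tag and compare in constant time."""
    expected = sign_credential(claims, issuer_key)
    return hmac.compare_digest(expected, tag)


# Hypothetical usage: a forged or edited credential fails regardless of how it looks.
issuer_key = b"demo-key-for-illustration-only"
claims = {"name": "Alex Example", "over_18": True}
tag = sign_credential(claims, issuer_key)

tampered = dict(claims, name="Someone Else")
is_genuine = verify_credential(claims, tag, issuer_key)
is_forged_accepted = verify_credential(tampered, tag, issuer_key)
```

The point of the design is that validity depends on a key the forger does not have, not on pixels, so a visually flawless AI-generated document carries no weight in such a check.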

Yurii Nazarenko faces sentencing on June 26, 2026, with a maximum sentence of 15 years in prison. While the operator of OnlyFake is being held accountable, the technology he popularized represents a persistent, evolving threat. The era of relying on digital representations of physical identity documents as primary authentication factors is drawing to a close, necessitating rapid innovation in identity assurance technologies to maintain the security of global finance and commerce. The industry must now account for the fact that the next wave of fraud won’t just use forged documents; it will use documents that were never printed.