When chief information security officers (CISOs) and their teams conduct comprehensive risk assessments, the initial focus tends to gravitate toward the visible scaffolding of the modern enterprise: server infrastructure, identity and access management (IAM) frameworks, perimeter defenses like VPNs, the sprawling architecture of cloud deployments, and the hardened configuration of core operating systems. These assets are tangible, documented, and feature prominently in network diagrams and compliance reports. They represent the controlled boundaries of the organization.

However, a significant portion of the true operational risk profile, and the route by which many initial compromises occur, resides not in these core systems but within the peripheral yet indispensable software ecosystem that enables daily productivity. This layer, often overlooked in high-level strategic discussions, comprises the everyday utilities employees rely on to process information and communicate: PDF rendering engines, file compression tools, remote desktop clients, word processing suites, analytical software, email clients, web browsers, screen-sharing utilities, and background update agents.

These applications are not optional components; they are the essential lubricants of the digital economy. Business continuity hinges on their seamless functionality. Legal departments ingest contracts via PDF; finance executes critical planning through spreadsheets; human resources processes sensitive candidate data; IT manages distributed workforces through remote tools; and executive decision-making is tethered to the constant flow of browser-based information and email. Deploying these tools is rarely a debated decision; they are integrated by default, dictated by necessity and external compatibility standards.

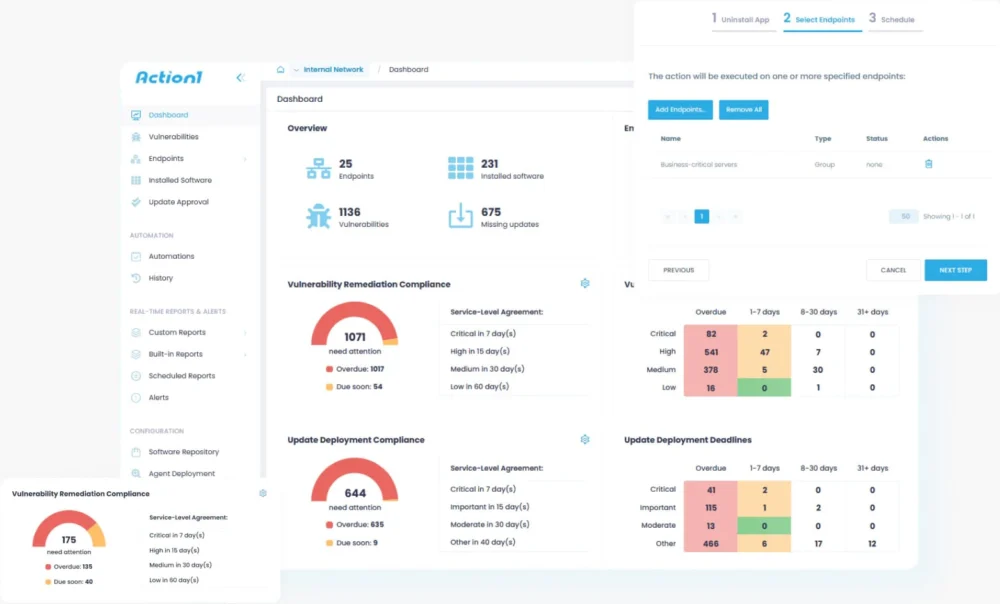

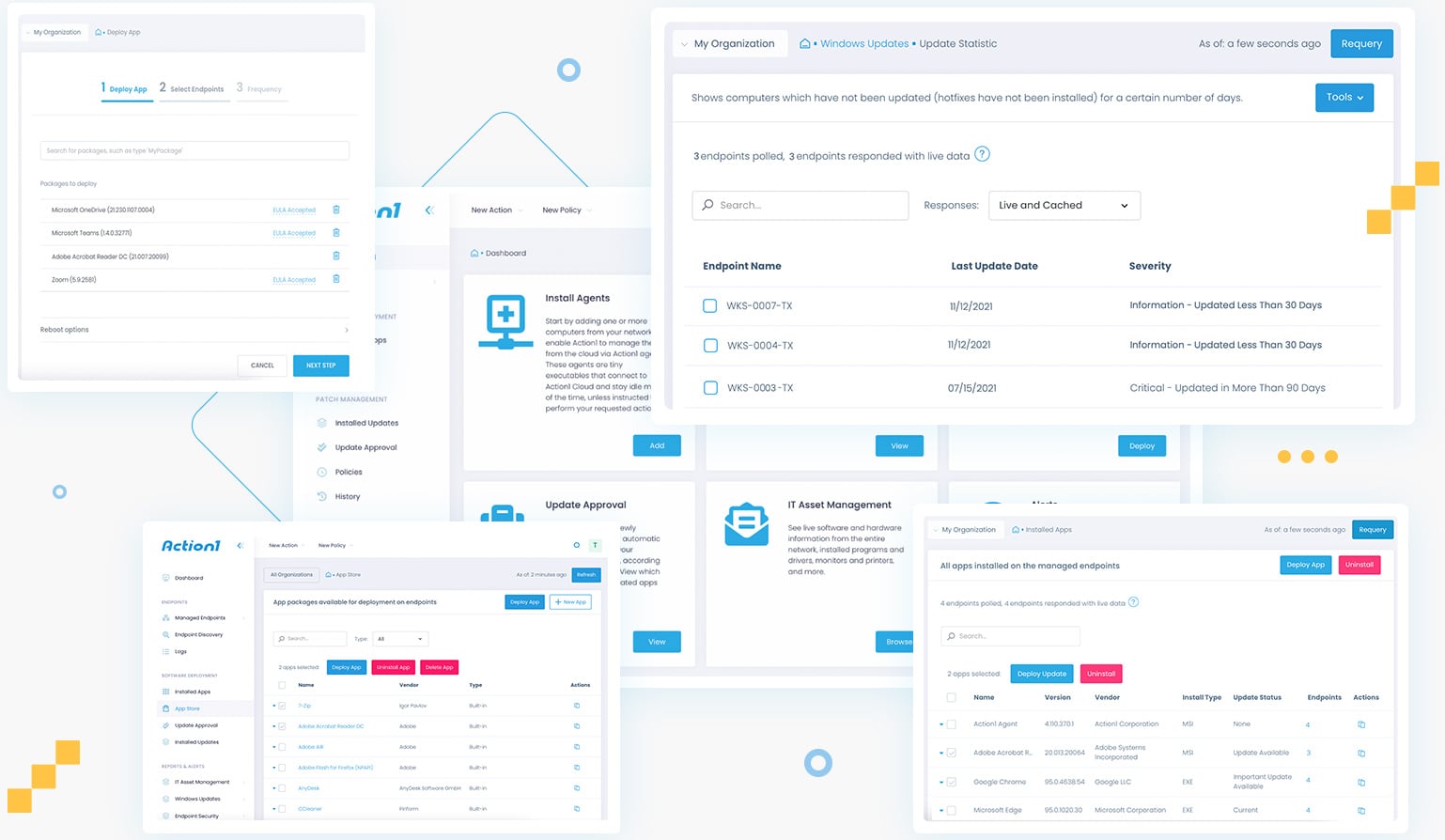

For organizations that specialize in endpoint visibility and vulnerability management across heterogeneous endpoints, this background software consistently surfaces as the defining characteristic of the actual attack surface. The very ubiquity and standardization that allow modern business to function efficiently are precisely what make these tools attractive targets for sophisticated threat actors.

The Strategic Value Proposition of Standardization

While the network topology, specific security stack integrations, and proprietary internal applications of two different corporations may vary wildly, a profound structural similarity exists in the third-party software stack across nearly all enterprises. A common set of dominant software titles—the market leaders in document creation, email handling, and web browsing—reigns supreme across the industry.

This standardization is not an accident of preference but a requirement for interoperability. Modern commerce demands frictionless exchange of data. The era of format wars, where proprietary software locked users into closed ecosystems, proved economically unsustainable. The industry coalesced around common standards (like PDF, XLSX, HTML) precisely to eliminate this friction, cementing the dominance of a few key software providers.

Adversaries possess an intimate understanding of this structural reality. Instead of expending significant resources mapping and exploiting niche, bespoke internal applications unique to a single target, threat actors focus their efforts on the areas where targets overlap. If a zero-day vulnerability is discovered within a widely adopted PDF parsing library, a common spreadsheet engine, or a ubiquitous email preview component, the probability of that exploit landing on a vulnerable endpoint within a target environment rises sharply. The attack strategy shifts from precision targeting of one organization's architecture to exploiting the software that nearly every organization shares.

The mechanics of successful social engineering and initial access frequently exploit this routine. Users are conditioned to open attachments, click embedded links, and render documents as part of their habitual workflow. Attackers are betting that this muscle memory overrides reflexive security caution precisely because the action—opening a seemingly benign spreadsheet—feels utterly normal. This high degree of environmental predictability must fundamentally inform defensive posture planning.

The Probabilistic Shift in Threat Modeling

Historically, early-stage cyber attacks targeting endpoints often amounted to guesswork. An attacker might craft a phishing email specifically targeting Microsoft Outlook users, attaching a weaponized Excel file, hoping the recipient ran a vulnerable version of the corresponding software. This approach carried inherent risk: the exploit might fail if the target used a different mail client or an already patched version, wasting resources and exposing the attacker to detection, since defenders could analyze the failed attempt and write signatures for it.

The prevalence of common utilities fundamentally alters this calculus, shifting the attacker's position from guesswork to well-founded expectation. Because email clients, major browsers, standard office suites, and archive tools are requirements for contemporary business function, an attacker need not possess perfect knowledge of the target's precise asset inventory. They can operate with high confidence that compatible processing software exists on the receiving end.

This encourages attackers to prioritize investment in exploit development for vulnerabilities found in these ubiquitous tools. An exploit that works against a common toolchain can be reused across many targets with little additional effort. If a flaw is successfully weaponized against the rendering engine of a major browser, the potential return on investment is high because the deployment environment, the global user base relying on that browser, is massive and predictable. Vulnerabilities in these widely deployed applications spread quickly through exploit ecosystems because they offer the greatest potential for mass compromise or high-value lateral movement within established corporate environments.

The Symbiotic Business Footprint

The correlation between these applications extends beyond mere presence; these tools are intrinsically linked within operational workflows. The ecosystem reinforces itself. An email whose headers identify a known client like Outlook provides an immediate clue about the processing environment on the sender's machine. Email workflows invariably feed into document handling workflows: opening attachments or links often triggers associated document software like Word or Adobe Acrobat.

For an attacker mapping an intrusion path, this interdependency creates exploitable chains rather than isolated entry points. A vulnerability in an email client’s attachment handler can cascade into issues within the document rendering engine, shared memory libraries, or integrated collaboration features, all co-resident on the same endpoint. The attack surface, therefore, ceases to be a collection of discrete applications and starts mirroring the actual, day-to-day operational footprint of the organization. When vulnerabilities surface within this core operational nexus, they gain immediate strategic importance because they align perfectly with established user behavior patterns.

Metadata: The Unintended Disclosure of System State

A subtle, yet critical, dimension of this risk involves the inadvertent information leakage inherent in digital artifacts. Documents, emails, and shared files are not inert containers; they are often rich with metadata that silently broadcasts details about the underlying software environment.

A PDF document may embed fingerprints indicating the specific version of the creation engine used. Spreadsheets retain characteristic formatting behaviors tied to particular versions of office suites. Email headers meticulously detail the client software and server paths traversed. Browser traffic, through user-agent strings, advertises operating system details and rendering capabilities. File structures themselves can reveal versioning habits and patch levels.
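To make the email-header case concrete, the following is a minimal sketch of how client hints can be read from a raw message using Python's standard `email` module. The header list, the function name `client_hints`, and the sample message (including the product name "ExampleMail") are illustrative assumptions; real messages may carry different headers or none at all.

```python
from email import message_from_string

# Headers that often carry client-software hints. Which ones appear depends
# on the sending client; this short list is an illustrative assumption.
CLIENT_HINT_HEADERS = ("X-Mailer", "User-Agent")


def client_hints(raw_message: str) -> dict[str, str]:
    """Return any client-software hints present in a raw email message."""
    msg = message_from_string(raw_message)
    # msg[name] returns None when the header is absent.
    return {h: msg[h] for h in CLIENT_HINT_HEADERS if msg[h] is not None}


# Hypothetical message; "ExampleMail 16.0" is a made-up client string.
SAMPLE = (
    "From: alice@example.com\n"
    "To: bob@example.com\n"
    "Subject: Q3 forecast\n"
    "X-Mailer: ExampleMail 16.0\n"
    "\n"
    "See attached.\n"
)

if __name__ == "__main__":
    print(client_hints(SAMPLE))
```

The same lookup run over a mailbox of outbound messages would show defenders what their own organization discloses, which is the view an adversary assembles from the receiving side.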

Individually, these pieces of data may appear benign or irrelevant. Collectively, however, they construct a detailed profile of the target’s software stack over time. This profiling capability is invaluable to an adversary. It allows them to move beyond speculation and engineer highly tailored payloads. If metadata reveals an endpoint is processing files via a version of software that has been unpatched for three years, the attacker can discard reliance on novel exploits and instead deploy known, reliable exploits specific to that vintage. This transition from broad experimentation to precision targeting significantly elevates the likelihood and speed of successful breach.

The Drift Phenomenon: Why Third-Party Software Decays

The diligence applied to core platform patching (operating systems, major network components) is often robust. Patch deployment pipelines are usually well-defined, automated, and subject to rigorous governance. Browsers, given their constant external exposure, are frequently updated, often automatically. Mobile endpoints are typically governed by centralized Mobile Device Management (MDM) policies. These systems possess established baselines and continuous monitoring.

Third-party utilities, conversely, operate in a state of largely unmanaged entropy. Vendor distribution methods are diverse: some offer seamless auto-updates, others rely on end-user initiative, and still others are locked down by internal deployment scripts that fail to account for iterative updates. Critically, some essential business workflows become brittle; an update to a key application (say, a specific PDF editor required for regulatory compliance) can break downstream processes, leading IT to intentionally freeze that application at an older, potentially vulnerable version.

This operational friction results in endpoint fragmentation. Within a single enterprise, it is common to discover multiple, divergent versions of the same third-party application residing side-by-side, some lagging current security releases by substantial margins—sometimes years. This fragmentation silently compounds exploit potential. Attackers do not require a zero-day against the latest software; they merely need an established exploit against any version still lingering in the environment. A five-year-old PDF reader, dormant but functional, carries five years of accumulated, publicly known security debt. This accumulation of minor technical debt creates expansive windows for major exploitation.
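The fragmentation described above can be surfaced from a basic software inventory. The sketch below assumes inventory records of the form `(host, application, version)` with purely numeric dotted versions; the record layout, the function names, and the product names are hypothetical. It compares against the newest version seen in the environment, which is not necessarily the vendor's latest release.

```python
from collections import defaultdict


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '11.0.2' into (11, 0, 2). Assumes purely numeric dotted versions."""
    return tuple(int(part) for part in version.split("."))


def fragmentation_report(records):
    """Summarize version spread per application from (host, app, version) rows."""
    by_app = defaultdict(lambda: defaultdict(list))
    for host, app, version in records:
        by_app[app][version].append(host)

    report = {}
    for app, versions in by_app.items():
        # Newest version observed in this environment, compared numerically.
        newest = max(versions, key=parse_version)
        lagging = sorted(
            host
            for version, hosts in versions.items()
            if version != newest
            for host in hosts
        )
        report[app] = {
            "distinct_versions": len(versions),
            "newest_seen": newest,
            "lagging_hosts": lagging,
        }
    return report


# Hypothetical inventory; host and product names are made up.
INVENTORY = [
    ("hr-01", "ExamplePDF", "11.0.2"),
    ("hr-02", "ExamplePDF", "9.4.0"),
    ("fin-01", "ExamplePDF", "11.0.2"),
    ("fin-01", "ExampleZip", "3.1"),
]

if __name__ == "__main__":
    for app, summary in fragmentation_report(INVENTORY).items():
        print(app, summary)
```

Versions are compared as integer tuples rather than strings because a plain string comparison would rank "9.4.0" above "11.0.2".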

The Psychology of Inherent Trust

Beyond the technical vector lies a crucial human factor: trust. End-user interaction with applications like email, document readers, and file archival tools is deeply ingrained and largely unquestioned. These applications are perceived as infrastructural furniture—as reliable as the desk or the network connection. Opening a PDF is not cognitively registered as executing complex, potentially dangerous code; it is merely viewing a document. Extracting a ZIP file is a routine file operation.

This low-scrutiny environment is precisely what attackers exploit. By the time something registers as anomalous (perhaps a macro executes unexpectedly or a file opens a command prompt), the initial, high-trust interaction has already occurred. Because these foundational actions happen thousands of times daily across the organization, isolating the initial point of compromise within the deluge of routine activity becomes an immensely difficult forensic challenge.

Reorienting Security Focus: From Platform to Operational Footprint

For executive leadership defining security strategy, the key takeaway is one of perspective adjustment, not alarmism. Current security governance naturally prioritizes the platform layer: the operating system kernel, network segmentation, IAM policies, and cloud tenancy controls. These are vital, but they represent the architecture, not the activity.

The reality is that all organizational activity, the actual work, occurs through the intermediary of third-party applications: reviewing files in a browser, processing data in a spreadsheet, communicating via email. This is where data ingress, file parsing, link resolution, and data transfer actually occur. This behavioral layer is inherently predictable.

This predictability underscores why robust third-party patching often yields a disproportionately high return on security investment compared to more visible, platform-centric controls. An immaculately patched operating system can be entirely bypassed if the adjacent, trusted PDF reader contains a known, unpatched memory corruption vulnerability that is triggered by a simple file open command. Security exposure concentrates where the daily operational demands intersect with legacy or unmanaged software versions. Understanding this operational footprint—what standard tool suite employees actually run, how it evolves, and how consistently it receives timely updates—is paramount.

Strategic Patching: Moving Beyond the Operational Ticking Clock

Third-party patch management is too often relegated to the tactical realm—a recurring operational chore rather than a strategic security imperative. Yet, these utilities occupy the critical nexus where human action meets file execution. Their ordinariness is their most potent security liability. Attackers design their campaigns around the high probability that enterprises will share similar software dependencies, exploiting the lowest common denominator of widely deployed, potentially outdated code.

Security teams must pivot their analysis from merely cataloging infrastructure to rigorously defining the standard business software suite deployed across endpoints. This requires disciplined inventory management: identifying necessary versus default installations, tracking applications that persist post-project completion, and establishing clear remediation SLAs for tools that fall outside automated update cycles.
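A remediation SLA for tools outside automated update cycles can be checked with very little logic. The following is a rough sketch under stated assumptions: the `PendingPatch` record, its fields, the 30-day default, and the sample data are all hypothetical, and a real program would draw these values from its own inventory and policy.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PendingPatch:
    """Hypothetical record of a security update not yet applied everywhere."""
    app: str
    released: date      # date the vendor published the fix
    auto_update: bool   # True if the tool updates itself without IT action


def overdue(patches, today: date, sla_days: int = 30) -> list[str]:
    """Return apps outside auto-update whose fix has been pending past the SLA.

    The 30-day default is an illustrative assumption, not a recommendation.
    """
    return [
        p.app
        for p in patches
        if not p.auto_update and (today - p.released).days > sla_days
    ]


# Hypothetical pending patches; product names are made up.
PENDING = [
    PendingPatch("ExamplePDF", date(2024, 1, 1), auto_update=False),
    PendingPatch("ExampleZip", date(2024, 2, 20), auto_update=False),
    PendingPatch("ExampleBrowser", date(2024, 1, 1), auto_update=True),
]

if __name__ == "__main__":
    print(overdue(PENDING, today=date(2024, 3, 1)))
```

Passing `today` explicitly rather than reading the system clock keeps the check reproducible when it is re-run for audits.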

In practice, platforms that provide comprehensive, continuous visibility into third-party software exposure consistently demonstrate the greatest tangible reduction in exploitable risk. A successful breach rarely hinges on a novel, undiscovered flaw in a core OS component; it is overwhelmingly enabled by the accumulated vulnerability debt residing within applications that users interact with daily, applications that have quietly drifted out of compliance while remaining deeply embedded in workflow.

These conditions—the existence of aged, vulnerable third-party code within routine execution paths—precede any malicious campaign. They define the practical attack surface by dictating which executables are permitted to run and which user actions are deemed safe enough to bypass scrutiny. Third-party software is not peripheral to the platform; it is the functional medium through which the platform performs work, and it is frequently the primary locus of concentrated exposure when core systems appear superficially secure.

Modern risk mitigation demands continuous, granular insight into every third-party application executing across the environment, paired with the capacity for rapid, consistent remediation across distributed endpoints. As enterprises mature their security architecture, the realization that OS patching alone is insufficient drives the adoption of integrated solutions designed specifically to manage this pervasive threat vector. True resilience in the current threat landscape necessitates treating the security of everyday applications with the same rigor once reserved only for the network perimeter.