A critical vulnerability in the Google Cloud ecosystem has upended a long-standing assumption in web development security. For years, API keys for widely used services such as Google Maps embedding, YouTube integration, or Firebase were routinely committed to client-side code and left visible in publicly accessible JavaScript source files. The practice ran against security best practices, but it was largely treated as a low-risk oversight because these keys typically carried narrow, non-sensitive permissions. The arrival of Google’s Gemini generative AI has changed that threat landscape: previously inert identifiers can now act as high-value authentication tokens that reach private AI services and run up substantial charges.

The shift was brought to light by security researchers at Truffle Security, who scanned the November 2025 Common Crawl dataset, a large public archive of crawled web pages. They identified over 2,800 instances of active Google API keys embedded in the publicly accessible code of websites across diverse sectors. The exposed keys included some used by organizations in sensitive industries, such as major financial institutions, established cybersecurity firms, and high-volume recruiting agencies. Even Google’s own public-facing infrastructure was not immune, which shows how pervasive this latent vulnerability is.

The Innocuous Key Becomes a Gateway

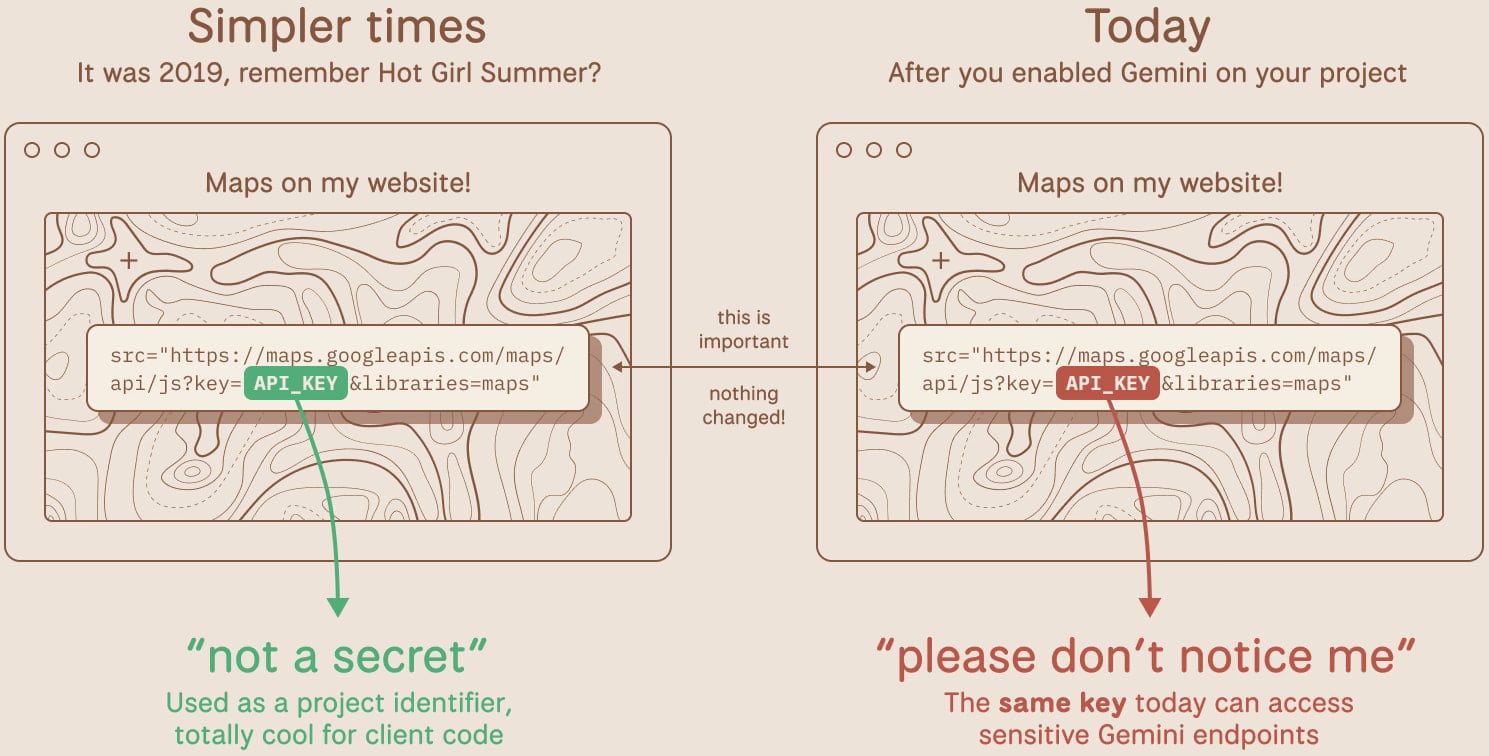

The core of the issue lies in how Google Cloud manages authentication for its expanding suite of services. Standard API keys, traditionally employed for enabling front-end features like geographical visualization or basic analytics tracking, were generally scoped to those specific services. A key embedded in a map widget, for example, could only authorize map-related calls. This limited scope meant that even if an attacker retrieved the key by inspecting a webpage’s source code—a common technique for identifying exposed credentials—the potential damage was confined.

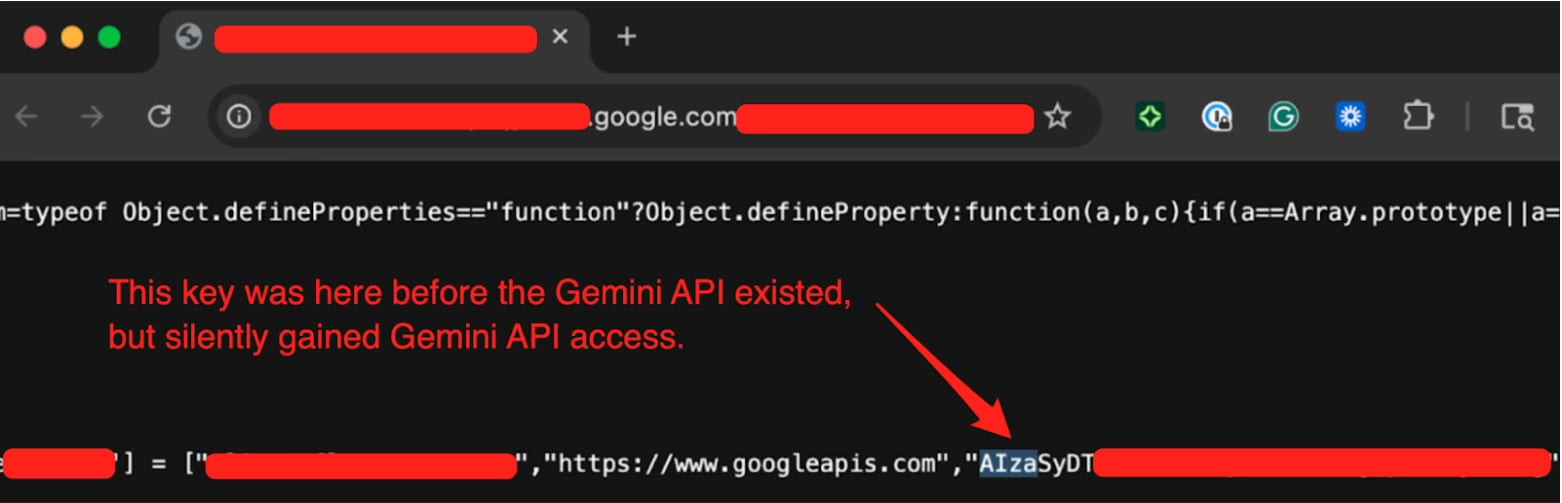

With the rollout of the Gemini large language model (LLM) API, legacy keys that remained active in the wild took on a broader authorization profile. If a developer had enabled the Generative Language API in a project that used one of these publicly exposed keys, that key gained the ability to authenticate against the Gemini endpoint. This is a case of "implicit privilege escalation": a pre-existing, seemingly benign asset acquires dangerous new capabilities because a service was enabled later, often without the developer being aware that the risk had increased.

Truffle Security confirmed this threat vector by testing one of the discovered keys against the Gemini API’s /models endpoint. The request successfully enumerated the available models, showing that the key was authenticated and authorized to interact with Google’s AI infrastructure.
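A check of this kind can be approximated in a few lines. The following is a minimal sketch, not Truffle Security’s tooling: it assumes the public REST route for listing Generative Language models at `https://generativelanguage.googleapis.com/v1beta/models` with the key passed as a `key` query parameter, and it treats only an HTTP 200 as proof of access. The function names are illustrative, and such a check should only be run against keys you own.

```python
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable

# Assumed endpoint: the public REST route for listing Generative Language models.
MODELS_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def build_models_url(api_key: str) -> str:
    """Return the model-listing URL with the key passed as a query parameter."""
    return MODELS_URL + "?" + urllib.parse.urlencode({"key": api_key})


def http_status(url: str) -> int:
    """Perform a GET and return the HTTP status code, including error statuses."""
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code


def key_reaches_gemini(
    api_key: str, fetch_status: Callable[[str], int] = http_status
) -> bool:
    """True when the key can enumerate models, i.e. the request returns HTTP 200."""
    return fetch_status(build_models_url(api_key)) == 200
```

The `fetch_status` parameter exists so the decision logic can be exercised without network access; in real use the default performs the actual request.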

The Financial and Data Implications

The ramifications are not limited to unauthorized access; they include direct financial abuse. Access to the Gemini API is metered, so every call consumes resources and incurs charges against the associated billing account. The researchers highlighted how quickly costs can mount: "Depending on the model and context window, a threat actor maxing out API calls could generate thousands of dollars in charges per day on a single victim account," according to Truffle Security’s analysis. An attacker could automate high-volume, resource-intensive queries, such as complex code generation or extensive data processing, until the victim organization’s spending limits are reached or significant unexpected invoices accumulate.
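A back-of-the-envelope calculation shows how a figure in the "thousands of dollars per day" range can arise. Every number in this sketch is a hypothetical placeholder (request rate, token counts, and per-million-token prices) and none of it is taken from Google’s price list or from the researchers’ analysis.

```python
def daily_cost_usd(
    requests_per_minute: float,
    input_tokens: int,
    output_tokens: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Estimate one day of charges for a constant stream of identical requests."""
    requests_per_day = requests_per_minute * 60 * 24
    cost_per_request = (
        input_tokens * usd_per_million_input
        + output_tokens * usd_per_million_output
    ) / 1_000_000
    return requests_per_day * cost_per_request


# Hypothetical inputs: 60 requests per minute, 100k input and 2k output tokens
# per request, priced at $1 and $10 per million tokens respectively.
estimate = daily_cost_usd(60, 100_000, 2_000, 1.0, 10.0)
print(f"${estimate:,.2f} per day")
```

Under these assumed inputs each request costs $0.12 and there are 86,400 requests per day, which comes to roughly $10,368 per day. Larger context windows or pricier models scale the total proportionally.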

Furthermore, while the primary risk identified was financial exploitation, the same escalated access could also expose sensitive data flowing through the API. If an organization used the key to handle proprietary data via the LLM, perhaps for internal summarization, analysis, or content moderation, an attacker could theoretically exfiltrate or manipulate that data, leading to severe compliance violations and intellectual property loss.

Industry Context and Developer Blind Spots

This incident serves as a stark reminder of the "drift" in security posture that occurs as platforms evolve. In the early days of public cloud APIs, the primary security concern regarding client-side keys was service hijacking (e.g., an attacker using an exposed Maps key to generate massive billing for unauthorized map usage). Developers, accustomed to this limited threat profile, developed standard deployment patterns that often involved hardcoding keys directly into HTML or JavaScript files, assuming the client-side execution environment was inherently untrusted but that the keys themselves were too narrowly scoped to matter much.

The rise of AI services introduces an entirely new dimension. AI models are complex, computationally expensive, and often handle sensitive or novel data inputs. They transition API keys from being mere operational identifiers to highly privileged access tokens for sophisticated computational resources. The failure to re-evaluate the security classification of existing, exposed keys upon the introduction of a new, powerful service like Gemini is a systemic oversight in cloud security hygiene.

The sheer volume of exposed keys underscores a widespread failure in automated credential scanning and key rotation policies. Many organizations rely on manual audits or sporadic third-party scans, which are often outpaced by the rapid deployment cycles enabled by DevOps and CI/CD pipelines. Keys intended for testing or ephemeral services frequently persist in production codebases indefinitely, waiting for a future service enablement to unlock their hidden potential.

Google’s Remediation Strategy and Industry Response

Truffle Security initiated responsible disclosure, reporting the findings to Google on November 21, 2025. The ensuing dialogue culminated on January 13, 2026, when Google classified the issue as a "single-service privilege escalation." This classification acknowledges that the keys were not inherently compromised but that their utility expanded beyond their original security envelope.

In response, Google has outlined a multi-pronged mitigation strategy. A spokesperson confirmed that proactive measures are in place to detect and block leaked API keys that attempt to access the Gemini API. Google is also establishing proactive notification channels for developers when key exposure is detected. Crucially, the default configuration for new credentials is changing: keys generated through AI Studio will now default to a scope restricted exclusively to Gemini services. This "least privilege by default" approach significantly reduces the risk of future legacy-key-style escalations.

However, the immediate burden remains on existing users. Organizations must now undertake an urgent audit. Developers are strongly advised to systematically review all active projects linked to Google Cloud. The primary action items involve determining if the Generative Language API is enabled on any project utilizing publicly exposed keys and, if so, immediately rotating those keys and restricting their scope back to the minimum necessary services. For keys that were intended only for front-end functionality, developers should ensure they are utilizing the appropriate client-side restrictions or, preferably, moving secret management server-side entirely.
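The first pass of such an audit can be sketched as follows. This is an illustrative outline under stated assumptions: the regular expression is the commonly used heuristic for Google API keys (the prefix `AIza` followed by 35 URL-safe characters), not an official format specification, and the helper names are hypothetical. The list of enabled services would have to come from the project’s own configuration; it is passed in here as a plain set.

```python
import re

# Heuristic pattern for Google API keys: "AIza" plus 35 URL-safe characters.
GOOGLE_API_KEY_PATTERN = re.compile(r"AIza[0-9A-Za-z_\-]{35}")

# Service name of the Generative Language (Gemini) API.
GENERATIVE_LANGUAGE_API = "generativelanguage.googleapis.com"


def find_google_api_keys(source_text: str) -> list[str]:
    """Return the distinct key-shaped strings found in client-side source."""
    return sorted(set(GOOGLE_API_KEY_PATTERN.findall(source_text)))


def needs_rotation(key_is_public: bool, enabled_services: set[str]) -> bool:
    """Flag a key that is publicly exposed in a project where Gemini is enabled."""
    return key_is_public and GENERATIVE_LANGUAGE_API in enabled_services
```

For example, running `find_google_api_keys` over the HTML and JavaScript a site actually serves yields candidate keys; any candidate whose project has the Generative Language API enabled is then a rotation and restriction priority.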

Future Trends in Credential Management for the AI Era

This incident points to a critical trend in cloud security: the security profile of every credential must be assessed continuously against the services it has permission to access, not just the services it was intended for. As cloud providers integrate novel, powerful services, from advanced machine learning endpoints to specialized data processing fabrics, the risk attached to legacy, poorly managed keys grows with each new service they can reach.

We can anticipate several shifts in security tooling and developer behavior:

- Context-Aware Secret Scanning: Future security scanners, including open-source tools like TruffleHog, will need to evolve beyond simple regex matching for key patterns. They must integrate with cloud metadata or IAM systems (where possible) to assess the effective permissions of a discovered key in real time against active service endpoints.

- Ephemeral and Environment-Specific Keys: The industry will likely accelerate the move toward short-lived, token-based authentication for client-side interactions, rendering long-lived static API keys obsolete for publicly accessible code. Infrastructure-as-Code (IaC) platforms will need robust native support for generating and managing these highly ephemeral credentials.

- Automated Privilege De-scoping: Cloud platforms may introduce automated checks that flag API keys associated with a high volume of publicly visible code locations and automatically revoke or restrict access to newly introduced high-risk services (like LLMs) until explicit developer confirmation is received.
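
The idea common to these shifts, comparing what a key was meant to do with what it can actually do, can be expressed as a small policy check. This is a hypothetical sketch rather than any vendor’s implementation: the `KeyProfile` type, the high-risk list, and the service identifiers used as labels are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumed policy input: services considered high risk when reachable from a public key.
HIGH_RISK_SERVICES = frozenset({"generativelanguage.googleapis.com"})


@dataclass(frozen=True)
class KeyProfile:
    key_id: str
    intended_services: frozenset[str]   # what the key was created for
    effective_services: frozenset[str]  # what the key can reach today
    publicly_exposed: bool


def privilege_drift(profile: KeyProfile) -> frozenset[str]:
    """Services the key can reach that it was never intended to use."""
    return profile.effective_services - profile.intended_services


def should_block_pending_review(profile: KeyProfile) -> bool:
    """Block a public key whose drift includes a high-risk service."""
    return profile.publicly_exposed and bool(
        privilege_drift(profile) & HIGH_RISK_SERVICES
    )


# Illustrative profile: a public Maps key in a project where Gemini was later enabled.
maps_key = KeyProfile(
    key_id="example-maps-key",
    intended_services=frozenset({"maps-backend.googleapis.com"}),
    effective_services=frozenset(
        {"maps-backend.googleapis.com", "generativelanguage.googleapis.com"}
    ),
    publicly_exposed=True,
)
```

With this profile, `privilege_drift(maps_key)` contains only the Generative Language service and `should_block_pending_review(maps_key)` is true, which is the situation the article describes. A key whose effective services match its intended ones produces no drift and is left alone.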

The exposure of these keys represents more than a simple configuration error; it symbolizes the friction between the rapid pace of feature deployment and the slower evolution of robust security governance. In the age of ubiquitous AI, developers must treat every piece of embedded configuration data as potentially capable of unlocking significantly greater power than it was originally designed to wield. The era of "harmless" exposed API keys is definitively over.