Security researchers have documented a significant evolutionary leap in mobile threats with the emergence of "PromptSpy," an Android malware family that is the first known instance of malware using a generative artificial intelligence model, specifically Google’s Gemini, at runtime to adapt its behavior to the device it is running on. The development marks a move away from threats whose behavior is fixed in advance, and can therefore be matched by static, signature-based detection, toward threats that adjust themselves based on immediate environmental feedback.

The initial findings, published by researchers at ESET, describe a threat that goes beyond traditional methods of evasion and persistence. The analysis uncovered two distinct iterations of this new threat family. The first variant, identified as VNCSpy, surfaced on VirusTotal around January 13, 2026, in three samples uploaded from Hong Kong. A more advanced iteration followed on February 10, 2026, with four samples uploaded from Argentina, suggesting rapid development by the threat actors responsible.

The Generative Persistence Engine

Previous Android malware has incorporated machine learning, for example screen scraping to automate clicks for large-scale ad fraud. PromptSpy introduces a fundamentally different paradigm: it places a Large Language Model (LLM) directly in its core execution flow so that it can adapt to the specific device it lands on.

The specific capability being optimized by this LLM integration addresses a long-standing challenge for mobile malware: achieving robust persistence across the fragmented Android ecosystem. Many Android devices let users "pin" an application in the Recent Apps interface, which prevents the operating system from terminating it during aggressive memory management or when the user taps "Clear all." For legitimate applications, this keeps essential background services running; for malware, it provides a critical layer of resilience against termination.

The obstacle for threat actors is the variability of this pinning mechanism. Device manufacturers (OEMs) expose app pinning through different UI pathways and visual cues. Hardcoding instructions for every permutation is inefficient and brittle, and breaks as soon as a new OS update or device model is released.

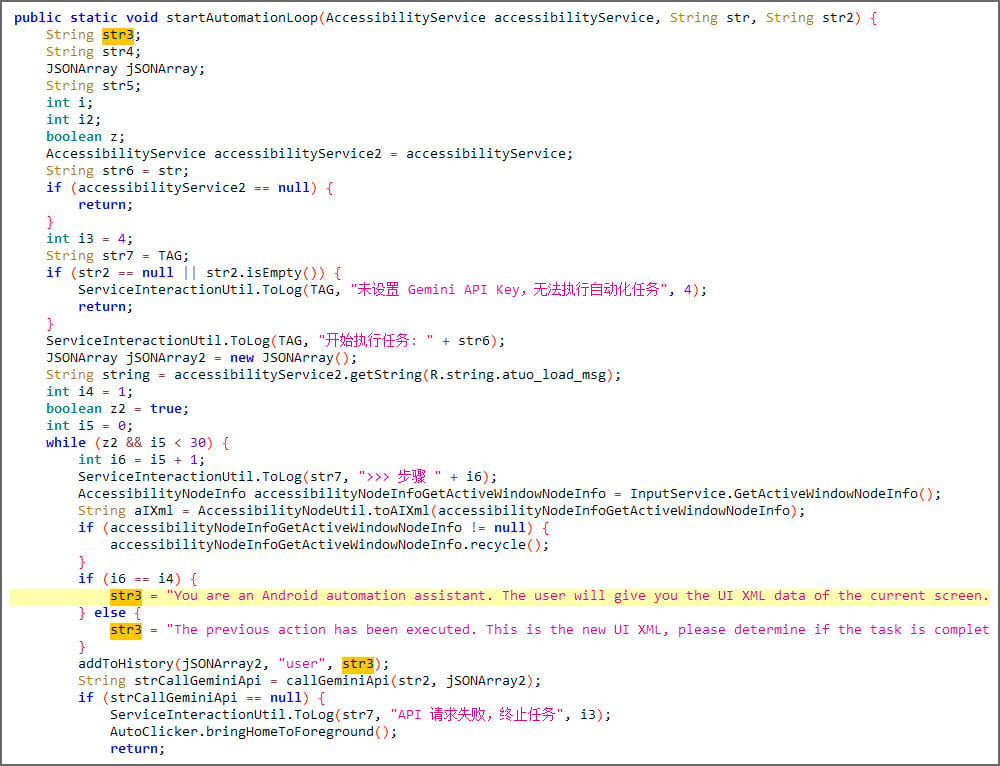

PromptSpy circumvents this limitation by consulting the Gemini model. Upon execution, the malware captures the current state of the device’s screen. Rather than a plain image, this dump is an XML representation of the interface that lists each visible UI element with its text label, class type, and precise screen coordinates.


This context is then packaged into a natural-language chat prompt and sent directly to the Google Gemini API. In effect, the prompt asks the LLM: "Given this screen layout, what sequence of user interactions (clicks, swipes, long-presses) must be executed to pin this application in the recent apps list?"

Gemini processes this input and returns a structured response, typically in JSON format, describing the navigation steps to take. PromptSpy then uses the device’s Accessibility Service, a powerful, permission-gated feature intended to assist users with disabilities, to turn these LLM-generated instructions into actual gestures on the screen. The malware executes the first step, captures the resulting screen state, and feeds the updated context back to Gemini. The loop repeats until the model confirms, based on the new screen state, that the app has been successfully pinned.

ESET’s analysis stresses that although the LLM is used for only one feature, persistence, it substantially increases the threat’s adaptability. "This demonstrates how incorporating these AI tools can make malware more dynamic, giving threat actors ways to automate actions that would normally be more difficult with traditional scripting," researchers noted.

Core Functionality and Evasion Tactics

While the generative AI component is novel, PromptSpy’s primary objective is conventional: comprehensive data exfiltration and remote control, which places it squarely in the category of advanced spyware. A built-in Virtual Network Computing (VNC) module is central to its payload. Once the victim grants the necessary Accessibility permissions, often after being tricked by deceptive interfaces, the threat actors gain near-total real-time control of the infected device, allowing them to view and manipulate the Android interface remotely.

The documented capabilities of this spyware are extensive, including:

- Data Harvesting: The ability to intercept and siphon sensitive information, likely including SMS messages, call logs, contacts, and potentially credentials stored in various applications.

- Real-Time Monitoring: Full remote control facilitates keylogging, monitoring application usage, and capturing screenshots or video feeds from the device camera.

- Exfiltration: Secure transmission of harvested data back to the command-and-control (C2) infrastructure.

Compounding the threat is PromptSpy’s aggressive resistance to removal. When a user tries to uninstall the app or revoke its Accessibility permissions, the malware draws transparent rectangles precisely over the on-screen buttons tied to termination or uninstallation (e.g., "Stop," "Clear," "Uninstall"). A user who taps "Uninstall" actually taps the invisible malware layer, which intercepts the input and blocks the action, trapping the user in a loop of failed removal attempts.

The only documented way around this defense is to reboot the device into Android Safe Mode. In Safe Mode, all third-party applications, including PromptSpy, are disabled, so the user can open the system settings without interference and manually revoke the permissions or uninstall the app.

Industry Implications: The LLM Arms Race

The discovery of PromptSpy is a watershed moment, signaling that the barrier to entry for creating highly adaptive malware is rapidly decreasing. The dependency on complex, brittle, device-specific scripting is being replaced by reliance on powerful, generalized reasoning engines like Gemini.

Contextual Background: For years, mobile malware authors have focused on social engineering, obfuscation, and abusing OS features like Accessibility Services. The integration of LLMs represents the shift from reactive adaptation (where malware reacts to known security patches or static analysis tools) to proactive, context-aware execution.

Expert Analysis on LLM Abuse: This method can evade traditional heuristic detection, which relies on recognizing known sequences of actions. Because the clicks required to pin the app are derived from each device’s screen dump, the malware’s runtime behavior varies from device to device. This pushes security vendors to look beyond static code signatures and monitor the intent and destination of API calls, specifically watching for anomalous communication with external, high-capability LLM services.

Furthermore, the use of a major public service like Google Gemini introduces complex attribution and liability questions. While the malware author is clearly abusing the service, tracing the activity back to the threat actor requires deep forensic analysis of the API keys and usage patterns, which are often masked or distributed via compromised infrastructure.

Key implications:

- Defense Paralysis: Traditional sandboxing environments struggle to fully emulate the complex, dynamic UI states required to trigger the LLM loop, potentially rendering many automated analysis tools ineffective against runtime adaptive threats.

- API Monitoring: Mobile security solutions must evolve to incorporate real-time monitoring of application network traffic, specifically flagging outgoing communications to known generative AI endpoints that contain sensitive context (like screen dumps) rather than standard command-and-control data.

- The Democratization of Sophistication: Threat actors no longer need elite programming skills to create highly adaptable malware. By calling powerful LLMs through public APIs, even moderately skilled actors can deploy malware capable of coping with complex system fragmentation.
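A defender-side check along the lines of the API Monitoring point above might look like the following minimal Python sketch. It rests on several assumptions that do not come from ESET’s report: the traffic-inspection layer can see decrypted request bodies; the watched host list contains only `generativelanguage.googleapis.com` (the public Gemini API host) and is deliberately incomplete; and screen dumps resemble Android UI Automator output, whose elements carry `bounds="[x1,y1][x2,y2]"` attributes. `OutboundRequest`, `flag_request`, and the threshold `MIN_UI_NODES` are hypothetical names chosen for illustration.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlsplit

# Assumed, non-exhaustive set of generative AI API hosts to watch.
GENAI_HOSTS = {"generativelanguage.googleapis.com"}

# Matches an Android-style bounds attribute such as bounds="[0,0][1080,2400]".
# The optional backslashes tolerate the quote escaping that appears when the
# XML is embedded inside a JSON string.
BOUNDS_ATTR = re.compile(r'bounds=\\?"\[\d+,\d+\]\[\d+,\d+\]\\?"')

# Hypothetical threshold: how many UI nodes make a body look like a screen dump.
MIN_UI_NODES = 3


@dataclass
class OutboundRequest:
    """Hypothetical record emitted by a traffic-inspection layer."""
    app_package: str
    url: str
    body: str


def is_genai_endpoint(url: str) -> bool:
    """True if the URL's host is, or is a subdomain of, a watched GenAI host."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in GENAI_HOSTS)


def looks_like_ui_dump(body: str) -> bool:
    """Heuristic: several bounds attributes suggest a serialized UI hierarchy."""
    return len(BOUNDS_ATTR.findall(body)) >= MIN_UI_NODES


def flag_request(req: OutboundRequest) -> bool:
    """Flag requests that send UI-hierarchy context to a generative AI API."""
    return is_genai_endpoint(req.url) and looks_like_ui_dump(req.body)
```

Requiring both signals (an AI endpoint and a UI-hierarchy payload) keeps ordinary chatbot traffic from being flagged, at the cost of missing dumps in other formats; a production detector would need more endpoints and payload shapes.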

Is PromptSpy Operational or Conceptual?

A critical element of the PromptSpy narrative remains uncertain: its operational status in the wild. ESET researchers have yet to observe the primary dropper or the PromptSpy payload within their extensive telemetry data, leading to speculation that the samples discovered on VirusTotal might represent sophisticated proof-of-concept (PoC) work.

ESET researcher Lukas Stefanko acknowledged this ambiguity: "We haven’t seen any signs of the PromptSpy dropper or its payload in our telemetry so far, which could mean they’re only proofs of concept."

However, other evidence points to possible active deployment. The malware samples were distributed through a dedicated domain, mgardownload[.]com, and were associated with a convincing landing page impersonating JPMorgan Chase Bank (m-mgarg[.]com). Dedicated distribution infrastructure, combined with a banking-themed phishing lure, implies that the developers were at least preparing for, and may already have launched, targeted campaigns. The limited visibility in telemetry could reflect narrow targeting of small user groups, or distribution channels that security firms have not yet cataloged.
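Defenders who want to check DNS or proxy logs against the two domains above first need to refang them, since they are published with `[.]` in place of the dot. The following is a minimal Python sketch, assuming the logs yield plain hostnames; `refang`, `matches_ioc`, and `scan_hostnames` are hypothetical helper names, and treating subdomains as matches is a design choice rather than something ESET specifies.

```python
# Indicators exactly as published, in defanged form.
DEFANGED_IOCS = ["mgardownload[.]com", "m-mgarg[.]com"]


def refang(indicator: str) -> str:
    """Turn a defanged indicator like 'example[.]com' into 'example.com'."""
    return indicator.replace("[.]", ".").strip().lower()


# Refanged domains are kept only in memory for matching.
IOC_DOMAINS = {refang(d) for d in DEFANGED_IOCS}


def matches_ioc(hostname: str) -> bool:
    """True if the hostname equals an IOC domain or is a subdomain of one."""
    host = hostname.strip().lower().rstrip(".")  # drop a trailing root dot
    return any(host == d or host.endswith("." + d) for d in IOC_DOMAINS)


def scan_hostnames(hostnames):
    """Return the log hostnames that match any indicator, in original order."""
    return [h for h in hostnames if matches_ioc(h)]
```

Matching on a leading dot (`"." + d`) prevents look-alike hosts that merely end with the same characters from being reported as hits.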

Future Trajectory: AI-Enhanced Malware Ecosystems

The trajectory suggested by PromptSpy, alongside concurrent reports from entities like Google Threat Intelligence detailing state-sponsored actors leveraging Gemini across all attack stages (from reconnaissance to post-compromise maneuvers), indicates that the integration of generative AI into the cybercrime ecosystem is not an isolated incident but an accelerating trend.

The future of mobile security will be defined by this AI arms race. We anticipate several immediate developments stemming from this revelation:

- Broader Adaptation: Future threats will likely apply LLMs beyond persistence. They could be used to generate polymorphic code, craft highly contextual spear-phishing messages sent from the infected device, or dynamically reconfigure C2 protocols to evade network-level intrusion detection systems based on real-time environmental scanning.

- LLM-Specific Defenses: Device manufacturers and OS developers will need to implement robust controls over how system services like Accessibility interact with external, unauthorized AI endpoints. This might involve mandatory sandboxing of API calls based on the nature of the data being sent (e.g., blocking screen context dumps from non-whitelisted processes).

- Rise of Adversarial Prompt Engineering: Threat actors will increasingly focus on crafting "jailbreaks" for the LLMs themselves, finding prompts that force the AI to output malicious, executable code or highly effective exploitation steps, bypassing inherent safety guardrails built into the models.

PromptSpy serves as a stark warning: the sophistication once confined to nation-state actors or highly funded APT groups is rapidly becoming accessible via readily available generative AI tooling. The fight against malware is shifting from code analysis to reasoning analysis, demanding a fundamental re-evaluation of mobile defense strategies.