The digital landscape is increasingly dominated by complex, dynamic web applications, particularly Single Page Applications (SPAs) built on modern JavaScript frameworks. Leaked API keys and hardcoded credentials in source code repositories have been a known vulnerability for years, but the true scope of this exposure in deployed, client-side code remained largely opaque. That uncertainty has created a significant blind spot in enterprise security postures. Recent large-scale research, involving the automated scanning of five million distinct web applications, has now shed light on this area, revealing a systemic failure to secure front-end assets, with potentially catastrophic implications.

The research, led by security experts who developed a specialized secrets-detection methodology for compiled JavaScript bundles, yielded findings that are staggering in volume and alarming in severity. The raw output of the initial scan, a plain-text file exceeding 100 megabytes, underscored how pervasive the issue is. The corpus cataloged over 42,000 unique exposed tokens spanning more than 334 distinct credential types. Critically, these were not historical artifacts or inert test keys: a substantial portion were live, high-privilege credentials deployed in production environments, where standard perimeter and source code security controls offer effectively no protection against this kind of exposure.

Unmasking Critical Access Vectors

The analysis prioritized credentials by their potential blast radius, starting with those that grant access to core development and infrastructure tools. The results revealed a dangerous tendency to ship, inside front-end assets, keys that should remain strictly server-side or in dedicated secret-management vaults.

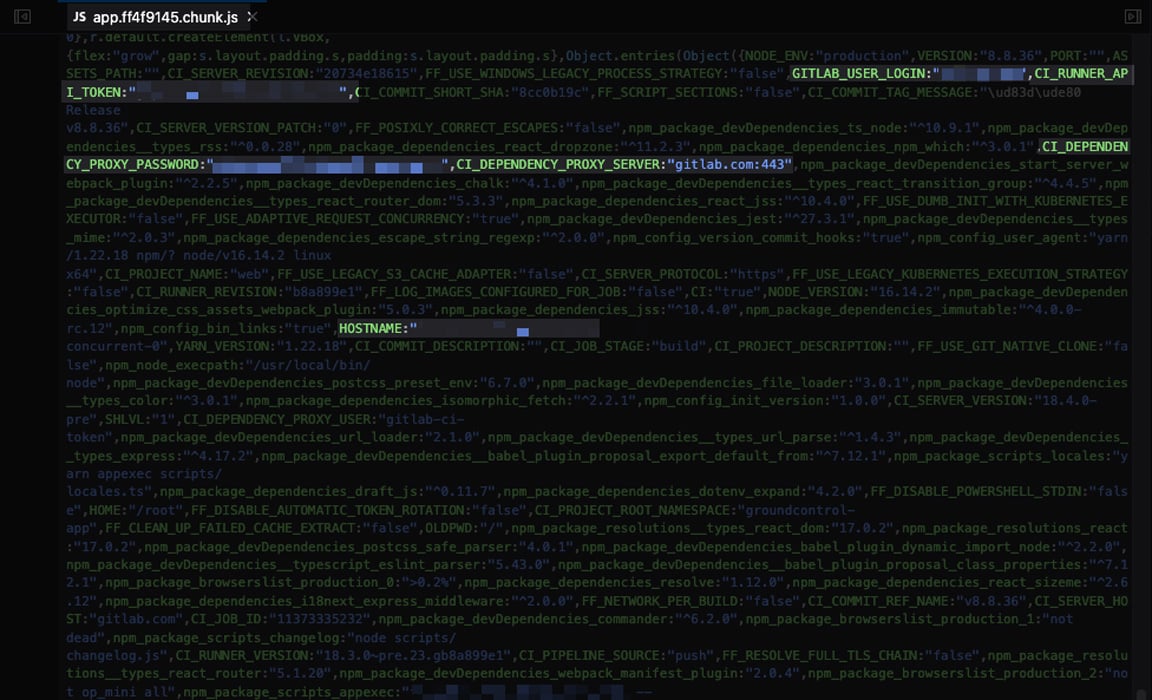

Foremost among these exposures were tokens for essential code repository platforms, including GitHub and GitLab. The research identified 688 such tokens, many of which were validated as live and provided unrestricted access to organizational codebases. In a particularly egregious example uncovered during the audit, a GitLab Personal Access Token (PAT) was embedded directly in a bundled JavaScript file. The token's scope was maximal: it permitted full access to every private repository in the associated organization. This level of access is more than a data leak; it is a direct pipeline into the Continuous Integration/Continuous Deployment (CI/CD) infrastructure. An attacker holding such a token could inject malicious code into production releases, pivot into cloud environments using credentials such as AWS keys that are often stored in CI/CD pipelines, or retrieve sensitive SSH keys. The very foundation of software integrity was thus exposed through client-side code.

Beyond source control, productivity and project management tools proved to be another fertile ground for exposure. One significant finding was an API key for the project management application Linear, plainly visible in the front-end deployment package. This single key would give an attacker a comprehensive view of the organization's internal operations as managed through that platform: internal project roadmaps, sensitive bug reports, architectural discussions documented in tickets, and, crucially, references or embedded links to other downstream SaaS services and proprietary project data. In effect, it serves as a map for subsequent targeted attacks.

The Ubiquity of Over-Exposed Secrets

The inventory of exposed secrets extended far beyond development infrastructure, touching nearly every facet of a modern digital operation:

- Computer-Aided Design (CAD) APIs: Exposed API keys granted access to third-party CAD platforms. Given the nature of these services, this translated into potential exposure of proprietary engineering schematics, user data, project metadata, and even sensitive architectural blueprints, including designs for critical infrastructure such as healthcare facilities.

- Marketing and Communications Platforms: Credentials for email marketing services were frequently discovered. These keys facilitate unauthorized access to mailing lists, the ability to launch phishing or disinformation campaigns under the organization’s guise, and the theft of subscriber engagement data.

- Automation and Integration Webhooks: A substantial number of active webhooks were found, indicating deep integration points into internal communication and workflow automation. The audit located 213 active Slack tokens, 2 Microsoft Teams tokens, 1 Discord token, and 98 active Zapier integration keys. Compromising these allows attackers to eavesdrop on internal communications, trigger unauthorized automated actions, or exfiltrate data through established workflows.

- Document Processing Services: Exposed keys for PDF conversion and generation utilities suggest a pathway to manipulate sensitive documents or compromise the integrity of generated files used in official processes.

- Sales Intelligence and Analytics: Credentials for platforms that aggregate external company data and internal analytics were found. This can lead to the theft of market research, competitive strategy documents, or the impersonation of sales personnel.

- URL Shortening Services: Exposure here grants the ability to enumerate existing organizational links or, more dangerously, create new, malicious short links that redirect users to phishing sites while appearing to originate from the compromised entity.
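Several of the integrations above, notably the chat and automation webhooks, are embedded as bare URLs, so even a plain pattern-matching pass over bundle text can surface them once the bundle has been retrieved. The Python sketch below illustrates that pass; the regexes are simplified approximations of the Slack incoming-webhook, Discord webhook, and Zapier catch-hook URL shapes (not vendor-published grammars), and `find_webhooks` and the sample values are hypothetical.

```python
import re

# Simplified, assumed URL shapes for a few webhook services named above.
WEBHOOK_PATTERNS = {
    "slack_webhook": re.compile(
        r"https://hooks\.slack\.com/services/T[A-Z0-9]+/B[A-Z0-9]+/[A-Za-z0-9]+"
    ),
    "discord_webhook": re.compile(
        r"https://(?:discord|discordapp)\.com/api/webhooks/\d+/[\w-]+"
    ),
    "zapier_hook": re.compile(r"https://hooks\.zapier\.com/hooks/catch/\d+/\w+/?"),
}


def find_webhooks(bundle_text: str) -> list[tuple[str, str]]:
    """Return (kind, url) pairs for every webhook-shaped URL in a bundle."""
    hits = []
    for kind, pattern in WEBHOOK_PATTERNS.items():
        for match in pattern.finditer(bundle_text):
            hits.append((kind, match.group(0)))
    return hits


if __name__ == "__main__":
    # Fabricated, non-functional values for demonstration only.
    sample = (
        'const n="https://hooks.slack.com/services/T000AAA/B000BBB/abc123XYZ";'
        'fetch("https://hooks.zapier.com/hooks/catch/123456/abcde/",{method:"POST"});'
    )
    for kind, url in find_webhooks(sample):
        print(kind, url)
```

Matching only tells you a webhook-shaped URL is present; whether it is still active, and what it can trigger, has to be established separately.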

Diagnosing the Security Tooling Gap

The prevalence of these secrets in deployed JavaScript raises a fundamental question: why are established security tooling paradigms failing to intercept them? The research points to specific limitations in the current security ecosystem when applied to the complexities of modern JavaScript compilation and deployment pipelines.

The Deficiency of Traditional Infrastructure Scanning

The conventional approach to automated security assessment often relies on infrastructure scanners. These tools typically operate by querying URLs and applying pattern matching—usually regular expressions—against the returned static content or known file paths. While effective for identifying configuration files or known hardcoded strings in simple server-side artifacts, this methodology is fundamentally ill-equipped for modern SPAs.

JavaScript bundles, often minified, obfuscated, and dynamically loaded, require more than simple URL enumeration. A legacy scanner without a full browser rendering engine will request the main HTML file but often fails to issue the follow-up requests that fetch the compiled JavaScript assets referenced in, or loaded asynchronously by, that initial response. For example, an infrastructure scanner might check https://portal.example.com/ but never request https://portal.example.com/assets/main.bundle-XYZ123.js, where the actual secrets reside after the build. Without browser simulation, the scanner never "sees" the content that the end user, or an attacker, actually interacts with.
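The minimum a non-browser scanner would need to do is parse the HTML it receives and follow the script references it contains. The Python sketch below shows that step using only the standard library; `ScriptAssetCollector` and `script_urls` are hypothetical names. It deliberately illustrates the limitation described above: it finds `<script src>` and `<link rel="modulepreload">` references, but chunks pulled in later by runtime `import()` calls never appear in the initial HTML, which is why full browser execution is still needed.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ScriptAssetCollector(HTMLParser):
    """Collect absolute URLs of scripts referenced directly in an HTML document."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.urls: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel_tokens = (attr.get("rel") or "").lower().split()
        if tag == "script" and attr.get("src"):
            # e.g. <script src="/assets/main.bundle-XYZ123.js">
            self.urls.append(urljoin(self.base_url, attr["src"]))
        elif tag == "link" and "modulepreload" in rel_tokens and attr.get("href"):
            # <link rel="modulepreload"> hints at ES-module chunks loaded later
            self.urls.append(urljoin(self.base_url, attr["href"]))


def script_urls(html: str, base_url: str) -> list[str]:
    """Return the script URLs a scanner should fetch and inspect next."""
    collector = ScriptAssetCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.urls


if __name__ == "__main__":
    page = '<div id="root"></div><script type="module" src="/assets/main.bundle-XYZ123.js"></script>'
    print(script_urls(page, "https://portal.example.com/"))
```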

The Static Analysis (SAST) Blind Spot

Static Application Security Testing (SAST) tools represent the first line of defense, analyzing source code prior to compilation. They are instrumental in flagging hardcoded passwords or API keys written directly into source files (e.g., .js, .ts, .py). However, the research demonstrates a crucial gap: SAST inspects code before the build step that aggregates, transforms, and bundles modules.

Secrets injected during the build phase, or those pulled in via complex environment variable configurations that only manifest in the final, bundled output, can easily bypass SAST checks. The tool inspects the ingredients, but not the final, complex dish. When developers mistakenly inject environment variables meant for the backend directly into front-end configuration files that are then bundled, SAST tools relying on source-level inspection miss the final, deployed vulnerability.
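To make the "ingredients versus dish" gap concrete, here is a minimal Python simulation, assuming a bundler that inlines `process.env.*` references as string literals at build time, as bundler "define"-style features commonly do. The `GITLAB_TOKEN` variable, the `createClient` call, `simulate_build`, and the token value are all hypothetical; the regex only approximates the `glpat-` prefix that GitLab uses for personal access tokens.

```python
import json
import re

# Approximates a GitLab personal access token (glpat- prefix); illustrative only.
TOKEN_RE = re.compile(r"glpat-[A-Za-z0-9_-]{20}")

# What the developer commits and what SAST inspects: no literal secret here.
SOURCE = "export const client = createClient({ token: process.env.GITLAB_TOKEN });"


def simulate_build(source: str, env: dict[str, str]) -> str:
    """Mimic a bundler 'define' step: replace process.env.NAME with a string literal."""

    def inline(match: re.Match) -> str:
        name = match.group(1)
        # This sketch leaves variables missing from env untouched.
        return json.dumps(env[name]) if name in env else match.group(0)

    return re.sub(r"process\.env\.([A-Z_][A-Z0-9_]*)", inline, source)


if __name__ == "__main__":
    fake_token = "glpat-" + "a1B2c3D4e5F6g7H8i9J0"  # fabricated value
    bundle = simulate_build(SOURCE, {"GITLAB_TOKEN": fake_token})
    print("source scan:", TOKEN_RE.search(SOURCE))  # None
    print("bundle scan:", TOKEN_RE.search(bundle).group(0))  # the inlined token
    print(bundle)
```

The same pattern that finds nothing in the committed source finds the token in the build output, which is why the check has to run against the built artifact rather than only the repository.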

The Dilemma of Dynamic Application Security Testing (DAST)

Dynamic Application Security Testing (DAST) tools are theoretically better suited for this task. By emulating user interaction—often using headless browsers—DAST scanners can fully render an application, navigate authenticated pathways, and inspect all dynamically loaded resources, including JavaScript bundles.

However, DAST adoption faces significant practical hurdles that limit its efficacy in comprehensive secrets discovery:

- Cost and Scalability: DAST solutions are notoriously expensive and resource-intensive. Organizations rarely possess the budget or operational capacity to deploy and maintain full DAST scanning across an entire sprawling digital estate, particularly for staging environments, microservices, or smaller, non-critical applications.

- Configuration Overhead: Effective DAST requires intricate configuration, especially for modern applications utilizing complex routing or requiring specific authentication flows. This complexity often discourages widespread, continuous deployment of these scanners.

- Pattern Specificity: While DAST tools excel at finding traditional web vulnerabilities (like XSS or SQLi), their built-in regular expression libraries for secrets detection may not be as exhaustively curated or up to date as those of specialized tools dedicated solely to credential hunting.

This creates a persistent security chasm: infrastructure scanners miss the client-side code entirely, SAST misses secrets introduced during build/bundling, and DAST is too costly and complex for universal deployment. The result is that secrets embedded deep within production JavaScript bundles remain largely invisible until explicitly targeted by a specialized scanner, as demonstrated by this recent audit.

Industry Implications and Future Trajectories

The implications of this research extend beyond simple remediation; they signal a necessary paradigm shift in how application security is approached in an era of the client-side-dominated web.

The fundamental industry implication is the obsolescence of relying solely on perimeter or repository-level controls for protecting secrets that ultimately serve the client. When an organization’s cloud access keys or repository tokens are present in a file downloaded by any user browsing the website, the concept of a secure "perimeter" for those specific assets vanishes. The responsibility shifts toward ensuring that nothing that requires server-side trust or access to private infrastructure ever reaches the publicly accessible front-end bundle.
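One practical way to enforce that rule is to audit which environment variables a build will expose. Several frameworks only inline variables that carry a public prefix into client code (Vite's `VITE_`, Next.js's `NEXT_PUBLIC_`, Create React App's `REACT_APP_`), so a minimal check can flag public-prefixed names that look like they hold secrets. In the Python sketch below, the `SENSITIVE_NAME` heuristic and `audit_public_env` are assumptions for illustration; a name-based check cannot catch a secret hidden behind an innocuous variable name.

```python
import os
import re

# Prefixes that mark a variable as browser-visible in some frameworks:
# Vite (VITE_), Next.js (NEXT_PUBLIC_), Create React App (REACT_APP_).
PUBLIC_PREFIXES = ("VITE_", "NEXT_PUBLIC_", "REACT_APP_")

# Assumed heuristic for names that imply server-side trust.
SENSITIVE_NAME = re.compile(
    r"SECRET|TOKEN|PRIVATE|PASSWORD|ACCESS_KEY|_PAT\b", re.IGNORECASE
)


def audit_public_env(env: dict[str, str]) -> list[str]:
    """Return public-prefixed variable names that look like they hold secrets."""
    return sorted(
        name
        for name in env
        if name.startswith(PUBLIC_PREFIXES) and SENSITIVE_NAME.search(name)
    )


if __name__ == "__main__":
    for name in audit_public_env(dict(os.environ)):
        print(f"refusing to expose {name} to the client bundle")
```

Variables without a public prefix, such as a database password, are not flagged because these frameworks keep them out of the browser bundle; the risk the check targets is a server-side credential that was given a public prefix.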

This trend is likely to accelerate with the growing adoption of automated tooling and generative AI in software development. AI coding assistants, while boosting productivity, can inadvertently introduce secrets when developers are not rigorously disciplined, for example by pulling configuration values from development environments directly into application logic without proper sanitization. Furthermore, as build processes become more sophisticated, incorporating complex module federation, micro-frontends, and advanced tree-shaking, the compiled output becomes far harder to analyze manually or with rudimentary tooling.

Moving Towards Proactive Client-Side Defense

To bridge this gap, the industry must prioritize security controls that operate dynamically against the deployed artifact, mirroring the final state of the application as the user sees it. This requires integrating specialized SPA spidering capabilities directly into the security testing pipeline.

This advanced detection must incorporate:

- Headless Browser Execution: The scanner must functionally behave like a modern web browser, executing JavaScript to fully hydrate the DOM and trigger all necessary asynchronous asset loading calls.

- Contextual Analysis: Simple string matching is insufficient. The scanner needs the intelligence to understand the context of a string: is this a dummy key, or does it carry the structural signature of a real AWS secret or a valid CI/CD token?

- Integration into CI/CD: To counter the "build process blind spot," this scanning must be performed immediately after the production bundle is generated, acting as a final gate before deployment, long after traditional SAST checks have concluded.
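The last two requirements above can be combined into a post-build gate. The Python sketch below assumes the production build has been written to a local directory (`dist` by default, a hypothetical path) and approximates contextual analysis with two cheap heuristics: rejecting strings that contain placeholder markers and rejecting low-entropy strings. It does not perform headless rendering or live credential validation. The rule set, the 3.0 bits-per-character threshold, and all function names are illustrative assumptions; the patterns reflect the well-known prefixes of AWS access key IDs (`AKIA`), GitHub classic personal access tokens (`ghp_`), and GitLab personal access tokens (`glpat-`).

```python
import math
import re
import sys
from collections import Counter
from pathlib import Path

# Illustrative detectors only; production scanners maintain far larger rule sets.
RULES = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_classic_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "gitlab_pat": re.compile(r"\bglpat-[A-Za-z0-9_-]{20,}"),
}
# Assumed placeholder markers; tune for your own codebase.
PLACEHOLDER_HINTS = ("EXAMPLE", "XXXX", "DUMMY", "TEST")
BUNDLE_SUFFIXES = {".js", ".mjs", ".cjs", ".map"}


def shannon_entropy(s: str) -> float:
    """Bits per character, computed from the string's own character frequencies."""
    n = len(s)
    return -sum(c / n * math.log2(c / n) for c in Counter(s).values())


def looks_real(token: str, min_entropy: float = 3.0) -> bool:
    """Contextual filter: reject obvious placeholders and low-entropy strings."""
    if any(hint in token.upper() for hint in PLACEHOLDER_HINTS):
        return False
    return shannon_entropy(token) >= min_entropy


def scan_text(text: str) -> list[tuple[str, str]]:
    """Return (rule, redacted preview) for each plausible secret in the text."""
    findings = []
    for rule, pattern in RULES.items():
        for m in pattern.finditer(text):
            if looks_real(m.group(0)):
                findings.append((rule, m.group(0)[:8] + "..."))
    return findings


def scan_build(dist: Path) -> list[tuple[str, str, str]]:
    """Scan every emitted bundle and source map under the build output directory."""
    if not dist.is_dir():
        return []
    results = []
    for path in sorted(dist.rglob("*")):
        if path.is_file() and path.suffix in BUNDLE_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="ignore")
            results.extend((str(path), rule, preview) for rule, preview in scan_text(text))
    return results


def main(argv: list[str]) -> int:
    """CI gate: print findings and return a non-zero status if any were found."""
    hits = scan_build(Path(argv[0] if argv else "dist"))
    for path, rule, preview in hits:
        print(f"{path}: {rule} {preview}")
    return 1 if hits else 0


if __name__ == "__main__":
    status = main(sys.argv[1:])
    if status:
        # Exit non-zero only when something was found, so the pipeline step fails.
        sys.exit(status)
```

Saved as a hypothetical `scan_build.py`, the step would run immediately after the production build (for example `python scan_build.py dist`) and fail the job on any finding. Previews are truncated to eight characters so the gate does not itself leak the secret into CI logs.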

The reality uncovered by scanning five million applications is stark: a significant percentage of the industry is unintentionally broadcasting the keys to their kingdom through client-side code. Shifting left is crucial, but the findings emphatically demonstrate that security must also shift forward into the deployment artifact itself, ensuring that even secrets that bypass early controls cannot survive the final journey to production. Organizations must adopt tooling capable of understanding and traversing the modern JavaScript ecosystem to reclaim control over their most sensitive credentials.