The relentless march of smartphone camera technology has delivered astonishing capabilities over the last decade. We have witnessed the proliferation of multi-lens arrays, the increasing dominance of computational photography, and the steady enlargement of physical image sensors. These advancements collectively allow pocket-sized devices to capture images that, just a few years ago, required dedicated, often bulky, mirrorless camera systems. However, the most profound leaps forward often occur not in the software layers that users see, but deep within the silicon architecture of the image sensor itself—the very component responsible for translating photons into digital data. A key technology quietly emerging in premium mobile imaging is poised to fundamentally solve one of photography’s most persistent antagonists: the dynamic range challenge.

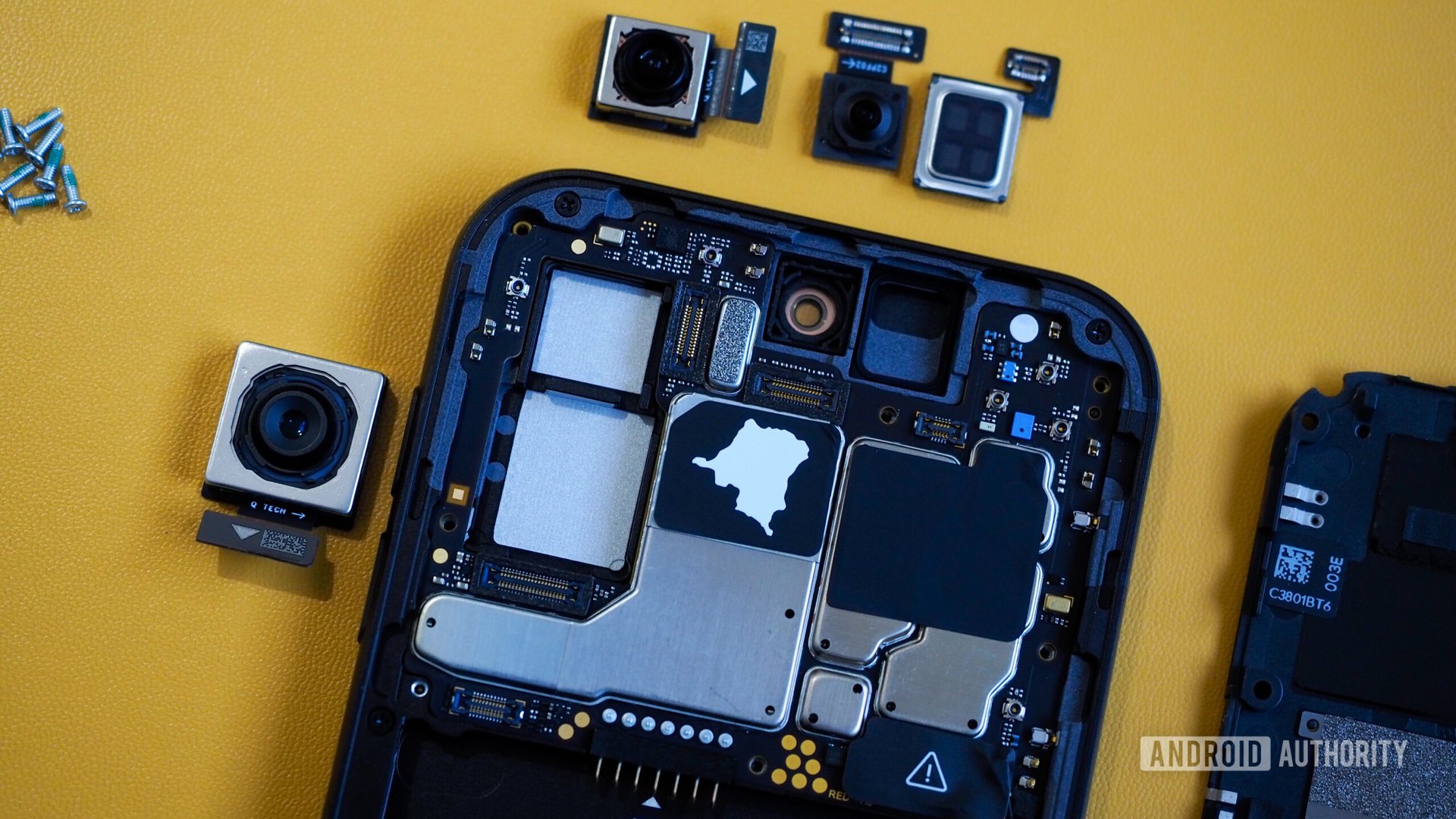

This innovation, known by the acronym LOFIC (Lateral Overflow Integration Capacitor), is moving beyond niche implementation and signaling a significant inflection point for mobile imaging fidelity. While flagships from manufacturers like Xiaomi and HUAWEI have already begun integrating this sophisticated feature into their top-tier offerings, the technology represents a hardware paradigm shift rather than another iterative software patch. It addresses the inherent limitations of capturing scenes that feature extreme luminosity differences—think of that intensely bright setting sun bleeding into deep, detailed foreground shadows.

Deconstructing the Core Challenge: The Limits of a Single Well

To appreciate the significance of LOFIC, one must first revisit the basic physics of image capture in a complementary metal-oxide-semiconductor (CMOS) sensor, the workhorse of modern digital cameras. Each pixel on the sensor houses a photodiode connected to a capacitor, often termed a ‘charge well.’ When light strikes the sensor, photons generate electrons, which accumulate in this well over the duration of the exposure time. The final electrical charge, proportional to the number of collected photons, is then read out as an analog signal, amplified (scaled by ISO), and converted into a digital value representing the pixel’s brightness.

The critical constraint here is the well’s capacity. Once the well is completely filled with charge—a condition known as saturation—any further incoming light simply overflows, leading to ‘clipping.’ In photography terms, this means highlights—the brightest parts of the scene—become pure white, losing all textural and chromatic information. Conversely, if the exposure is set low enough to prevent highlight clipping, the darker areas may register so little charge that they fall below the sensor’s noise floor or are compressed into an indistinguishable block of black pixels when amplified, resulting in a loss of shadow detail.
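The trade-off described above can be made concrete with a toy model of a single charge well. This is a minimal sketch, not a description of any real sensor: the capacity, noise floor, quantum efficiency, and photon rates are all made-up illustrative values, and the function names are hypothetical.

```python
# Toy model of one pixel with a single charge well.
# All numbers are illustrative assumptions, not datasheet values.
FULL_WELL_ELECTRONS = 6000    # hypothetical capacity of the charge well
READ_NOISE_ELECTRONS = 2.0    # hypothetical noise floor


def collect_charge(photon_rate, exposure_s, quantum_efficiency=0.8):
    """Electrons stored after the exposure; charge beyond capacity is lost."""
    generated = photon_rate * exposure_s * quantum_efficiency
    return min(generated, FULL_WELL_ELECTRONS)


def describe(electrons):
    """Classify a stored charge as clipped, buried in noise, or usable."""
    if electrons >= FULL_WELL_ELECTRONS:
        return "clipped"
    if electrons <= READ_NOISE_ELECTRONS:
        return "lost in noise"
    return "usable"


# Photon arrival rates (photons per second) for three parts of one scene.
scene = {"deep shadow": 500, "midtone": 50_000, "sun": 5_000_000}

for exposure_s in (1 / 1000, 1 / 30):
    print(f"exposure {exposure_s:.4f} s")
    for name, rate in scene.items():
        electrons = collect_charge(rate, exposure_s)
        print(f"  {name:12s} {electrons:10.1f} e-  {describe(electrons)}")
```

With these assumed values, the short exposure keeps the sun below saturation but leaves the shadow under the noise floor, while the long exposure recovers the shadow and clips the sun. No single exposure setting keeps both ends of the scene usable.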

For decades, this dynamic range deficiency has necessitated compromises. Traditional digital photography relies on software-driven High Dynamic Range (HDR) techniques. This involves capturing multiple images sequentially—one intentionally underexposed to capture highlights, one normally exposed, and one overexposed to capture shadows—and then meticulously merging them in post-processing. While computational photography has made this process incredibly fast and effective, it is inherently imperfect. Any subject movement between the frames introduces artifacts: ghosting, misalignment, or a tell-tale "halo" effect around high-contrast edges.
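To illustrate why sequential bracketing is sensitive to motion, here is a deliberately simplified merge of three bracketed frames. It is a sketch under stated assumptions, not the pipeline any phone actually uses: the 8-bit range, the `DARK_LIMIT` threshold, the exposure times, and the function names `expose` and `merge_brackets` are all hypothetical, and real pipelines add alignment, weighting, and deghosting steps.

```python
MAX_VALUE = 255   # 8-bit frames, chosen for simplicity
DARK_LIMIT = 5    # assumed threshold below which a sample is treated as noise


def expose(radiance, exposure_s):
    """Simulate one frame: scale by exposure time, round, clip at MAX_VALUE."""
    return [min(MAX_VALUE, round(r * exposure_s)) for r in radiance]


def merge_brackets(frames):
    """frames: list of (exposure_s, pixel_values) pairs shot one after another.

    Returns a per-pixel radiance estimate averaged over the samples that are
    neither clipped nor too dark to trust.
    """
    shortest_s, shortest_values = min(frames, key=lambda f: f[0])
    merged = []
    for i in range(len(shortest_values)):
        estimates = [v[i] / t for t, v in frames if DARK_LIMIT < v[i] < MAX_VALUE]
        if estimates:
            merged.append(sum(estimates) / len(estimates))
        else:
            # No trustworthy sample: fall back to the shortest exposure.
            merged.append(shortest_values[i] / shortest_s)
    return merged


EXPOSURES = (0.002, 0.016, 0.128)     # under, normal, over (8x steps)
static_scene = [200, 4000, 100000]    # shadow, midtone, highlight

static_frames = [(t, expose(static_scene, t)) for t in EXPOSURES]
static_result = merge_brackets(static_frames)

# Same scene, but the midtone subject has moved away before the second frame,
# so frames two and three see a darker background (400) at that pixel.
moved_scene = [200, 400, 100000]
moving_frames = [
    (EXPOSURES[0], expose(static_scene, EXPOSURES[0])),
    (EXPOSURES[1], expose(moved_scene, EXPOSURES[1])),
    (EXPOSURES[2], expose(moved_scene, EXPOSURES[2])),
]
ghosted_result = merge_brackets(moving_frames)

print("static :", static_result)
print("moving :", ghosted_result)
```

For the static scene the merge recovers all three radiance levels. When the subject moves between frames, the middle pixel ends up as a blend of the subject and the background, matching neither. That blend is the ghosting artifact in miniature.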

Dual Conversion Gain: An Intermediate Hardware Step

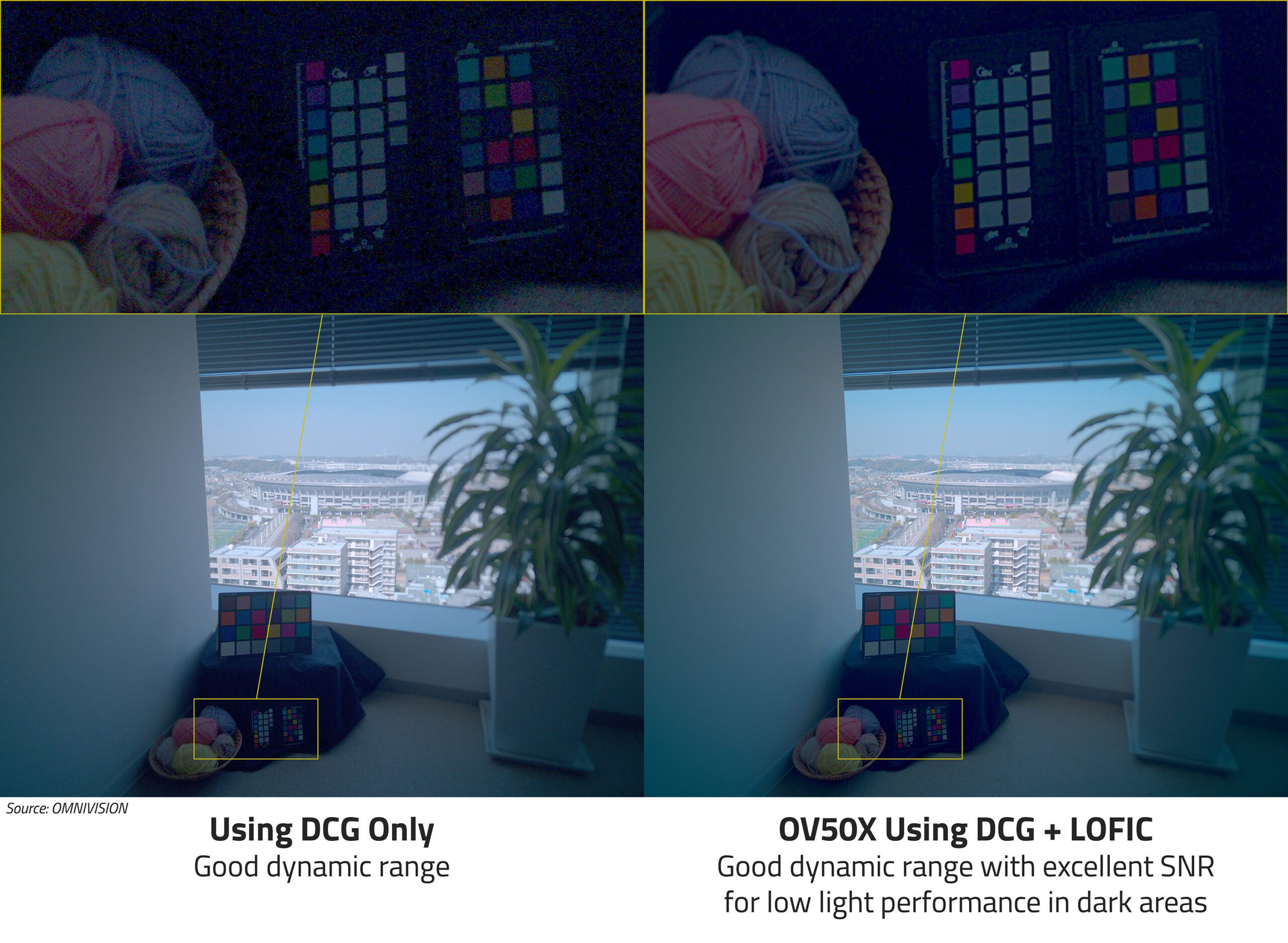

The industry attempted to mitigate this reliance on complex, frame-stacking software by introducing hardware improvements, most notably Dual Conversion Gain (DCG), also referred to as Dual-ISO. DCG sensors are engineered to read out the accumulated charge at two distinct amplification levels (gain stages) for the same exposure window. One readout uses high gain for excellent sensitivity in dark areas, and the other uses low gain so that bright signals can be digitized without saturating the readout chain. The image processor then merges these two data streams into a single image.

DCG represents a significant step forward, offering superior motion handling compared to multi-frame HDR because the data is acquired nearly simultaneously from the sensor plane. However, DCG offers only two gain states, and depending on the implementation the choice of gain may be made for the whole sensor or for large blocks rather than for each pixel. More fundamentally, both readouts draw on the same charge well, so highlights that saturate the well during exposure cannot be recovered. While powerful, DCG still operates within a relatively fixed framework of two distinct amplification paths.
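The dual-gain readout can be sketched as a toy model. The assumptions are loud ones: the well capacity, the 10-bit converter, both gain values, and the "use high gain unless it saturates" merge rule are hypothetical choices for illustration, not the behavior of any specific DCG sensor.

```python
# Toy model of a Dual Conversion Gain readout.
# All constants are illustrative assumptions.
FULL_WELL_ELECTRONS = 8000   # hypothetical well capacity
ADC_MAX = 1023               # 10-bit converter (assumed)
HIGH_GAIN = 1.0              # digital numbers per electron (assumed)
LOW_GAIN = 0.125             # digital numbers per electron (assumed)


def read_out(stored_electrons, gain):
    """Digitize the stored charge at one gain setting, clipping at ADC_MAX."""
    return min(ADC_MAX, int(stored_electrons * gain))


def capture_dcg(generated_electrons):
    """Return the merged estimate of collected electrons for one pixel."""
    # The single well still limits how much charge survives the exposure.
    stored = min(generated_electrons, FULL_WELL_ELECTRONS)
    high = read_out(stored, HIGH_GAIN)
    low = read_out(stored, LOW_GAIN)
    # Prefer the high-gain sample (finer steps) unless it has saturated.
    if high < ADC_MAX:
        return high / HIGH_GAIN
    return low / LOW_GAIN


for electrons in (200, 4000, 8000, 50_000):
    print(electrons, "->", capture_dcg(electrons))
```

In this sketch the low-gain path extends the usable range from about 1,000 to 8,000 electrons, but a pixel that generated 50,000 electrons still reads as 8,000, because the extra charge never made it into the well.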

LOFIC: The Architectural Breakthrough in Charge Management

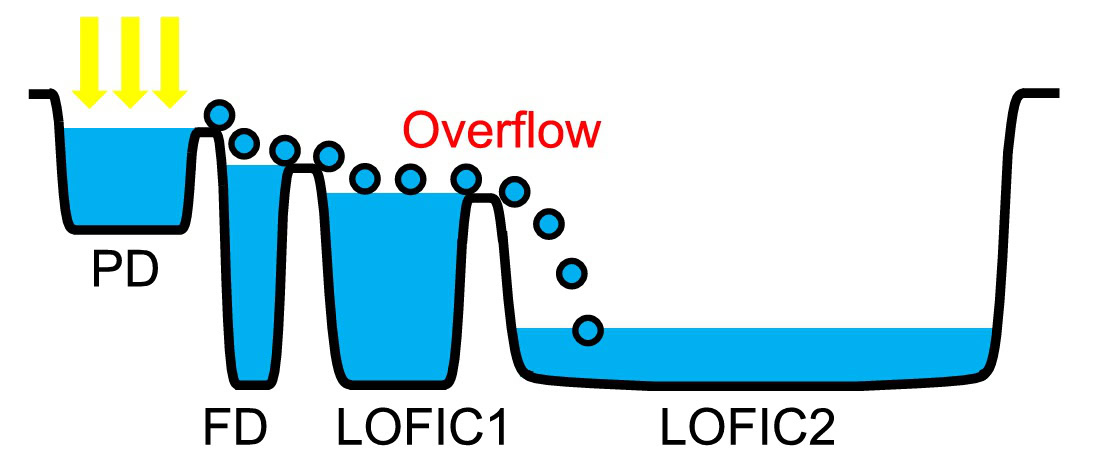

LOFIC, or Lateral Overflow Integration Capacitor, fundamentally redesigns the pixel architecture to address the capacity ceiling of the primary charge well. Instead of a single bucket, the LOFIC pixel incorporates an auxiliary, cascaded capacitor structure designed specifically to manage overflow charge.

The mechanism works as follows: During exposure, photons generate electrons that fill the primary, highly sensitive charge well. If the light intensity is so high that this primary well reaches its maximum capacity (saturation point), the excess charge does not simply dissipate or clip; instead, it flows laterally into the dedicated overflow capacitor wells. These secondary wells are designed to handle the higher charge levels associated with the brightest parts of the scene.

The crucial advantage emerges during the readout phase. Because the sensor now possesses two distinct charge measurements, one from the primary well (optimized for midtones and shadows via high gain) and one from the overflow well (read at low gain to preserve highlight data that would otherwise clip), the sensor and its accompanying Image Signal Processor (ISP) can apply the appropriate gain mapping to each segment of the captured signal and combine them into one extended-range value per pixel.
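The overflow mechanism and its two-part readout can be sketched in the same toy style. This is a simplified illustration only: the capacities, gains, and 12-bit converter are hypothetical, the names `integrate` and `read_pixel` are invented here, and real LOFIC pixels involve readout details that this model ignores.

```python
# Toy model of a LOFIC pixel: a primary well plus an overflow capacitor.
# All constants are illustrative assumptions, not values from a real sensor.
PRIMARY_CAPACITY = 6000       # electrons held by the primary well
OVERFLOW_CAPACITY = 120_000   # electrons held by the overflow capacitor
ADC_MAX = 4095                # 12-bit converter (assumed)
HIGH_GAIN = 0.5               # DN per electron for the primary readout
LOW_GAIN = 1 / 32             # DN per electron for the overflow readout


def integrate(generated_electrons):
    """Split the generated charge between the primary well and the overflow."""
    primary = min(generated_electrons, PRIMARY_CAPACITY)
    overflow = min(generated_electrons - primary, OVERFLOW_CAPACITY)
    return primary, overflow


def read_pixel(generated_electrons):
    """Digitize both stores and combine them into one electron estimate."""
    primary, overflow = integrate(generated_electrons)
    high_dn = min(ADC_MAX, int(primary * HIGH_GAIN))   # fine steps for shadows
    low_dn = min(ADC_MAX, int(overflow * LOW_GAIN))    # coarse steps for highlights
    return high_dn / HIGH_GAIN + low_dn / LOW_GAIN


for electrons in (100, 50_000, 1_000_000):
    print(electrons, "->", integrate(electrons), "->", read_pixel(electrons))
```

Under these assumptions a pixel that would have clipped at 6,000 electrons now reports 50,000 correctly, and only saturates once both stores are full at 126,000 electrons, all from a single exposure.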

In terms of the bucket analogy, LOFIC allows the pixel to measure its signal at two distinct sensitivity levels within a single exposure event. This translates directly into a massive extension of effective dynamic range. Where conventional single-exposure readout struggles to surpass roughly 90 dB, sensors incorporating LOFIC, such as the high-end 1-inch OmniVision OV50X, are demonstrating effective dynamic ranges pushing towards or exceeding 110 dB directly from the sensor hardware.
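For readers more used to thinking in photographic stops, the decibel figures quoted above can be converted with a few lines. The sketch assumes the usual image-sensor convention that dynamic range in dB is 20·log10 of the ratio between the largest and smallest measurable signal. The function names are just illustrative.

```python
import math


def db_to_contrast_ratio(db):
    """Ratio of brightest to darkest measurable signal, assuming 20*log10."""
    return 10 ** (db / 20)


def db_to_stops(db):
    """One photographic stop is a doubling of light, so take log base 2."""
    return math.log2(db_to_contrast_ratio(db))


for db in (90, 110):
    ratio = db_to_contrast_ratio(db)
    print(f"{db} dB  ~ {ratio:,.0f}:1  ~ {db_to_stops(db):.1f} stops")
```

Under that convention, 90 dB corresponds to roughly 15 stops and 110 dB to a little over 18 stops. The 20 dB gap is a tenfold increase in the brightest signal the pixel can record relative to its noise floor.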

This hardware-level solution ensures smoother highlight roll-off (the transition from bright colors to pure white) and far cleaner shadow retention, all captured in a single exposure. The benefit is tangible: sunsets retain the intricate color gradients in the sky around the solar disc, bright studio lights do not become featureless orbs, and night scenes maintain texture in both illuminated windows and dark pavement simultaneously.

Industry Implications and the Competitive Edge

The adoption of LOFIC technology signals a significant divergence in high-end mobile imaging strategy. For manufacturers prioritizing pure photographic metrics—especially dynamic range and low-light performance—it represents the next hardware frontier, surpassing the limitations imposed by fixed DCG systems.

The complexity and associated manufacturing costs of integrating these extra capacitor structures are non-trivial. This explains why LOFIC currently resides in the realm of ultra-premium, often China-centric, flagship releases. The engineering overhead is substantial, impacting die size, power management, and thermal characteristics. Therefore, its early appearance is indicative of brands willing to push the boundaries of sensor cost and integration complexity to secure a clear technical advantage in imaging specifications.

For established global giants like Apple, Google, and Samsung, the adoption curve appears to be slower. These companies have heavily invested in and optimized sophisticated, multi-frame software pipelines that deliver excellent, consumer-friendly results. While hardware improvements are always pursued, integrating a fundamentally different sensor architecture like LOFIC requires significant retooling of the entire imaging stack, from ISP firmware to computational algorithms, to fully exploit the new hardware capability. There is a natural inertia when a massive software investment has already yielded strong market acceptance. However, as LOFIC-equipped devices demonstrate superior baseline capture, the pressure mounts for these industry leaders to move their flagship lines onto this hardware foundation.

The Impact on Video Capture

A frequently overlooked benefit of hardware-level dynamic range extension is its application to video. Video leaves only a short time budget per frame (about 33 ms at 30 fps and 17 ms at 60 fps), which makes multi-frame HDR either impractical or severely limited: the bracketed exposures must all fit within that window, subject motion between them causes ghosting, and the merge adds processing latency. LOFIC’s ability to capture high dynamic range data in a single frame is transformative for mobile video.

In video applications, LOFIC ensures that every single recorded frame possesses a wider tonal latitude. This means moving cameras through high-contrast environments—such as filming a subject walking from shade into bright sunlight—will result in footage where the highlights resist immediate blowout, and shadows maintain texture. This hardware resilience reduces the need for aggressive tone-mapping in real-time video processing, leading to video that looks more natural, cinematic, and less processed. This capability is particularly attractive for professional content creators and vloggers who require maximum flexibility in post-production color grading.

Future Trajectories and Unmet Potential

While LOFIC is currently being deployed primarily in large-format (e.g., 1-inch type) sensors where the pixel pitch provides enough physical real estate to accommodate the auxiliary circuitry, the long-term goal must be its integration into smaller, more ubiquitous sensors.

The most significant untapped potential for LOFIC lies in the cameras whose sensors are inherently light-starved due to their diminutive size: the front-facing selfie camera and the telephoto and ultra-wide modules. These secondary cameras often suffer the most from highlight clipping, as their small pixels saturate quickly in challenging light. If sensor manufacturers can miniaturize the LOFIC structure without sacrificing too much pixel area (a difficult feat of semiconductor design and fabrication), we could see front-facing cameras finally capable of handling group selfies against bright windows without turning the subjects into silhouettes.

Furthermore, the synergy between hardware and software will define the next generation of imaging. LOFIC provides the superior raw data foundation: a cleaner, more nuanced signal. This foundation will allow computational photography algorithms to become even more sophisticated. Instead of spending processing cycles aligning and merging exposure brackets (the multi-frame HDR task), the ISP can dedicate its resources to advanced semantic segmentation, object recognition, and nuanced noise reduction, building upon an already superior dynamic range baseline.

The evolution of mobile photography is rarely about a single breakthrough; it is about the convergence of hardware, firmware, and processing power. LOFIC represents a fundamental hardware advancement in signal acquisition. For the discerning mobile photographer who values true tonal accuracy over the latest software filter gimmick, tracking the deployment of Lateral Overflow Integration Capacitor technology will be crucial in determining which flagship device truly captures the world as the eye sees it, especially when facing the dramatic light challenges of the golden hour or the stark contrasts of urban nightscapes. This technology is not just an iterative upgrade; it is a necessary architectural evolution to bridge the final gap between mobile convenience and professional imaging fidelity.