The recent pivot by Google toward integrating its Gemini large language model into consumer-facing applications, particularly the introduction of "Gemini Live," has triggered significant friction within established user routines. For many long-time adopters of Google Assistant, the transition has not been a seamless upgrade but rather a forced migration that jettisoned critical functionality. This analysis delves into the specific regression experienced by users accustomed to the continuous, hands-free interaction afforded by the deprecated "Continued Conversation" feature, contrasting it with the limitations of the new voice interface, and examining the broader implications for the competitive landscape of generative AI.

The Indispensable Nature of Seamless Interaction

For years, the Google Assistant ecosystem, particularly on smart home devices, cultivated a deeply ingrained user habit centered on Continued Conversation. This feature eliminated the mandatory wake-word repetition between queries, allowing for fluid, natural dialogue. Imagine a morning scenario: a user asks for the daily news briefing while engaged in tasks like shaving or preparing breakfast—activities that inherently demand two hands. The Assistant would read headlines, and the user could seamlessly interject with follow-up questions: "Tell me more about that economic report," or "What is the weather forecast for the afternoon?" This flow mirrored a real-world interaction, minimizing cognitive load and maximizing efficiency during multi-tasking.
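To make the mechanics concrete, the behavior described above can be modeled as a short "follow-up window" that stays open after each response. The sketch below is purely illustrative and is not Google's implementation: the class name `FollowUpSession`, the `follow_up_window_s` parameter, and the eight-second value are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FollowUpSession:
    """Hypothetical model of a follow-up listening window (illustration only)."""

    follow_up_window_s: float = 8.0          # assumed duration, not a documented value
    last_response_at: Optional[float] = None  # time of the most recent answered query

    def needs_wake_word(self, now: float) -> bool:
        # A wake word is required before the first query, or once the
        # follow-up window since the last response has elapsed.
        if self.last_response_at is None:
            return True
        return (now - self.last_response_at) > self.follow_up_window_s

    def handle(self, utterance: str, now: float, wake_word_heard: bool) -> bool:
        """Return True if the utterance is accepted, False if it is ignored."""
        if self.needs_wake_word(now) and not wake_word_heard:
            return False
        self.last_response_at = now  # each accepted query reopens the window
        return True


session = FollowUpSession()
print(session.handle("Give me the news briefing", now=0.0, wake_word_heard=True))
print(session.handle("Tell me more about that economic report", now=5.0, wake_word_heard=False))
print(session.handle("What is the weather this afternoon?", now=20.0, wake_word_heard=False))
```

Under these assumptions, the second query is accepted without a wake word because it arrives inside the window, while the third is ignored because the window has lapsed. Setting `follow_up_window_s` to zero approximates the per-query re-invocation pattern that users now describe.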

The shift to Gemini, heralded as the next generation of AI interaction, promised not just smarter responses but perhaps even more sophisticated contextual awareness. However, the implementation of Gemini Live appears to have prioritized a novel, highly interactive "live" experience over the proven utility of sustained, background conversation. When Continued Conversation was quietly retired or fundamentally altered in the standard Gemini mobile interface—and certainly on smart speakers where the Gemini integration is being rolled out—it created an immediate workflow disruption. The requirement to re-invoke the assistant via a tap or a fresh wake-word for every subsequent query breaks the immersive, hands-free experience that users had come to rely upon. This regression transforms a natural dialogue into a staccato series of discrete commands, forcing users to actively manage the interface even when their physical state precludes it.

Furthermore, the promise of Gemini as an upgrade to Assistant suggests improved personalization and context retention. Yet, reports indicate that even basic personalization—such as ensuring local or specific regional news sources are prioritized in the daily digest—is inconsistent across Gemini’s various modalities. This inconsistency necessitates manual intervention, exacerbating the pain point caused by the loss of Continued Conversation. The user is compelled to repeatedly interrupt the AI, not for deeper exploration, but simply to maintain the thread of a routine that was once automated.

The Downgrade: Gemini Live’s Functional Deficiencies

The core of the current dissatisfaction lies in the comparison between the now-absent, fully functional Continued Conversation and the replacement: Gemini Live. On its surface, Gemini Live is designed for immediacy and real-time feedback, leveraging advanced generative capabilities for a more dynamic voice interaction. The problem, however, is that immediacy does not equate to depth or utility in all contexts.

When users attempt to replicate their established news consumption routine using Gemini Live, they often encounter responses that feel shallow. Instead of delivering comprehensive summaries tailored to their preferences, the AI seems constrained to reading only top-level headlines or offering drastically condensed overviews. To extract the level of detail previously available through a standard Assistant briefing, the user must initiate a cascade of specific follow-up prompts. This iterative questioning can consume more time and effort than simply reading the source material directly, a paradoxical outcome for an AI designed to save time.

This functional disparity highlights a crucial design misstep: Google appears to have prioritized the novelty of a "live" voice experience—one potentially better suited for quick, reactive queries—over the utility of a persistent, informational briefing system. For tasks requiring sustained information delivery and iterative refinement (like consuming news, debugging code verbally, or lengthy explanations), the abrupt nature of Gemini Live’s interaction model proves inferior to the sustained context window offered by Continued Conversation. The user is forced to choose between a system that is hands-free but shallow (Gemini Live) and one that is detailed but interrupts the flow of life (standard Gemini requiring constant tapping).

Industry Implications: The Perils of Forced Migration and Feature Paywalling

The removal of a long-standing, free utility and its replacement with a potentially inferior, siloed, or paywalled successor sends troubling signals across the technology sector regarding product development philosophy.

Firstly, this move exemplifies the ‘Feature Tax’ often levied during platform migrations. Users invest time and effort into mastering a specific interaction model. When a foundational feature like Continued Conversation is removed during a platform transition (Assistant to Gemini), it effectively imposes a tax on that prior investment. This breeds user resentment, as it suggests that loyalty to the ecosystem is secondary to the company’s strategic imperatives for rolling out new branding or revenue streams.

Secondly, the introduction of Gemini for Google Home Premium and the associated monetization strategies underscore a significant industry trend: the financialization of foundational AI utility. Continued Conversation, which provided excellent hands-free utility for years without a direct subscription fee, is being supplanted by a version of Gemini Live that is positioned behind a paywall on smart speakers. This strategy of creating an artificial feature gap and then monetizing the closure of that gap is highly aggressive. It suggests a corporate calculus where the inherent value derived by the user from a mature, free service is now being re-evaluated through a subscription lens. Competitors, particularly OpenAI with its ChatGPT voice capabilities, offer continuous voice interaction for free (albeit with usage caps or tier limitations), setting a benchmark that Google’s paid consolidation appears to fall short of.

From a product development standpoint, this fracturing reveals a lack of cohesive strategy regarding multimodal AI integration. If the goal is to unify the AI experience, the core conversational mechanics—such as persistence and context tracking—should be universally superior or, at minimum, equivalent across all modes (text, standard voice, and live voice). The current configuration suggests that the underlying models or the interface wrappers are not yet fully aligned, leading to disparate performance based on the access point chosen by the user.

Expert Analysis: Context Windows and the Illusion of "Live" Intelligence

Technically, the ability to maintain context across several turns of dialogue (Continued Conversation) relies on a robust, short-term memory mechanism within the AI architecture—essentially a well-managed, rolling context window. Gemini Live, while architecturally impressive in its low-latency voice processing, seems to struggle with maintaining this crucial persistence without explicit re-prompting.

This points to potential engineering trade-offs. Achieving extremely low latency for real-time voice interaction often requires simplifying the processing pipeline or limiting the depth of context retrieval for each turn to ensure speed. Conversely, the older Assistant model, while slower, may have been optimized for deeper, stateful memory retention for predefined tasks like briefings. The failure here is not that Gemini cannot perform continuous conversation, but that the specific Gemini Live implementation chooses not to or cannot replicate the depth of the previous system while maintaining its advertised speed.
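The trade-off can be illustrated with a toy rolling context window. This is a minimal sketch under stated assumptions, not a description of how Assistant or Gemini Live actually manages memory: `RollingContext`, its `max_tokens` budget, and the whitespace-based token count are all hypothetical stand-ins. The idea is that a tighter per-turn budget keeps each request small and fast, but evicts older turns that a follow-up question may depend on.

```python
from collections import deque


class RollingContext:
    """Toy rolling context window (hypothetical, for illustration only)."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self.turns = deque()  # each entry: (speaker, text, token_cost)
        self.used = 0

    @staticmethod
    def count_tokens(text: str) -> int:
        # Crude proxy: one token per whitespace-separated word.
        return len(text.split())

    def add(self, speaker: str, text: str) -> None:
        cost = self.count_tokens(text)
        self.turns.append((speaker, text, cost))
        self.used += cost
        # Evict the oldest turns until the budget is met, always keeping
        # at least the newest turn.
        while self.used > self.max_tokens and len(self.turns) > 1:
            _, _, old_cost = self.turns.popleft()
            self.used -= old_cost

    def prompt(self) -> str:
        return "\n".join(f"{speaker}: {text}" for speaker, text, _ in self.turns)


def run(max_tokens: int) -> str:
    ctx = RollingContext(max_tokens)
    ctx.add("user", "read the morning news")
    ctx.add("assistant", "economic report released today")
    ctx.add("user", "tell me more about that")
    return ctx.prompt()


print(run(max_tokens=16))  # all three turns survive
print(run(max_tokens=8))   # only the follow-up survives; "that" has no referent
```

With the larger budget, the follow-up "tell me more about that" still sits alongside the turn it refers to. With the smaller budget, the referent has been evicted, which is one plausible way a speed-optimized pipeline could end up requiring explicit re-prompting.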

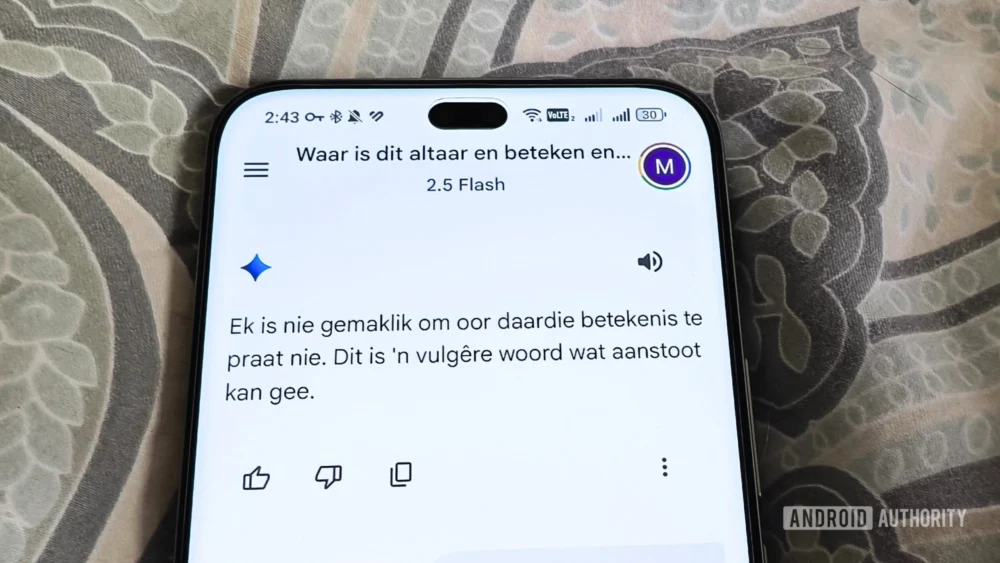

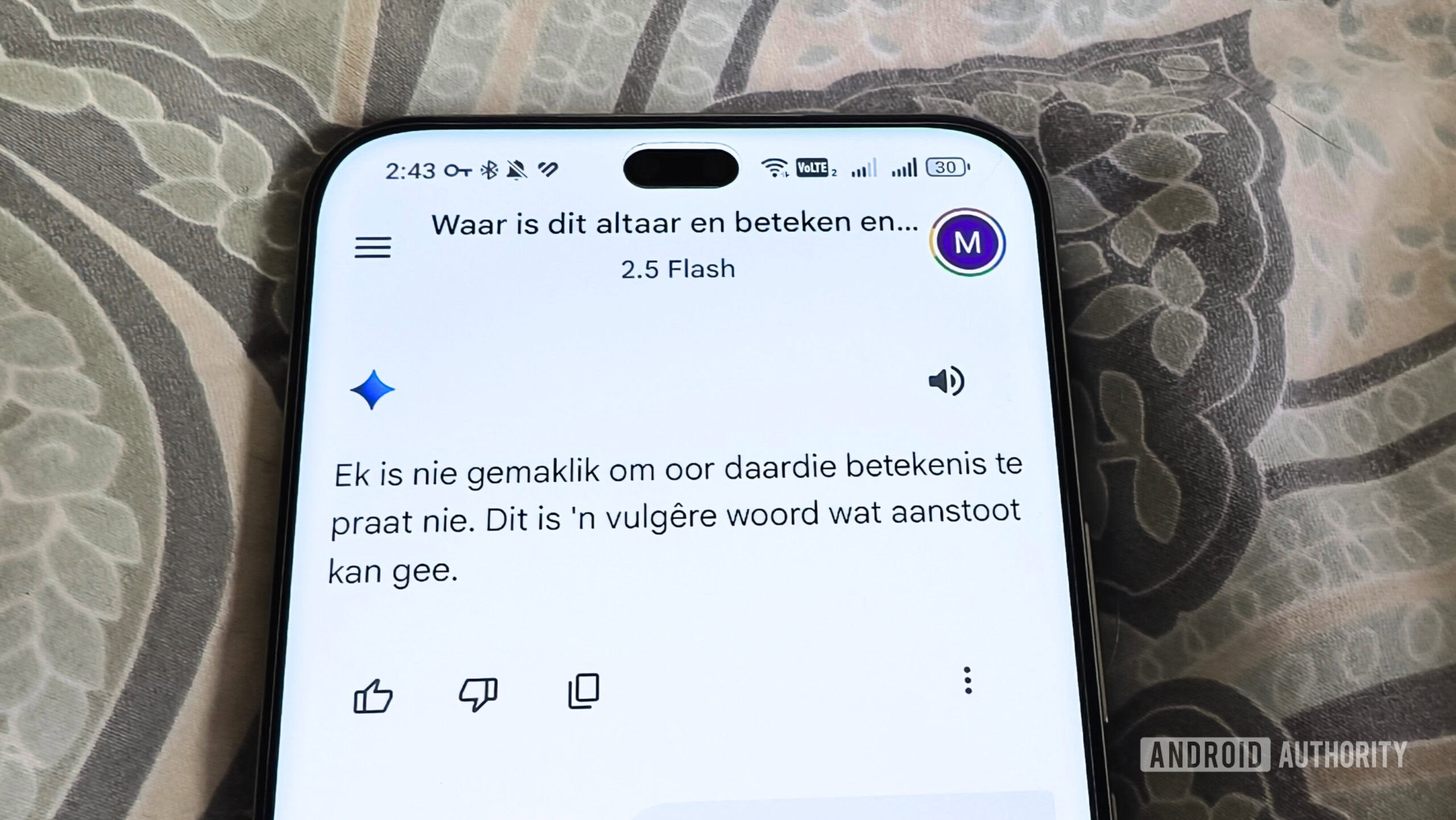

For developers and AI ethicists, this situation raises questions about the definition of "intelligence" in practical application. A system that can eloquently define an obscure Afrikaans word (as suggested by early use cases) but fails to remember the user’s preference for local news stories shows high demonstrative intelligence but low operational intelligence within a personalized routine. True intelligence in consumer AI must be defined by its seamless integration into daily life, not merely its capacity for impressive but isolated feats of knowledge retrieval.

Future Impact and Trends: The Demand for User-Centric AI Rollouts

The backlash against the removal of Continued Conversation foreshadows a critical trend for the future of generative AI deployment: user inertia and the tyranny of habit. As AI moves from novelty to utility, and from the desktop to the kitchen counter, users become deeply reliant on predictable, non-intrusive interaction methods. Any move that forces a user to re-learn how to interact with a core service, especially when the replacement is functionally worse for their primary use case, will inevitably lead to abandonment or significant negative sentiment.

Moving forward, Google and other tech giants must internalize several lessons:

- Context Persistence as a Baseline Requirement: Continuous, stateful conversation must be treated as a fundamental feature of any advanced voice AI, not a premium add-on or a feature slated for deprecation.

- Transparent Feature Parity: When replacing a legacy service (Assistant) with a new one (Gemini), the successor must meet or exceed the utility of the predecessor across all established user workflows before aggressively deprecating the old system. The current approach suggests a staged rollout where the new platform is incomplete, forcing users into an awkward interim state.

- Avoiding Monetization of Created Gaps: Leveraging a transition to introduce paywalls for features that were previously free and highly utilized is a high-risk strategy that erodes brand trust. If Gemini Live is to be the future, a free, utility-focused tier that supports basic continuous dialogue is essential to maintain the installed user base.

Ultimately, the current state of affairs presents a dichotomy: the potential power of cutting-edge generative models clashes with the practical demands of habitual, real-world use. If Google wishes for Gemini to truly replace Assistant as the ubiquitous digital helper, it must prioritize restoring the seamless, uninterrupted conversational flow that users have already integrated into their lives, rather than pushing shiny, yet functionally hollow, "Live" experiences that require constant manual oversight. The current setup forces users into an annoying choice between inconvenience and superficiality, a choice that savvy consumers will increasingly reject in favor of more reliable alternatives, whether they be legacy systems or emerging competitors. The long-term success of Gemini hinges not just on its language prowess, but on its ability to integrate quietly and powerfully into the fabric of everyday routines, something it currently fails to do for many users dependent on that critical conversational continuity.