The pursuit of seamless, instantaneous cross-linguistic communication—a staple of science fiction narratives for decades—is rapidly transitioning from theoretical possibility to practical application, largely driven by advances in machine learning and cloud computing infrastructure. Google Meet’s ongoing development of real-time speech translation represents a significant milestone in this technological evolution, moving beyond simple text-based translation apps toward true conversational fluidity. While this powerful feature was initially previewed and subsequently rolled out to desktop users tethered to Google’s premium enterprise and AI subscription tiers, compelling evidence unearthed within the latest Android beta build of the Meet application strongly indicates that Google is actively engineering parity for its mobile user base.

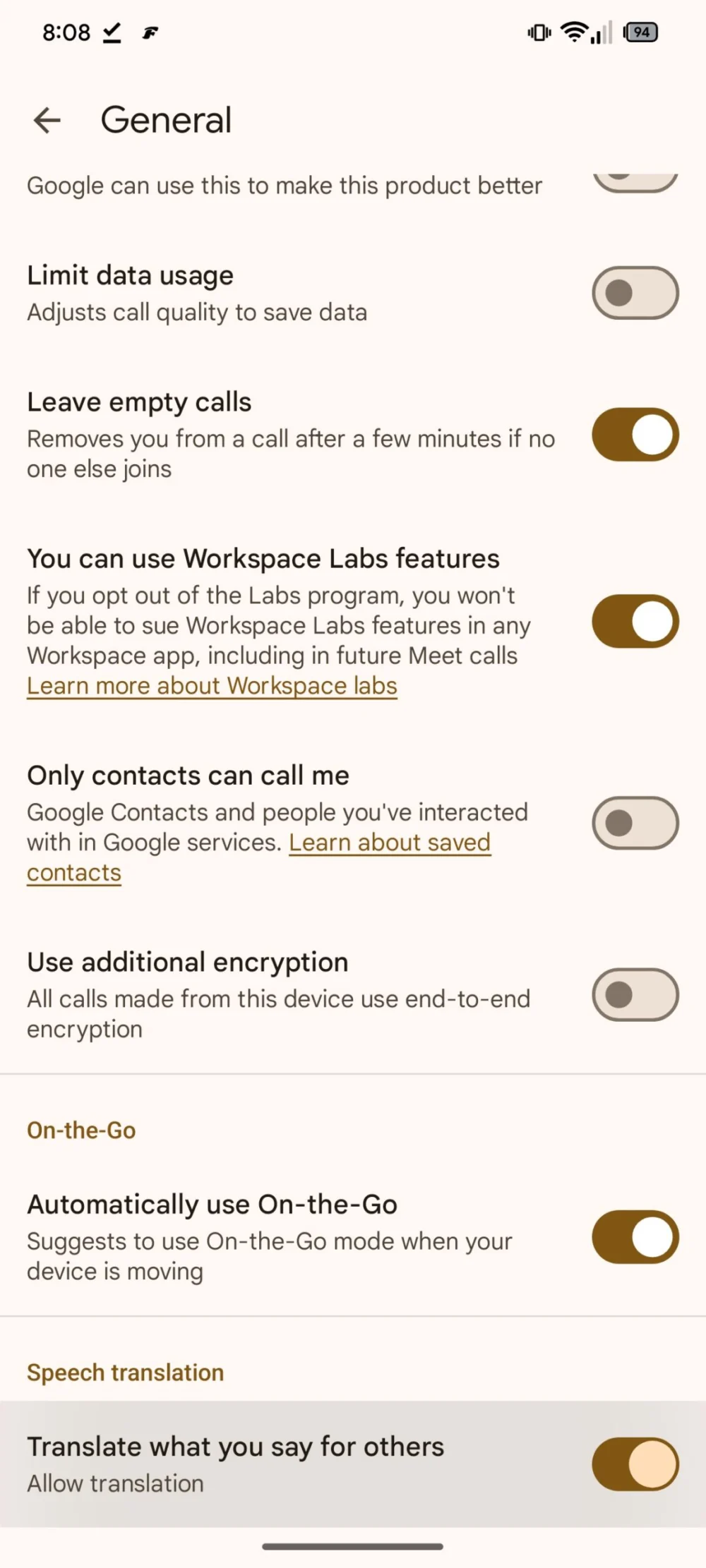

This revelation stems from an in-depth analysis of version 344.0.861071534.public_beta.duo.android_20260126.01_p0 of the Meet app for Android. Through targeted code inspection and feature flag triggering, a technique commonly referred to as an APK teardown, researchers were able to coax a latent settings option related to speech translation into visibility within the application interface, despite it not being active for general deployment. Even in this dormant state, the option serves as a strong early signal that the necessary frameworks and user interface elements for mobile integration are being actively built into the application’s core structure.

The Context: From Concept to Desktop Reality

To fully appreciate the significance of this mobile indicator, it is crucial to revisit the roadmap Google established for this technology. The concept was publicly articulated during a major developer conference the previous year, at which Google showcased a vision of virtual meetings in which language barriers dissolve. Unlike legacy systems that required users to manually select languages or rely on post-meeting transcriptions, the proposed Meet solution aims for near-instantaneous audio translation: a participant speaks in Japanese, and others hear the output rendered in their native Spanish or English, ideally with minimal latency.

The initial launch of this feature was understandably cautious. Deploying complex, real-time AI models, especially those demanding high computational throughput for simultaneous speech recognition, natural language processing, and text-to-speech synthesis, presents significant infrastructure challenges. Consequently, Google strategically limited initial access to environments where computational resources are most abundant and predictable: enterprise-level cloud instances accessed via web browsers. This restriction served a dual purpose: rigorous testing under high-stakes business conditions and establishing a clear value proposition tied to premium service subscriptions.

The current limitation to desktop environments highlights the inherent technical gap between mobile and stationary computing platforms. Desktop browsers often benefit from more robust connection stability and greater access to system resources, which are critical for processing the complex pipeline required for high-fidelity, low-latency translation. Moving this capability to mobile devices necessitates optimizing these heavy models to function efficiently on battery-powered hardware with variable network conditions, a non-trivial engineering feat.

Expert Analysis: The Technical Hurdles of Mobile Translation

The implication of discovering the mobile settings toggle is that Google’s engineering teams have successfully navigated, or are very close to navigating, several critical technical hurdles.

Firstly, On-Device vs. Cloud Processing Balance: Real-time translation requires extremely low latency. If every single utterance must be sent to a central server, processed, and sent back, the conversational flow breaks down. The presence of mobile integration suggests Google is either leveraging highly optimized, smaller neural network models capable of running locally (on-device inference) for initial speech recognition and language identification, or has drastically improved the efficiency of its cloud communication protocols to minimize round-trip time. Given the computational intensity of multilingual Transformer models, a hybrid approach, with on-device processing for rapid acoustic modeling and the cloud for the heavier generative translation steps, is the most probable architecture being tested for mobile deployment.
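
The trade-off between the two architectures can be made concrete with a rough latency budget. The sketch below is illustrative only: the function names, payload sizes, and millisecond figures are hypothetical placeholders chosen for the arithmetic, not measurements of Meet or a description of how Google has built the feature.

```python
def transfer_ms(payload_bytes: int, link_kbps: float) -> float:
    """Milliseconds to send a payload over a link, ignoring protocol overhead."""
    return payload_bytes * 8 / link_kbps  # bits / (kilobits per second) = ms


def cloud_only_ms(audio_bytes, link_kbps, rtt_ms, server_asr_ms, server_translate_ms):
    """Device uploads audio; the server recognizes and translates it."""
    return (transfer_ms(audio_bytes, link_kbps) + rtt_ms
            + server_asr_ms + server_translate_ms)


def hybrid_ms(text_bytes, link_kbps, rtt_ms, device_asr_ms, server_translate_ms):
    """Device recognizes speech locally and uploads only the transcript."""
    return (device_asr_ms + transfer_ms(text_bytes, link_kbps)
            + rtt_ms + server_translate_ms)


# Hypothetical inputs: one second of uncompressed 16 kHz, 16-bit mono audio
# is 32,000 bytes; the transcript of that second is assumed to be 200 bytes.
cloud = cloud_only_ms(audio_bytes=32_000, link_kbps=1_000, rtt_ms=80,
                      server_asr_ms=100, server_translate_ms=150)
hybrid = hybrid_ms(text_bytes=200, link_kbps=1_000, rtt_ms=80,
                   device_asr_ms=180, server_translate_ms=150)
print(f"cloud-only: {cloud:.1f} ms, hybrid: {hybrid:.1f} ms")
```

The outcome depends entirely on the inputs. A compressed audio codec would shrink the upload and narrow the gap, while a slow on-device recognizer could erase the hybrid advantage altogether.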

Secondly, Battery and Thermal Management: Running complex AI inference on a smartphone CPU, GPU, or NPU can rapidly drain the battery and generate significant heat. A feature like continuous live translation must be engineered with extreme efficiency to avoid degrading the user experience through device throttling or excessive power consumption. The existence of a setting does not prove that performance benchmarks are being met, but it suggests either that they are within reach or that the feature will only activate under optimal conditions (e.g., charging, strong Wi-Fi).
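
A conditional activation policy of the kind speculated about here could look something like the following. This is a minimal sketch under stated assumptions: the `DeviceState` fields, the `translation_allowed` function, and the thresholds are all hypothetical, and nothing in the teardown indicates that Meet applies such a rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceState:
    """Hypothetical snapshot of the conditions a gating policy might check."""
    battery_percent: int
    is_charging: bool
    on_wifi: bool
    temperature_c: float


def translation_allowed(state: DeviceState,
                        min_battery: int = 20,
                        max_temp_c: float = 40.0) -> bool:
    """Return True if live translation may run under this illustrative policy."""
    if state.temperature_c >= max_temp_c:
        return False  # too hot: sustained inference would risk throttling
    if state.is_charging:
        return True   # power is not a constraint while plugged in
    # On battery: require Wi-Fi and enough charge left for a long call.
    return state.on_wifi and state.battery_percent >= min_battery
```

Putting the thermal check first means a hot device is refused even while charging, which reflects the throttling concern raised above.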

Thirdly, Network Agnosticism: Mobile users frequently shift between cellular networks (5G, LTE) and Wi-Fi. A stable translation stream demands consistent bandwidth. The mobile integration must incorporate sophisticated error correction and buffering mechanisms to mask minor network jitter, ensuring the translated audio stream remains coherent even when the underlying connection fluctuates.
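
The buffering idea can be illustrated with a simple jitter buffer, a standard technique in real-time audio. The sketch below is a generic, simplified version, assuming each audio frame carries a sequence number. The class name and the `depth` parameter are hypothetical, and it is not a description of Meet’s actual network stack.

```python
class JitterBuffer:
    """Reorders frames by sequence number and holds back the newest
    `depth` frames, trading a little delay for smoother playback."""

    def __init__(self, depth: int = 3):
        self.depth = depth
        self._pending = {}   # sequence number -> frame
        self._next_seq = 0   # lowest sequence number still accepted

    def push(self, seq: int, frame) -> None:
        """Store an arriving frame; drop it if its slot has already played."""
        if seq >= self._next_seq:
            self._pending.setdefault(seq, frame)  # ignore duplicates

    def pop_ready(self) -> list:
        """Release frames in order, keeping `depth` frames in reserve."""
        ready = []
        while len(self._pending) > self.depth:
            seq = min(self._pending)
            ready.append((seq, self._pending.pop(seq)))
            self._next_seq = seq + 1
        return ready


buf = JitterBuffer(depth=2)
for seq in [0, 2, 1, 4, 3]:          # frames arrive out of order
    buf.push(seq, f"frame{seq}")
print(buf.pop_ready())               # frames 0, 1, 2 come out in order
```

A larger `depth` hides more jitter but adds delay to every frame, which is the core tension for a feature that also needs conversational latency.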

Industry Implications: Redefining Remote Collaboration

The successful deployment of this technology across mobile platforms will have profound implications far beyond the immediate user base of Google Meet. It signals a maturation of AI-driven communication tools that fundamentally changes the economics and accessibility of global interaction.

For the global enterprise, this feature transition is transformative. Currently, international meetings often require professional human interpreters, a costly and logistically complex endeavor. If Meet can offer reliable, near-instantaneous translation accessible directly from a user’s phone, the barrier to entry for global team collaboration plummets. Small and medium-sized businesses (SMBs) that previously could not afford dedicated interpretation services can now engage international partners or remote talent more fluidly. This democratizes global commerce and significantly accelerates decision-making cycles across multinational operations.

Furthermore, this capability directly challenges competitors like Microsoft Teams and Zoom. Feature parity in high-value AI services is a key battleground in the collaboration software market. A superior, natively integrated mobile translation feature could sway large organizations considering migration or renewal decisions. It positions Google not just as a provider of video conferencing, but as a leader in breaking down linguistic friction points in the digital workplace.

Potential Roadblocks and the Ecosystem Question

While the APK findings are encouraging, several significant unknowns remain regarding the feature’s final rollout strategy, especially concerning access and platform exclusivity.

The existing desktop version is firmly positioned behind a paywall, specifically tied to Google Workspace subscriptions that include advanced AI features (formerly marketed under the "Duet AI" name and since rebranded under Gemini). It is highly probable that the mobile iteration will inherit this monetization strategy. If this is the case, the immediate benefit will accrue only to customers already paying for premium tiers. The wider consumer or free-tier user base may have to wait significantly longer, or the feature might be permanently gated behind a subscription requirement.

A more complex question arises from the recent unveiling of similar technology on the Pixel hardware line, such as the touted "Voice Translate" tool integrated with phone calls on the latest Pixel devices. This raises the prospect of device exclusivity. Will Google choose to deliver the Meet mobile translation capability exclusively through the AI accelerator in the Tensor chips that power flagship Pixel phones, leveraging specialized hardware acceleration? While the current teardown did not reveal explicit Pixel-only flags, Google has a history of prioritizing cutting-edge, resource-intensive features for its own hardware ecosystem first. If this occurs, the universal translator’s reach will be artificially constrained, frustrating users on other high-end Android devices. A comprehensive, cross-device rollout would be more beneficial for market penetration but demands greater software optimization across a fragmented hardware landscape.

Future Impact: Beyond Meetings

The successful migration of real-time speech translation to mobile video conferencing sets the stage for broader applications that blur the lines between digital and physical interaction.

Consider the near-future integration with Augmented Reality (AR) platforms. If Meet can reliably translate spoken audio streams on a smartphone, that same underlying model can power AR glasses or mobile AR overlays. Imagine an individual wearing AR glasses in a physical setting—a conference hall, an international airport, or a factory floor—where foreign speakers’ words are instantly transcribed and translated as floating captions near their mouths. This moves beyond the contained environment of a video call into truly pervasive, context-aware communication assistance.

Moreover, this technology drives fundamental improvements in AI model training. Every successful mobile deployment provides millions of new, real-world data points across diverse accents, acoustic environments, and speaking styles. This feedback loop accelerates the development of more nuanced, culturally aware translation models that can handle colloquialisms and idiomatic expressions with greater accuracy, pushing the technology closer to the flawless fluency depicted in science fiction. The immediate goal may be clearer meeting communication, but the long-term trajectory points toward the complete erosion of everyday language barriers, transforming global education, tourism, and personal connection.

In conclusion, the quiet discovery within a recent Android beta build for Google Meet of a dormant, yet accessible, settings option for speech translation is far more than a minor curiosity. It is a strong indicator that one of the most highly anticipated AI features is nearing its critical transition from desktop novelty to a portable, essential communication tool. The industry watches closely to see how Google navigates the final hurdles of efficiency, distribution strategy, and potential hardware tie-ins as it prepares to bring a genuine universal translator to the mobile world.