The accelerating proliferation of synthetic audio content and sophisticated streaming manipulation tactics has forced digital music platforms to transition from defensive posturing to proactive technological warfare. In a significant industry pivot, Deezer, the French global music streaming service, has announced that it will commercialize and license its proprietary artificial intelligence (AI) detection engine to rival platforms and rights management organizations. This move transforms a competitive advantage into a shared industry standard, seeking to establish a unified front against the rising tide of AI-generated music fraud and content dilution that threatens the economic viability of human-centric music creation.

Deezer’s decision stems from internal data showing how quickly automated content is growing and how closely it is tied to fraud. The company, which initially deployed its detection system last year to tag and remove fully AI-generated tracks from user recommendations, now processes an average of 60,000 newly uploaded AI tracks daily. That is roughly triple the approximately 20,000 daily tracks observed in June of the preceding year, and it brings the total number of AI-identified songs in Deezer’s catalogue to 13.4 million. Crucially, the platform reports that 85% of streams associated with these fully AI-generated tracks are classified as fraudulent, which suggests that the primary purpose of this content is not listener consumption but systemic exploitation of the royalty distribution model.

The Economic Imperative: Stopping the Royalty Drain

The core objective of Deezer’s technology is two-fold: ensuring transparency for consumers and safeguarding the integrity of royalty pools for legitimate creators. The current architecture of most streaming services relies on a pro-rata payment system, where total monthly revenue is divided based on the proportion of streams each track receives. When fraudulent AI tracks use bot networks or manipulation schemes to rack up billions of fake streams, as evidenced in recent legal cases, they effectively dilute the revenue share allocated to every human artist, songwriter, and publisher.
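The dilution effect of a pro-rata pool can be shown with a few lines of arithmetic. The sketch below is illustrative only: the pool size, track names, and stream counts are hypothetical figures chosen for round numbers, not data from Deezer or any other service.

```python
def pro_rata_payouts(revenue_pool, streams_by_track):
    """Split a revenue pool in proportion to each track's share of total streams."""
    total_streams = sum(streams_by_track.values())
    return {
        track: revenue_pool * streams / total_streams
        for track, streams in streams_by_track.items()
    }


# Hypothetical monthly pool and stream counts (assumptions for illustration).
pool = 1_000_000.0
legitimate = {"human_track_a": 600_000, "human_track_b": 400_000}

before = pro_rata_payouts(pool, legitimate)

# Add a track whose streams come from a bot network; the pool itself does not grow.
with_fraud = dict(legitimate, bot_farm_track=250_000)
after = pro_rata_payouts(pool, with_fraud)

for track in legitimate:
    lost = before[track] - after[track]
    print(f"{track}: {before[track]:,.0f} -> {after[track]:,.0f} (lost {lost:,.0f})")
print(f"bot_farm_track captures {after['bot_farm_track']:,.0f}")
```

Because the pool is fixed, every payout to the bot-driven track comes directly out of the shares of the other tracks, even though none of them lost a single listener.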

The high-stakes nature of this financial manipulation was starkly illustrated by a 2024 charge brought by the U.S. Department of Justice against a North Carolina musician. This individual was accused of generating AI songs and employing sophisticated bots to accrue billions of streams, resulting in the theft of over $10 million in royalties. Such incidents reveal that the issue is not merely one of aesthetic preference or content saturation, but a large-scale criminal enterprise exploiting systemic vulnerabilities in digital distribution infrastructure.

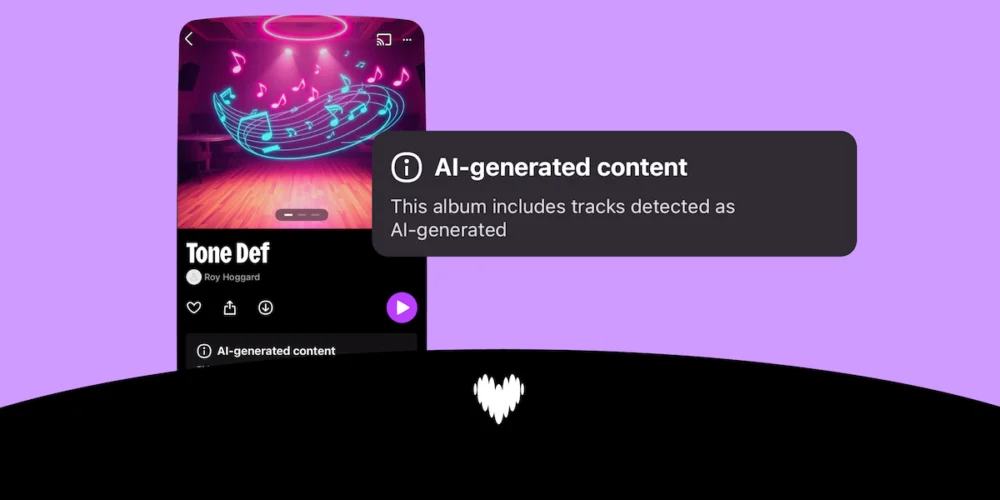

Deezer’s AI detection tool is designed to disrupt this economic cycle at the source. Once a track is positively identified as fully AI-generated, it is immediately excluded from algorithmic and editorial recommendation systems, effectively starving the track of organic discovery. More importantly, the tool demonetizes the content, permanently excluding it from the royalty calculation pool. This action directly tackles the financial incentive underpinning the fraud.

Technical Precision in an Era of Deepfakes

The efficacy of any content defense tool rests largely on its accuracy. Generic filters tend to fail in both directions: they produce false positives (penalizing human artists) and, because newer generative models can circumvent them, false negatives (allowing fraud to pass). Deezer claims its deep learning model achieves an accuracy rate of 99.8%, a figure that, if consistently validated across different operating environments, would represent a major technical achievement.
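A single accuracy figure does not say how the remaining errors split between false positives and false negatives. The sketch below illustrates this with assumed numbers: the 60,000 daily AI tracks come from Deezer's reported figure, while the 100,000 daily human uploads and both sets of error rates are hypothetical values picked so that each scenario lands on the same 99.8% overall accuracy.

```python
def confusion_counts(n_ai, n_human, false_negative_rate, false_positive_rate):
    """Expected confusion-matrix counts for a detector with the given error rates."""
    fn = n_ai * false_negative_rate      # AI tracks that slip through
    fp = n_human * false_positive_rate   # human tracks wrongly flagged
    return {"tp": n_ai - fn, "fp": fp, "tn": n_human - fp, "fn": fn}


def accuracy(c):
    """Share of all tracks classified correctly."""
    return (c["tp"] + c["tn"]) / sum(c.values())


N_AI = 60_000       # daily AI uploads reported by Deezer
N_HUMAN = 100_000   # hypothetical daily human uploads (assumption)

# Scenario 1: errors spread evenly across both classes (assumed rates).
balanced = confusion_counts(N_AI, N_HUMAN, 0.002, 0.002)
# Scenario 2: the same number of errors, all falling on human artists (assumed rates).
skewed = confusion_counts(N_AI, N_HUMAN, 0.0, 0.0032)

for name, c in (("balanced", balanced), ("skewed", skewed)):
    print(f"{name}: accuracy={accuracy(c):.3f}, "
          f"human tracks wrongly flagged per day={c['fp']:.0f}, "
          f"AI tracks missed per day={c['fn']:.0f}")
```

Both scenarios report the same headline accuracy, yet the number of human artists penalized each day differs, which is why validation across operating environments matters as much as the headline figure.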

The technology is reportedly capable of identifying the distinct digital signatures left by major generative audio models, including industry leaders like Suno and Udio. This level of granular detection is critical because generative models often embed specific artifacts, spectral distortions, or unique metadata patterns that distinguish them from human-produced audio, even when the resulting music sounds highly polished. By detecting the creation source rather than just suspicious listening patterns, the tool provides robust, auditable evidence for exclusion.

Alexis Lanternier, CEO of Deezer, noted that the industry response to the availability of the tool has been enthusiastic, with several organizations already conducting successful tests. One of the first confirmed adopters is Sacem, the influential French music creators and publishers society, which represents over 300,000 artists, including high-profile electronic musicians like David Guetta and DJ Snake. The adoption by a powerful collective management organization signals that the crisis is now viewed as an administrative and legal challenge requiring advanced technological countermeasures, extending beyond the domain of streaming platforms themselves.

Deezer has not disclosed a standardized pricing model, indicating instead that the cost depends on the scope and type of deal negotiated with each adopter. Even so, the commercial availability suggests a strategic shift toward establishing the company as a key infrastructure provider in the digital rights management and content authentication space.

Industry Implications: A Fractured Response to AI

Deezer’s decision to share its defense infrastructure arrives at a critical juncture where the music industry remains deeply divided on how to manage generative AI.

On one side stand platforms and organizations prioritizing the defense of human creativity and catalogue cleanliness. Independent platforms like Bandcamp have taken the most extreme measure, banning all AI-generated music entirely. Spotify, the market leader, has also updated its policies, focusing on labeling AI usage, clamping down on spam, and explicitly forbidding unauthorized voice cloning. These actions reflect a growing consensus that unchecked AI content threatens the integrity of the listening experience and the fairness of compensation.

On the opposing side, major record labels—Universal Music Group (UMG) and Warner Music Group (WMG)—have adopted a strategy of engagement and monetization. Both groups have recently settled lawsuits and signed strategic licensing agreements with key AI startups like Suno and Udio. These deals provide the AI companies with legal access to vast, copyrighted music catalogs for model training, ensuring that artists and songwriters receive compensation when their works are used to build the next generation of creative tools.

This schism creates a complex landscape: AI generation is being simultaneously legitimized and embraced by rights holders for controlled, licensed creation, while the resulting unlicensed and fraudulent output is being aggressively fought by streaming services. Deezer’s licensing of its detection tool provides a technological bridge for those platforms committed to the anti-fraud position. It could standardize the technical criteria for what constitutes unacceptable content, potentially accelerating industry-wide adoption of content authentication mandates.

Expert Analysis: The Threat of the Digital Landfill

The exponential growth in track uploads—largely driven by non-human content—presents a profound crisis of content discoverability. Industry experts frequently refer to this phenomenon as the creation of a "digital landfill." Streaming algorithms, designed to surface relevant content from millions of tracks, begin to struggle when the ratio of low-quality, fraudulent, or synthetic content skyrockets.

The ethical argument underpinning Deezer’s move is the preservation of human creative capital. If algorithms become overwhelmed by 60,000 new AI-generated tracks a day, the ability of a genuinely original, human-created track to be discovered, recommended, and monetized diminishes significantly. This threatens to discourage independent artists, who rely heavily on streaming revenue and algorithmic discovery, leading to a long-term erosion of artistic diversity and quality.

Furthermore, the fraud identified by Deezer, where 85% of streams on fully AI-generated tracks are classified as fraudulent, highlights the inadequacy of existing content moderation systems. Traditional anti-fraud measures often look for suspicious stream patterns (e.g., rapid, high-volume streams from a single location). AI detection, however, is a layer of semantic and technical analysis that verifies the provenance of the content itself. This shift in focus, from analyzing listener behavior to verifying content authenticity, is vital for long-term platform health.
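To make the contrast concrete, a traditional behavioral check of the kind described above can be sketched in a few lines. This is a minimal illustration, not Deezer's or any platform's actual method: the event format, the one-hour window, the threshold of 100 streams, and all identifiers are assumptions. Note that it inspects only listening behavior and never looks at the audio, which is exactly the gap provenance-based detection is meant to fill.

```python
from collections import Counter


def flag_suspicious(events, window_seconds=3600, max_streams_per_window=100):
    """Flag (track, location) pairs whose stream count in any fixed time window
    exceeds a threshold. Each event is (track_id, location, unix_timestamp)."""
    counts = Counter()
    for track_id, location, timestamp in events:
        bucket = timestamp // window_seconds  # index of the fixed window
        counts[(track_id, location, bucket)] += 1
    flagged = {
        (track_id, location)
        for (track_id, location, _bucket), n in counts.items()
        if n > max_streams_per_window
    }
    return sorted(flagged)


# Hypothetical events: one track hammered from a single location,
# another streamed once each from many different locations.
events = [("t1", "loc-A", i * 20) for i in range(150)]
events += [("t2", f"loc-{i}", i * 20) for i in range(50)]

flagged = flag_suspicious(events)
print(flagged)
```

A fraud operation that spreads its bot traffic across many locations would pass this check untouched, whereas a detector that examines the track itself does not depend on how the streams are distributed.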

For collective management organizations like Sacem, a tool with the claimed 99.8% accuracy supports their fiduciary duty to their members. They must ensure that the pooled royalties they distribute are not disproportionately captured by fraudulent entities. By integrating Deezer’s system, these organizations gain a powerful mechanism to audit and cleanse royalty statements before distribution, helping to secure fair compensation for human labor.

The Future of Authentication and Digital Rights

Deezer’s commercialization of its AI detection system sets a compelling precedent for cross-platform cooperation in defense of digital ecosystems. The future of the digital content space will likely necessitate a multi-layered authentication approach that goes beyond reactive detection.

One anticipated trend is the mandatory implementation of digital watermarking by generative AI developers. If models like Suno and Udio were legally required to embed an immutable, traceable watermark into every audio file they produce—identifying the track as machine-generated—detection would become significantly simpler and more robust. However, detection tools like Deezer’s will remain critical for catching content that has been passed through adversarial attacks, modified to remove watermarks, or generated by models operating outside major regulatory frameworks.

The current landscape is characterized by competitive silos, yet the pervasive nature of AI fraud demands a collaborative infrastructural solution. By offering its tool, Deezer is pushing the industry toward a baseline technical standard for content verification. This necessary step acknowledges that in the digital age, fighting systemic fraud requires shared technological investment, even among direct competitors.

Ultimately, the long-term impact of this decision will be measured by the rate of adoption among major streaming services. Should Spotify, Apple Music, or Amazon Music elect to integrate this technology, it would establish a common defense mechanism across the majority of the global streaming market, shifting the balance of power back toward human artists and establishing a clearer demarcation between legitimate content and synthesized exploitation. The fight against AI fraud is fundamentally a fight for the sustainable economics of creativity, and Deezer has just armed the opposition with a formidable new weapon.