Short-form video creation is changing quickly as platforms build generative artificial intelligence into their core editing workflows. YouTube, the dominant force in long-form content and a fast-growing contender in vertical video with Shorts, is piloting a set of experimental tools that change how creators interact with existing content. The initiative moves beyond simple splicing and sound overlays into direct, AI-powered visual manipulation, a shift that puts high-level video effects within reach of mainstream users.

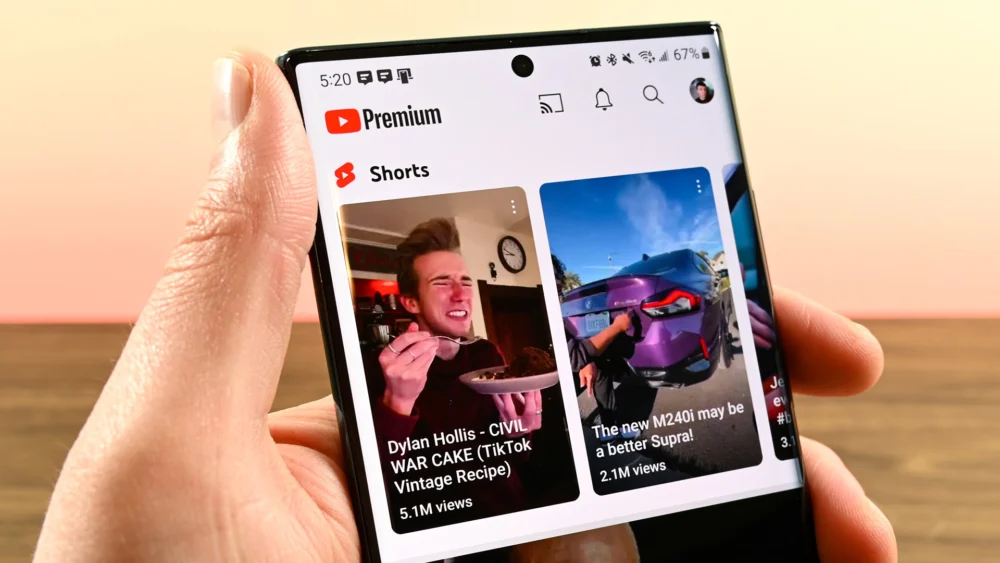

This ongoing experiment, currently limited to a small cohort of English-speaking users, introduces two powerful new capabilities within the established Shorts Remix framework: "Add object" and "Reimagine." These features build upon the existing Remix functionality, which already allows users to leverage audio tracks or video segments from other creators’ publicly available Shorts. However, the introduction of generative AI capabilities represents a leap forward, positioning YouTube not just as a content library, but as a dynamic, AI-assisted production studio accessible directly within the mobile application.

To fully appreciate the scope of this development, one must first understand the context of the "Remix" ecosystem. Remixing, which draws inspiration from the viral success of TikTok’s Duet and Stitch features, is crucial for fostering a participatory culture on YouTube Shorts. It encourages continuity, commentary, and iterative creativity by making it frictionless to sample and respond to popular content. Prior iterations focused on composition, such as adding your voice over someone else’s video or placing two videos side by side. The new AI tools shift the focus from composition to transformation.

The "Add object" feature, as described by Google, lets users insert new visual elements into an existing Short, working with a segment of up to eight seconds from the original clip. Crucially, the insertion is not a pasted static image: the tool uses AI to generate and integrate new items based on either suggested prompts or custom text entered by the creator. Imagine taking a clip of someone walking down a street and adding a photorealistic, animated dragon soaring above the skyline, all without leaving the Shorts editor. This capability blurs the line between standard video editing and visual effects (VFX) work, traditionally the domain of high-end desktop software.

Even more transformative is the "Reimagine" function. This tool takes a single frame from the source Short and uses it, together with the user’s prompt, to generate an entirely new, short video sequence. If a user finds a compelling still frame in an original Short, "Reimagine" can extrapolate that moment, generating novel motion and visual continuation based on textual direction. YouTube has not said which models power the feature, but the behavior resembles the image-to-video generation offered by popular text-to-image and text-to-video AI platforms, here tightly integrated into the YouTube content graph. A user could theoretically take a mundane still frame from a travel vlog and prompt the AI to "Reimagine this scene as a vibrant cyberpunk metropolis," receiving a short, animated clip as output.

Deploying such tools at scale on a platform as vast as YouTube has significant implications for the industry. Firstly, it lowers the barrier to entry for advanced creative expression. Creators no longer need specialized knowledge of 3D modeling, motion tracking, or complex compositing software. The complexity is abstracted away into simple text prompts that turn creative intent directly into video assets. This democratizing effect could lead to an explosion of highly imaginative, yet technically simple, derivative content.

Secondly, this experiment directly addresses the content velocity demands of the short-form market. In the race against TikTok and Instagram Reels, sheer volume and novelty are paramount. By providing tools that allow creators to generate high-production-value additions almost instantaneously, YouTube can encourage faster content turnover and maintain user engagement by constantly refreshing the creative palette available to its user base.

However, the integration of generative AI into remixing raises substantial intellectual property and attribution challenges, which YouTube appears to be proactively addressing. The announcement emphasizes that any content generated via "Add object" or "Reimagine" will retain a mandatory link back to the original source material. This mechanism is vital for maintaining the integrity of YouTube’s copyright and fair use framework, ensuring that the derivative work acknowledges its foundation. Furthermore, creators retain the explicit right to opt out, allowing them to exclude their content from being used as a source for these new AI-driven remixing tools. This control mechanism is crucial for maintaining creator trust and addressing potential concerns about unauthorized transformation of their original artistic output.
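The attribution and opt-out rules described above can be read as a simple eligibility check. The following is a minimal sketch of that logic, not YouTube’s implementation: every name in it (`SourceShort`, `AiRemix`, `create_ai_remix`, the `allows_ai_remix` flag, and the tool identifiers) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical identifiers for the two experimental tools.
ALLOWED_TOOLS = {"add_object", "reimagine"}


@dataclass(frozen=True)
class SourceShort:
    """An original Short that someone may want to remix (illustrative model)."""
    video_id: str
    is_public: bool
    allows_ai_remix: bool  # assumed flag: False once the creator opts out


@dataclass(frozen=True)
class AiRemix:
    """A derivative clip; the link to the source is a required field."""
    tool: str
    prompt: str
    source_video_id: str


def create_ai_remix(source: SourceShort, tool: str, prompt: str) -> AiRemix:
    """Return a remix record only if the source is eligible."""
    if tool not in ALLOWED_TOOLS:
        raise ValueError(f"unknown tool: {tool}")
    if not source.is_public:
        raise PermissionError("source Short is not publicly available")
    if not source.allows_ai_remix:
        raise PermissionError("creator has opted out of AI remixing")
    # Attribution cannot be omitted: the source ID is always recorded.
    return AiRemix(tool=tool, prompt=prompt, source_video_id=source.video_id)
```

In this sketch, calling `create_ai_remix(SourceShort("abc123", True, True), "reimagine", "cyberpunk city")` yields a record whose `source_video_id` is `"abc123"`, while the same call against a source with `allows_ai_remix=False` raises `PermissionError`. Making the source link a required field, rather than an optional one, mirrors the article’s point that attribution is mandatory.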

The early detection of these tools by users, as noted by community reports on platforms like Reddit, underscores the intense scrutiny under which YouTube operates. In the fast-moving world of AI integration, leaks or early sightings are common, but they also serve as valuable, real-world stress tests for the rollout strategy. The fact that the features were spotted even before the official announcement suggests a phased, perhaps slightly uneven, deployment across user segments.

Analytically, these tools signal YouTube’s strategic commitment to embedding generative AI deeply within its ecosystem, moving from exploration to practical application. While companies like Meta have focused on AI filters and effects, YouTube’s approach targets the core mechanics of content iteration: audio and visual sampling. This is a calculated move to ensure that as the technology matures, YouTube’s creator base is already fluent in using these new modalities.

The models behind "Add object" and "Reimagine" were presumably trained on vast datasets, potentially including proprietary YouTube content, although specifics remain undisclosed. The ability to insert objects with spatial awareness (implied by "insert items into a scene") suggests some form of scene understanding or depth mapping, even if simplified for mobile use. "Reimagine," which works from a single frame, must generate temporally coherent video: the AI has to invent motion and context that plausibly follow or precede that static moment.

Looking toward future trends, this experiment sets a precedent for what might become standard features across the entire platform. If these AI remix tools prove successful in driving engagement within Shorts, it is highly probable that similar, perhaps more advanced, versions will eventually migrate to the standard YouTube editor. We could see future iterations allowing users to "Reimagine" entire long-form videos into different styles (e.g., turning a documentary into an animated short), provided the original creator opts in.

Furthermore, this push forces a necessary conversation about creative ownership in the age of generative media. If an AI generates a novel visual sequence based on a user prompt applied to another creator’s frame, where does the true authorship lie? YouTube’s current attribution model attempts to solve this by anchoring back to the original source, treating the AI output as an advanced form of sampling or transformative fair use. The success of this model will be closely watched by legal experts and intellectual property advocates globally.

The adoption rate will be a key metric. While early adopters might be enthusiastic, widespread adoption depends on the quality, speed, and ease of use of the generated outputs. If the AI consistently produces artifacts, unnatural lighting, or poor integration, creators will revert to traditional editing methods. Conversely, if the results are indistinguishable from professional VFX work, the platform could see an immediate, massive influx of visually stunning, yet easily produced, Shorts.

In summary, YouTube’s testing of "Add object" and "Reimagine" within Shorts Remix is not merely an incremental update; it is a strategic deployment of accessible generative AI aimed at fostering a new layer of participatory creativity. It reinforces the platform’s push for short-form video dominance and extends what the average mobile creator can technically achieve, while navigating the thorny issues of attribution and content control. If the tools succeed, they could reset baseline expectations for visual quality and creative augmentation in online video.