The integration of Artificial Intelligence into daily professional workflows has transitioned from a fringe experiment to a ubiquitous reality within modern organizations. Virtually every employee now leverages some form of AI tool, whether for drafting emails, summarizing documents, generating code, or analyzing data. This rapid, decentralized adoption has fundamentally shifted the mandate for IT and security departments. The critical question is no longer if AI should be permitted, but rather, how this rapidly expanding ecosystem of applications can be effectively secured and governed. This transition represents a significant, often underestimated, operational challenge for enterprise security teams.

The velocity of innovation in the AI landscape means that new tools and novel integrations are introduced daily, frequently bypassing traditional procurement and security vetting processes. This creates an AI-specific variant of the "shadow IT" problem, often termed "Shadow AI." This hidden layer of unmanaged technology introduces significant vectors for data leakage, compliance violations, and intellectual property risk, as sensitive corporate data is often fed into third-party, externally controlled models. Managing this emergent risk profile demands a departure from reactive auditing toward continuous, automated oversight.

To effectively master this challenge, organizations require a sophisticated security framework capable of delivering persistent discovery, granular real-time monitoring, and anticipatory governance mechanisms. Crucially, this framework must achieve these objectives without necessitating the allocation of an entire dedicated team solely to the Sisyphean task of tracking down every novel AI utility. A solution that addresses the core friction points of visibility and enforcement is paramount.

Establishing the Foundational Inventory: Visibility at Day Zero

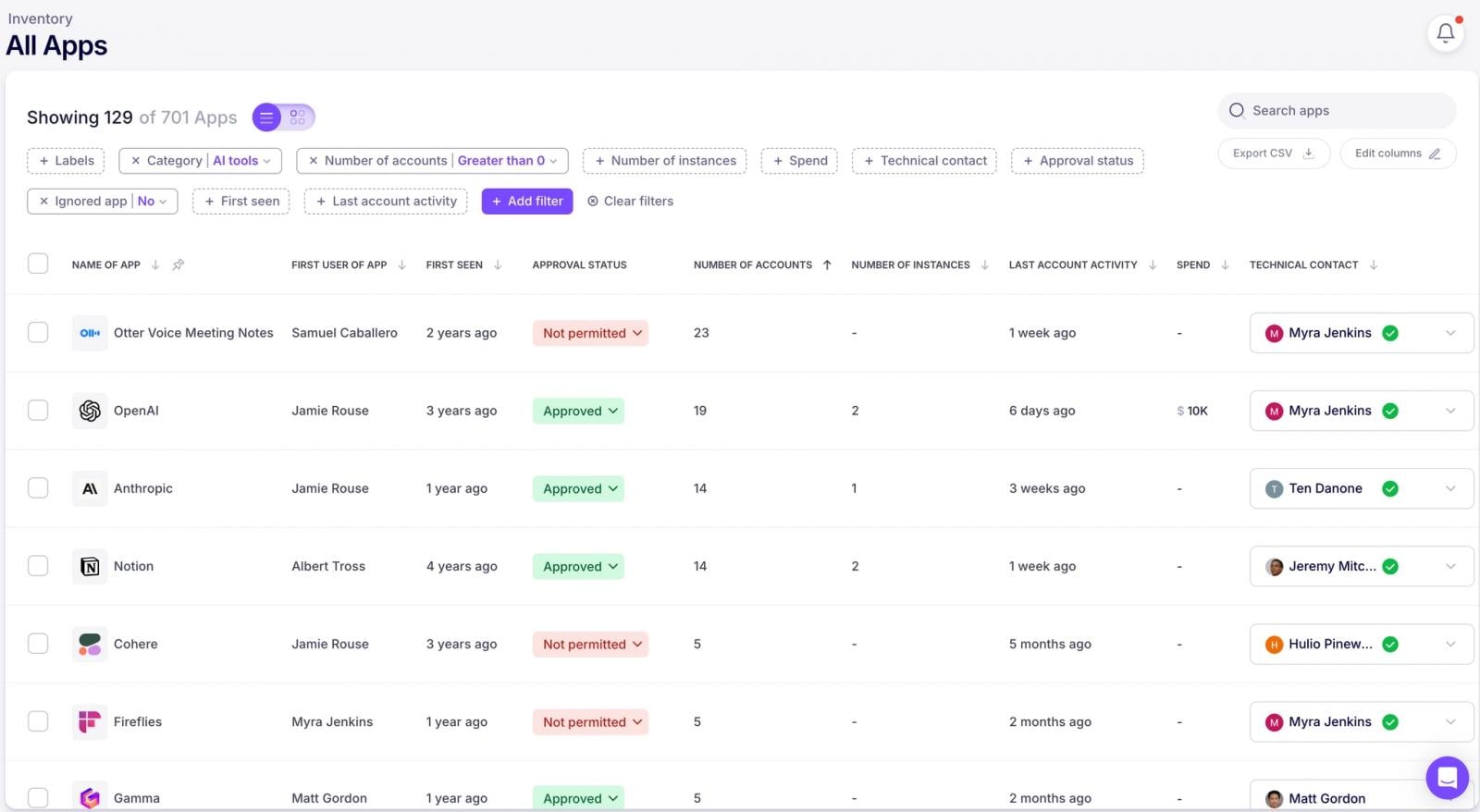

The fundamental axiom of cybersecurity remains: security cannot be applied to that which remains unseen. The initial, and perhaps most critical, step in addressing Shadow AI is achieving a comprehensive, immediate inventory of all existing AI applications and their associated user accounts within the corporate environment. This must be achieved without relying on the notoriously unreliable methods of employee surveys or manual self-reporting, which inevitably fail to capture the full scope of adoption.

The objective is to generate a complete, high-fidelity map of the organization’s AI footprint from the moment a governance solution is deployed. This "Day Zero" discovery must encompass every AI application and account provisioned to employees, including usage that predates the implementation of the new security protocol.

The Mechanics of Continuous AI Discovery

Achieving this level of persistent visibility necessitates leveraging existing, trusted authentication infrastructure. Advanced discovery mechanisms typically integrate through lightweight connectors established with the organization’s Identity Provider (IdP), such as Microsoft 365 or Google Workspace environments. The enablement of this integration is often remarkably swift, frequently requiring less than five minutes.

Once integrated, the security platform analyzes the digital exhaust generated by SaaS and AI providers, specifically the machine-generated notification emails they send (for example, messages from a provider's automated sender address). These automated communications are rich with metadata regarding account provisioning, security setting modifications, and password changes. By tapping into this stream of transactional signals, while adhering to privacy mandates by never storing the content of the emails themselves, the system can automatically map the adoption of new accounts and services across the entire workforce. Because past notifications can be analyzed as well as new ones, this passive ingestion of metadata yields a historical inventory of AI adoption up to the point of deployment and continues to surface AI sprawl as it occurs.
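The discovery logic described above can be illustrated with a minimal sketch. It assumes that only message metadata (sender, recipient, subject, date) is available, and every name in it is hypothetical: the sender-domain catalog, the subject keywords, and the `EmailMetadata` type are illustrative stand-ins, not any vendor's actual data model or detection rules.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical catalog mapping notification sender domains to AI apps.
AI_SENDER_DOMAINS = {"example-ai.test": "ExampleAI"}

# Assumed subject-line heuristics for account lifecycle events.
EVENT_KEYWORDS = {
    "welcome": "account_created",
    "verify your email": "account_created",
    "password": "password_changed",
}


@dataclass(frozen=True)
class EmailMetadata:
    """Metadata only; the message body is never read or stored."""
    sender: str
    recipient: str
    subject: str
    received: date


def classify(msg):
    """Return (app, user, event, date) for a recognized notification, else None."""
    domain = msg.sender.rsplit("@", 1)[-1].lower()
    app = AI_SENDER_DOMAINS.get(domain)
    if app is None:
        return None
    subject = msg.subject.lower()
    for keyword, event in EVENT_KEYWORDS.items():
        if keyword in subject:
            return (app, msg.recipient.lower(), event, msg.received)
    return None


def build_inventory(messages):
    """Map (app, user) to the earliest observed account-creation date."""
    inventory = {}
    for msg in messages:
        hit = classify(msg)
        if hit is None:
            continue
        app, user, event, when = hit
        if event != "account_created":
            continue
        key = (app, user)
        if key not in inventory or when < inventory[key]:
            inventory[key] = when
    return inventory
```

Keeping the earliest date per account is what lets older notifications contribute to a historical inventory rather than only reflecting activity after deployment.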

Deepening Visibility with Endpoint Context

While IdP integration reveals who has signed up for what, achieving true operational context often requires visibility at the point of interaction. Expanded oversight is achieved through the deployment of an endpoint-level agent, such as a specialized browser extension. This component provides immediate, actionable intelligence and triggers real-time alerts when high-risk behaviors are observed directly within the user’s workflow.

Beyond mere alerting, this endpoint capability enables proactive engagement through direct user communication—the "nudge." These contextual prompts can be delivered via the browser extension, or through integrated collaboration platforms like Slack, Teams, or email. Such nudges serve multiple governance functions: warning users about immediate risky actions, reinforcing established secure computing practices, guiding employees toward pre-approved, vetted alternatives, or requesting immediate clarification regarding the context of an unfamiliar or potentially unauthorized tool usage. This transforms security from a policing function into a real-time coaching mechanism.

Expert Analysis: Monitoring Data Exfiltration in Conversational AI

The sheer utility of generative AI models, such as ChatGPT, Gemini, or proprietary large language models (LLMs), drives employees to input vast quantities of internal information. This includes proprietary code, sensitive strategic documents, and operational data, often pasted directly into the chat interface. This interaction pattern represents a severe data exfiltration risk, because the inputs reside on external infrastructure and, depending on the provider's terms and settings, may be retained or used as training material.

Sophisticated security extensions are now engineered to monitor these active AI conversations. They employ techniques to detect the presence of sensitive data elements, including Personally Identifiable Information (PII), internal system secrets (API keys, credentials), and confidential financial figures, flagging them the moment they are entered.
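As a rough illustration of the detection step, the sketch below scans prompt text with a few regular expressions. It is a simplification under stated assumptions: the three patterns (an email address, an AWS-style access key ID, and a US Social Security number format) are illustrative choices, and production detectors would likely combine many more patterns with validation and contextual checks.

```python
import re

# Illustrative patterns only; real detectors are broader and more precise.
PATTERNS = {
    "email_address": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_sensitive(text):
    """Return (label, start, end) for each match, ordered by position.

    Only offsets are returned, so the detector's own output does not
    duplicate the sensitive value it found.
    """
    findings = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            findings.append((label, match.start(), match.end()))
    return sorted(findings, key=lambda finding: finding[1])
```

Returning offsets rather than matched strings is a deliberate choice in this sketch: alerts can point to the location of a finding without copying the secret into yet another system.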

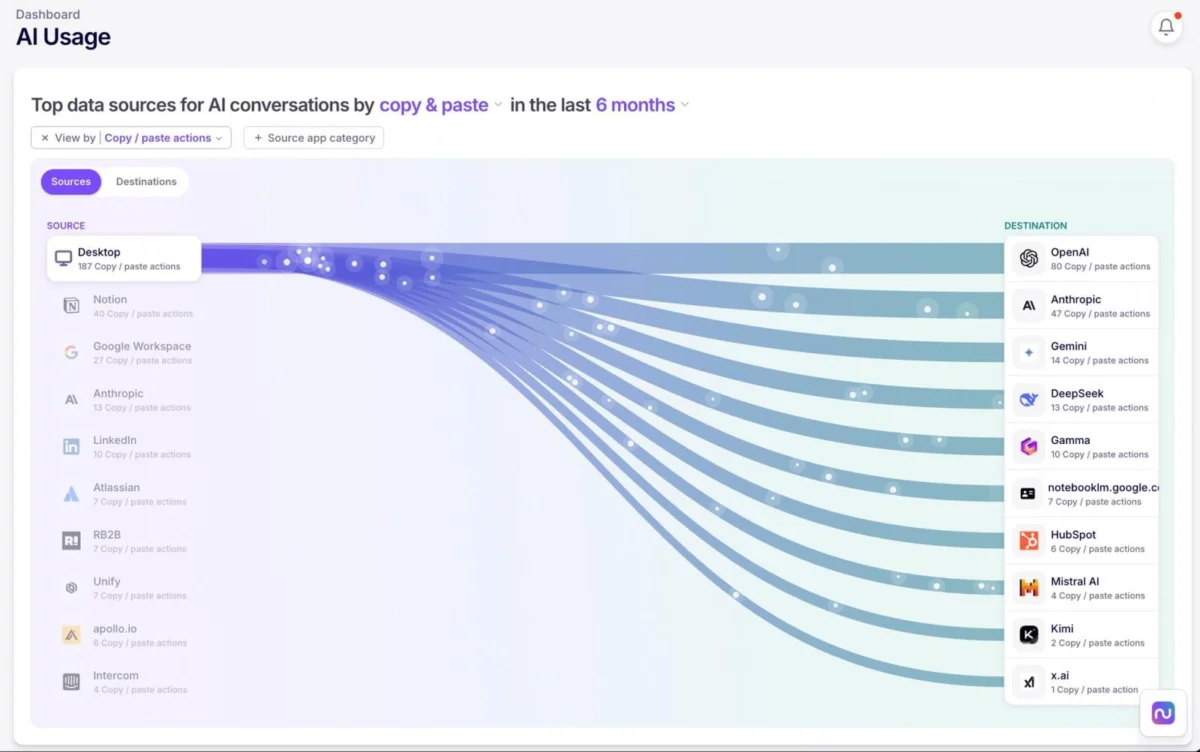

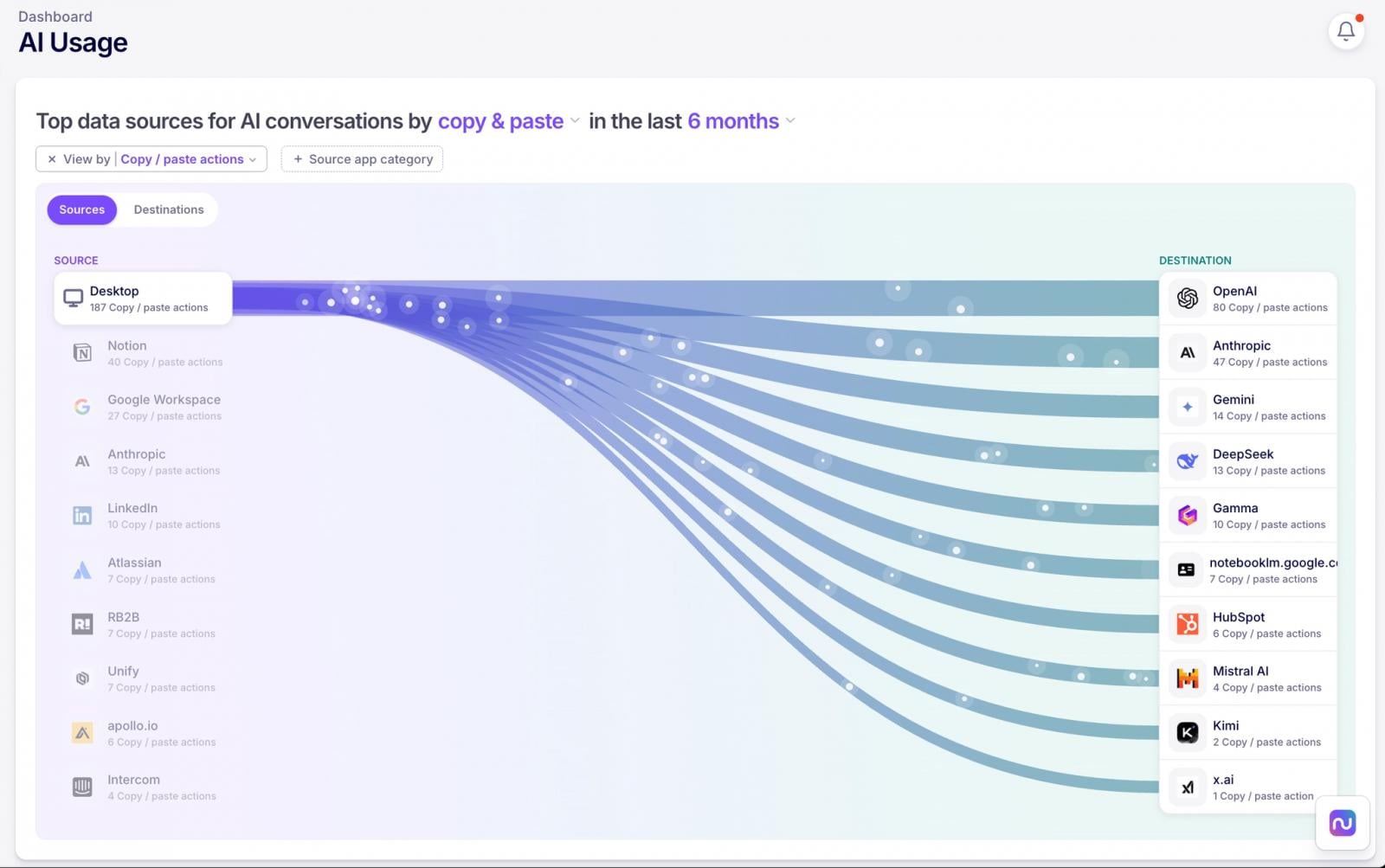

The scope of monitoring extends beyond simple text inputs. Crucially, detection must also encompass file uploads to these AI platforms. Effective solutions provide granular context: who uploaded what file, when the action occurred, and how it was executed. Furthermore, advanced platforms visualize the complex data flows established between internal enterprise systems (like cloud storage or CRM platforms) and these external AI utilities. Mapping these data pathways is essential for pinpointing the highest-leverage points of potential data leakage.

Industry Implications: Quantifying AI Adoption Trends

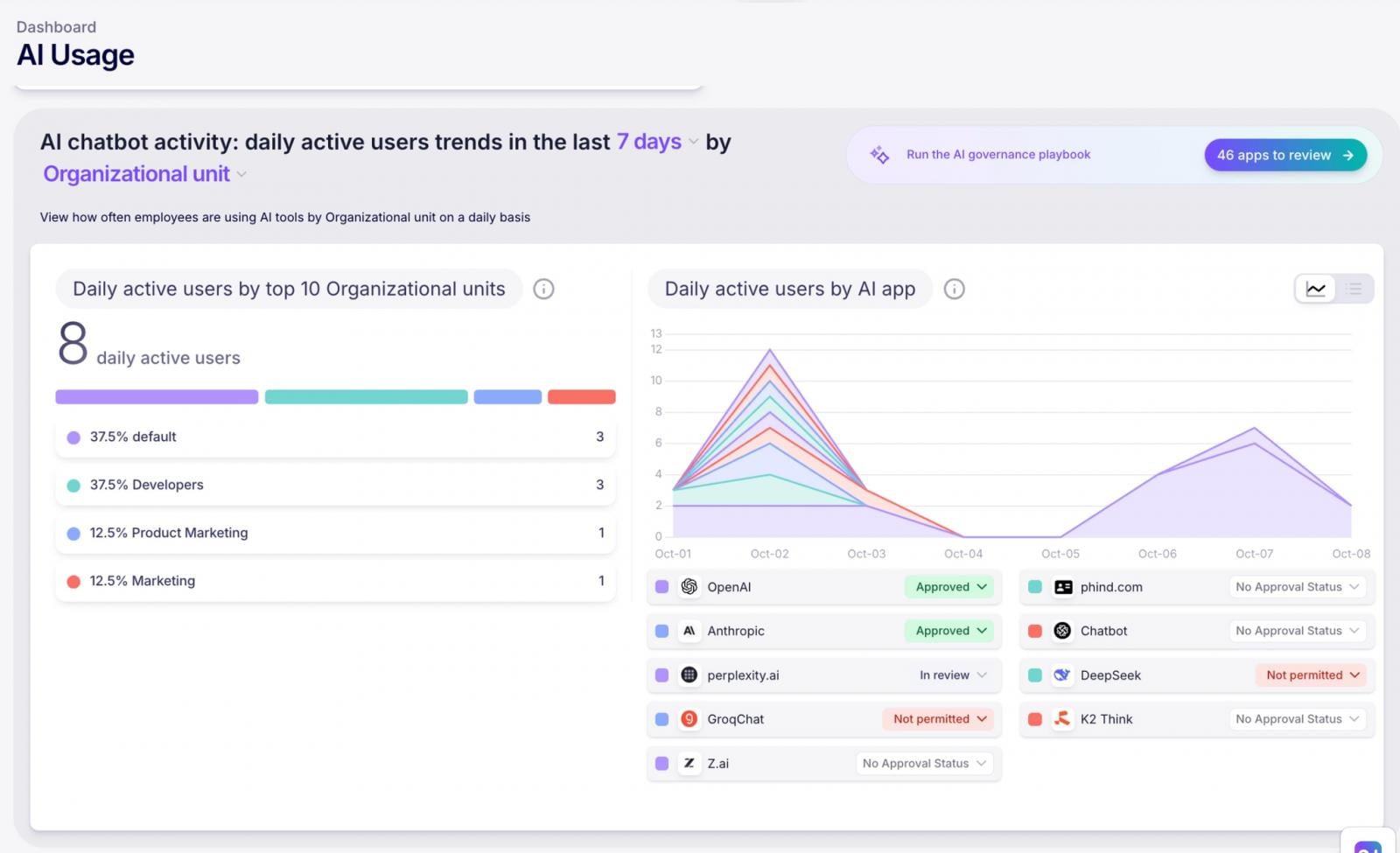

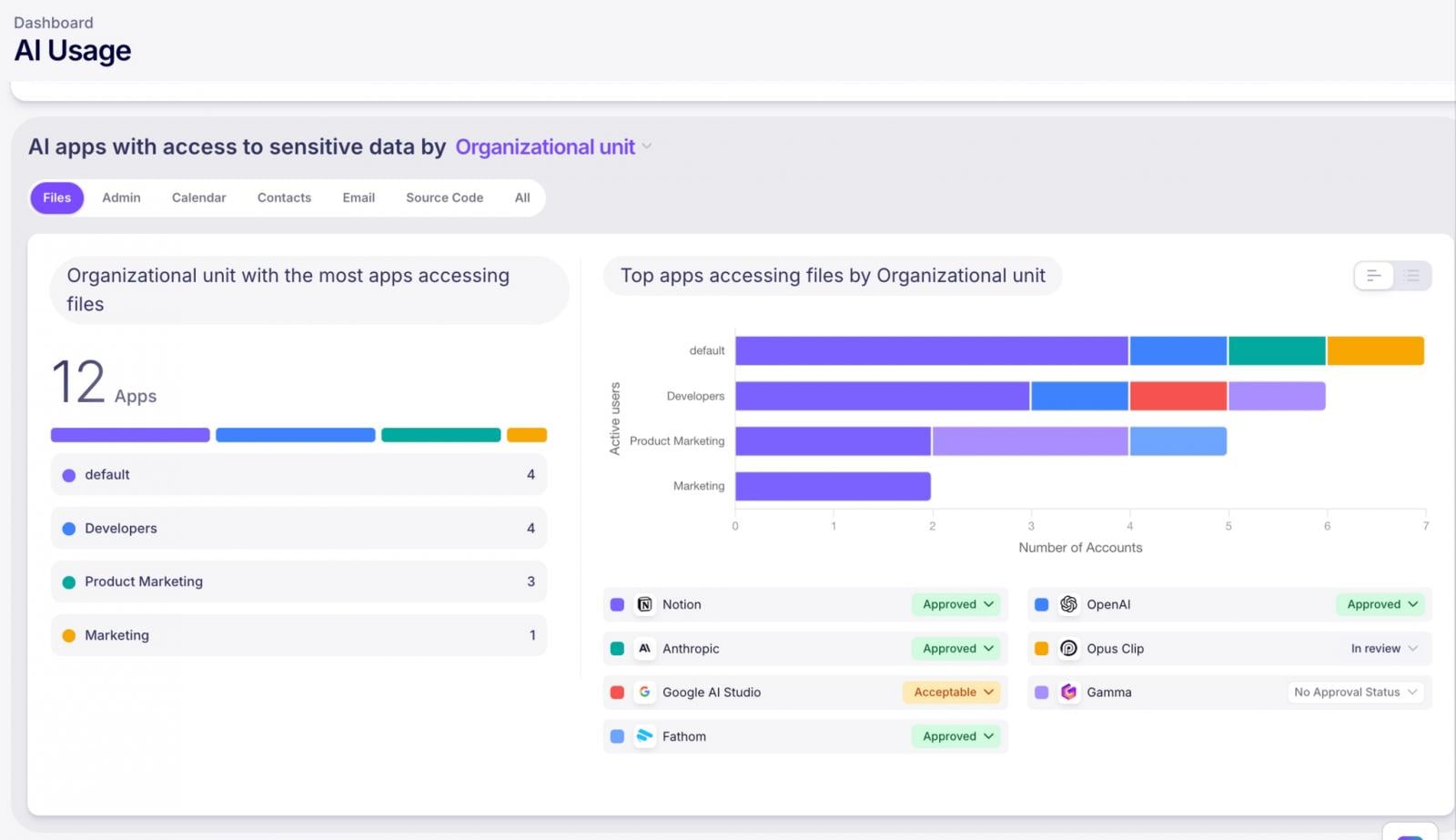

For strategic planning and resource allocation, security teams need empirical data on actual usage patterns, not anecdotal evidence. Understanding which departments are driving AI adoption and which specific tools are gaining traction is vital for focusing security investment where it matters most.

Effective governance platforms track AI utilization segmented by several critical dimensions: by approved versus unapproved application status, by specific vendor or model, and by organizational department. This provides the data necessary to move beyond blanket restrictions toward risk-based enforcement. Security efforts can then be concentrated on high-risk, high-usage tools, while simultaneously utilizing usage data to steer the broader workforce toward secure, sanctioned alternatives. This data-driven approach fosters alignment between innovation goals and security mandates.
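The segmentation described above amounts to simple aggregation over usage records. The following sketch assumes a hypothetical `UsageEvent` record with a department, an application name, and an approval flag; the field names and the idea of ranking unapproved "hotspots" are illustrative, not a description of any particular product.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    """Hypothetical usage record; field names are assumptions."""
    user: str
    department: str
    app: str
    approved: bool


def summarize(events):
    """Count usage by approval status, by application, and by department."""
    return {
        "status": Counter("approved" if e.approved else "unapproved" for e in events),
        "app": Counter(e.app for e in events),
        "department": Counter(e.department for e in events),
    }


def unapproved_hotspots(events, top_n=3):
    """Rank (department, app) pairs by unapproved usage, highest first."""
    counts = Counter((e.department, e.app) for e in events if not e.approved)
    return counts.most_common(top_n)
```

A ranking like `unapproved_hotspots` is one way to decide where risk-based enforcement or a sanctioned alternative would have the most effect.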

The Critical Nexus: AI Access to Enterprise Data Assets

A significant, often overlooked, vector of risk stems from the integration permissions granted to AI services over existing enterprise Software-as-a-Service (SaaS) applications. Modern AI agents, plugins (e.g., Microsoft Copilot plugins), and third-party integrations frequently request broad data access via OAuth scopes or Model Context Protocol (MCP) server connections.

Security posture management requires maintaining a meticulous inventory of these inter-application trust relationships. This inventory must detail not only which AI tools are connected but, critically, the specific scope of data access they have been granted (e.g., read-only access to all emails vs. write access to code repositories). By cataloging these SaaS-to-AI integrations and their associated authorization scopes, organizations can systematically evaluate the risk exposure inherent in each connection and proactively revoke over-permissive access rights, thereby mitigating the risk of unauthorized lateral data movement.
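One way to picture such an inventory is as a list of grants, each scored by its most permissive scope. The sketch below is built on assumptions: the scope names are placeholders rather than real provider scopes, and the risk weights and threshold are arbitrary values that an organization would set for itself.

```python
from dataclasses import dataclass

# Placeholder scope names with assumed risk weights (higher is riskier).
SCOPE_RISK = {
    "calendar.read": 1,
    "mail.read_all": 3,
    "files.read_all": 3,
    "repos.write": 4,
}
UNKNOWN_SCOPE_RISK = 2  # Assumption: unrecognized scopes get a moderate default.


@dataclass(frozen=True)
class Grant:
    """One AI-to-SaaS authorization; field names are hypothetical."""
    ai_app: str
    target_app: str
    user: str
    scopes: tuple


def grant_risk(grant):
    """Score a grant by its single riskiest scope; 0 if it has no scopes."""
    return max((SCOPE_RISK.get(s, UNKNOWN_SCOPE_RISK) for s in grant.scopes), default=0)


def revocation_candidates(grants, threshold=3):
    """Return grants at or above the threshold, riskiest first."""
    flagged = [g for g in grants if grant_risk(g) >= threshold]
    return sorted(flagged, key=grant_risk, reverse=True)
```

Scoring by the maximum rather than the sum reflects the idea that a single over-permissive scope is enough to warrant review, however many low-risk scopes accompany it.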

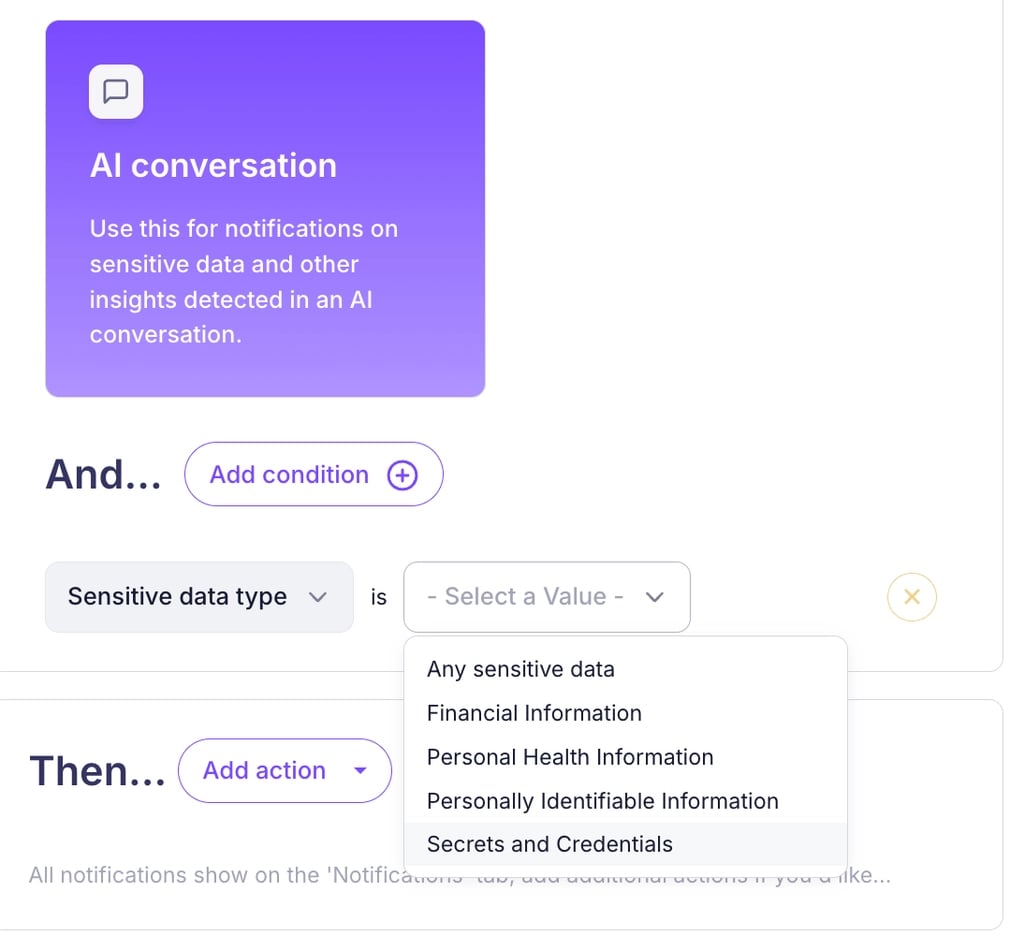

Future Impact and Trends: Proactive Alerting and Policy Enforcement

In the high-velocity environment of modern IT, continuous, manual vigilance is impractical. The security apparatus must be designed to operate autonomously. This requires robust, configurable alerting systems that function as an early warning network. Alerts should be triggered upon the emergence of any new, previously undiscovered AI tool, or immediately upon the detection of a policy violation, such as the sharing of sensitive data or the confirmed use of a blocklisted application.
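The alert triggers named above can be expressed as a small rule function. This is a sketch under assumptions: the `Event` type, the alert labels, and the idea of passing in sets of known and blocked applications are all hypothetical, chosen only to show how the three conditions might be evaluated together.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """Hypothetical observed activity; field names are assumptions."""
    user: str
    app: str
    sensitive_data: bool = False


def evaluate(event, known_apps, blocked_apps):
    """Return the alert labels raised by a single event."""
    alerts = []
    if event.app in blocked_apps:
        alerts.append("blocked_app_used")
    elif event.app not in known_apps:
        alerts.append("new_app_discovered")
    if event.sensitive_data:
        alerts.append("sensitive_data_shared")
    return alerts
```

An event can raise more than one alert, for instance when sensitive data is shared with an application that is also blocked, so the function returns a list rather than a single label.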

The ultimate goal of comprehensive governance is not merely detection, but behavioral modification. An established AI Acceptable Use Policy (AUP) is the legal and procedural baseline, and tracking employee acknowledgment of that policy is the first step. Policy enforcement, however, must extend into the active workspace.

The future of effective AI governance lies in embedding guardrails directly into the user workflow. By employing context-aware "nudges," organizations can reinforce policy compliance precisely at the moment of decision-making. This approach guides employees toward safer, approved AI utilization patterns in real time, effectively translating static policy documents into dynamic, executable security controls without relying on an adversarial relationship between security staff and the end-user community. This shift toward proactive, non-disruptive guidance defines the next generation of effective digital risk management.

Conclusion: Balancing Innovation with Data Integrity

The proliferation of powerful, easily accessible AI tools is an undeniable driver of productivity and innovation. The role of the security function is not to act as a barrier to this technological advancement, but rather to ensure that progress is achieved without compromising the foundational integrity of corporate data or exposing the organization to unacceptable regulatory liabilities. Nudge Security’s approach illustrates a pathway toward achieving this balance by offering the requisite visibility, granular control mechanisms, and automation necessary to govern AI usage effectively at scale, thereby safeguarding enterprise assets amidst this transformative technological wave.