When the history of the artificial intelligence revolution is written, the spotlight will inevitably fall on the silicon—the massive, heat-generating Graphics Processing Units (GPUs) that have become the most sought-after commodity in the global economy. Yet, beneath the headlines of H100s and Blackwell chips, a quieter transformation is taking place. Nvidia is no longer just a semiconductor company; it has effectively transformed into a systems and networking titan, constructing a multibillion-dollar business that is rapidly becoming as fundamental to the company’s identity as the processors themselves.

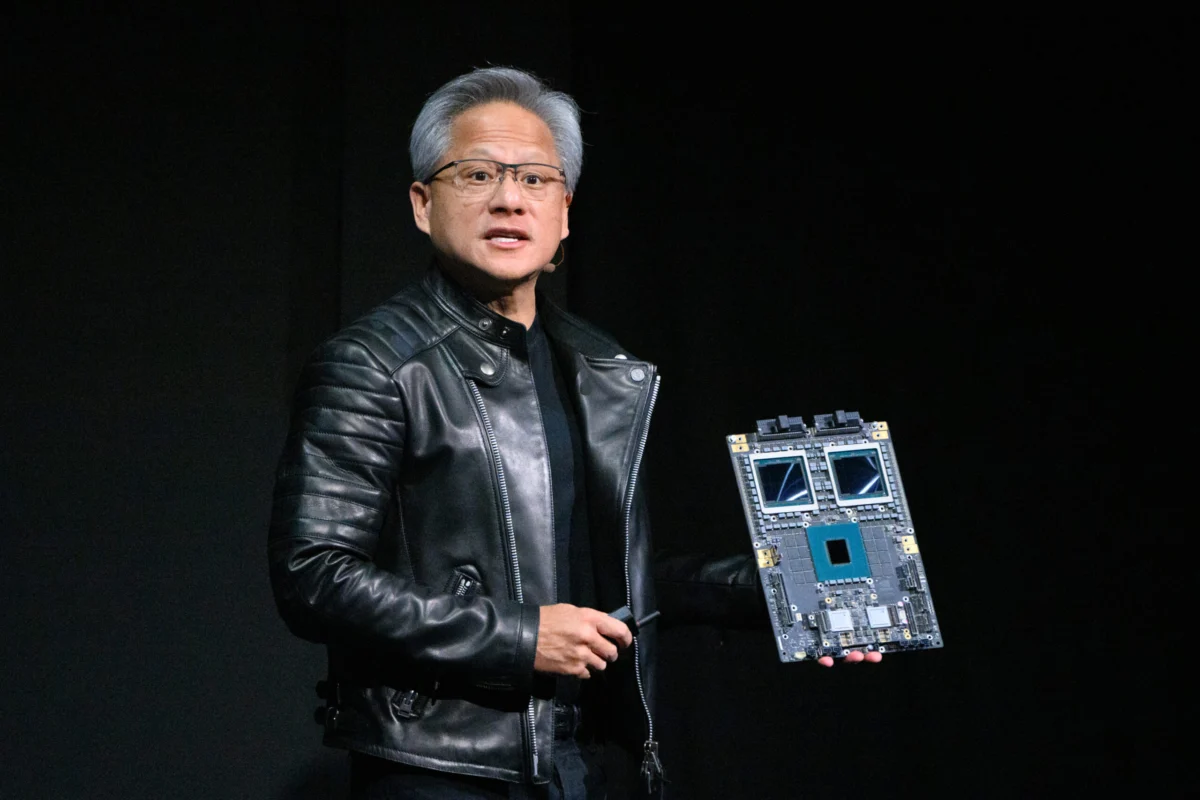

This strategic evolution did not happen by accident. Nvidia CEO Jensen Huang has built a career on making high-stakes bets years before the market recognizes their necessity. In 2010, long before the term "generative AI" entered the common lexicon, Huang pivoted the company toward AI-specific compute. A decade later, in 2020, he made a similarly prescient move: doubling down on data center networking with the $7 billion acquisition of Mellanox. At the time, the deal left some analysts scratching their heads. Today, it is recognized as the masterstroke that allowed Nvidia to own not just the "brain" of the AI factory, but its entire nervous system.

The financial results of this strategy are nothing short of staggering. In its most recent fiscal reports, Nvidia’s networking division emerged as the company’s second-largest revenue driver, eclipsed only by its massive compute segment. The division reported quarterly revenue of $11 billion, a year-over-year increase of 267%. To put that figure into perspective, Nvidia’s networking business now generates more revenue in a single quarter than Cisco’s entire networking segment typically does. For the full year, the division brought in over $31 billion, dwarfing the company’s original core business, gaming, which is now only about a third the size of the networking arm.
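A 267% year-over-year increase means the quarter came in at 3.67 times its year-ago level, not 2.67 times. The short Python sketch that follows backs out the implied year-ago figure using only the two numbers quoted above. The helper name is hypothetical, chosen for illustration.

```python
def implied_prior_value(current: float, yoy_growth_pct: float) -> float:
    """Back out the year-ago value implied by a current value and its YoY growth rate."""
    return current / (1 + yoy_growth_pct / 100)


# Figures quoted in the article, in billions of US dollars
networking_quarter = 11.0
yoy_growth_pct = 267.0

multiplier = 1 + yoy_growth_pct / 100  # 267% growth means 3.67x the year-ago level
year_ago = implied_prior_value(networking_quarter, yoy_growth_pct)
print(f"Growth multiplier: {multiplier:.2f}x")
print(f"Implied year-ago quarter: ${year_ago:.1f} billion")
```

In other words, the division's quarterly revenue went from roughly $3 billion to $11 billion in a single year.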

The Architecture of the AI Factory

To understand why networking has become a multibillion-dollar behemoth for Nvidia, one must understand the changing nature of the data center. In the traditional cloud era, networking was largely about "north-south" traffic—moving data from a server to a user’s device. In the AI era, the bottleneck is "east-west" traffic—the massive, high-speed communication between thousands of GPUs working in parallel to train a single large language model.

This is where Nvidia’s "AI Factory" concept comes into play. A modern AI supercomputer is not a collection of independent servers; it is a single, massive, distributed computer. Kevin Deierling, Senior Vice President of Networking at Nvidia, who joined the company via the Mellanox acquisition, notes that the industry’s perception of networking is often outdated. He argues that while people once viewed networking as a peripheral task, akin to connecting a printer, it has now become the "backplane" of the computer itself.
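A concrete example of east-west traffic comes from data-parallel training. Each GPU computes gradients on its own slice of data, and then all GPUs must sum those gradients with one another before the next step. One widely used way to do this is a ring all-reduce, which is among the algorithms implemented in NVIDIA's NCCL collective-communication library. The article does not say which algorithm any particular Nvidia system uses. The toy Python simulation below has hypothetical function and variable names. It counts how much data each GPU sends: 2(n-1)/n times the gradient size per step, approaching twice the buffer size. All of that traffic stays GPU-to-GPU inside the cluster.

```python
def ring_allreduce(buffers: list[list[float]]) -> tuple[list[list[float]], int]:
    """Simulate a ring all-reduce (sum) across len(buffers) GPUs.

    Returns every GPU's final buffer and the number of elements each GPU sent.
    Toy assumption: buffer length is divisible by the number of GPUs.
    """
    n = len(buffers)
    size = len(buffers[0])
    if size % n != 0:
        raise ValueError("toy version: buffer length must divide evenly into n chunks")
    chunk = size // n
    data = [list(b) for b in buffers]  # copy so the caller's lists are untouched
    sent_per_gpu = 0

    def span(c: int) -> range:
        """Indices of chunk c within a buffer."""
        return range(c * chunk, (c + 1) * chunk)

    def ring_step(chunk_for_gpu: list[int], accumulate: bool) -> None:
        # Snapshot what every GPU sends so all sends in a step happen "at once"
        outgoing = [(c, [data[i][k] for k in span(c)])
                    for i, c in enumerate(chunk_for_gpu)]
        for i, (c, values) in enumerate(outgoing):
            dst = (i + 1) % n  # each GPU talks only to its ring neighbour
            for k, v in zip(span(c), values):
                data[dst][k] = data[dst][k] + v if accumulate else v

    # Phase 1, reduce-scatter: afterwards GPU i holds the full sum of chunk (i + 1) % n
    for step in range(n - 1):
        ring_step([(i - step) % n for i in range(n)], accumulate=True)
        sent_per_gpu += chunk

    # Phase 2, all-gather: pass the fully reduced chunks around the ring
    for step in range(n - 1):
        ring_step([(i + 1 - step) % n for i in range(n)], accumulate=False)
        sent_per_gpu += chunk

    return data, sent_per_gpu


# Four simulated GPUs, each holding an 8-element "gradient" filled with its own rank
gpus = [[float(rank)] * 8 for rank in range(4)]
reduced, sent = ring_allreduce(gpus)
print(reduced[0])  # [6.0, 6.0, 6.0, 6.0, 6.0, 6.0, 6.0, 6.0], since 0 + 1 + 2 + 3 = 6
print(sent)        # 12 elements, i.e. 2 * (4 - 1) / 4 * 8
```

Every GPU ends with the same summed buffer, but only after a chain of neighbour-to-neighbour exchanges. A slow link anywhere in the ring therefore stalls the entire training step.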

Nvidia’s networking portfolio is designed to eliminate every possible microsecond of latency. This includes NVLink, a high-speed interconnect that allows GPUs on a single rack to communicate as if they were a single processor. It also includes InfiniBand, a specialized networking standard that offers the ultra-low latency required for massive-scale supercomputing. While InfiniBand was once a niche technology used primarily in academic research, Nvidia has successfully moved it into the commercial mainstream, making it the gold standard for AI clusters.
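The standard alpha-beta (Hockney) cost model shows why latency matters as much as raw bandwidth. In this model, sending m bytes costs a fixed start-up latency α plus m/β, where β is the link bandwidth. The latency and bandwidth values in the Python sketch below are illustrative assumptions, not published NVLink or InfiniBand specifications.

```python
def transfer_time_us(message_bytes: float, latency_us: float, bandwidth_gbps: float) -> float:
    """Alpha-beta (Hockney) estimate: fixed start-up latency plus bytes / bandwidth."""
    bytes_per_us = bandwidth_gbps * 1e9 / 8 / 1e6  # Gb/s -> bytes per microsecond
    return latency_us + message_bytes / bytes_per_us


# Hypothetical links with equal bandwidth but different start-up latency
BANDWIDTH_GBPS = 400.0
for label, latency_us in [("low-latency link", 1.0), ("higher-latency link", 10.0)]:
    small = transfer_time_us(4 * 1024, latency_us, BANDWIDTH_GBPS)  # 4 KiB message
    large = transfer_time_us(1e9, latency_us, BANDWIDTH_GBPS)      # 1 GB transfer
    print(f"{label}: 4 KiB in {small:.2f} us, 1 GB in {large:,.0f} us")
```

With these assumed numbers, the 4 KiB message takes about 1.1 µs on the first link and about 10.1 µs on the second, roughly nine times longer. The 1 GB transfer differs by well under 0.1%. For small messages the fixed latency term dominates; for large transfers, bandwidth does.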

However, Nvidia is not putting all its eggs in the InfiniBand basket. Recognizing that many enterprise customers prefer the ubiquity of Ethernet, the company launched Spectrum-X. This platform is designed specifically to bring AI-grade performance to Ethernet environments, offering the lossless networking and high-bandwidth efficiency that standard Ethernet setups traditionally lack. By offering both InfiniBand and Spectrum-X, Nvidia has effectively cornered the market for high-performance data center fabrics.

The Full-Stack Advantage

One of the primary reasons for Nvidia’s dominance is its "full-stack" philosophy. Unlike competitors who might sell a switch or a network interface card (NIC) as a standalone component, Nvidia sells an integrated ecosystem. This approach allows for deep optimization between the hardware and the software. When a customer buys an Nvidia cluster, the GPUs, the interconnects, the switches, and the software libraries (like Magnum IO) are all designed to work in perfect harmony.

This vertical integration creates a formidable moat. For a competitor like AMD or Intel to displace Nvidia, it cannot simply build a faster chip; it must also build a networking fabric that can match the throughput and reliability of Nvidia’s ecosystem. As Deierling points out, Nvidia doesn’t just sell the technology; it builds the entire compute stack and goes to market through a vast network of partners, ensuring that its architecture becomes the industry standard.

This strategy has fundamentally shifted the value proposition for data center operators. In the past, networking was a commodity expense to be minimized. Now, it is a strategic investment. If a $40,000 GPU keeps sitting idle, even for fractions of a second at a time, because it is waiting for data to arrive over a slow network, the return on investment for the entire data center plummets. By providing the fastest possible networking, Nvidia aims to keep its expensive GPUs as fully utilized as possible, lowering the total cost of ownership for the customer despite the premium price tag.
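The total-cost-of-ownership argument reduces to simple arithmetic. What matters is the cost per useful GPU-hour: hardware cost divided by the hours the GPU actually spends computing. In the Python sketch below, only the $40,000 GPU price comes from the text. The network cost per GPU, four-year lifetime, and utilization rates are hypothetical, and power, cooling, and staffing are ignored.

```python
HOURS_PER_YEAR = 365 * 24


def cost_per_useful_gpu_hour(gpu_price: float, network_cost_per_gpu: float,
                             lifetime_years: float, utilization: float) -> float:
    """Capital cost spread over only the hours a GPU spends doing useful work.

    Simplification: ignores power, cooling, facilities, and staffing.
    """
    useful_hours = lifetime_years * HOURS_PER_YEAR * utilization
    return (gpu_price + network_cost_per_gpu) / useful_hours


GPU_PRICE = 40_000   # the figure used in the text
LIFETIME_YEARS = 4   # hypothetical depreciation period

# Hypothetical scenarios: a cheaper fabric that leaves GPUs waiting more often,
# versus a pricier fabric that keeps them busier
cheap = cost_per_useful_gpu_hour(GPU_PRICE, 5_000, LIFETIME_YEARS, utilization=0.60)
premium = cost_per_useful_gpu_hour(GPU_PRICE, 10_000, LIFETIME_YEARS, utilization=0.80)
print(f"cheaper network:  ${cheap:.2f} per useful GPU-hour")
print(f"premium network:  ${premium:.2f} per useful GPU-hour")
```

Under these made-up inputs, paying twice as much for networking per GPU still lowers the cost of each useful GPU-hour (about $1.78 versus $2.14). The higher utilization spreads the same capital over more productive hours. Different assumptions can reverse the result; the sketch only shows the shape of the trade-off.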

The Rubin Era and the Future of Photonics

Nvidia shows no signs of slowing down. At the most recent GTC technology conference, Jensen Huang unveiled the "Rubin" platform, the successor to the Blackwell architecture. Rubin is more than just a new chip; it is a platform that includes six new chips designed to power the next generation of AI supercomputers.

The Rubin era will see even deeper integration of networking technologies. One of the most significant trends on the horizon is the shift toward co-packaged optics (CPO) and photonics. As data speeds continue to climb, traditional copper wiring is reaching its physical limits. Moving data via light—optics—is the next frontier. Nvidia’s latest announcements include more efficient Spectrum-X Ethernet Photonics switches, signaling a future where the line between the processor and the network becomes even more blurred.

Furthermore, Nvidia is expanding into new territories with the Inference Context Memory Storage platform. This technology addresses the growing need for fast data retrieval during the "inference" phase of AI—when a model is actually being used to answer questions or generate content. By optimizing how data is stored and moved during inference, Nvidia is ensuring its networking business remains relevant long after the initial training of a model is complete.
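Much of the "context" a large language model must keep and re-fetch during inference is its attention key-value (KV) cache. Assuming that is the kind of data such a platform is meant to hold, the Python sketch below estimates its size with the standard formula: two tensors (keys and values) per layer, per KV head, per token. The model shape and the helper name are hypothetical and do not describe any specific Nvidia product or customer model.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int) -> int:
    """Memory for one sequence's attention key-value cache.

    Factor of 2: one key vector and one value vector per KV head, per layer, per token.
    """
    return 2 * num_layers * num_kv_heads * head_dim * context_tokens * bytes_per_value


# Hypothetical model shape (not any specific product): 80 layers,
# 8 KV heads of dimension 128, 16-bit values, and a 128K-token context
per_token = kv_cache_bytes(80, 8, 128, 1, 2)
per_sequence = kv_cache_bytes(80, 8, 128, 128 * 1024, 2)
print(f"{per_token / 1024:.0f} KiB per token")
print(f"{per_sequence / 2**30:.0f} GiB for one 128K-token sequence")
```

Under these assumptions, a single long sequence needs tens of gigabytes of cache. Keeping many users' context close at hand therefore becomes a storage-and-networking problem rather than a purely on-chip one.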

Industry Implications and the Competitive Landscape

The rise of Nvidia’s networking business has sent shockwaves through the broader tech industry. Legacy networking giants like Cisco, Juniper, and Arista are now finding themselves in a defensive position. While these companies still dominate general-purpose networking, they are racing to adapt to the specialized requirements of the AI era.

The competition is also heating up from specialized chipmakers like Broadcom and Marvell, who provide the silicon that powers high-end switches. These companies are banking on the "open" ecosystem of Ethernet to challenge Nvidia’s more proprietary InfiniBand and NVLink solutions. However, Nvidia’s ability to innovate at the system level—designing the chip, the network, and the software simultaneously—gives it a speed-to-market advantage that is difficult to replicate.

There is also the question of vendor lock-in. As Nvidia’s "full-stack" becomes more integrated, some customers worry about becoming too dependent on a single provider. This has led to the formation of industry groups like the Ultra Ethernet Consortium, which aims to create open standards for AI networking. Yet, for many companies in the middle of a high-stakes AI arms race, the immediate performance gains offered by Nvidia’s integrated stack outweigh the long-term concerns about lock-in.

Conclusion: The New Foundation of Computing

The metamorphosis of Nvidia’s networking division from a $7 billion acquisition into a $31 billion annual powerhouse is a testament to the company’s ability to anticipate the fundamental shifts in computing. By recognizing that the "data center is the new unit of computing," Jensen Huang has positioned Nvidia at the center of the AI infrastructure boom.

As we look toward the future, the distinction between "compute" and "networking" will likely continue to fade. In the AI factories of tomorrow, the network will not be an external component that connects servers; it will be the very fabric that enables a million processors to act as one. Nvidia’s quiet build-up of its networking business has not just created a multibillion-dollar rival to its own chip business—it has created the essential infrastructure upon which the entire AI economy is being built. With the upcoming Rubin platform and the push into photonics, Nvidia is ensuring that while others focus on the chips, it will continue to own the connections that make those chips powerful.