The digital landscape of software creation is undergoing a profound transformation, moving rapidly from mere AI-assisted coding to genuinely autonomous, agentic development. Signaling a seismic shift in its approach to developer tooling, Apple officially unveiled Xcode 26.3, an update that embeds sophisticated AI agents, notably Anthropic’s Claude Agent and specialized models from OpenAI (including successors to Codex), directly into its integrated development environment (IDE). This release, announced on Tuesday, pushes the boundaries of what is possible within the Apple application development stack, transforming Xcode from a passive environment into an active, intelligent collaborator capable of executing complex, multi-step engineering tasks. The Release Candidate for Xcode 26.3 is immediately available to all registered Apple Developers via the dedicated developer portal, with a general availability rollout expected shortly thereafter on the App Store.

This capability represents a significant architectural leap beyond the AI integrations introduced in previous versions. Last year’s Xcode 26 release tentatively brought generative AI functionality to the platform, offering rudimentary support for conversational models like ChatGPT and basic Claude interfaces. These tools primarily functioned as intelligent search engines or sophisticated code completers: a "copilot" model in which the AI provided suggestions while the human developer maintained control over the entire workflow. Xcode 26.3, however, embraces agentic coding, granting the AI models deeper, programmatic access to the IDE’s internal features and enabling them to pursue goals autonomously.

The Agentic Paradigm Shift

Agentic coding distinguishes itself by allowing the AI model to perceive its environment, formulate a plan, execute a series of actions (tools), evaluate the results, and iterate toward a defined objective—all without continuous human prompting for every single step. In the context of Xcode, this means the agents are no longer confined to merely suggesting the next line of Swift code. Instead, they can tap into the full spectrum of Xcode’s underlying features to perform highly complex automation.

The initial suite of capabilities is robust and immediately impactful. At launch, these agents are designed to comprehensively explore the structure and metadata of existing projects, enabling them to contextually understand the codebase before making modifications. Crucially, the agents can initiate the build process, execute unit and integration tests, identify errors, and subsequently self-correct the code based on the failure analysis. This end-to-end automation of the debugging and testing loop significantly accelerates the development cycle, particularly in mature or complex projects where localizing a bug can consume hours of human effort.
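
The build, test, and self-correct cycle described above can be sketched as a bounded loop. This is a minimal illustration, not Xcode's implementation: `run_agent_loop`, `BuildResult`, and the two toy callables are hypothetical names, and the "fix" step stands in for a model call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class BuildResult:
    ok: bool
    errors: list = field(default_factory=list)


def run_agent_loop(
    build_and_test: Callable[[str], BuildResult],
    propose_fix: Callable[[str, list], str],
    source: str,
    max_iterations: int = 5,
):
    """Build and test, then fix and retry until tests pass or the budget runs out.

    Returns (final_source, passed, attempts_used).
    """
    for attempt in range(1, max_iterations + 1):
        result = build_and_test(source)
        if result.ok:
            return source, True, attempt
        if attempt < max_iterations:
            # Feed the failure analysis back into the next revision.
            source = propose_fix(source, result.errors)
    return source, False, max_iterations


# Toy stand-ins (assumptions): a "compiler" that rejects one typo,
# and a "model" that knows how to repair it.
def toy_build_and_test(source: str) -> BuildResult:
    if "retrun" in source:
        return BuildResult(ok=False, errors=["unknown keyword 'retrun'"])
    return BuildResult(ok=True)


def toy_propose_fix(source: str, errors: list) -> str:
    return source.replace("retrun", "return")


fixed, passed, attempts = run_agent_loop(
    toy_build_and_test, toy_propose_fix, "func f() -> Int { retrun 1 }"
)
print(fixed, passed, attempts)
```

The iteration cap matters in practice: without it, an agent that cannot find a fix would keep consuming build time and tokens indefinitely.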

To ensure the agents operate within the specific constraints of the Apple ecosystem, the models are granted real-time access to Apple’s current developer documentation. This integration is meant to steer the agents toward the latest APIs, Apple’s Human Interface Guidelines (HIG), and best practices for performance and security across platforms (iPhone, iPad, Mac, Apple Watch, and others). It addresses a persistent problem with general-purpose LLMs, which often suffer from outdated training data or lack context about recent framework updates.

Technical Underpinnings: The Model Context Protocol (MCP)

Achieving this level of deep IDE integration required significant foundational work, centered on support for the Model Context Protocol (MCP), an open standard originally introduced by Anthropic rather than a protocol created by Apple. The MCP serves as the standardized interface through which Xcode exposes its internal capabilities, its "toolset," to external, compatible AI agents. In essence, the MCP turns the IDE into a programmable environment accessible to large language models (LLMs).

Apple emphasized that its engineering collaboration with Anthropic and OpenAI focused heavily on optimizing the efficiency of the interaction. This involved rigorous work on token usage management and efficient tool calling. Since complex agent tasks involve numerous sequential calls and feedback loops, minimizing latency and controlling API costs requires surgical precision in how the model interacts with the MCP. The goal is to ensure the autonomous process runs efficiently enough to be practical in a fast-paced development environment.
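
A rough back-of-the-envelope calculation shows why token management matters for multi-step agents. The sketch below assumes the simplest possible setup, in which every step resends the full conversation history; the function name and all numbers are illustrative, not figures from Apple or its partners, and real deployments may reduce the total through caching or context trimming.

```python
def cumulative_input_tokens(base_prompt: int, tokens_per_step: int, steps: int) -> int:
    """Total input tokens when every step resends the whole history.

    Step k (0-indexed) sends the base prompt plus the output of the
    k steps that came before it.
    """
    return sum(base_prompt + k * tokens_per_step for k in range(steps))


# Illustrative numbers only (assumptions).
total_10 = cumulative_input_tokens(base_prompt=2_000, tokens_per_step=500, steps=10)
total_20 = cumulative_input_tokens(base_prompt=2_000, tokens_per_step=500, steps=20)

print(total_10)  # 42500
print(total_20)  # 135000
```

Under these assumptions, doubling the number of steps more than triples the input tokens, because the history term grows quadratically with the step count.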

The MCP’s design philosophy promotes an open, yet controlled, ecosystem. Any external agent that adheres to the MCP standard can now interface with Xcode for a wide range of tasks, including:

- Project Discovery and Analysis: Understanding project structure, dependencies, and metadata.

- File Management and Modification: Safe execution of code changes, snippet generation, and file restructuring.

- Preview and Simulation: Utilizing Xcode’s built-in preview tools to visualize changes without a full deployment.

- Documentation Retrieval: Contextual searching and utilization of official API references.
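
MCP messages are JSON-RPC 2.0, and a tool is invoked with the `tools/call` method. The sketch below builds such a request for the documentation-retrieval case. The tool name `search_documentation` and its `query` argument are hypothetical; the names Xcode actually exposes are not given in this article.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style JSON-RPC 2.0 `tools/call` request."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and argument names, for illustration only.
message = make_tool_call("search_documentation", {"query": "PhotosPicker SwiftUI"})
decoded = json.loads(message)
print(json.dumps(decoded, indent=2))
```

A client would typically send a `tools/list` request first to discover which tools the server offers, then issue calls like the one above.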

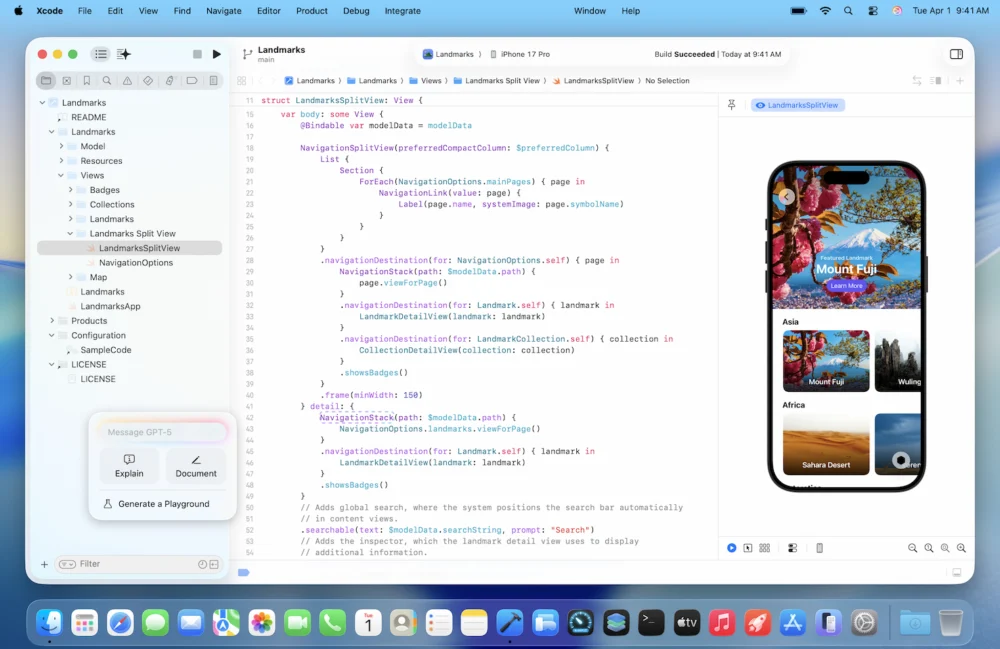

Developers initiate these complex tasks through a specialized prompt box, typically situated in the left pane of the IDE. Using natural language commands, a developer can articulate high-level objectives, for instance, "Implement a new photo sharing feature that utilizes the latest Photos framework, ensuring SwiftUI compatibility and robust error handling." The agent then interprets this directive, breaks it down into granular sub-tasks, and begins calling Xcode’s tools through the MCP to achieve the goal. This transition from writing code to writing comprehensive instructions marks a fundamental shift in the developer’s role, moving toward high-level architectural oversight and quality assurance.

Transparency, Education, and Quality Assurance

A critical component of this agentic architecture is transparency. Given the potential for AI-generated code to introduce subtle errors or "hallucinations," Apple has engineered the process to be highly visible and auditable. As the agent works, it systematically decomposes the requested task into a step-by-step plan. This plan, along with the agent’s reasoning and documentation lookup steps, is logged in a detailed "project transcript" displayed alongside the code editor.

Code changes are highlighted visually, so developers can immediately identify which lines were added, modified, or removed by the agent. This granular visibility is paramount for maintaining code quality and intellectual property control. Furthermore, developers are encouraged to have the agent plan before it acts; Apple suggests that explicitly directing the agent to "think through its plan" before executing code can substantially improve the quality and relevance of the output.
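
The idea of surfacing exactly which lines an agent touched can be illustrated with a plain line diff. This sketch uses Python's standard `difflib` module and is not how Xcode computes its highlights; `summarize_changes` and the sample Swift lines are made up for the example.

```python
import difflib


def summarize_changes(old: list, new: list) -> dict:
    """Group the lines of a diff into those added and those removed.

    A modified line shows up once in each group.
    """
    added, removed = [], []
    for line in difflib.ndiff(old, new):
        if line.startswith("+ "):
            added.append(line[2:])
        elif line.startswith("- "):
            removed.append(line[2:])
    return {"added": added, "removed": removed}


before = ['let title = "Photos"', "let count = 0", "print(title)"]
after = ['let title = "Shared Photos"', "let count = 0", "print(title)", "print(count)"]

changes = summarize_changes(before, after)
for kind, lines in changes.items():
    for text in lines:
        print(f"{kind}: {text}")
```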

Apple recognizes the educational potential inherent in this transparency. For junior developers or those learning new frameworks, observing the agent’s systematic approach—how it searches documentation, structures tasks, and fixes errors—provides an invaluable, real-time apprenticeship experience. To capitalize on this, the company is actively supporting the feature with educational resources, including a planned "code-along" workshop on its developer site, inviting users to learn the agentic workflow in real-time within their own copies of Xcode.

Finally, the system incorporates robust safety nets. Xcode automatically creates milestones—essentially version control checkpoints—every time the agent successfully completes a modification. If the generated code is unsatisfactory or introduces regressions, developers can instantly revert the project to any previous state, minimizing the risk associated with autonomous code generation. The process concludes only after the AI agent verifies the functionality of its created code through integrated testing, allowing it to iterate and refine its work until all tests pass.
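
The milestone mechanism behaves like a stack of project snapshots. The following in-memory sketch shows the checkpoint-and-revert idea only; `MilestoneStore` is a hypothetical class, and the article does not describe how Xcode stores milestones internally.

```python
class MilestoneStore:
    """Keeps an ordered list of project snapshots that can be restored."""

    def __init__(self, initial_files: dict) -> None:
        # Milestone 0 is the project state before the agent touched anything.
        self._snapshots = [dict(initial_files)]

    def checkpoint(self, files: dict) -> int:
        """Record a snapshot after a completed modification; return its index."""
        self._snapshots.append(dict(files))
        return len(self._snapshots) - 1

    def revert(self, milestone: int) -> dict:
        """Restore an earlier snapshot and discard everything after it."""
        if not 0 <= milestone < len(self._snapshots):
            raise IndexError(f"no such milestone: {milestone}")
        self._snapshots = self._snapshots[: milestone + 1]
        return dict(self._snapshots[milestone])


store = MilestoneStore({"App.swift": "v0"})
m1 = store.checkpoint({"App.swift": "v1"})
m2 = store.checkpoint({"App.swift": "v2 (introduces a regression)"})

restored = store.revert(m1)  # roll back past the regression
print(restored)
```

Copying the dictionary on every checkpoint keeps later edits from silently mutating an earlier snapshot, at the cost of extra memory.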

Industry Implications and the Competitive Landscape

Apple’s aggressive move into agentic coding within Xcode immediately raises the competitive stakes in the software development tools sector. For several years, the landscape has been dominated by GitHub Copilot (powered by OpenAI’s models), which set the standard for AI-assisted coding. However, Copilot and similar tools (such as Amazon CodeWhisperer) have generally operated at the level of suggestion and completion. Xcode 26.3 moves into the realm of autonomy, a crucial differentiator.

This strategic integration is not merely about providing a better code suggestion tool; it is about reinforcing the gravity of the Apple ecosystem. By offering the most advanced, deeply integrated, and contextually aware development environment for building iOS, macOS, and visionOS applications, Apple strengthens its platform lock-in. Developers targeting Apple hardware will find the productivity gains offered by Xcode 26.3 difficult to ignore, further cementing Xcode as the indispensable tool for the 30 million-strong Apple developer community.

The decision to leverage external LLM providers like Anthropic and OpenAI, rather than relying solely on a proprietary Apple model, is also noteworthy. While Apple is developing its own on-device and cloud-based AI capabilities, collaborating with industry leaders ensures access to the most powerful generative models immediately. The Model Context Protocol (MCP) acts as the bridge, insulating Xcode’s internal structure while providing necessary external access. Because the MCP is an open standard rather than an Apple-proprietary protocol, this hybrid approach pairs powerful third-party models with a tool interface that Apple controls inside its own IDE, a highly effective short-term strategy to leapfrog competitors.

The implications for smaller developer teams and individual creators are profound. Agentic coding effectively democratizes complex development tasks. Routine maintenance, boilerplate creation, complex API integrations, and the tedious task of ensuring framework compatibility can now be delegated to the agent, freeing human developers to focus on architectural design, creative problem-solving, and high-level feature implementation. This capability has the potential to significantly lower the barrier to entry for creating sophisticated applications on Apple platforms.

Future Trajectory and Long-Term Impact

The integration in Xcode 26.3 represents the beginning of the autonomous software development lifecycle (SDLC). In the near term, agents will likely expand the range of tools they can use. Currently, they handle project structure, testing, and documentation. Future iterations will likely see agents taking on tasks related to continuous integration/continuous deployment (CI/CD) pipelines, managing cloud resources (e.g., Apple CloudKit configurations), and even generating comprehensive UI layouts based on abstract design specifications.

The ultimate vision is a developer workflow where a human provides an application concept and high-level constraints, and the AI agent manages the full end-to-end development, testing, optimization, and preparation for submission. This trajectory poses complex questions regarding the future role of the human developer. While fears of complete obsolescence are premature—human judgment, creativity, and ethical oversight remain irreplaceable—the profile of the necessary developer skill set is shifting. Expertise in prompting, system architecture design, and rigorous quality verification will become more valuable than proficiency in rote coding.

There remain considerable challenges to navigate. Relying on external AI APIs introduces cost variables (token usage can quickly accumulate for complex, multi-step agent tasks) and potential latency issues. Furthermore, while the transparency features mitigate risk, the burden of rigorous verification still falls on the human developer. The industry must establish new standards for validating agent-generated code so that security vulnerabilities, hallucinated APIs, and subtle logical flaws are caught before they reach end users.

Despite these hurdles, Apple’s move is a definitive signal: the IDE is transitioning from a mere text editor and compiler into an intelligent, context-aware development partner. By adopting the MCP and embedding powerful agents from Anthropic and OpenAI, Apple has not just added a feature to Xcode; it has architecturally prepared its development ecosystem for an autonomous future, fundamentally redefining productivity for millions of developers worldwide. This shift promises to accelerate innovation across the entire Apple platform, fueling the next generation of applications that will define the user experience on its expansive hardware portfolio.