Tucked away in the labyrinthine alleys of Hongik-dong, a quiet residential enclave in eastern Seoul, stands an unassuming stone-tiled building that serves as the heart of South Korea’s intellectual heritage. This is the headquarters of the Korea Baduk Association, the central authority for the ancient game of Go, known locally as Baduk. For decades, these halls were defined by the rhythmic, tactile clatter of polished stones meeting wooden boards, a sound synonymous with deep contemplation and the pursuit of a "divine move."

Today, however, the auditory landscape of the association has shifted. The traditional clicks of stones are increasingly overshadowed by the rapid-fire tapping of computer mice. Inside the training rooms, the world’s most elite players no longer sit exclusively across from human opponents. Instead, they are hunched over monitors, their faces illuminated by the glow of complex software interfaces. They are dissecting their previous matches through the lens of artificial intelligence, watching as algorithms assign cold, numerical probabilities to moves that were once considered matters of soul and intuition.

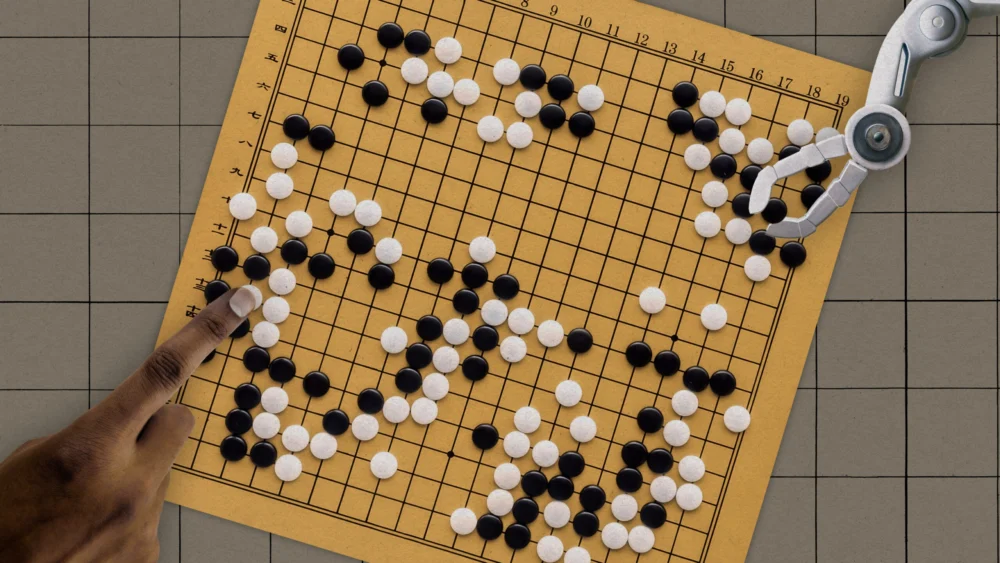

A decade has passed since Google DeepMind’s AlphaGo delivered a seismic shock to the global community by defeating the legendary Lee Sedol. That moment was more than just a victory for computer science; it was the beginning of a total ontological restructuring of one of the world’s oldest games. In the years since, AI has not merely assisted Go players—it has fundamentally rewired how they perceive strategy, creativity, and the very nature of competition.

The Mathematical Frontier

To understand the magnitude of AI’s impact, one must first grasp the sheer scale of Go. Invented in China more than 2,500 years ago, Go is an abstract strategy game of deceptive simplicity. Two players place black and white stones on a 19×19 grid, attempting to encircle territory and capture the opponent’s pieces. Unlike chess, which is often likened to a tactical battle, Go is frequently described as a total war of attrition and influence.

The complexity of the game is staggering. There are approximately 10^170 possible board configurations, a number that far exceeds the estimated number of atoms in the observable universe. For millennia, humans navigated this vast (though finite) possibility space using heuristics: rules of thumb, proverb-like principles, and "joseki" (established opening sequences) passed down through generations of masters. These principles were treated as sacred truths until AI showed that many of them were wrong.
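The scale is easy to check with a little arithmetic. The sketch below is a rough illustration, not a count of legal positions: it computes the simple upper bound obtained by letting each of the 361 intersections be empty, black, or white, and compares its order of magnitude with the 10^170 figure above and with 10^80, a commonly cited estimate for the number of atoms in the observable universe (that estimate is an assumption brought in here, not a figure from this article).

```python
BOARD_SIZE = 19
POINTS = BOARD_SIZE * BOARD_SIZE  # 361 intersections on a 19x19 board

# Each intersection is empty, black, or white, so 3**361 is an upper bound
# on the number of arrangements. Many of these are illegal positions, which
# is why the count of legal positions (about 10^170) is smaller.
upper_bound = 3 ** POINTS

# Assumed, commonly cited estimate for atoms in the observable universe.
ATOMS_ESTIMATE = 10 ** 80


def order_of_magnitude(n: int) -> int:
    """Return the power of ten of a positive integer (digits minus one)."""
    return len(str(n)) - 1


print("intersections:", POINTS)
print("upper bound is about 10^%d" % order_of_magnitude(upper_bound))
print("atoms estimate is about 10^%d" % order_of_magnitude(ATOMS_ESTIMATE))
```

Python's arbitrary-precision integers make the comparison exact: the bound comes out near 10^172, roughly ninety orders of magnitude beyond the atom estimate.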

The evolution of Go AI occurred in two distinct phases. The first, embodied by AlphaGo Lee, used supervised learning, training on a database of 30 million human moves to mimic the best players in history. The second phase, represented by AlphaGo Zero, discarded human data entirely. It learned by playing millions of games against itself, starting from a blank slate and guided only by the basic rules of the game. After just three days of training, AlphaGo Zero beat its predecessor 100 games to zero. By ignoring human "wisdom," the AI discovered strategies that humans had failed to imagine in over two millennia.

The Rise of "Shintelligence"

In the current professional landscape, the most dominant figure is Shin Jin-seo, the world’s top-ranked player. His dominance is so absolute that he has earned the nickname "Shintelligence," a nod to the uncanny resemblance between his playstyle and that of the machines. Shin’s daily routine is a testament to the new era of professional training. Every morning, he opens KataGo, a powerful open-source successor to the AlphaGo lineage.

Shin spends hours tracing "blue spots"—the digital markers that indicate the AI’s recommended optimal move. He rearranges stones on a virtual grid, attempting to reverse-engineer the logic of a machine that calculates millions of variations per second. For Shin, this is an "ascetic practice," a grueling mental marathon to align his human intuition with algorithmic perfection.

The data bears out this transformation. A 2022 study by the Korean Baduk League found that Shin’s moves match the AI’s top recommendations 37.5% of the time, a significant margin over the professional average of 28.5%. However, this alignment comes at a cost. Shin admits that his game has changed irrevocably. He no longer explores the board with the same freedom as his predecessors; he follows the "directions" of the AI, knowing that to deviate is to court a statistical disadvantage.
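For readers curious how such a match rate might be computed, here is a minimal sketch. It assumes the simplest possible definition, the share of a player's moves that coincide with the engine's first choice, and it uses invented coordinates; neither the function `match_rate` nor the sample data comes from the study cited above, whose exact method is not described here.

```python
from typing import Sequence


def match_rate(played: Sequence[str], ai_top: Sequence[str]) -> float:
    """Fraction of played moves equal to the engine's top recommendation.

    Both sequences list one move per turn, in the same order.
    """
    if len(played) != len(ai_top):
        raise ValueError("both sequences must cover the same turns")
    if not played:
        return 0.0
    matches = sum(p == a for p, a in zip(played, ai_top))
    return matches / len(played)


# Hypothetical eight-move excerpt: three moves agree with the engine.
played = ["Q16", "D4", "R4", "C16", "F3", "O17", "C6", "R10"]
ai_top = ["Q16", "D4", "Q4", "D16", "F3", "R14", "C7", "K16"]

print(f"{match_rate(played, ai_top):.1%}")
```

A real analysis would have to decide how to treat moves the engine rates as nearly equal to its first choice, which this sketch counts as misses.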

The Death of Artistry?

While AI has raised the technical floor of the game, it has also sparked a philosophical crisis. For centuries, Go was viewed as an art form—a "hand conversation" where players expressed their personalities and philosophies through their stones. Masters like Lee Sedol were known for provocative, chaotic moves that challenged the opponent’s spirit.

Now, that artistry is being replaced by homogenization. Because AI has "solved" many aspects of the opening game, the first 50 moves of high-level professional matches are often identical, as players recite the memorized scripts provided by their digital tutors. Ke Jie, the Chinese grandmaster who also fell to DeepMind’s software, has publicly lamented this trend, describing the experience of watching modern matches as "tiring and painful" due to the lack of original thought.

Lee Sedol, who retired in 2019, cited the rise of AI as a primary reason for his departure from the professional circuit. "Before AI, we sought something greater," Lee remarked. "I learned Go as an art. But if you copy your moves from an answer key, that’s no longer art." For Lee, the mystery of the game—the sense of charting unknown frontiers—has been replaced by the dictates of a superhuman oracle.

The Black Box and the Epistemic Limbo

Despite their reliance on these systems, even the world’s best players struggle to explain why the AI chooses certain moves. The neural networks that power KataGo and AlphaZero are "black boxes." They provide a winning probability for a specific coordinate on the board, but they do not offer a rationale.
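In practical terms, what a player sees resembles a table of candidate moves, each with a win probability and nothing more. The sketch below imitates that shape with made-up numbers; the names `top_move` and `points_lost` are hypothetical helpers, not part of KataGo or any other engine.

```python
from typing import Dict, Tuple


def top_move(win_prob: Dict[str, float]) -> Tuple[str, float]:
    """Return the candidate with the highest estimated win probability."""
    move = max(win_prob, key=win_prob.get)
    return move, win_prob[move]


def points_lost(win_prob: Dict[str, float], played: str) -> float:
    """Percentage points given up by playing `played` instead of the top move."""
    _, best = top_move(win_prob)
    return round((best - win_prob[played]) * 100, 1)


# Invented evaluation of three candidate moves in one position.
candidates = {"Q16": 0.52, "D4": 0.50, "K10": 0.41}

print(top_move(candidates))
print(points_lost(candidates, "K10"))
```

The output says which move scores best and how much a weaker choice costs, but it carries no explanation of why, which is exactly the gap players describe.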

Kim Chae-young, one of the world’s leading female professionals, describes the experience as interacting with a "higher dimension." When she trains, she isn’t looking for a rational explanation; she is trying to develop a "gut feeling" for the AI’s preferences. This has placed the game in a state of epistemic limbo. Humans can mimic the machine, but they have yet to fully decode the new paradigm of principles the machine has discovered.

Academic researchers are currently attempting to bridge this gap. In 2024, computer scientists at Google DeepMind successfully extracted new strategic concepts from AlphaZero and translated them into puzzles for human grandmasters. However, experts like Nam Chi-hyung, a professor of Go at Myongji University, argue that we are only scratching the surface. Until humans can deduce the general principles behind the AI’s moves, we remain mere imitators of a logic we cannot comprehend.

A New Era of Accessibility and Equality

While AI has arguably stifled individual creativity in the opening moves, it has had a profoundly democratic effect on the game’s social structure. Historically, Go training was gatekept by elite, male-dominated circles. Young players had to study under established masters, and female players often found themselves excluded from the most rigorous training environments.

AI has shattered these barriers. It provides a world-class tutor to anyone with a powerful enough GPU. This shift has led to a surge in the performance of female players. In 2022, Choi Jeong became the first woman to reach the finals of a major international tournament, a feat previously thought impossible. Kim Chae-young also credits AI with breaking the "psychological barrier" of male invincibility. By analyzing the moves of top male players through AI, she realized they were far from flawless, emboldening her to compete at the highest levels.

The Human Narrative in a Post-AI World

As the technical gap between humans and machines widens, a curious trend has emerged: fans still prefer watching humans. While two AI programs playing each other might produce a "perfect" game, it is often too complex and sterile for human spectators to enjoy.

The drama of Go now lies in the "middle game"—the chaotic phase where the board becomes too complex for human memory to hold, and players must rely on their own judgment. Fans revel in the mistakes, the mounting pressure, and the dramatic comebacks. In this context, the human element has become more precious.

Shin Jin-seo believes that even in the shadow of AI, humans can still tell a "story" on the board. For him, the machine is not an enemy but a "North Star"—a constant reminder of how much room there is to improve. Even Lee Sedol, despite his early cynicism, has found a sliver of hope. He dreams of a "masterpiece game"—a match of such technical brilliance and razor-edge precision that it borders on perfection. "Maybe AI can help us play a masterpiece," he muses.

As Go moves deeper into its third millennium, it is no longer the game it once was. It is faster, more calculated, and arguably more difficult than ever before. But as the world’s best players continue to rewire their thinking to match the speed of silicon, they are discovering that the ancient game still has secrets to reveal, even if those secrets are being whispered by a machine.