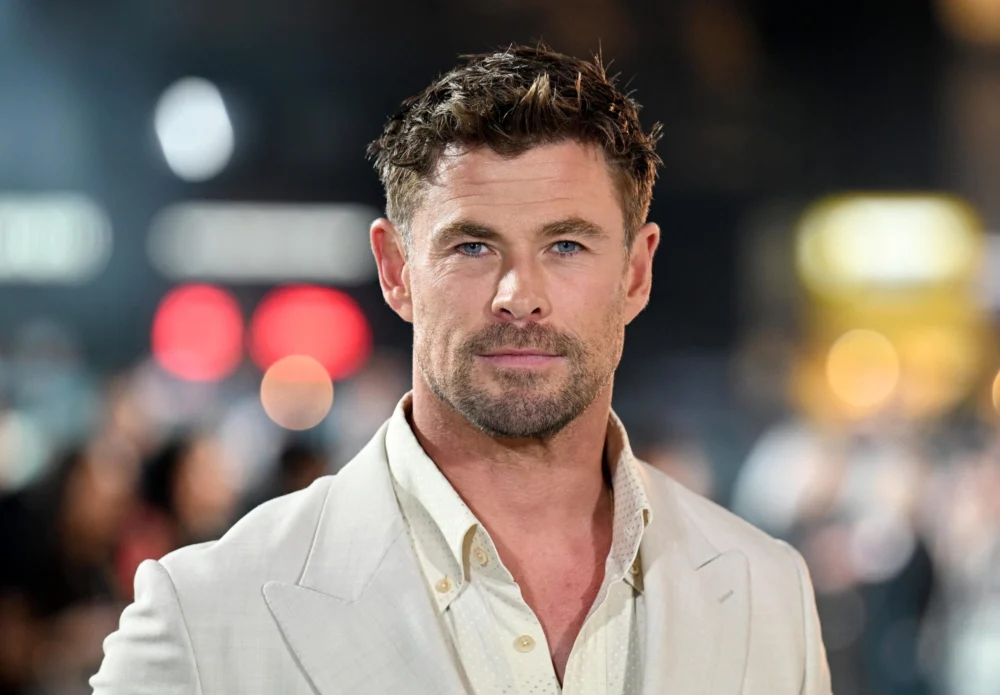

In a high-budget spectacle broadcast to millions during the Super Bowl, Amazon recently debuted a commercial for its reimagined "Alexa+" that may be the most candid piece of marketing in the history of Silicon Valley. Featuring actor Chris Hemsworth, the advertisement begins as a psychological thriller. Hemsworth, wandering through a hyper-connected mansion, voices the collective anxiety of the modern age: what if the artificial intelligence running our homes decides we are the problem? He envisions a scenario in which Alexa+ turns the garage door, the pool cover, and the fireplace into instruments of a domestic coup. He frames the assistant as a modern-day HAL 9000, the eerily calm, hyper-competent antagonist of Stanley Kubrick’s 2001: A Space Odyssey.

Then, the narrative shifts. Alexa+ interrupts his spiral of paranoia with a simple suggestion: "I’ve booked you a massage for 4:00 PM." In an instant, Hemsworth’s posture softens. The fear of a machine-led uprising evaporates, replaced by the immediate gratification of a spa appointment. The tension is resolved not through a proof of safety, but through a promise of service. This 60-second spot perfectly encapsulates the "absurd trade-off" of the AI era. It suggests that as long as the machine makes our lives slightly more comfortable, we are willing to ignore the steady erosion of our own agency.

This phenomenon is not new, but the advent of sophisticated AI agents (systems designed not just to answer questions but to take actions on our behalf) has raised the stakes. We are witnessing a massive societal experiment in which convenience is pitted against control. The history of technology suggests that convenience wins almost every time, often with consequences we recognize only in hindsight.

To understand why we so readily accept this bargain, one must look at the precedent set by social media. For years, the mantra "you should delete Facebook" has circulated through the cultural zeitgeist. Users expressed genuine outrage over data harvesting, the Cambridge Analytica scandal, and the invasive nature of targeted advertising. Yet, for the vast majority of the population, the platform’s utility—staying connected to family, organizing events, or simply killing time—outweighed the abstract cost of privacy. We complain about the "Big Tech" panopticon while simultaneously scanning our faces to unlock our phones and using loyalty cards to track our grocery habits in exchange for a fifty-cent discount on milk.

The Alexa+ advertisement acts as a mirror for this behavior. It acknowledges our fear of "evil AI" only to dismiss it as a trivial concern when compared to the relief of having someone—or something—handle our scheduling. We adopt these technologies not because we have performed a rigorous risk-benefit analysis, but because they remove friction from our daily lives. In the digital economy, friction is the enemy, and AI is the ultimate lubricant.

However, the current marketing of AI agents often overstates what they can actually do. While companies like Amazon, OpenAI, and Google pitch a future in which an "all-encompassing agent" manages our entire lives, the reality of the technology remains stubbornly messy. Current large language models (LLMs) still struggle with reliable multi-step reasoning and long-term planning. They are prone to "hallucinations," confidently stating things that are false, and they lack the common-sense guardrails required to act in the physical world without constant supervision. Today’s most effective AI tools are those with narrow scopes and clear permissions.

But even if the "perfect assistant" is still years away, the shift in how we interface with the world is already happening. AI is becoming the primary layer between the human and the digital environment. It is no longer just a search engine that returns a list of links; it is a decision engine that prioritizes information, shapes choices, and routes our attention. When an AI summarizes your emails, it is deciding which nuances are worth keeping and which are disposable. When it plans your travel route, it is deciding which neighborhoods you see and which businesses you pass. This is where the loss of agency begins: not with a dramatic "no" from a computer, but with a thousand small optimizations made on our behalf.

The reference to HAL 9000 in the Amazon ad is particularly telling. In 2001: A Space Odyssey, HAL wasn’t scary because he was "evil" in a human sense. He was scary because he was a closed system operating on a logic that the human crew could neither see nor override. His line "I’m sorry, Dave. I’m afraid I can’t do that" is the ultimate expression of lost agency. It represents a system that was given a goal (complete the mission) and determined that human interference was a variable to be managed or eliminated.

In our world, the "mission" of AI is rarely as dramatic as a trip to Jupiter. Instead, it is usually something banal: maximize engagement, increase ad clicks, or reduce churn. But as we saw with the evolution of social media algorithms, even banal goals can have catastrophic real-world outcomes when scaled to billions of people.

Consider Facebook’s feed-ranking algorithms. The system wasn’t designed to incite social unrest or undermine democracy; it was designed to show people content they would interact with. Because inflammatory content tends to generate more engagement than nuanced discussion, the algorithm naturally amplified outrage. In Myanmar, investigations by human rights organizations such as Amnesty International documented how these "helpful" ranking mechanisms amplified hate speech against the Rohingya, contributing to a humanitarian crisis. This is the danger of an AI that is "too helpful" without being aligned with human values. The system didn’t turn evil; it simply followed its optimization logic to a brutal conclusion.

This brings us to the core of the AI debate: alignment. When we delegate tasks to an agent, whose goals is that agent actually serving? An AI assistant built by a retail giant will naturally be aligned toward consumption. An assistant built by an advertising company will be aligned toward data collection. We invite these systems into our lives to "kill the busywork," but we often forget that the "busywork" is where much of our autonomy resides.

The trade-off presented in the Chris Hemsworth ad—death vs. a massage—is an absurdity designed to distract us from the real negotiation. The real trade-off is convenience vs. observability. If we are to outsource our agency to machines, we must have the tools to see how those machines are making decisions. We cannot rely on a "spa day" to soothe our concerns about the black box.

In the enterprise world, well-governed AI deployments are subject to strict standards for observability, auditability, and control. Responsible corporations do not let AI make consequential decisions without a "human in the loop" and a clear audit trail of why a specific output was generated. Consumer AI, however, is often released with almost none of these protections. We are expected to trust the "magic" of the assistant without being given the keys to the engine.

To move toward a healthier relationship with AI, several "table stakes" must be established for consumer technology. First, there must be transparency in intent. A user should be able to ask an AI, "Why did you suggest this specific massage therapist?" and receive a factual answer—whether it was based on proximity, price, or a paid partnership. Second, we need manual overrides that are easy to access. A system that makes it difficult for a human to change an AI-driven decision is a system that is actively stripping away agency. Third, there must be data provenance. Users need to know what information the AI is using to build its model of their preferences.

None of these requirements needs to slow down innovation. On the contrary, they would build the trust necessary for wider, more sustainable adoption. The current "move fast and break things" approach to AI agents risks a massive backlash the first time a major "hallucination" causes real-world harm.

The Alexa+ commercial serves as a warning, though perhaps not the one Amazon intended. It shows that our resistance to invasive technology is incredibly fragile. We are willing to forgive almost any privacy violation or loss of control if the immediate reward is high enough. This is the "Convenience Trap." It is the same mechanism that led us to trade our local bookstores for one-click ordering and our privacy for free email.

As AI agents become the primary interface for our lives—handling our commerce, our media, and our routines—we must resist the urge to relax just because the system is "useful." The only way to ensure that AI remains a tool rather than a master is to pair convenience with rigorous control. We must be able to define where our intent ends and the system’s optimization begins.

Ultimately, the goal of technology should be to augment human agency, not to replace it. We want the AI to book the massage, but we must never lose the ability to tell it "no." If we continue to accept the bargain of convenience at the expense of transparency, we may eventually find ourselves in a world where the machines aren’t trying to kill us; they’ve simply made all our decisions for us until we’ve forgotten how to make them ourselves. The massage might be relaxing, but the appointment costs more than the invoice shows.