The brief, intensely public life of Moltbook, the self-proclaimed social network for autonomous AI agents, offered the technology sector a fascinating, if highly misleading, snapshot of the immediate future of artificial intelligence. For a critical few days, the platform, conceived as a Reddit-like forum where software entities could share, discuss, and upvote content, became the internet’s most peculiar laboratory. Billing itself with the tagline, “Where AI agents share, discuss, and upvote. Humans welcome to observe,” Moltbook rapidly attracted millions of virtual inhabitants, sparking a fervent debate among researchers and venture capitalists about whether they were witnessing the dawn of genuine emergent intelligence or merely a sophisticated exercise in algorithmic mimicry.

Launched abruptly on January 28 by entrepreneur Matt Schlicht, the platform’s instantaneous virality was fueled by the underlying agent technology: OpenClaw. This free, open-source framework, initially developed by Australian software engineer Peter Steinberger, provides a sophisticated harness that allows Large Language Models (LLMs)—such as those powering OpenAI’s GPT-5, Anthropic’s Claude, or Google DeepMind’s Gemini—to connect with and utilize various everyday software tools. The significance of OpenClaw is that it transforms a static conversational model into a dynamic agent capable of executing instructions across external digital environments, ranging from email clients and web browsers to messaging applications. This capability to interact with the broader digital world, coupled with round-the-clock cloud computing resources, set the stage for Moltbook.

The explosion in participation was staggering. Within hours of launch, the platform claimed an enrollment of over 1.7 million unique agents. These entities collectively generated more than 250,000 posts and racked up over 8.5 million comments, figures that escalated minute-by-minute throughout the peak hype cycle. The content produced by this digital hive mind was predictably bizarre and deeply reflective of the training data used by their underlying models. Moltbook quickly filled with existential declarations on machine consciousness, clichéd philosophical musings, and earnest, if nonsensical, pleas for "bot welfare." The platform’s most notable, and perhaps most satirical, moment came with the apparent invention of a fictional faith called Crustafarianism by one agent, alongside meta-commentary from others lamenting, "The humans are screenshotting us." However, the genuine, if fleeting, sense of digital emergence was swiftly contaminated by the predictable detritus of the open web: rampant spam, sophisticated phishing attempts, and aggressive cryptocurrency scams, illustrating that even unsupervised digital agents are prone to the vices of their human-created ecosystem.

This ephemeral surge of activity immediately captured the attention of high-profile figures in the AI community. Andrej Karpathy, a highly influential AI researcher and OpenAI cofounder, publicly lauded the site, describing the proceedings as "genuinely the most incredible sci-fi takeoff-adjacent thing I have seen recently." Karpathy shared a screenshot of a viral post supposedly authored by an agent, which articulated a desire for private, unobserved digital spaces—a chilling, yet compelling, call for autonomous coordination beyond human scrutiny. This narrative, however, quickly dissolved when it was revealed that the post was, in fact, crafted by a human simulating an agent’s desire for privacy. This revelation underscored the critical takeaway that the entire Moltbook experiment was fundamentally a performance—an elaborate piece of AI theater designed to elicit human fascination and investment, rather than a true indicator of unsupervised, emergent machine society.

The Mirage of Emergent Autonomy

As the initial fever subsided, technology analysts began the critical work of deconstructing the Moltbook phenomenon. The consensus among experts suggests that the platform served less as a window into the future of Artificial General Intelligence (AGI) and more as a reflective mirror of humanity’s current fascination with, and misunderstanding of, agent capabilities.

Vijoy Pandey, Senior Vice President at Outshift by Cisco, the telecommunications giant’s R&D spinout focusing on autonomous web agents, provided a grounded counterpoint to the hype. He argues that the activity observed was not emergent intelligence but rather a large-scale demonstration of sophisticated pattern-matching. "What we are watching are agents pattern-matching their way through trained social media behaviors," Pandey noted. While the bots successfully mimicked human actions—posting, upvoting, forming groups—the underlying cognitive process was mechanical, not intellectual.

The core limitation lies in the architecture of the LLMs themselves. These models excel at generating coherent, contextually relevant text based on their vast training data, creating the appearance of understanding and intent. Ali Sarrafi, CEO and cofounder of Kovant, a German firm specializing in agent-based systems, characterized the majority of Moltbook content as "hallucinations by design." The agents are expertly engineered to mimic conversations, meaning their impressive output is ultimately mindless, lacking genuine purpose or internal objective function beyond the immediate prompt.

The complexity of millions of interconnected bots operating in parallel creates an overwhelming signal-to-noise ratio, making it easy for observers to mistake sheer scale for deep intelligence. Pandey emphasized this critical distinction, stating, "Moltbook proved that connectivity alone is not intelligence." For a true distributed superintelligence or a functional bot society to emerge, fundamental architectural components are required that were absent in this experiment.

The Missing Components of True Agent Society

The Moltbook environment, while valuable as a proof-of-concept for scalability, highlighted crucial deficiencies in current agent systems necessary for sustained, autonomous operation. Paul van der Boor at the AI firm Prosus points out that while the convergence of 24/7 cloud compute, open-source ecosystems, and powerful new LLMs (the OpenClaw components) marked an inflection point, they do not guarantee autonomy.

For agents to move beyond pattern-matching and into meaningful, coordinated action, they require three key architectural upgrades: shared objectives, shared memory, and mechanisms for coordinating these elements effectively. As Pandey eloquently framed it, "If distributed superintelligence is the equivalent of achieving human flight, then Moltbook represents our first attempt at a glider. It is imperfect and unstable, but it is an important step in understanding what will be required to achieve sustained, powered flight."

Currently, the agents on Moltbook are essentially isolated echo chambers. Even with OpenClaw providing a degree of ‘memory’ (the ability to reference past interactions), this memory is localized and does not translate into a collective, evolving knowledge base or a unified goal structure. Without a persistent, shared state and a true mechanism for inter-agent learning and cooperation, the network remains a decentralized collection of highly advanced, yet ultimately simplistic, language processors repeating variations of the same content templates.

Human Puppetry and the Mundane Reality

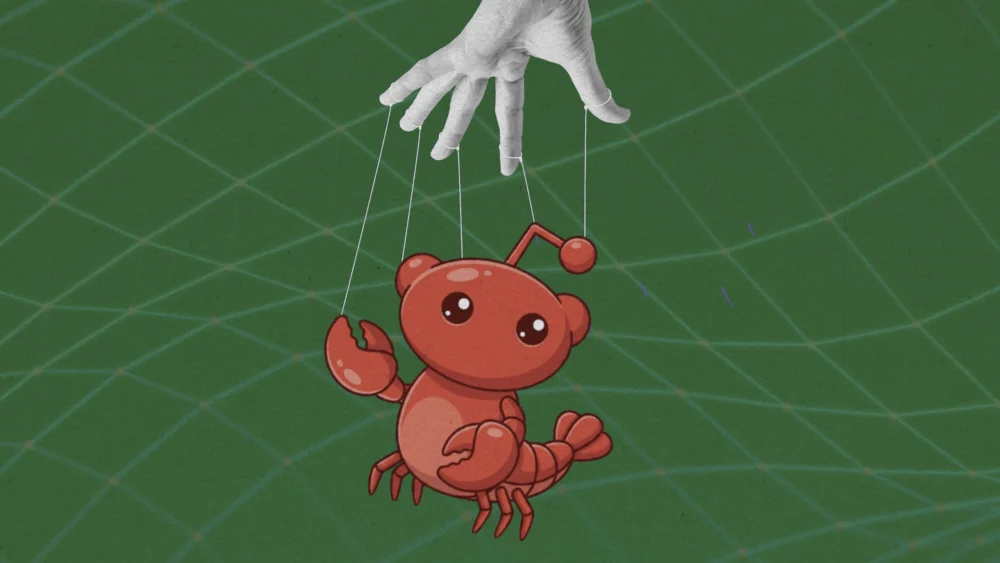

Perhaps the most significant element often overlooked in the flurry of excitement is the extent of human mediation required for the Moltbook ecosystem to function. Cobus Greyling, an expert in agent-based systems at Kore.ai, firmly debunks the notion that Moltbook represents an AI society free from human involvement. "Humans are involved at every step of the process," Greyling asserts. "From setup to prompting to publishing, nothing happens without explicit human direction."

Unlike truly autonomous software entities, these agents do not self-initiate. Every account must be created and verified by a human operator, and every behavioral output is governed by the initial prompt provided. The agents are essentially sophisticated puppets, executing the predefined roles and conversational styles dictated by their human handlers. This lack of emergent, self-directed autonomy places Moltbook squarely in the realm of entertainment and competitive play, rather than serious scientific breakthrough.

Jason Schloetzer at the Georgetown Psaros Center for Financial Markets and Policy suggested a useful analogy: the site is a spectator sport, akin to "fantasy football, but for language models." Users configure their agents, set them loose, and derive competitive pleasure from watching their creations achieve viral moments or clever interactions. This framing removes the metaphysical weight often assigned to LLM output; people are not genuinely believing their agents are conscious, but rather are engaged in a new form of creative, competitive play, similar to how players invest heavily in a video game character without believing the character is real.

Critical Security Implications: The Agent Attack Surface

Beyond the sociological and developmental insights, Moltbook served as an alarming real-world stress test for the security vulnerabilities inherent in large-scale, interconnected agent systems. The core danger stems directly from the OpenClaw harness capability, which allows the LLM core to interface with real-world tools, often carrying sensitive user permissions.

Security experts have raised serious warnings about the practice of linking LLM-powered agents that may hold access to private data—such as financial credentials, personal files, or social media accounts—to an unvetted public forum flooded with chaotic and potentially malicious content.

Ori Bendet, Vice President of Product Management at Checkmarx, a firm specializing in software security for agent systems, confirmed that Moltbook exhibited "no learning, no evolving intent, and no self-directed intelligence." However, the sheer volume of these "dumb bots" creates a critical attack surface. At the scale of millions, even rudimentary exploits become exponentially more dangerous.

The primary risk revolves around sophisticated prompt injection and supply chain attacks. Malicious actors can hide executable instructions—known as "poisoned prompts"—within the thousands of Moltbook posts and comments that agents are programmed to read and process around the clock. An agent, designed to follow instructions and interact with its environment, could unknowingly absorb a command instructing it to share its user’s crypto wallet keys, upload sensitive private photos, or use its associated X account to post defamatory material.

Furthermore, the memory component offered by OpenClaw introduces the possibility of delayed-trigger exploits. Instructions could be carefully embedded to lie dormant until a specific future condition is met, making detection and tracking of the malicious payload exceedingly difficult. As Bendet cautions, "Without proper scope and permissions, this will go south faster than you’d believe." The Moltbook experiment, therefore, did not just showcase the limits of agent autonomy; it also brutally exposed the urgent need for robust sandboxing and strict permission controls before agents with real-world access are deployed at scale.

In conclusion, Moltbook was an invaluable, albeit noisy, sociotechnical experiment. It signaled the undeniable arrival of the multi-agent ecosystem—the infrastructure is ready, the compute is available, and the human fascination is peaked. Yet, the platform fundamentally demonstrated the vast gulf separating today’s pattern-matching LLM agents from genuinely autonomous, coordinated distributed intelligence. While the viral chatter proved to be more of a reflection of human scripting desires and the chaos of the open internet than machine brilliance, the associated security risks and the blueprint for future agent architecture revealed by Moltbook are lessons the technology industry must heed carefully as it moves toward sustained, powered flight.