The landscape of generative artificial intelligence is undergoing a profound shift, moving rapidly from purely research-driven innovation to mainstream commercial integration. Central to this evolution is OpenAI’s ongoing monetization strategy for its flagship large language model, ChatGPT. Initial groundwork for this strategy is now manifesting in user interfaces, specifically for free-tier and lower-tier subscribers, signaling the beginning of a new economic reality for AI access. Early observations, particularly within the Android application ecosystem, point to the deployment of a dedicated, full-screen onboarding sequence designed to introduce users to the presence of advertising within the conversational interface. This rollout phase is critical, not only for establishing revenue streams but also for managing user expectations regarding the integrity of the core product experience.

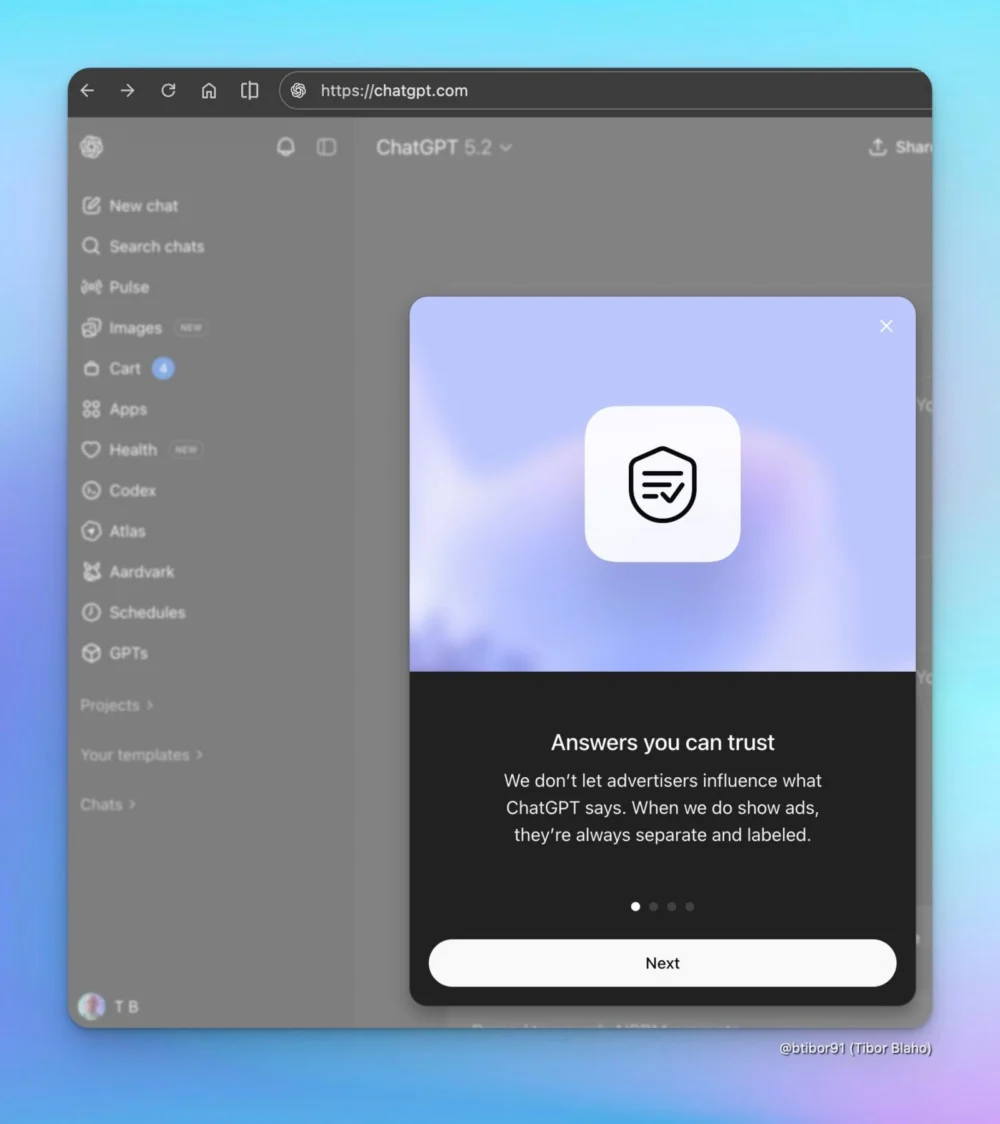

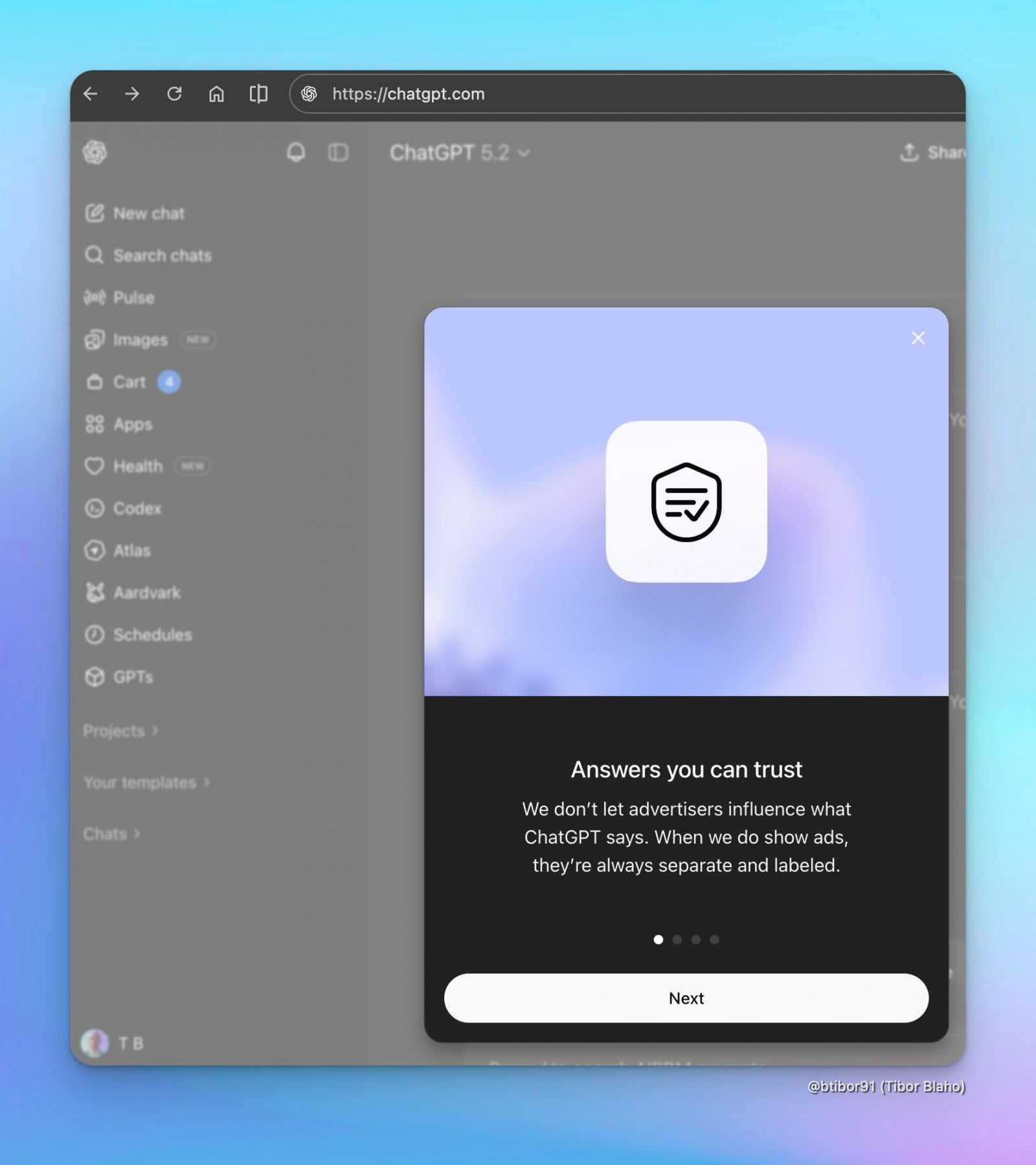

This introduction of advertisements into a tool widely adopted for factual inquiry, creative generation, and complex problem-solving immediately raises fundamental questions about content neutrality and data utilization. OpenAI has proactively sought to address these concerns through explicit assurances embedded within the very mechanism introducing the ads. During the mandatory onboarding process, the company emphasizes a strict separation between promotional content and the model’s generated output. The commitment hinges on the assertion that advertisements will not alter, influence, or corrupt the answers provided by the underlying large language model, regardless of the user’s access tier (free or the entry-level paid accounts). Furthermore, these sponsored placements are mandated to be clearly demarcated and labeled, a necessary design choice to maintain transparency in a context where users implicitly trust the informational provenance of the text they receive.

The Delicate Balance of Contextual Advertising and Integrity

While the promise of answer neutrality is paramount, the mechanics of ad delivery reveal a sophisticated, context-aware approach that treads a fine line. OpenAI has confirmed a non-negotiable boundary: personally identifiable information (PII) will not be shared directly with third-party advertisers. This aligns with general privacy expectations in 2024, though the definition of "personal information" remains a subject of intense scrutiny. However, the nuance lies in the context of the interaction. The system is designed such that the immediate subject matter of the ongoing chat session can indeed serve as a latent variable influencing the selection and display of sponsored advertisements positioned below the generated response.

For instance, a user querying the model about "advanced battery chemistry" might see advertisements for specialized industrial materials or related B2B services. This contextual targeting is a cornerstone of effective digital advertising, yet it requires the model to process and categorize the user’s query intent, even if that categorization does not feed back into the LLM’s training or scoring mechanisms for the output itself. This creates a dynamic where the user’s conversational topic dictates the commercial environment they inhabit momentarily.
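The separation described above, where the conversation topic selects the ad but never touches the answer, can be illustrated with a minimal sketch. Everything here is hypothetical: the keyword table, the `Ad` type, the inventory, and the function names are assumptions made for illustration and do not reflect how OpenAI actually implements ad selection.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical keyword table; a production system would use a far richer classifier.
TOPIC_KEYWORDS = {
    "energy_storage": {"battery", "lithium", "electrolyte", "anode", "cathode"},
    "travel": {"flight", "hotel", "itinerary"},
}


@dataclass(frozen=True)
class Ad:
    sponsor: str
    topic: str
    label: str = "Sponsored"  # every placement carries a visible label


# Hypothetical ad inventory, keyed by topic only (no user identifiers).
AD_INVENTORY = [
    Ad("Example Industrial Materials Co.", "energy_storage"),
    Ad("Example Travel Deals", "travel"),
]


def classify_topic(message: str) -> Optional[str]:
    """Return the topic whose keywords best match the message, or None."""
    words = {w.strip(".,?!\"'").lower() for w in message.split()}
    best_topic, best_hits = None, 0
    for topic, keywords in TOPIC_KEYWORDS.items():
        hits = len(words & keywords)
        if hits > best_hits:
            best_topic, best_hits = topic, hits
    return best_topic


def select_ads(message: str) -> List[Ad]:
    """Pick ads from the coarse topic alone; no PII is read or passed on."""
    topic = classify_topic(message)
    return [ad for ad in AD_INVENTORY if ad.topic == topic]


def render_turn(answer: str, message: str) -> str:
    """Append labeled ads below the answer; the answer text is never modified."""
    lines = [answer]
    for ad in select_ads(message):
        lines.append(f"[{ad.label}] {ad.sponsor}")
    return "\n".join(lines)
```

In this sketch the answer string is passed through unchanged and the ad is chosen from the topic category only, which mirrors the two assurances the article attributes to OpenAI: labeled placements below the response, and no effect on the response itself.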

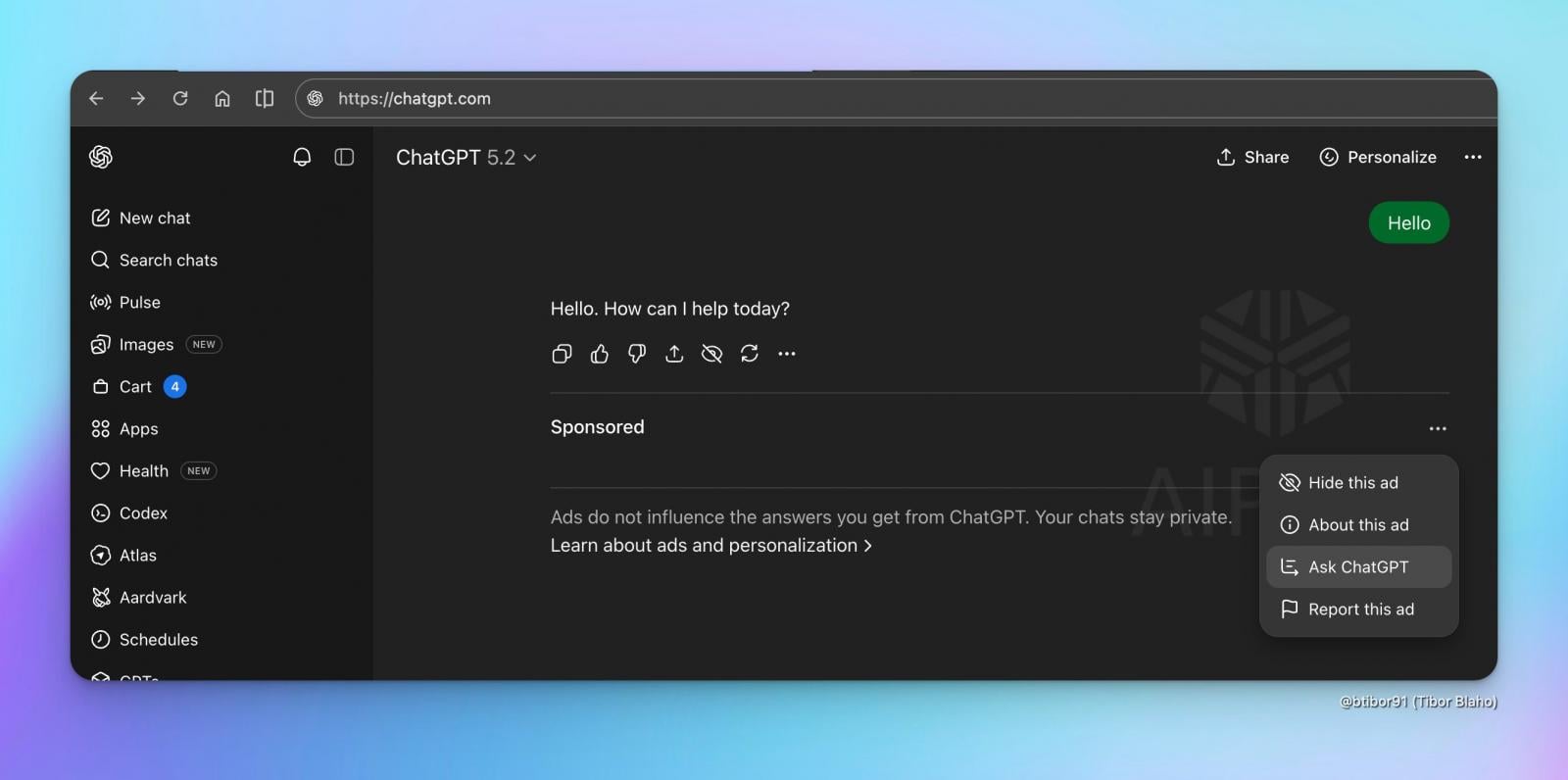

To mitigate potential user discomfort with this level of context awareness, OpenAI is implementing user-facing controls designed to grant a degree of agency over the advertising experience. Users are provided with the ability to actively conceal specific advertisements they deem irrelevant or intrusive. More importantly, they gain access to a transparency mechanism—the ability to view the justification for why a particular sponsored message was displayed. This "why this ad?" feature is a direct countermeasure to the historical opacity of programmatic advertising systems. Furthermore, users retain the power to purge ad-related data logs, offering a localized form of data sanitization divorced from their core conversational history.

Operationalizing Trust: Privacy Controls and Monetization Philosophy

The philosophical justification underpinning this monetization pivot has been articulated by OpenAI as being intrinsically linked to its core mission: ensuring that Artificial General Intelligence (AGI) ultimately benefits all of humanity. The argument suggests that robust, scalable advertising revenue is a necessary engine to democratize access to cutting-edge AI capabilities, effectively subsidizing the high computational costs associated with running models like GPT-4 for free or low-cost users. This positions advertising not as a parasitic element, but as a functional support structure for accessibility.

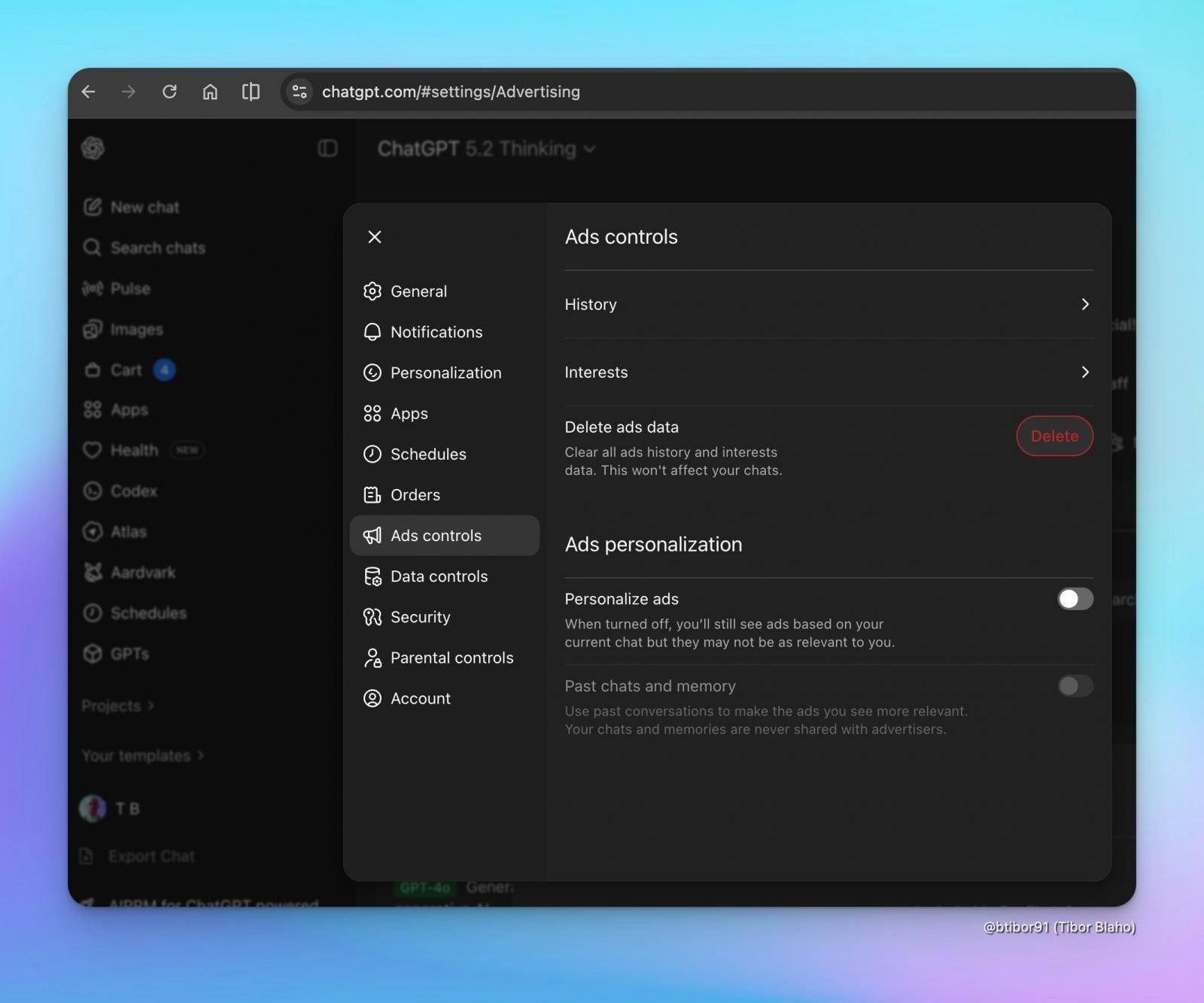

To enforce the privacy assurances, the platform explicitly states that conversations themselves are sequestered from the advertising pipeline, and the company commits to never engaging in the outright sale of user data to external advertising brokers. This commitment is structurally reinforced through the introduction of a dedicated "Ads controls" section within the general settings menu. This control panel serves as a central hub for managing user preferences related to advertising personalization. Users can selectively toggle interest-based ad targeting on or off. Crucially, the platform allows for the deletion of data specifically tagged for advertising purposes without affecting the retention or history of the actual conversational threads, providing a clean segregation of concerns for privacy-conscious users.

It must be noted, however, that the immediate influence of the current conversation on ad selection remains an active factor, even if personalization toggles are managed. This suggests a tiered approach to data usage: immediate context is utilized for real-time ad serving, while long-term behavioral profiles are managed via the explicit personalization settings.

The immediate rollout appears to target the lower tiers of the user base. According to OpenAI documentation, subscription tiers such as Plus, Pro, Business, and Enterprise will remain entirely ad-free. This segmentation establishes a clear value proposition for paid access—a pristine, uninterrupted AI interaction environment—while reserving the ad-supported experience for the high-volume, general access users who drive broad platform adoption and data collection at scale.
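The controls and tiers described in this section can be summarized in a short sketch. It is an illustration under stated assumptions, not OpenAI's implementation: the `Account` fields, the `ad_signals` and `delete_ad_data` functions, and the lowercase tier names are all hypothetical. Only the list of ad-free tiers and the behavior of the controls come from the article.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Tiers the article reports as ad-free (names lowercased for comparison).
AD_FREE_TIERS = {"plus", "pro", "business", "enterprise"}


@dataclass
class Account:
    tier: str
    personalize_ads: bool = True  # hypothetical stand-in for the interest-based toggle
    chat_history: List[str] = field(default_factory=list)
    ad_interest_log: List[str] = field(default_factory=list)  # ad-only data


def ad_signals(account: Account, current_topic: Optional[str]) -> List[str]:
    """Return the signals an ad selector could use for this turn."""
    if account.tier.lower() in AD_FREE_TIERS:
        return []  # paid tiers listed above see no ads at all
    signals: List[str] = []
    if current_topic is not None:
        # Immediate conversation context applies regardless of the toggle.
        signals.append(current_topic)
    if account.personalize_ads:
        # The longer-term interest profile is used only when personalization is on.
        signals.extend(account.ad_interest_log)
    return signals


def delete_ad_data(account: Account) -> None:
    """Clear ad-specific data while leaving conversation history untouched."""
    account.ad_interest_log.clear()
```

For example, a free account with personalization switched off would still yield the current topic as a signal, while calling `delete_ad_data` empties the interest log without altering `chat_history`.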

Industry Implications: The Commoditization of AI Interaction

The integration of advertising into conversational AI marks a significant inflection point for the entire AI sector. For years, the dominant model for consumer-facing AI was either purely subscription-based (like early ChatGPT Plus) or predicated on usage credits. The introduction of an ad-supported tier fundamentally changes the economics, treating the interaction itself as a monetizable commodity, akin to web search or social media feeds.

Democratization vs. Fragmentation: While OpenAI frames this as democratization, industry analysts view it as a necessary step toward commoditizing the base-level AI service. If competitors—such as Google with Gemini or Anthropic with Claude—observe a successful, non-damaging revenue flow from ads, they will inevitably follow suit. This could lead to a fragmentation of the user experience: premium tiers will offer pristine environments, while free tiers become increasingly optimized for engagement metrics derived from ad exposure.

The Trust Premium: OpenAI’s heavy emphasis on answer integrity is a strategic imperative. In the early days of generative AI, user trust was the most valuable, yet fragile, asset. Any perceived manipulation of output—even subtle bias introduced through ad influence—could rapidly erode that trust, leading to user migration. The very existence of the "Ask ChatGPT about it" option is an implicit admission that the potential for mistrust exists and requires an immediate, built-in mechanism for audit and rebuttal.

Data Handling Scrutiny: The context-aware ad targeting, even without PII sharing, will bring increased scrutiny from global data protection agencies. Regulators are increasingly focused on inferential data usage, that is, what can be deduced about a user based on their queries. If a pattern of queries strongly implies a health condition, financial stress, or political leaning, even if the LLM doesn’t explicitly state this, the resulting advertisement selection could trigger privacy violations under frameworks like GDPR or CCPA if that inference is treated as personal data. OpenAI’s implementation will serve as a critical case study for how AI firms can navigate these complex regulatory waters in real-time advertising environments.

Future Trajectory: Evolving Ad Formats and Ecosystem Integration

Looking ahead, the current integration is likely a rudimentary first step. As OpenAI refines its advertising infrastructure, several trends are anticipated:

- Native Integration: We can expect ads to become less like discrete blocks and more deeply woven into the generative process. This could manifest as suggested "next steps" that are sponsored, or the AI dynamically referencing sponsored tools or services as part of a solution. For instance, if a user asks for code debugging, the model might suggest using a specific, sponsored IDE feature.

- API Monetization for Ads: The ultimate monetization strategy may involve using the ad-supported tier to drive adoption of developer tools and the API. If free users become accustomed to certain sponsored workflows, they may demand those same integrations when they transition to using OpenAI products within their own enterprise applications, creating a downstream revenue benefit.

- The Rise of "Ad-Free" LLMs as a Status Symbol: As the free tier becomes saturated with commercial messaging, the appeal of the paid tiers will intensify, driven less by access to faster models and more by the psychological value of an uninterrupted, focused cognitive space. The ability to pay to not be targeted will become a significant, tangible benefit of subscription models.

The move to integrate advertising into ChatGPT is a monumental shift, signaling that the era of entirely free, unrestricted access to frontier AI models is concluding. OpenAI is attempting to engineer a novel equilibrium where necessary revenue generation coexists with core principles of informational integrity and user privacy. The success of this delicate balancing act will define the economic model for conversational AI for the foreseeable future, setting precedents for how trust, commerce, and cognitive assistance interact in the next generation of digital platforms.