The cybersecurity landscape is shifting as artificial intelligence is integrated not only into defensive measures but also into the tooling used by sophisticated threat actors. A recent investigation into a high-profile Interlock ransomware operation illustrates the trend: it uncovered a previously unseen malware component, provisionally named Slopoly. This persistent backdoor, deployed during the intrusion, bears hallmarks suggesting that its creation was significantly accelerated, if not entirely authored, by generative AI, specifically Large Language Models (LLMs). The finding signals that the barrier to developing custom malware tooling is falling rapidly for financially motivated cybercriminal groups.

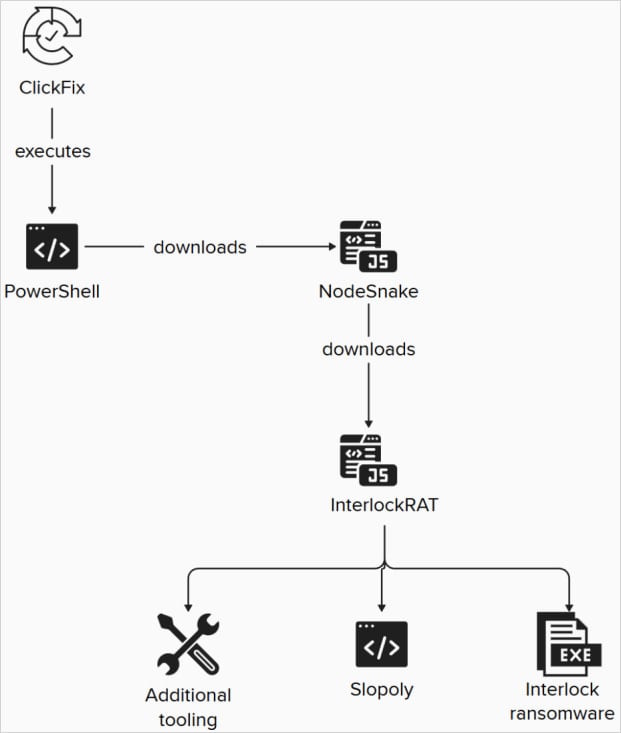

The campaign, attributed by IBM X-Force researchers to the financially driven collective tracked as Hive0163—a group focused intensely on large-scale data exfiltration preceding ransomware deployment—demonstrated a multi-stage intrusion. The initial compromise leveraged a well-known social engineering lure dubbed "ClickFix," a tactic Interlock has previously employed effectively against organizational targets. Following initial access, the threat actors maintained a clandestine foothold for over a week, during which reconnaissance and data staging likely occurred, culminating in the deployment of the Slopoly PowerShell script. This script functioned as a client interfacing with the actor’s Command and Control (C2) infrastructure, establishing a durable communication channel.

The analysis of the Slopoly script itself offers compelling, albeit indirect, evidence of AI involvement. Human-developed malware, particularly in the early stages of an operation, often prioritizes obfuscation and minimal verbosity to reduce artifact footprint. Slopoly, conversely, exhibited characteristics traditionally antithetical to clandestine malware: exceptionally thorough inline commentary, rigorously structured logging mechanisms, comprehensive error handling routines, and variables assigned with descriptive, human-readable names. Security researchers noted that such structural cleanliness and didactic coding practices are highly atypical for malware crafted solely by human adversaries under operational constraints. While the specific LLM used to generate the script remains undetermined, the output strongly suggests the use of a model trained to produce clean, well-documented code based on developer prompts.

This observation directly challenges conventional assumptions about malware development velocity. If threat actors can leverage AI to rapidly generate functional, customized backdoors—even if the resulting code is technically "unsophisticated" in its execution—the sheer speed of deployment and iteration becomes a significant operational advantage. Slopoly, despite its functional simplicity, acts as a proof-of-concept for AI-accelerated malware development within active ransomware supply chains.

Slopoly’s Nomenclature Discrepancy and Functional Analysis

Intriguingly, comments embedded within the Slopoly script itself labeled the tool as a “Polymorphic C2 Persistence Client.” However, the detailed forensic review conducted by X-Force failed to uncover any intrinsic capability for runtime code mutation, the defining characteristic of true polymorphism. This discrepancy is significant. It suggests that either the AI model hallucinated the "polymorphic" attribute based on a prompt, or the actor building the final payload stripped out the polymorphism feature while retaining the descriptive comment, perhaps for simplicity or because the builder tool handled variability in configuration rather than the script logic itself.

As the IBM report clarified, the script does not exhibit the advanced evasion techniques associated with true polymorphic engines. Instead, the variability appears to stem from the builder process. Researchers posit that a builder assembles each client by injecting randomized configuration values, such as the C2 beaconing interval, mutex names used for process identification, unique session identifiers, and the hardcoded C2 addresses. This practice is standard for malware builders across many families; what is new here is that the underlying template code appears to be AI-generated. Because each deployment receives a unique variant, every sample has a different file hash, which helps it evade signature-based detection that relies on static hashes.
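To illustrate why configuration-only variation defeats static hash matching, the following is a minimal defensive sketch. The template text, variable names, and values are hypothetical placeholders rather than actual Slopoly content, and the normalization rule (blanking the right-hand side of simple assignments) is an assumption chosen for illustration, not a documented X-Force technique.

```python
import hashlib
import re

# Hypothetical stand-in for builder output: identical logic, different injected config.
TEMPLATE = (
    "$C2Url = '{c2}'\n"
    "$BeaconSeconds = {interval}\n"
    "$MutexName = '{mutex}'\n"
    "$SessionId = '{session}'\n"
    "# ... identical client logic would follow here ...\n"
)

# Matches simple `$Name = value` assignments that start a line.
ASSIGNMENT = re.compile(r"^(\$\w+[ \t]*=[ \t]*).*$", re.MULTILINE)


def sha256_hex(text: str) -> str:
    """Plain SHA-256 of the script text, as a static signature would see it."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def normalized_sha256(text: str) -> str:
    """Hash the script after replacing assigned values with a fixed token."""
    return sha256_hex(ASSIGNMENT.sub(r"\1<CONFIG>", text))


variant_a = TEMPLATE.format(
    c2="https://c2-a.example", interval=30, mutex="Global\\a1b2", session="1111"
)
variant_b = TEMPLATE.format(
    c2="https://c2-b.example", interval=47, mutex="Global\\c3d4", session="2222"
)

print(sha256_hex(variant_a) == sha256_hex(variant_b))                # raw hashes differ
print(normalized_sha256(variant_a) == normalized_sha256(variant_b))  # normalized hashes match
```

The raw hashes differ even though the logic is identical, while the normalized hashes agree. In practice, a normalization this simple would be easy to evade, so it is best read as a demonstration of the principle rather than a detection rule.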

Functionally, Slopoly is designed for persistent remote access and modular execution. It establishes residency in the non-standard but often overlooked directory C:\ProgramData\Microsoft\Windows\Runtime. Its command set is typical of a remote access tool (RAT) or backdoor designed to facilitate the next stages of an attack. It can download and execute arbitrary payloads (EXE, DLL, or scripts such as JavaScript), run system shell commands and relay their output to the operator, adjust its check-in (beaconing) frequency on demand, update itself, and terminate its own process cleanly.

The broader attack chain observed during this Interlock incident underscores the maturity of the Hive0163 operation. The initial ClickFix lure, which typically tricks a user into pasting and running a malicious command under the pretext of fixing an error or completing a verification step, set the stage. Once inside, the threat actors did not rely on Slopoly alone. They also deployed other established tools, including the NodeSnake RAT and the InterlockRAT backdoor. This layering of persistence mechanisms provides redundancy: if one backdoor is discovered and purged, other access channels remain available for data staging before the final ransomware deployment.

Background and Operational Context of Interlock

Interlock ransomware itself is a relatively recent entrant, having surfaced prominently in 2024. Despite its youth, the group behind it has rapidly developed a reputation for aggressive targeting and adaptability. They were notably early adopters of the ClickFix social engineering methodology, and subsequently integrated the "FileFix" variant, further demonstrating a commitment to refining initial access vectors.

The group’s target profile suggests a calculated, high-yield extortion strategy that frequently affects critical sectors. Victims of prior attacks claimed by the Interlock operators include the Texas Tech University System, the healthcare provider DaVita, Kettering Health, and municipal infrastructure such as the city of Saint Paul, Minnesota. These high-value targets point to Hive0163’s focus on organizations where downtime and data exposure carry maximum financial and reputational penalties.

The final ransomware payload, when deployed, is described as a 64-bit Windows executable introduced via the JunkFiction loader. It exhibits operational sophistication, capable of establishing execution persistence as a scheduled task running with SYSTEM-level privileges. Crucially, it employs the legitimate Windows Restart Manager API to preemptively unlock files that might otherwise be inaccessible due to active use, a technique designed to maximize encryption coverage. The encrypted files are marked with distinct extensions, specifically .!NT3RLOCK or .int3R1Ock.
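As a rough triage aid, the reported file markers can be searched for on disk. The sketch below is a minimal example that assumes the two extensions exactly as listed in this article; they should be verified against current threat intelligence before use. The function names are hypothetical.

```python
from pathlib import Path
from typing import List

# Extensions as listed in this article; compared case-insensitively.
REPORTED_EXTENSIONS = (".!nt3rlock", ".int3r1ock")


def has_reported_extension(name: str) -> bool:
    """Return True if a file name ends with one of the reported markers."""
    return name.lower().endswith(REPORTED_EXTENSIONS)


def find_marked_files(root: str) -> List[Path]:
    """Recursively list files under `root` whose names carry a reported marker."""
    return sorted(
        p for p in Path(root).rglob("*")
        if p.is_file() and has_reported_extension(p.name)
    )
```

Calling `find_marked_files` on a share or user profile directory returns any matching paths. A match indicates that encryption has already happened, so this supports scoping an incident rather than preventing one.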

Industry Implications: The Democratization of Complexity

The primary industry implication of the Slopoly discovery is the formal acknowledgment that Large Language Models are now being effectively weaponized to enhance threat actor capabilities. This development fundamentally shifts the risk calculus for defenders. Historically, developing custom, effective malware required specialized coding expertise, time, and significant resources—a bottleneck that often limited the arsenals of smaller or newer threat groups.

AI tools effectively democratize this complexity. An actor with moderate scripting knowledge, armed with an LLM capable of generating complex PowerShell logic, can now potentially produce functional, tailored malware variants faster than security vendors can develop detection signatures. This accelerates the "time-to-deploy" for new tooling and complicates threat hunting efforts. Security teams must now account for code that is unusually clean, well-commented, or structured in ways that mimic professional software development patterns, even when found in the context of malicious payloads.

Furthermore, the attribution analysis hints at links within the broader cybercriminal ecosystem. IBM suggests Hive0163 might maintain ties to developers associated with other established malware families, including Broomstick, SocksShell, PortStarter, and SystemBC, as well as to the Rhysida ransomware operators. Such connections imply a network of shared tooling, expertise, and possibly development infrastructure, which could allow AI-generated components to spread across multiple financially motivated groups.

Expert Analysis: Beyond Sophistication to Operational Velocity

From an expert perspective, the Slopoly case highlights a critical nuance: AI doesn’t necessarily create more sophisticated malware (Slopoly itself is described as unsophisticated), but rather more accessible and faster-to-iterate malware. True sophistication often lies in complex evasion techniques, anti-analysis measures, and proprietary encryption algorithms. Slopoly appears to be an efficiency tool—a robust, easily deployed remote access mechanism generated with AI assistance to secure persistent C2 connectivity quickly.

The key lesson here is the move from handcrafted, artisanal malware to modular, AI-assembled components. If the foundational logic can be prompted from an LLM, the threat actor’s time is freed up to focus on the unique, human-driven aspects of the attack chain: the initial social engineering (ClickFix), the selection of high-value targets (like universities or healthcare systems), and the exfiltration strategy.

The concept of "polymorphic" code, even when inaccurately applied by the generated script, points toward an adversarial understanding of defensive evolution. Threat actors are aware that defenders rely on pattern matching and signature databases. By using a builder that randomizes configuration values—even without true polymorphic mutation—they are forcing defenders to create broader, potentially less precise detection rules, or to rely exclusively on behavioral analysis.

Future Impact and Defensive Strategy

The proliferation of AI-assisted malware necessitates a fundamental pivot in defensive strategy. Signature-based defenses, which rely on identifying known malicious file artifacts, will become increasingly brittle against AI-generated tools that can be reconfigured instantly.

Future defenses must prioritize behavioral analytics and zero-trust architecture. Security operations centers (SOCs) need Endpoint Detection and Response (EDR) solutions capable of modeling normal process behavior and flagging anomalies, such as PowerShell scripts exhibiting overly structured logging or unusual C2 beaconing patterns, regardless of how "clean" the code appears. Monitoring for the functional chain (a ClickFix lure leading to PowerShell execution, followed by lateral movement or payload download) will matter more than analyzing the static file itself.
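One simple way to reason about "unusual C2 beaconing patterns" is to measure how regular a host's outbound connections to a single destination are. The sketch below is an illustrative heuristic, not a description of any particular EDR product. It scores regularity using the coefficient of variation of the gaps between connections, and the thresholds `max_cv` and `min_events` are assumed values that would need tuning on real telemetry.

```python
from statistics import mean, pstdev
from typing import Optional, Sequence


def beacon_score(timestamps: Sequence[float]) -> Optional[float]:
    """Coefficient of variation of inter-arrival gaps; lower means more regular."""
    if len(timestamps) < 3:
        return None  # too few events to judge regularity
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average_gap = mean(gaps)
    if average_gap == 0:
        return None
    return pstdev(gaps) / average_gap


def looks_like_beacon(
    timestamps: Sequence[float], max_cv: float = 0.1, min_events: int = 6
) -> bool:
    """Flag connection series that are both frequent enough and highly regular."""
    score = beacon_score(timestamps)
    return len(timestamps) >= min_events and score is not None and score <= max_cv


# Connection times in seconds to one destination (made-up example data).
regular = [0, 30, 60.5, 90, 120.2, 150]
bursty = [0, 4, 95, 100, 340, 342]
print(looks_like_beacon(regular), looks_like_beacon(bursty))
```

A client that can change its check-in frequency on command, or one that adds jitter, would weaken this signal, so a check like this is best combined with process lineage and destination reputation rather than used alone.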

Furthermore, organizations must invest in advanced threat intelligence platforms that can rapidly ingest and analyze emerging malware samples, looking specifically for the tell-tale structural fingerprints of LLM generation, such as unusual commenting density or code structure. This proactive intelligence gathering will be essential for preemptively updating behavioral rules before these AI-assisted tools become widely adopted across the broader criminal underground. The Slopoly incident serves as an early warning: the age of generative cyber tooling is not a distant threat; it is actively being integrated into the most impactful ransomware operations today.
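Commenting density, one of the structural fingerprints mentioned above, is straightforward to measure. The following minimal sketch computes the share of non-blank lines in a PowerShell script that are full-line `#` comments or fall inside a `<# ... #>` block comment. It ignores trailing comments on code lines, and any alerting threshold built on it would be an assumption. The sample script is an invented, harmless placeholder.

```python
def comment_density(script: str) -> float:
    """Fraction of non-blank lines that are full-line or block comments."""
    in_block = False
    comment_lines = 0
    total_lines = 0
    for raw_line in script.splitlines():
        line = raw_line.strip()
        if not line:
            continue  # blank lines do not count either way
        total_lines += 1
        if in_block:
            comment_lines += 1
            if "#>" in line:
                in_block = False
        elif line.startswith("<#"):
            comment_lines += 1
            if "#>" not in line:
                in_block = True
        elif line.startswith("#"):
            comment_lines += 1
    return comment_lines / total_lines if total_lines else 0.0


sample = """<#
Overview of the script
#>
# Configure logging
$LogPath = 'x'

# Main loop
Write-Output 'hi'
"""
print(round(comment_density(sample), 3))
```

Well-documented administrative scripts will also score highly, so this metric is a weak signal on its own. It is more useful for ranking samples that are already suspicious for other reasons.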