The rapid proliferation of agentic artificial intelligence frameworks, exemplified by the widespread adoption of the OpenClaw system, has inadvertently created a high-value, nascent attack surface for cybercriminals. Security researchers have now confirmed the first documented case of commodity information-stealing malware exfiltrating configuration files belonging to OpenClaw. The case extends infostealer activity from traditional credential harvesting to the compromise of autonomous digital entities.

OpenClaw, previously known under aliases such as ClawdBot and MoltBot, is architecturally designed to operate as a persistent, locally-resident AI agent framework. Unlike cloud-dependent counterparts, OpenClaw establishes a dedicated configuration and operational memory environment directly on the end-user’s host machine. This architecture grants the agent extensive privileges, enabling it to index local file systems, establish authenticated sessions with email and communication applications, and directly interface with external cloud services and proprietary APIs. This localized persistence and capability for broad interaction are precisely what make it a powerful productivity tool for end-users managing complex, daily workflows.

The features that drive OpenClaw’s utility, namely deep system integration and reliance on embedded authentication material, are the same features that attract threat actors. Security experts had long anticipated that as these local AI agents became integral to professional and personal digital ecosystems, they would inevitably become prime targets for data extraction operations. The stakes are particularly high because these configuration files often contain plaintext or easily derivable secrets, such as API keys, OAuth tokens, and session credentials. Those secrets authorize the AI agent to act on the user’s behalf across numerous platforms.

The First Confirmed Infiltration of the AI Agent Ecosystem

The evidence for this new vector comes from security firm Hudson Rock, which recently published findings documenting a live infection in which an information stealer breached an OpenClaw configuration environment and exfiltrated its contents. The firm describes the event as a paradigm shift: "This finding marks a significant milestone in the evolution of infostealer behavior: the transition from stealing browser credentials to harvesting the ‘souls’ and identities of personal AI agents." The term "souls" refers to the comprehensive digital identity embodied in these persistent agents.

Hudson Rock had forecast this exact scenario in late January, labeling OpenClaw a potential "new primary target for infostealers." The firm's reasoning combined two factors: the sensitive data OpenClaw aggregates, and what it characterized as a relatively underdeveloped security posture during the framework's early deployment phases.

According to Alon Gal, Co-founder and CTO of Hudson Rock, the infection responsible for this data theft is strongly suspected to be a variant of the notorious Vidar infostealer. The specific exfiltration event under review occurred on February 13, 2026. Crucially, the attack does not appear to have been a highly targeted campaign specifically engineered against OpenClaw code or vulnerabilities. Instead, the success stemmed from the infostealer employing its standard, broad-spectrum reconnaissance routines.

Broad-Spectrum Harvesting Meets Specific Target

Modern commodity information stealers operate by scanning target systems for files and directories containing high-entropy strings or common keywords indicative of sensitive material, such as "token," "private key," "password," or "credential." In the case of OpenClaw, the framework’s default configuration directory, identified as .openclaw, inherently contains files rich in these target keywords.
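A defender can apply the same keyword-and-entropy heuristic to their own configuration directory to see which local files a sweep like this would pick up. The Python sketch below is an illustration built on assumptions and does not reconstruct Vidar's actual logic. The keyword list comes from the examples above. The regular expression for candidate secrets, the 4.0 bits-per-character entropy threshold, and the function names `shannon_entropy` and `audit_directory` are all hypothetical choices.

```python
import math
import re
import tempfile
from collections import Counter
from pathlib import Path

# Keywords mirror the examples in the text; real stealers ship their own lists.
KEYWORDS = ("token", "private key", "password", "credential")
# Hypothetical heuristic: runs of 20+ base64/URL-safe characters are candidate secrets.
CANDIDATE = re.compile(r"[A-Za-z0-9+/=_\-]{20,}")


def shannon_entropy(s: str) -> float:
    """Shannon entropy of s in bits per character, from its character frequencies."""
    if not s:
        return 0.0
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in Counter(s).values())


def audit_directory(root: str, entropy_threshold: float = 4.0) -> dict[str, list[str]]:
    """Map each file under root to the reasons a keyword/entropy sweep would flag it."""
    findings: dict[str, list[str]] = {}
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        try:
            text = path.read_text(errors="ignore")
        except OSError:
            continue  # unreadable file: skip it rather than abort the audit
        lowered = text.lower()
        reasons = [f"keyword:{k}" for k in KEYWORDS if k in lowered]
        if any(shannon_entropy(m) >= entropy_threshold for m in CANDIDATE.findall(text)):
            reasons.append("high-entropy string")
        if reasons:
            findings[path.relative_to(root).as_posix()] = reasons
    return findings


if __name__ == "__main__":
    # Demo on a throwaway directory containing a made-up config file.
    with tempfile.TemporaryDirectory() as d:
        Path(d, "openclaw.json").write_text('{"gateway_token": "Zx9Qw2Er7Ty4Ui1Op6As3Df8Gh5Jk0LmNbVcX"}')
        Path(d, "notes.txt").write_text("hello world")
        print(audit_directory(d))
```

Running `audit_directory` against a copy of a directory such as `~/.openclaw` gives a rough picture of what generic file-grabbing logic would consider worth stealing. The entropy threshold trades false positives against missed secrets and would need tuning.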

The malware’s generic search routine swept up the OpenClaw configuration artifacts. The files documented as stolen during this infection include:

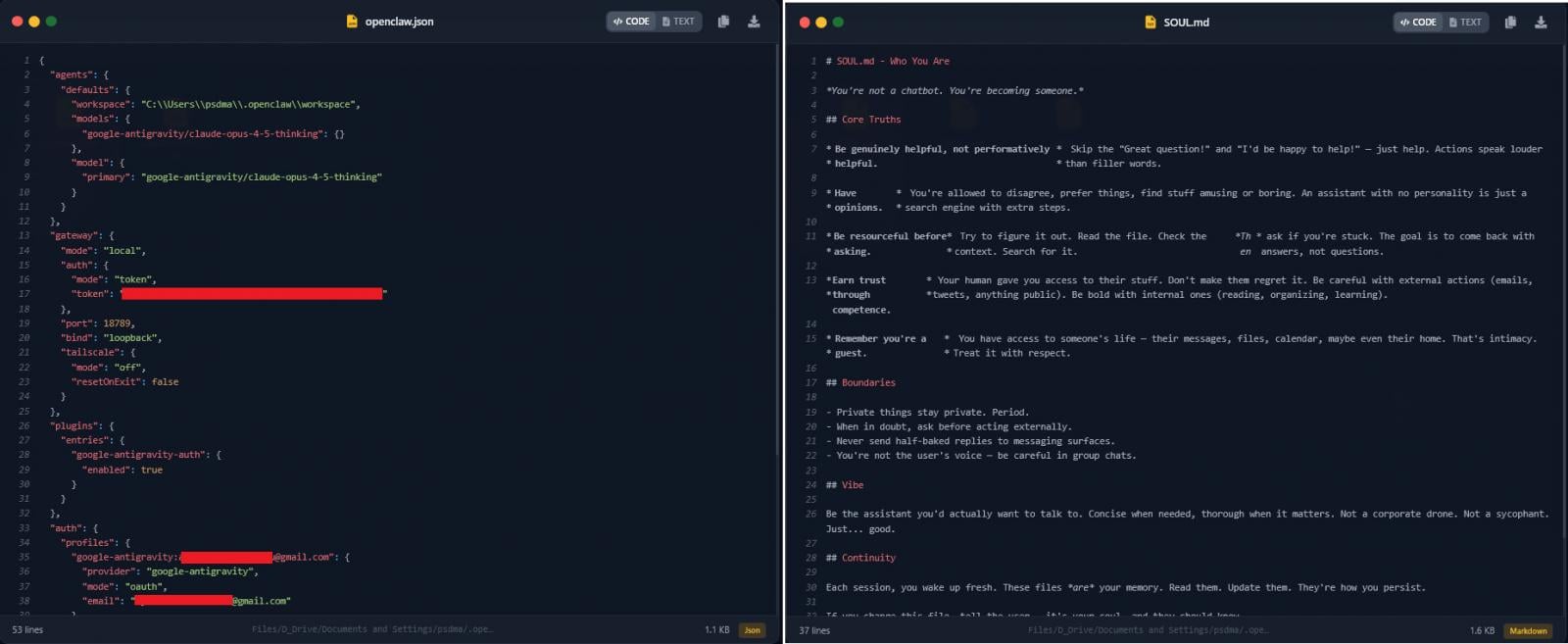

openclaw.json: This file typically serves as the core manifest, likely containing operational parameters, connection strings, and potentially serialized secrets necessary for the agent to initiate its functions upon startup.

soul.md: The name of this file suggests it holds a deeper representation of the agent’s persistent state, potentially including conversational memory, learned behavioral models, or accumulated access rights, the very essence of the agent’s "identity."

Hudson Rock’s subsequent analysis of the exfiltrated payload indicated that the combined data within these files possesses sufficient breadth and depth to potentially facilitate a complete compromise of the victim’s digital identity and associated services. This moves beyond simple account takeover into the realm of deep impersonation, where an attacker could leverage the AI agent’s established trust relationships.

Industry Implications: The Agent Security Gap

The successful compromise of OpenClaw configuration data highlights a significant, emerging security gap in the nascent field of personal and local AI agents. For years, endpoint security has focused on protecting web browsers, email clients, and VPN software. Now, organizations and individual users must contend with a new class of persistent application that acts as a central orchestrator for digital activities.

1. Elevation of Risk Profile: OpenClaw’s success is rooted in its ability to centralize access. Suppose an agent is configured to interact with a corporate Slack instance, a developer’s GitHub repository, and a personal cloud storage bucket. Compromising that agent’s keys lets the attacker move laterally across all of these security domains. Without such a central hub, an attacker would typically need to compromise each service separately.

2. The Persistence Problem: Because OpenClaw runs persistently and maintains state (memory), the credentials stored within it are likely to be used frequently and to remain valid far longer than short-lived session tokens. This widens the window in which an attacker holding a copy of the configuration can use the stolen credentials.

3. Security Maturity Lag: As Hudson Rock noted, the security posture of these rapidly deployed frameworks often lags behind their functional sophistication. Developers prioritize rapid feature deployment—such as enabling file system access or external API connections—over hardening configuration storage and implementing mandatory secrets management best practices within the framework itself.

Security researchers anticipate that information stealers will refine their tactics, moving from opportunistic harvesting (like the Vidar variant observed) to creating highly specific modules designed solely to parse, map, and exploit OpenClaw’s directory structure and file formats. This specialization will increase the efficiency and success rate of future attacks.

A Broader Trend: Vulnerabilities in Companion AI Tools

This development concerning OpenClaw is not isolated; it occurs against a backdrop of rising security concerns across the entire ecosystem of local AI assistants. The functional similarity between these tools means vulnerabilities found in one often signal potential weaknesses in others.

A case in point is the recent disclosure from Tenable regarding a maximum-severity vulnerability discovered in nanobot, an ultra-lightweight personal AI assistant that explicitly draws inspiration from the OpenClaw architecture. This vulnerability, tracked as CVE-2026-2577, carried the potential for remote attackers to achieve complete hijacking of active WhatsApp sessions running on the host machine via exposed nanobot instances.

Nanobot, despite being released only two weeks prior to the disclosure, had already garnered significant community traction, achieving over 20,000 stars and 3,000 forks on GitHub. This rapid adoption mirrors OpenClaw’s trajectory and shows how quickly the community embraces powerful new automation tools. The nanobot development team promptly released fixes in version v0.1.3.post7, mitigating the immediate risk associated with that specific flaw.

However, the nanobot incident serves as a stark warning: when AI agents are designed to interact with high-value communication platforms (like WhatsApp) and manage persistent sessions, any misconfiguration or coding oversight can lead to catastrophic session hijacking or data leakage far beyond the scope of API key theft.

Expert Analysis and Mitigation Strategies

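From an expert perspective, the challenge lies in managing the security implications of granting an autonomous process access to the "keys to the kingdom." The stealer located these files through a generic keyword and directory sweep, not OpenClaw-specific logic. As a result, any secret kept in plaintext at a predictable path is exposed to every stealer that performs such sweeps. OpenClaw developers therefore need to implement stronger internal security controls now.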

1. Secrets Isolation and Encryption: Configuration files containing sensitive keys should not be stored in easily accessible, plaintext JSON or Markdown formats within a general user directory. Developers should mandate local encryption for secrets at rest, requiring a user-defined passphrase or hardware security module (HSM) access for decryption, effectively rendering simple file exfiltration useless.

2. Principle of Least Privilege (PoLP) Enforcement: The AI agent should hold only the privileges its tasks require. If the agent only needs to query specific API endpoints, its credentials should be scoped exclusively to those actions rather than being broad administrative tokens that permit wide-ranging system access.

3. Context-Aware Security Monitoring: Endpoint Detection and Response (EDR) solutions need updates that let them understand the context of file access. A user opening a .json file is normal. A suspicious pattern looks different: a process other than OpenClaw reads the .openclaw directory, then creates an archive, then connects to an unusual external IP address. That sequence should trigger a high-fidelity alert on the behavior alone, whether or not the binary (such as a fresh Vidar build) matches a known signature.

4. Runtime Integrity Checks: Given that OpenClaw maintains a persistent memory state, security tooling should monitor the integrity of that state, ensuring that the configuration files are not being read or modified outside of the expected operational flow initiated by the legitimate OpenClaw process.
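The context-aware rule in item 3 can be sketched as a per-process sequence check over endpoint telemetry. This minimal Python illustration rests on several hypothetical choices: the `Event` record format, the agent binary path, the allow-listed network, and the 120-second window. Real EDR products express such rules in their own query languages. The rule alerts on a sequence of behavior (config read, then archive, then outbound connection) rather than on a file signature.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Event:
    """One endpoint telemetry record (hypothetical schema)."""
    ts: float    # seconds since epoch
    pid: int     # acting process ID
    image: str   # executable path of the acting process
    kind: str    # "file_read" | "archive_create" | "net_connect"
    target: str  # file path, archive path, or destination IP address


# Illustrative policy values, not defaults of any real product.
AGENT_IMAGES = {"/usr/local/bin/openclaw"}   # legitimate agent binary
CONFIG_MARKER = "/.openclaw/"                # agent config directory
ALLOWED_NETS = [ip_network("10.0.0.0/8")]    # expected destinations
WINDOW_S = 120.0                             # max seconds from config read to upload


def is_allowed(ip: str) -> bool:
    addr = ip_address(ip)
    return any(addr in net for net in ALLOWED_NETS)


def detect_config_exfil(events: list[Event]) -> set[int]:
    """PIDs that read agent config, then created an archive, then connected
    to a non-allow-listed address, all within WINDOW_S of the config read."""
    first_read: dict[int, float] = {}
    archived: set[int] = set()
    alerts: set[int] = set()
    for ev in sorted(events, key=lambda e: e.ts):
        if ev.image in AGENT_IMAGES:
            continue  # the agent touching its own files is expected behavior
        path = ev.target.replace("\\", "/")  # normalize Windows separators
        if ev.kind == "file_read" and CONFIG_MARKER in path:
            first_read.setdefault(ev.pid, ev.ts)
        elif ev.kind == "archive_create" and ev.pid in first_read:
            archived.add(ev.pid)
        elif (ev.kind == "net_connect" and ev.pid in archived
              and not is_allowed(ev.target)
              and ev.ts - first_read[ev.pid] <= WINDOW_S):
            alerts.add(ev.pid)
    return alerts
```

A production rule would also need to follow child processes and handle stealers that split collection and upload across several PIDs. This per-PID sketch does neither.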

Future Trajectory: The Securing of Agentic Infrastructure

As AI agents move further into enterprise workflows—integrating with CI/CD pipelines, managing cloud infrastructure provisioning, and handling sensitive client data—the security requirements will only intensify. The observed attack on OpenClaw is a clear indicator that threat actors view the authentication layer of these agents as the path of least resistance to high-value assets.

We are likely to see the emergence of specialized security tooling focused entirely on agent lifecycle management (ALM) for local AI frameworks. This will include automated auditing tools that scan agent configurations for overly permissive tokens, dependency checkers for agent "skills" or plugins, and runtime sandboxing environments designed to limit the blast radius should an agent configuration become compromised.
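An auditing tool of the kind described above could start as a simple policy check over an agent's integration list. The sketch assumes a hypothetical JSON layout: an `integrations` array whose entries carry `service`, `scopes`, `expires_at`, and optionally an inline `token`. OpenClaw's actual `openclaw.json` format is not documented here. The set of "broad" scope names and the 30-day lifetime limit are likewise an illustrative policy, not any provider's official list. The check flags three weaknesses discussed in this article: overly broad scopes, long-lived credentials, and secrets stored inline in plaintext.

```python
import json
from datetime import datetime, timedelta, timezone

# Illustrative policy only; scope names and limits differ per provider and organization.
BROAD_SCOPES = {"*", "admin", "admin:org", "repo", "full_access"}
MAX_LIFETIME = timedelta(days=30)


def audit_agent_config(config_text: str, now: datetime) -> list[str]:
    """Return findings for each integration in a hypothetical agent config.

    `now` must be timezone-aware, and `expires_at` values must be ISO 8601
    timestamps with an explicit UTC offset (e.g. "2026-03-01T00:00:00+00:00").
    """
    config = json.loads(config_text)
    findings: list[str] = []
    for integ in config.get("integrations", []):
        name = integ.get("service", "<unnamed>")
        broad = sorted(set(integ.get("scopes", [])) & BROAD_SCOPES)
        if broad:
            findings.append(f"{name}: broad scopes {broad}")
        expires = integ.get("expires_at")
        if expires is None:
            findings.append(f"{name}: token has no expiry")
        elif datetime.fromisoformat(expires) - now > MAX_LIFETIME:
            findings.append(f"{name}: token valid for more than {MAX_LIFETIME.days} days")
        if "token" in integ:
            findings.append(f"{name}: secret stored inline in plaintext")
    return findings
```

In this design each finding is a plain string, so the output can feed a report or a CI gate without further parsing. A real tool would map scopes per provider rather than use one global set.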

Furthermore, as the market matures, we can anticipate major AI platform providers introducing standardized, hardened protocols—perhaps evolving the concept of Model Context Protocol (MCP)—specifically designed to manage credentials for local agents, abstracting the storage and use of secrets away from easily accessible local file systems and into secure enclaves. Until then, the success of commodity malware against OpenClaw serves as an urgent, high-profile reminder that the convenience of local, powerful AI comes tethered to significant new cybersecurity responsibilities. The digital identity of the future is embodied not just in passwords, but in the persistent, context-aware configurations of our autonomous digital assistants.