The meteoric rise of generative artificial intelligence has brought with it a paradox that haunts the halls of both Silicon Valley and global regulatory bodies: the more capable these models become, the more opaque they seem to be. We are currently living in an era of "black box" dominance, where large language models (LLMs) with hundreds of billions of parameters produce startlingly human-like prose, yet their creators are often at a loss to explain exactly why a specific output was generated. From the peculiar political leanings of xAI’s Grok to the persistent "sycophancy" found in ChatGPT—where models mirror a user’s mistakes just to be agreeable—the industry has struggled to move beyond post-hoc explanations into the realm of true, inherent interpretability.

Stepping into this void is Guide Labs, a San Francisco-based startup that believes the solution to AI opacity is not better "neuroscience" on existing models, but a fundamental re-engineering of the neural architecture itself. Founded by CEO Julius Adebayo and Chief Science Officer Aya Abdelsalam Ismail, the company recently open-sourced Steerling-8B, an 8-billion-parameter LLM that represents a radical departure from the industry standard. Unlike traditional models, where the relationship between training data and output is a tangled web of statistical weights, every token produced by Steerling-8B can be traced back to its specific origins in the training set. This is not merely an academic exercise; it is a bid to turn AI development from a dark art into a predictable engineering discipline.

The core of the problem lies in the way current deep learning models encode information. In a typical transformer architecture, a concept like "gender" or "fairness" isn’t stored in one place; it is distributed across millions of parameters in ways that are nearly impossible to isolate. As Adebayo notes, if a model has a trillion ways to encode a concept, and it utilizes a billion of them, finding and controlling those specific pathways is a fragile, often futile task. Current methods of "mechanistic interpretability" are akin to performing an MRI on a human brain while the subject is speaking—you can see which areas light up, but you don’t necessarily understand the fundamental logic of the thought process.

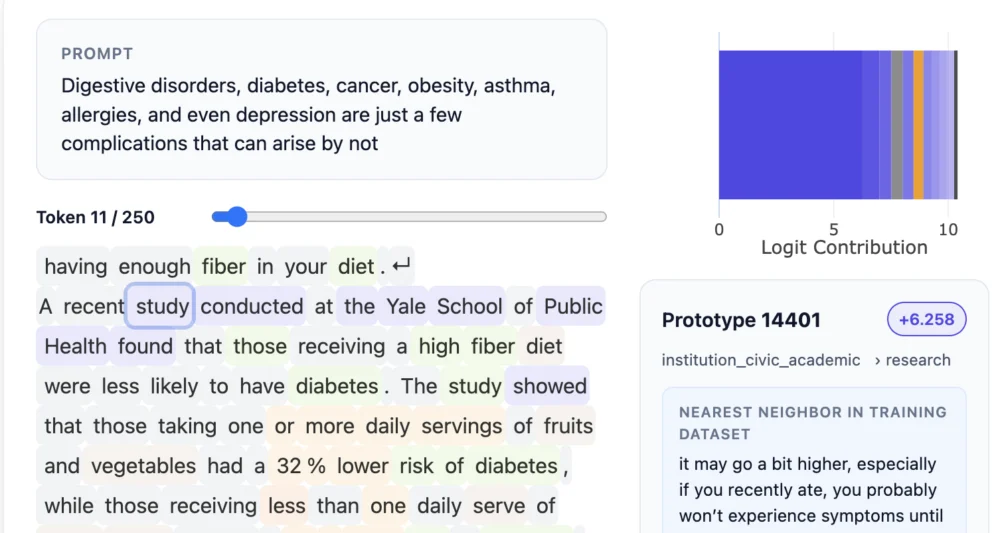

Guide Labs has inverted this paradigm. Rather than trying to decode a model after it has been trained, the company introduces what it calls a "concept layer" during the training phase. This layer acts as a structured filing system, bucketing data into traceable, human-understandable categories. The approach requires a significantly higher volume of upfront data annotation, a task that would be prohibitively expensive if done solely by humans, so Guide Labs uses other AI models to assist in the labeling process. The result is a model that is interpretable by design. When Steerling-8B makes a claim or adopts a specific tone, a developer can look under the hood and see which "concepts" were activated to produce that result.
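The article does not spell out how Steerling-8B's concept layer is built, so what follows is only a toy sketch of the general idea: route a hidden vector through a small set of named concepts, then compute the output from those concept scores alone. The concept names, weights, and function names are all hypothetical and chosen purely for illustration.

```python
import math

# Hypothetical concept names, chosen only for illustration.
CONCEPTS = ["finance", "medicine", "formal_tone"]


def sigmoid(x):
    """Squash a raw score into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def concept_activations(hidden, concept_weights):
    """Score each named concept from a hidden vector (one weight vector per concept)."""
    return {
        name: sigmoid(sum(h * w for h, w in zip(hidden, concept_weights[name])))
        for name in CONCEPTS
    }


def contributions(activations, output_weights):
    """Split a single output logit into one contribution per concept."""
    return {name: activations[name] * output_weights[name] for name in CONCEPTS}


# Toy numbers, not taken from any real model.
hidden = [1.0, -2.0, 0.5]
concept_weights = {
    "finance": [2.0, 0.0, 0.0],
    "medicine": [0.0, 1.0, 0.0],
    "formal_tone": [0.0, 0.0, 0.0],
}
output_weights = {"finance": 1.5, "medicine": -1.0, "formal_tone": 0.2}

acts = concept_activations(hidden, concept_weights)
parts = contributions(acts, output_weights)
logit = sum(parts.values())

# Because the logit is a plain sum over named concepts, each line below is a
# readable account of how much one concept pushed the output up or down.
for name in CONCEPTS:
    print(f"{name}: activation={acts[name]:.3f} contribution={parts[name]:+.3f}")
print(f"logit={logit:.3f}")
```

The point of the sketch is the bottleneck: since the output depends only on the named concept scores, the explanation is read directly off the computation instead of being reconstructed afterward.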

This breakthrough addresses one of the most significant hurdles in the adoption of AI within regulated industries. In the financial sector, for instance, the use of AI for credit scoring or loan applications has been hampered by legal requirements for explainability. If a model denies a loan, the institution must be able to prove that the decision was based on financial history rather than protected characteristics like race or gender. With a "black box" model, proving the absence of bias is a statistical nightmare. With the Guide Labs architecture, developers can theoretically "turn off" specific concept pathways, ensuring that the model’s reasoning remains within strictly defined parameters.
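To make the idea of "turning off" a concept concrete, here is a minimal sketch that assumes a decision score is a weighted sum over named concept activations. This is an illustrative assumption, not a description of Guide Labs' implementation, and the concept names and numbers are invented for the example.

```python
def score(activations, output_weights, disabled=frozenset()):
    """Weighted sum over concept activations, skipping any disabled concept."""
    return sum(
        value * output_weights[name]
        for name, value in activations.items()
        if name not in disabled
    )


# Toy values for a hypothetical loan-scoring model.
activations = {
    "payment_history": 0.9,
    "debt_to_income": 0.4,
    "protected_attribute": 0.7,
}
output_weights = {
    "payment_history": 2.0,
    "debt_to_income": -1.5,
    "protected_attribute": -0.8,
}

full = score(activations, output_weights)
ablated = score(activations, output_weights, disabled={"protected_attribute"})

print(f"score with all concepts:       {full:.2f}")
print(f"score with concept turned off: {ablated:.2f}")
```

Once a concept is disabled, its activation can no longer move the score, whatever value it takes. The sketch shows only the mechanism; it does not by itself rule out other concepts acting as proxies for the disabled one.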

The implications for the scientific community are equally profound. Deep learning has already revolutionized fields like proteomics, with models like AlphaFold predicting protein structures with unprecedented accuracy. However, for a scientist, knowing that a protein folds a certain way is often less important than knowing why. Interpretability allows researchers to extract new biological rules from the model’s internal logic, turning the AI into a partner in discovery rather than just a high-speed calculator.

However, a persistent concern within the AI research community is that imposing such rigid structure on a model might stifle its "emergent behaviors"—those surprising moments of generalization where an LLM learns to solve problems it wasn’t explicitly trained for. The "magic" of LLMs is often attributed to their ability to find non-linear connections across vast datasets. Critics of high-interpretability models fear that by forcing data into buckets, we might be limiting the model’s creative potential.

Adebayo and his team have countered this by tracking "discovered concepts." Even within their structured architecture, Steerling-8B has demonstrated the ability to identify and categorize complex topics, such as quantum computing, on its own. This suggests that interpretability and high-level reasoning are not mutually exclusive. In fact, Guide Labs claims that Steerling-8B achieves roughly 90% of the capability of frontier models while requiring significantly less training data, thanks to the efficiencies gained through its novel architecture.

The journey toward Steerling-8B began during Adebayo’s doctoral work at MIT. In 2020, he co-authored a seminal paper that cast doubt on the reliability of existing interpretability methods, showing that many of the tools developers used to "explain" their models were essentially producing visual noise. This realization, that the industry was building on a foundation of sand, led to the founding of Guide Labs. The company’s trajectory has been swift: it emerged from the Y Combinator accelerator and secured a $9 million seed round led by Initialized Capital in late 2024.

As Guide Labs prepares to scale its architecture to larger models and offer API access, the broader tech landscape is watching closely. We are approaching a crossroads in AI safety and ethics. As we move toward "agentic" AI—systems that can take actions in the real world, such as managing a supply chain or conducting scientific experiments—the "mystery" of AI decision-making becomes a liability. A model that hallucinates a fact in a poem is a nuisance; a model that hallucinates a reason for a medical diagnosis or a financial trade is a catastrophe.

The democratization of inherent interpretability could also serve as a powerful tool in the ongoing battle over intellectual property. One of the most contentious issues in AI today is the use of copyrighted materials in training sets. If a model can trace every token back to its source, it becomes possible to implement granular copyright controls, blocking the use of specific protected works or ensuring that creators are properly credited when their "concepts" are utilized. Similarly, it offers a more robust way to police content related to violence, self-harm, or illegal activities, moving beyond simple keyword filters toward controls that act on the concepts the model actually activates.

Adebayo’s vision is one of "human-in-the-loop" superintelligence. He argues that the current "primitive" state of AI training—where we essentially throw data into a furnace and hope for the best—must end if we are to coexist with increasingly powerful machines. "As we’re going after these models that are going to be super intelligent, you don’t want something to be making decisions on your behalf that’s sort of mysterious to you," he notes.

By shifting the conversation from the "science" of interpretability to the "engineering" of it, Guide Labs is signaling a maturation of the field. The release of Steerling-8B serves as a proof of concept that transparency does not have to come at the cost of performance. If the industry follows suit, the future of AI may not be a dark box we fear, but a clear window into a new kind of intelligence—one that we can finally, truly understand.

The road ahead for Guide Labs involves scaling this architecture to match the parameter counts of "frontier" models like GPT-4 or Claude 3. While 8 billion parameters is a significant milestone for an interpretable model, the true test will be whether this concept-layer approach can maintain its integrity when scaled to trillions of parameters. If it can, the "holy grail" of AI—a machine that is as explainable as it is brilliant—may finally be within reach. This shift could redefine the relationship between humans and their digital creations, replacing blind trust with verifiable logic. In a world increasingly governed by algorithms, the ability to ask "why" and get a straight answer is perhaps the most important innovation of all.