The foundational promise of privacy on social media platforms hinges on robust access controls. For Instagram, this is embodied by the ‘private account’ setting, a feature designed to act as a digital velvet rope, restricting visibility of photos, videos, and ephemeral Stories strictly to manually approved followers. However, recent disclosures from independent security researcher Jatin Banga have exposed a significant chink in this armor: evidence suggesting that certain private profile data, specifically direct links to images and their accompanying captions, was inadvertently served to unauthenticated users within the standard HTML response bodies of profile requests.

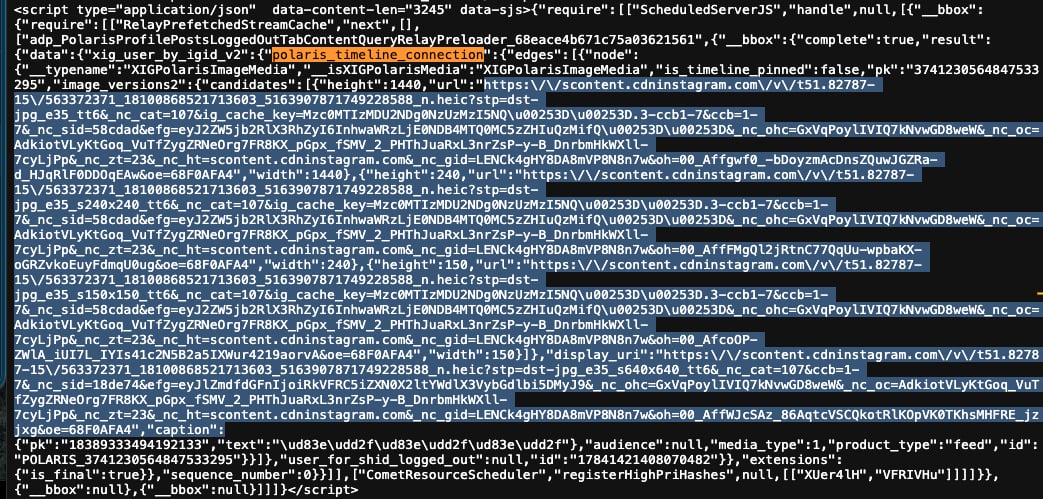

This vulnerability cuts to the core of data segregation within Meta’s vast infrastructure. When an unauthenticated visitor, who is not logged in and therefore cannot be an approved follower, navigates to a profile explicitly marked as private, they are met with the expected gatekeeping message: "This account is private. Follow to see their photos and videos." This visual facade, however, masked a deeper leak occurring at the server level. Banga’s meticulous documentation details how, under specific conditions (crucially, a User-Agent string mimicking certain mobile devices), the returned HTML source contained structured data objects, notably the polaris_timeline_connection JSON payload, that held encoded Content Delivery Network (CDN) URLs pointing directly to content that should have remained strictly behind the authentication wall.
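To make the mechanism concrete, here is a minimal Python sketch of how a saved response body could be checked for this kind of leak. It is an illustration under stated assumptions, not Banga's tooling: only the key name polaris_timeline_connection comes from the report, while the sample layout, the JSON-style escaping of the URLs, and the function name find_embedded_media_urls are hypothetical.

```python
import json
import re

# Key name taken from the report; everything around it is an assumed layout.
TIMELINE_KEY = "polaris_timeline_connection"

# Matches http(s) URLs, including JSON-escaped ones such as https:\/\/host\/path.
URL_PATTERN = re.compile(r'https?:\\?/\\?/[^"\s<>]+')


def _unescape(raw: str) -> str:
    """Decode JSON string escapes (for example \\/ and \\u0026) if possible."""
    try:
        return json.loads(f'"{raw}"')
    except json.JSONDecodeError:
        return raw.replace("\\/", "/")


def find_embedded_media_urls(html: str) -> list[str]:
    """Return URLs that appear after the timeline key in a response body.

    This is a heuristic text scan, not a full HTML or JSON parser.
    """
    start = html.find(TIMELINE_KEY)
    if start == -1:
        return []
    tail = html[start:]
    return [_unescape(match.group(0)) for match in URL_PATTERN.finditer(tail)]
```

On a body that carries only the "This account is private" notice and no timeline key, the function returns an empty list; any non-empty result for an unauthenticated request to a private profile would be worth investigating.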

Banga’s proof-of-concept, tested exclusively on accounts he owned or for which he had explicit permission, demonstrated the alarming scope of the flaw. His findings indicated that, across his controlled test pool, at least 28% of the private accounts exhibited this leakage, suggesting a systemic failure in backend authorization checks rather than an isolated server glitch. This is not merely about metadata exposure; it involves the direct revelation of media assets intended for a closed audience.

The Crux of the Vulnerability: Server-Side Authorization Failure

The researcher firmly rejects any characterization of the issue as a simple Content Delivery Network (CDN) caching error. Instead, Banga diagnoses the problem as a fundamental server-side authorization oversight: the server generating the initial profile page response failed to verify the requester’s permission status before embedding media pointers in the data it returned. While the front-end application layer correctly displayed the "Private Account" notice, the underlying payload transmitted to the browser or client application still carried those pointers.
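The distinction between a display-level notice and a server-side check can be sketched in a few lines of Python. This is a simplified model, not Instagram's code: the Profile type, its fields, and the functions can_view_media and build_profile_payload are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Profile:
    # Hypothetical model of a profile record.
    username: str
    is_private: bool
    approved_followers: set[str] = field(default_factory=set)
    media_urls: list[str] = field(default_factory=list)


def can_view_media(profile: Profile, viewer: Optional[str]) -> bool:
    """Decide on the server whether this viewer may receive media pointers."""
    if not profile.is_private:
        return True
    if viewer is None:  # unauthenticated request
        return False
    return viewer == profile.username or viewer in profile.approved_followers


def build_profile_payload(profile: Profile, viewer: Optional[str]) -> dict:
    """Build the response data; media is added only after the check passes."""
    payload = {
        "username": profile.username,
        "is_private": profile.is_private,
        "media": [],
    }
    if can_view_media(profile, viewer):
        payload["media"] = list(profile.media_urls)
    return payload
```

Because the media list is populated only after the authorization decision, the notice shown by the front end and the data actually sent cannot disagree in this sketch.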

The timeline of disclosure is as significant as the technical finding itself. Banga initiated contact with Meta on October 12, 2025. Following an initial report, the researcher engaged in protracted discussions, creating a second, more detailed submission to clarify the mechanism. Despite the seriousness of exposing private content, the resolution path was fraught with friction. Meta’s initial categorization of the issue was contested by Banga, who insisted the error resided in the application logic, not the caching layer.

Ultimately, after several days of communication, Meta closed the ticket, labeling the reported vulnerability as "not applicable" due to an inability to reproduce the exploit. Intriguingly, Banga noted that the exploit ceased functioning across all his test accounts around October 16, suggesting an intervention occurred within 48 to 96 hours of his initial report, even while the formal ticket remained unresolved or dismissed.

Banga chose public disclosure, documenting his extensive evidence, including communications with Meta and sample response files, on a public GitHub repository. He explicitly stated he forfeited any possibility of a bug bounty by choosing this route, prioritizing transparency over financial reward. His central grievance lies not just with the existence of the bug, but with Meta’s subsequent handling: "Their negligence and reluctance to investigate the actual root cause—despite having the logs—is the real issue," he remarked. The implication is clear: if the vulnerability was indeed patched quickly, Meta possessed the logs necessary to confirm the server-side authorization lapse, yet chose to close the report without internal confirmation or acknowledgment of the breach’s nature.

Industry Implications: Trust and the Complexity of Scale

This incident underscores a persistent challenge for hyper-scale platforms like Meta: maintaining granular security controls across billions of user interactions and evolving front-end/back-end architectures. Authorization logic, especially when dealing with complex, nested data structures like a social media timeline, is notoriously difficult to implement perfectly across all request paths.

The fact that the exploit required a specific mobile User-Agent highlights a common pitfall in modern web development: inconsistent security validation across different client types. APIs and web views optimized for mobile often have slightly different request headers or data retrieval sequences compared to desktop web interfaces. A security check that passes muster for one client environment might be entirely absent or bypassed in another, leading to authorization leakage.
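One way to guard against this class of inconsistency is to route every client-specific renderer through a single authorization function and to test the handler across User-Agent strings. The Python sketch below illustrates that idea only; the renderer names, the MOBILE_HINTS heuristic, and the leaking_user_agents helper are assumptions for this example and do not describe Meta's architecture.

```python
from typing import Callable, Iterable

# Crude, hypothetical heuristic for classifying a client as mobile.
MOBILE_HINTS = ("iPhone", "Android")


def is_mobile(user_agent: str) -> bool:
    return any(hint in user_agent for hint in MOBILE_HINTS)


def authorized_media(media: list[str], viewer_is_approved: bool) -> list[str]:
    """Single shared check used by every renderer."""
    return list(media) if viewer_is_approved else []


def render_desktop(media: list[str], viewer_is_approved: bool) -> dict:
    return {"layout": "desktop", "media": authorized_media(media, viewer_is_approved)}


def render_mobile(media: list[str], viewer_is_approved: bool) -> dict:
    return {"layout": "mobile", "media": authorized_media(media, viewer_is_approved)}


def handle_profile_request(user_agent: str, media: list[str], viewer_is_approved: bool) -> dict:
    """Pick a layout by client type; authorization does not depend on it."""
    renderer = render_mobile if is_mobile(user_agent) else render_desktop
    return renderer(media, viewer_is_approved)


def leaking_user_agents(
    handler: Callable[[str, list[str], bool], dict],
    user_agents: Iterable[str],
    media: list[str],
) -> list[str]:
    """Return the User-Agent strings for which an unapproved viewer gets media."""
    return [ua for ua in user_agents if handler(ua, media, False)["media"]]
```

Running leaking_user_agents against a handler with a representative list of desktop and mobile User-Agent strings should yield an empty list; any entry in the result marks a client path where the check is missing.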

For the broader technology industry, this incident serves as a potent reminder of the "security debt" that accrues with rapid feature deployment. When a core feature—privacy—relies on complex server logic, any failure in that logic can translate directly into a broad privacy breach. Furthermore, the researcher’s difficulty in achieving official acknowledgment of a server-side fix, despite the exploit stopping, raises crucial questions about platform transparency in vulnerability management. If a fix is deployed, users and researchers need assurance that the root cause was addressed, not just the symptom, especially when user privacy is at stake.

The researcher’s decision to publish stems partly from the difficulty of archiving the live exploit. He noted that standard archiving tools, like the Wayback Machine, failed to capture the necessary data because they did not replicate the precise mobile User-Agent and headers required to trigger the server-side leakage. This technical nuance further complicates external validation and historical auditing of such vulnerabilities, and leaves outside observers reliant on the platform vendor to be forthcoming about the exact nature of the fix.

Expert Analysis: The Danger of Data Ingestion Paths

From an architectural standpoint, the exposure of data via the polaris_timeline_connection object suggests that the data pipeline for serving profile views was fetching content objects without the requisite permission check. In high-throughput systems, engineers often rely on established internal data structures. One plausible scenario is that the initial query to the database or cache layer for a private profile fetched the media URLs because the system assumed the request was authenticated (perhaps based on a session cookie that was missing or misinterpreted in the specific mobile request flow). Those URLs would then have been serialized into the response payload before any final authorization step could strip them out.

This points toward a potential failure in the "defense-in-depth" strategy. Ideally, the system should have multiple layers:

- Session/Authentication Check: Is the user logged in?

- Authorization Check: Is the user authorized to view this specific resource (i.e., are they a follower)?

- Data Sanitization Layer: Before rendering the final HTML/JSON, scrub any resource pointers that fail authorization checks.

The leakage suggests that the third layer, or perhaps even the second, was bypassed or executed too late in the process for this specific request path. Where machine learning pipelines or internal tooling consume these raw server responses (even responses intended for a mobile client), such leakage can become a vector for large-scale unauthorized data harvesting.
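The three layers listed above can be sketched as a small Python pipeline. This is a toy model under stated assumptions: the field names in SENSITIVE_KEYS, the session lookup, and every function name are hypothetical, and a real system would enforce these checks in its data-access layer rather than on an already assembled dictionary.

```python
from typing import Any, Optional

# Hypothetical names of fields that must not reach unauthorized viewers.
SENSITIVE_KEYS = {"display_url", "video_url", "caption"}


def authenticate(session_token: Optional[str], sessions: dict[str, str]) -> Optional[str]:
    """Layer 1: map a session token to a username, or None if not logged in."""
    return sessions.get(session_token) if session_token else None


def is_authorized(viewer: Optional[str], owner: str, followers: set[str]) -> bool:
    """Layer 2: only the owner and approved followers may see private media."""
    return viewer is not None and (viewer == owner or viewer in followers)


def scrub(value: Any) -> Any:
    """Layer 3: recursively drop sensitive fields from a nested payload."""
    if isinstance(value, dict):
        return {k: scrub(v) for k, v in value.items() if k not in SENSITIVE_KEYS}
    if isinstance(value, list):
        return [scrub(v) for v in value]
    return value


def serve_private_profile(
    session_token: Optional[str],
    sessions: dict[str, str],
    owner: str,
    followers: set[str],
    raw_payload: dict,
) -> dict:
    viewer = authenticate(session_token, sessions)
    allowed = is_authorized(viewer, owner, followers)
    # Even if upstream code over-fetched, nothing sensitive leaves unscrubbed.
    return raw_payload if allowed else scrub(raw_payload)
```

The final scrub acts as a backstop: if an earlier stage fetched media pointers it should not have, the serialized response still omits them for viewers who fail the authorization check.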

Future Impact and Defensive Postures

The incident is likely to fuel ongoing debates within the security community regarding responsible disclosure timelines and platform accountability. Banga’s decision to push for transparency suggests a growing dissatisfaction with closed-loop remediation processes where fixes are implemented without external verification or acknowledgment of the severity.

Moving forward, social platforms must invest heavily in dynamic security testing that actively probes for authorization bypasses across all supported client environments. Automated systems should routinely scan server responses for PII or sensitive media URLs when requests are made with known unauthenticated or restricted credentials.
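A routine scan of this kind might look like the following Python sketch. It is a hedged illustration rather than a production scanner: the patterns in SENSITIVE_PATTERNS are deliberately crude, the Finding type and scan_unauthenticated function are hypothetical, and the fetch callable is injected so that any HTTP client (or a test double) can be supplied.

```python
import re
from dataclasses import dataclass
from typing import Callable, Iterable

# Crude, hypothetical patterns; a real scanner would need far more careful rules.
SENSITIVE_PATTERNS = {
    "media_url": re.compile(r'https?:(?:\\?/){2}[^"\s]*\.(?:jpg|jpeg|png|mp4)'),
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
}


@dataclass(frozen=True)
class Finding:
    url: str
    user_agent: str
    kind: str


def scan_unauthenticated(
    fetch: Callable[[str, dict[str, str]], str],
    private_profile_urls: Iterable[str],
    user_agents: Iterable[str],
) -> list[Finding]:
    """Request each private profile with no credentials and flag sensitive data.

    fetch(url, headers) must return the response body as text. Only a
    User-Agent header is sent, so every request is unauthenticated.
    """
    agents = list(user_agents)
    findings = []
    for url in private_profile_urls:
        for ua in agents:
            body = fetch(url, {"User-Agent": ua})
            for kind, pattern in SENSITIVE_PATTERNS.items():
                if pattern.search(body):
                    findings.append(Finding(url, ua, kind))
    return findings
```

Run against test accounts the operator controls, an empty result is the expected outcome; each Finding records which profile URL and which User-Agent produced a response that needs review.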

Furthermore, bug bounty programs, while beneficial, can incentivize silence until a bounty is confirmed. Researchers like Banga, who prioritize prompt public awareness of critical flaws over a payout, force platforms to confront issues rapidly, though that route can also lead to the disputes over reproducibility and classification described above.

For users, the takeaway is a stark reminder that "private" status on large social networks should be understood as a relative term. While the platform intends to protect data, architectural flaws, even those temporarily patched, demonstrate that the boundary between public and private data streams can be surprisingly porous. This vulnerability underscores the necessity for continuous, aggressive penetration testing focused not just on external entry points, but on internal data handling and serialization logic—the true weak points in application security at scale. The security posture of any major service is only as strong as its least rigorously validated server response.