The arrival of iOS 26.4, currently seeding in its first beta to developers and early adopters, signals a significant architectural pivot for Apple’s native podcasting application. The update hints at broader enhancements, including rumored advances in AI-driven music curation within the Music application and the gradual rollout of end-to-end encryption for RCS messaging (currently limited to iPhone-to-iPhone conversations), but the most tangible and strategically important change centers on Apple Podcasts and its handling of multimedia content. Specifically, the platform is adopting a unified playback experience for video podcasts, an approach long established on competing services, most notably YouTube Music.

The Historical Fragmentation of Video Podcasting on Apple Platforms

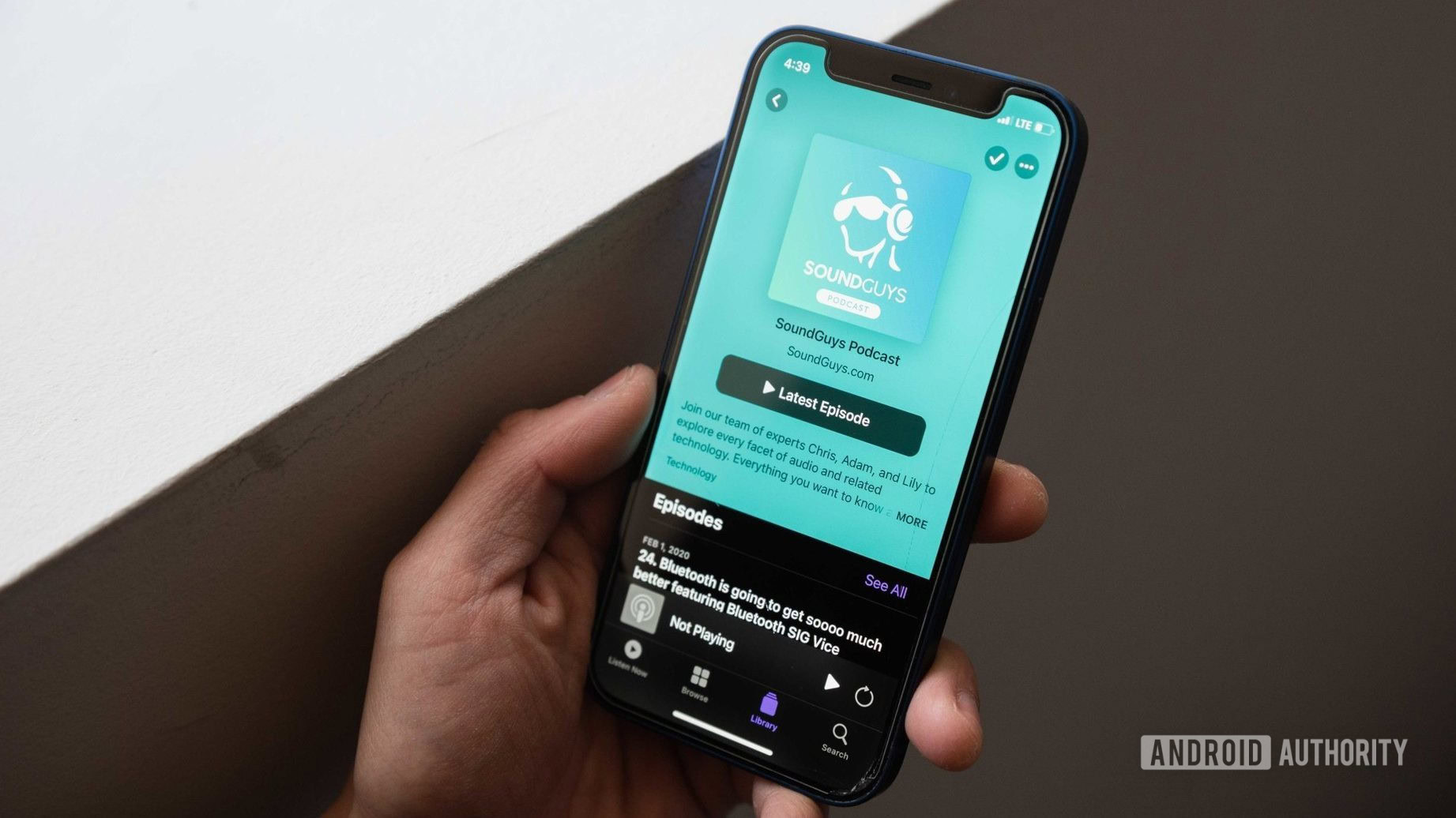

For years, video handling within the Apple Podcasts ecosystem has been characterized by a cumbersome duality. Creators wishing to offer both an audio and a video version of their episodes were compelled to maintain separate feeds: one audio-only, the other carrying the video version. This architecture fractured the end-user experience. A listener who wanted to watch an episode would have to manually switch applications or navigate complex settings, often losing their place in the audio timeline. On returning to the audio track, synchronization was frequently lost, requiring tedious manual scrubbing to resume at the intended point. This friction was a substantial barrier for video-first creators moving to Apple Podcasts and created unnecessary cognitive load for dedicated subscribers.

This upcoming overhaul, highlighted by Apple’s extensive documentation detailing the new framework, directly addresses this fragmentation. The core innovation lies in the integration of the two formats, audio and video, into a single, cohesive stream. This consolidation is powered by HTTP Live Streaming (HLS), the established adaptive bitrate streaming standard that Apple itself developed. By leveraging HLS, the platform can dynamically manage the stream, presenting video by default while keeping the audio track available as the fallback experience.
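To make the idea concrete, here is a minimal Python sketch of how a single HLS multivariant playlist can carry both an audio-only rendition and several video renditions, and how a player might pick between them. The `#EXTM3U` and `#EXT-X-STREAM-INF` tags and the `BANDWIDTH`, `RESOLUTION`, and `CODECS` attributes come from the HLS specification; everything else (the sample playlist, the file names, and the `parse_variants` and `choose_variant` helpers) is hypothetical and only illustrates the general technique, not Apple’s actual player logic.

```python
import re

# Hypothetical multivariant playlist: one audio-only variant plus two video variants.
SAMPLE_PLAYLIST = """#EXTM3U
#EXT-X-STREAM-INF:BANDWIDTH=128000,CODECS="mp4a.40.2"
audio_only.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=1400000,RESOLUTION=854x480,CODECS="avc1.64001e,mp4a.40.2"
video_480p.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=5000000,RESOLUTION=1920x1080,CODECS="avc1.640028,mp4a.40.2"
video_1080p.m3u8
"""

# Matches KEY=value pairs; quoted values may contain commas (e.g. CODECS).
ATTR_RE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')


def parse_variants(text):
    """Return one dict per #EXT-X-STREAM-INF entry in the playlist."""
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    variants = []
    for i, line in enumerate(lines):
        if line.startswith("#EXT-X-STREAM-INF:") and i + 1 < len(lines):
            attr_text = line.split(":", 1)[1]
            attrs = {k: v.strip('"') for k, v in ATTR_RE.findall(attr_text)}
            variants.append({
                "uri": lines[i + 1],                # the URI follows the tag line
                "bandwidth": int(attrs["BANDWIDTH"]),
                "has_video": "RESOLUTION" in attrs,  # assumption: audio-only variants omit RESOLUTION
            })
    return variants


def choose_variant(variants, video_enabled, available_bps):
    """Pick the best video variant that fits, else fall back to audio."""
    video = [v for v in variants
             if v["has_video"] and v["bandwidth"] <= available_bps]
    if video_enabled and video:
        return max(video, key=lambda v: v["bandwidth"])
    audio = [v for v in variants if not v["has_video"]]
    # Fall back to audio-only; if none exists, use the lowest-bandwidth variant.
    return min(audio or variants, key=lambda v: v["bandwidth"])


variants = parse_variants(SAMPLE_PLAYLIST)
print(choose_variant(variants, video_enabled=True, available_bps=2_000_000)["uri"])
print(choose_variant(variants, video_enabled=False, available_bps=2_000_000)["uri"])
```

In this sketch, turning video off or running short on bandwidth both resolve to the same audio-only rendition, which is one way to read the "video by default, audio as fallback" behavior described above.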

The YouTube Music Parallel: A Strategic Convergence

The functionality being introduced bears a striking resemblance to the mechanism already offered in YouTube Music. On that platform, when an artist releases a track with an accompanying music video, users are presented with an immediate toggle. This control, typically situated near the playback interface, allows instantaneous switching between the pure audio stream (useful for background listening or when data is constrained) and the full video presentation. This fluid interchangeability has become a cornerstone of modern, multi-modal media consumption.

Apple’s move to embed a similar, easily accessible "Turn video off" control directly above the standard play/pause interface in Podcasts acknowledges user expectations shaped by competing services. The Apple Music app currently segregates audio and video, treating them as distinct items rather than toggleable modes within a single playback view, so the Podcasts implementation suggests a forward-looking commitment to integrated multimedia consumption across Apple’s services portfolio. The critical element will be execution: ensuring that the transition between viewing and listening does not introduce latency, disrupt the flow of conversation, or, crucially, break the synchronization of associated metadata such as auto-generated chapter markers or interactive transcripts.

Expert Analysis: Technical Underpinnings and Creator Workflow

The technical migration to HLS is not merely an aesthetic upgrade; it is a fundamental shift in how Apple indexes and serves podcast content. Moving away from disparate RSS pointers for video and audio means that content delivery networks (CDNs) can optimize caching and bandwidth allocation more effectively. For creators, this necessitates an updated workflow managed through Podcast Connect. The support documentation now guides publishers toward utilizing third-party hosting solutions capable of serving HLS-compliant manifests, effectively standardizing the ingestion pipeline for rich media podcasts.

This standardization is vital for monetization. The beta rollout already hints at the integration of dynamic ad insertion capabilities specifically for video segments. This includes the potential for host-read video placements, a premium advertising format that commands higher CPMs. By creating a robust, unified delivery mechanism, Apple lowers the technical overhead for advertisers seeking to target viewers based on their consumption habits within the Podcasts application, potentially unlocking significant new revenue streams for top-tier video podcasters.

However, the initial beta, as observed by early testers, shows the expected teething issues. Reports indicate unstable synchronization of transcripts and chapter navigation when toggling between media formats. These glitches underscore how hard it is to switch streams in real time while keeping multiple data layers (audio, video, and text) aligned on the same timeline. Ironing out these bugs before the stable release of iOS 26.4 will be crucial to the feature’s long-term success. If the transition is jarring, users accustomed to the polish of competing platforms will likely revert to simpler audio-only consumption or migrate to platforms where video integration is already seamless.
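One common way to avoid this class of bug is to drive every layer from a single playback clock, so that toggling video changes only what is rendered and never the position. The Python sketch below illustrates that idea under stated assumptions: the `PlaybackState` class, the `current_index` helper, and the chapter data are all hypothetical and are not drawn from Apple’s implementation.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class PlaybackState:
    """Hypothetical player state: one shared clock for audio, video, and text."""
    position_s: float = 0.0
    video_enabled: bool = True

    def toggle_video(self):
        # Only the rendering mode changes; the shared position is untouched.
        self.video_enabled = not self.video_enabled


def current_index(start_times, position_s):
    """Index of the chapter (or transcript cue) active at position_s.

    start_times must be sorted ascending. Positions before the first
    start time map to index 0.
    """
    return max(bisect_right(start_times, position_s) - 1, 0)


# Hypothetical chapter list: (start time in seconds, title).
chapters = [(0.0, "Intro"), (60.0, "Interview"), (180.0, "Q&A")]
starts = [start for start, _ in chapters]

state = PlaybackState(position_s=95.0)
before = chapters[current_index(starts, state.position_s)][1]
state.toggle_video()
after = chapters[current_index(starts, state.position_s)][1]
print(before, after, state.video_enabled)
```

Because the chapter lookup depends only on `position_s`, the active chapter is the same before and after the toggle. The same lookup would work for transcript cues, given a sorted list of cue start times.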

Industry Implications: Elevating the Podcast Tier

This development has profound implications for the entire podcasting industry, particularly the burgeoning segment of "video podcasts." Major media companies and celebrities have increasingly invested in high-production-value video versions of their shows, often treating them as companion pieces to their primary audio releases. Until now, Spotify and YouTube have served as the default destinations for this premium content because of their native support for video playback.

Apple’s update effectively levels the playing field regarding core functionality. By making video podcasts a first-class citizen within its native app—an app bundled on every iPhone, iPad, and Mac—Apple dramatically increases the discoverability and accessibility of this content for its massive user base. For creators, this means retaining their audience within the walled garden, benefiting from Apple’s established subscription infrastructure and deep integration with the iOS experience (e.g., CarPlay, HomePod).

This move solidifies the industry trend toward multimedia convergence. The distinction between a traditional podcast and a video series is rapidly blurring. Platforms are evolving from simple audio aggregators into comprehensive media hubs. Amazon Music, Spotify, and now Apple Podcasts are all engaged in a strategic battle to become the single destination for all auditory and visual episodic content, recognizing that user engagement time—regardless of the medium—is the ultimate metric of platform success.

Contextualizing iOS 26.4: A Broader Platform Evolution

It is important to view this Podcasts enhancement within the wider context of the iOS 26.4 cycle. The operating system update is slated for a spring 2026 launch, a timeline that coincides with significant anticipation regarding Apple’s broader AI strategy, often referred to collectively as Apple Intelligence. The integration of advanced generative AI capabilities, including the rumored overhaul of Siri, has been a subject of intense speculation, particularly following reports that Apple is using foundation models from external partners such as Google to augment its own on-device processing.

The success of these sophisticated, interconnected features (whether AI-generated summaries, enhanced voice commands, or, in this case, seamless media switching) relies on a unified, modern streaming backbone. The HLS implementation in Podcasts serves as an early, practical demonstration of Apple’s capacity to deliver complex, synchronized multimedia experiences across its hardware and software stack. It may also help lay the technical foundation on which future AI features that depend on synchronized media can operate without disruption.

Future Trajectories and Competitive Dynamics

The immediate future suggests a vigorous competition in feature parity, especially regarding content consumption models. If Apple successfully rolls out this video integration without significant performance degradation, the pressure mounts on Spotify to ensure its own video podcast implementation remains superior or at least equivalent. Furthermore, the success of Apple’s dynamic ad insertion capabilities within this new HLS framework could dictate the next wave of investment from major studios into video podcast production specifically targeting the Apple ecosystem.

Looking further ahead, this development paves the way for genuinely interactive podcast experiences. With synchronized video, audio, transcripts, and chapters all accessible via a single stream, the pathway is clear for augmented reality overlays or deeper interactivity triggered by visual cues in the video track. Imagine a cooking podcast where tapping on an ingredient shown on screen instantly pulls up a shopping list integration, all managed through the same player interface.

In summary, the iOS 26.4 beta reveals Apple’s commitment to transforming its native Podcasts application from a simple audio delivery system into a fully capable multimedia consumption platform. By adopting the unified audio/video streaming model popularized by rivals like YouTube Music, Apple is resolving a significant usability roadblock for creators and subscribers alike. While the beta’s stability needs refinement, this architectural shift is a clear signal that video is no longer an afterthought in Apple’s podcast strategy; it is becoming central to how the company intends to capture user attention in the evolving landscape of digital episodic content. The success of this rollout will be a critical indicator of Apple’s ability to use its established streaming technology to reach parity and push innovation in the highly competitive media services sector.