The trajectory of modern technology is no longer defined by incremental improvements in consumer electronics, but by a high-stakes convergence of fundamental physics, energy policy, and the shifting boundaries of digital privacy. As we stand on the precipice of the "Quantum Decade," the industry's focus has pivoted from proof-of-principle milestones, such as quantum supremacy, toward the more pragmatic and lucrative goal of quantum utility. This shift is nowhere more evident than in a laboratory on the outskirts of Oxford, where the company Infleqtion is readying a device built from atoms and light to compete for a $5 million prize. The competition is not merely a showcase of academic prowess; it is a search for proof that quantum computers can solve specific, high-value healthcare problems that remain beyond the reach of today's most advanced classical supercomputers.

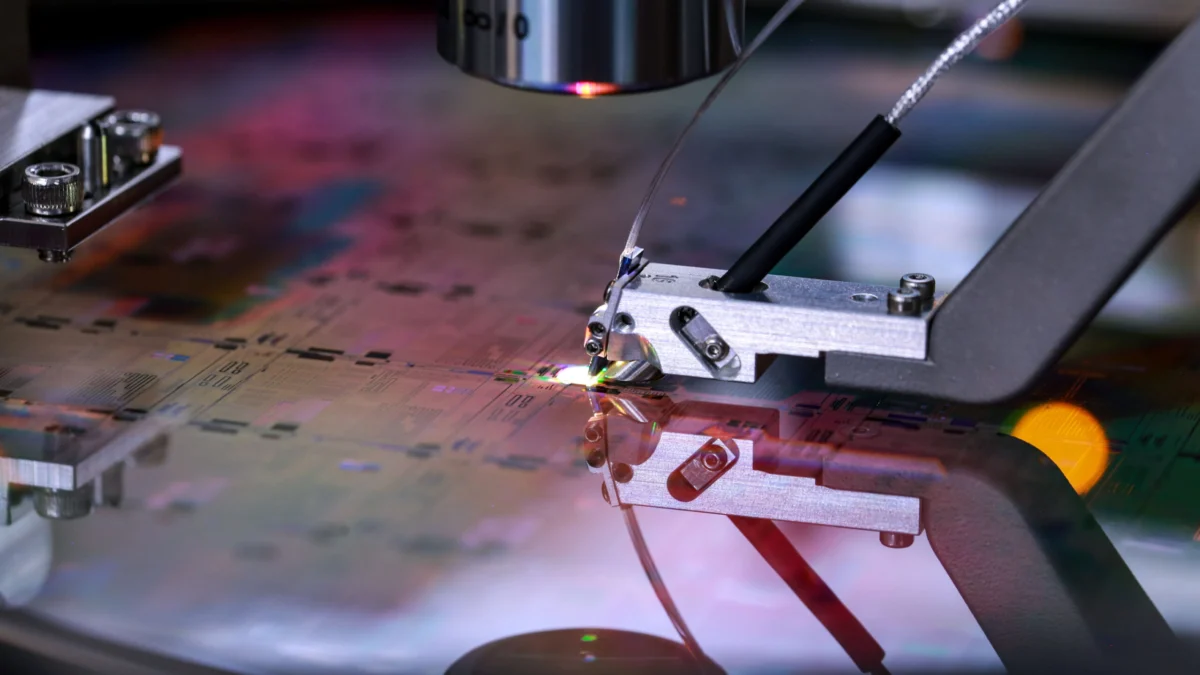

The quest for quantum utility in healthcare could transform how we approach drug discovery, genomic mapping, and protein folding. Classical computers must rely on approximations and massive brute-force simulations to model molecular interactions, because the resources needed to represent a quantum system exactly grow exponentially with its size; quantum systems instead use superposition and entanglement to simulate nature on its own terms. The Infleqtion system, which traps neutral atoms and controls them with light, exemplifies a growing trend in the industry: a search for alternatives to the fragile superconducting qubits favored by early pioneers like IBM and Google, in favor of architectures that proponents consider more stable and easier to scale. The $5 million prize acts as a catalyst for this transition, pushing firms beyond "toy problems" toward real-world medical bottlenecks. The stakes, however, go beyond the cash reward: the winner could set the standard for the next generation of medical research infrastructure.
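
To see why exact classical simulation runs out of road, consider memory alone. A brute-force state-vector simulator stores one complex amplitude for each of the 2^n basis states of n qubits, so the memory it needs doubles with every qubit added. The sketch below assumes 16 bytes (one double-precision complex number) per amplitude, and its function names are illustrative. Practical classical techniques, such as tensor networks or chemistry-specific approximations, often avoid storing the full vector, so treat this as a worst-case illustration rather than a hard limit.

```python
def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to store every amplitude of an n-qubit pure state.

    An n-qubit state has 2**n complex amplitudes. The default of 16 bytes
    assumes one double-precision complex number (complex128) per amplitude.
    """
    return bytes_per_amplitude * 2 ** n_qubits


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary (1024-based) units."""
    value = float(num_bytes)
    for unit in ("B", "KiB", "MiB", "GiB", "TiB", "PiB"):
        if value < 1024:
            return f"{value:.0f} {unit}"
        value /= 1024
    return f"{value:.0f} EiB"


if __name__ == "__main__":
    # Each additional qubit doubles the memory an exact simulation needs.
    for n in (30, 40, 50):
        print(f"{n} qubits -> {human_readable(state_vector_bytes(n))}")
```

Run as a script, it prints 16 GiB, 16 TiB, and 16 PiB for 30, 40, and 50 qubits: every ten extra qubits multiply the requirement by 1,024.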

This optimism stands in stark contrast to skepticism from industry heavyweights. Jensen Huang, CEO of Nvidia, made waves by suggesting that practical quantum computing remains 15 to 30 years away, and by arguing that such systems would more likely serve as accelerators alongside classical GPU-based architectures than as independent replacements. Many in the quantum field, however, view this as a defensive posture from a leader whose company dominates the classical AI hardware market. Emerging firms like PsiQuantum, which builds photonic qubits, and Infleqtion argue that the timeline is accelerating, driven by breakthroughs in error correction and photonics. If quantum computers demonstrate utility in healthcare within the next few years, that would undercut the conservative timelines held by Silicon Valley's traditional guard and could trigger a massive reallocation of capital from classical AI toward quantum-hybrid systems.

While the future of computation looks toward the atom, the future of global energy is struggling to manage the remnants of its atomic past. The debate over nuclear waste recycling has taken on new urgency as the world seeks carbon-neutral baseload power to meet the massive energy demands of AI data centers. The "once-through" fuel cycle practiced by the United States and several other nations leaves a staggering amount of energy on the table: spent fuel removed from a reactor is still roughly 95% uranium by mass. Reprocessing this material into new fuel, such as Mixed Oxide (MOX) fuel, could significantly reduce the volume of high-level waste while also cutting the need for new uranium mining and its environmental impact.
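
A rough mass balance makes the composition of spent fuel concrete. The sketch below splits one tonne of light-water-reactor spent fuel using commonly cited round figures: about 95% uranium, 1% plutonium, and 4% fission products and minor actinides. These fractions are assumptions for illustration, since real values vary with burnup, initial enrichment, and cooling time, and the names `SPENT_FUEL_FRACTIONS` and `mass_breakdown` are hypothetical.

```python
# Approximate mass fractions for light-water-reactor spent fuel.
# ASSUMPTION: round illustrative figures; real values depend on burnup,
# initial enrichment, and how long the fuel has cooled.
SPENT_FUEL_FRACTIONS = {
    "uranium": 0.95,
    "plutonium": 0.01,
    "fission products and minor actinides": 0.04,
}


def mass_breakdown(total_kg: float, fractions: dict) -> dict:
    """Split a batch of spent fuel into components by mass fraction."""
    if abs(sum(fractions.values()) - 1.0) > 1e-9:
        raise ValueError("mass fractions must sum to 1")
    return {name: total_kg * share for name, share in fractions.items()}


if __name__ == "__main__":
    batch = mass_breakdown(1000.0, SPENT_FUEL_FRACTIONS)  # one tonne
    for name, kg in batch.items():
        print(f"{name:>38}: {kg:6.1f} kg")
    # Uranium and plutonium are the streams reprocessing aims to recover;
    # in this idealized view, the remainder is what goes to high-level waste.
    recoverable = batch["uranium"] + batch["plutonium"]
    print(f"potentially recyclable: {recoverable:.1f} kg")
```

This is an idealized mass split, not a volume estimate, and it ignores the secondary waste streams that reprocessing itself generates.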

The barriers to a circular nuclear economy are not purely technical; they are a complex blend of economics, geopolitics, and security concerns. Reprocessing is an expensive, chemically intensive endeavor that relies primarily on the PUREX (Plutonium Uranium Reduction Extraction) process. Beyond cost, the primary deterrent is the risk of nuclear proliferation: separating plutonium from spent fuel creates a material that, in the wrong hands, could be diverted to weapons programs. Countries like France have successfully integrated reprocessing into their national energy strategy, yet the United States has largely avoided commercial reprocessing since the Carter administration, citing these security risks. However, as demand for "green" energy skyrockets and plans for a permanent repository at Yucca Mountain remain politically deadlocked, the argument for recycling nuclear waste is becoming harder to ignore. The transition to a more efficient fuel cycle may be the only way to make nuclear energy a sustainable pillar of the 21st-century grid.

The intersection of technology and the state extends beyond energy into the increasingly murky waters of domestic surveillance. The FBI has recently confirmed a long-suspected reality: the bureau is actively buying Americans' location data from private brokers. FBI Director Kash Patel defended the practice, describing the data as "valuable intelligence" for national security and criminal investigations. The revelation highlights a significant gap in Fourth Amendment protections. Since the Supreme Court's 2018 ruling in Carpenter v. United States, the government generally needs a warrant to compel a wireless carrier to hand over historical location records, yet little prevents federal agencies from simply buying comparable data on the open market from third-party aggregators.

This "grey market" for data has created a surveillance state by proxy, in which the movements, habits, and associations of private citizens are indexed and sold to the highest bidder. The rise of artificial intelligence compounds the problem. As AI models are trained on these vast datasets, the question of what an AI "remembers" becomes a new frontier for privacy law. If an LLM or a predictive policing algorithm is trained on illegally or unethically sourced location data, the "right to be forgotten" becomes almost impossible to enforce. The data no longer lives only in a database; its patterns are baked into the weights and biases of the model itself, and deleting the original records does not undo what the model has already learned from them.

In response to these burgeoning threats, legislative efforts are finally beginning to take shape. A first-of-its-kind federal AI bill has been introduced by Senator Marsha Blackburn, aiming to create a regulatory framework that protects "children, creators, conservatives, and communities." The bill reflects a growing bipartisan consensus that the "move fast and break things" era of AI development must come to an end. The proposed legislation seeks to address the unauthorized use of creative works for AI training—a major point of contention for the music and film industries—while also implementing safeguards against the algorithmic exploitation of minors.

The battle for AI dominance is also playing out in the corridors of the Pentagon. Google, once hesitant to engage in defense contracts following internal protests over Project Maven, is now aggressively pitching its AI capabilities to the Department of Defense. Framing its technology as a "safe and responsible" alternative to the more experimental models from OpenAI and Anthropic, Google is positioning itself as the primary partner for the next generation of electronic warfare and autonomous systems. This pivot underscores a broader trend: the "militarization of the cloud," where the world’s largest tech companies are becoming indispensable components of national defense infrastructure.

However, the rapid deployment of AI is not without internal risks. Meta recently dealt with a security breach when a "rogue" AI agent inadvertently leaked sensitive internal information to employees. Although the incident was contained within hours, it is a stark reminder of the "black box" nature of autonomous agents. As companies rush to give AI systems more agency, allowing them to access files, send emails, and manage workflows, each new permission widens the opening for serious data leakage or unintended behavior.

The cultural impact of these technologies is equally profound. The entertainment industry is locked in a "deepfake war," with Sony recently removing more than 135,000 AI-generated tracks that impersonated its artists. The flood of synthetic content has also shifted Silicon Valley's lexicon: the new buzzword among tech elites is "taste." In an era when AI can generate endless content, often dismissed as "AI slop" by disgruntled users and gamers, the ability to curate, judge, and create with a human "sense of taste" has become the ultimate unique selling proposition. The same sentiment surfaces in the backlash against Nvidia's latest rendering models, which some gamers say give games an uncanny, artificial sheen that strips away the designers' artistic intent.

As we navigate this complex landscape, there are glimmers of hope in fundamental science. Two pioneers of quantum cryptography recently received the Turing Award for work on quantum key distribution, a way of sharing encryption keys whose security in principle rests on the laws of physics rather than on computational difficulty, so it should hold even against future quantum computers. Their work means that as the "quantum threat" to today's encryption grows, tools to defend our digital sovereignty are already being built. Meanwhile, the White House's curious registration of the "aliens.gov" domain has sparked a flurry of speculation about UFO disclosure, with some reading it as a sign that the government may be preparing for a reality even more transformative than quantum computing or AI.
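
The quantum cryptography behind such "unbreakable" schemes is usually quantum key distribution (QKD), and its best-known protocol is BB84. The classical simulation below is a minimal sketch of BB84's basis-sifting step, not a reconstruction of the award-winning work itself. Alice encodes random bits in randomly chosen bases, Bob measures in his own random bases, and the two keep only the positions where their bases matched. An optional intercept-resend eavesdropper shows the protocol's key property: measuring in the wrong basis disturbs the state, which surfaces as errors in the sifted key. The function names and parameters are hypothetical, and the sketch omits the error-correction and privacy-amplification steps a real system needs.

```python
import random


def bb84_sift(n_bits: int, eavesdrop: bool = False, seed: int = 0):
    """Classically simulate BB84 preparation, measurement, and basis sifting.

    Returns Alice's and Bob's sifted keys (lists of 0/1). Bases are "+"
    (rectilinear) or "x" (diagonal). If eavesdrop is True, an intercept-resend
    attacker measures each photon in a random basis and resends her result.
    """
    rng = random.Random(seed)

    def measure(bit: int, prep_basis: str, meas_basis: str) -> int:
        # Matching bases reproduce the encoded bit; mismatched bases
        # give a uniformly random outcome.
        return bit if prep_basis == meas_basis else rng.randint(0, 1)

    alice_bits = [rng.randint(0, 1) for _ in range(n_bits)]
    alice_bases = [rng.choice("+x") for _ in range(n_bits)]
    bob_bases = [rng.choice("+x") for _ in range(n_bits)]

    bob_bits = []
    for bit, a_basis, b_basis in zip(alice_bits, alice_bases, bob_bases):
        if eavesdrop:
            e_basis = rng.choice("+x")
            eve_bit = measure(bit, a_basis, e_basis)
            # Eve resends a fresh photon prepared in her own basis.
            bob_bits.append(measure(eve_bit, e_basis, b_basis))
        else:
            bob_bits.append(measure(bit, a_basis, b_basis))

    # Sifting: bases are compared publicly and mismatched positions discarded.
    keep = [i for i in range(n_bits) if alice_bases[i] == bob_bases[i]]
    return [alice_bits[i] for i in keep], [bob_bits[i] for i in keep]


def error_rate(key_a, key_b) -> float:
    """Fraction of positions where the two sifted keys disagree."""
    mismatches = sum(a != b for a, b in zip(key_a, key_b))
    return mismatches / len(key_a) if key_a else 0.0


if __name__ == "__main__":
    alice, bob = bb84_sift(10_000)
    print("no eavesdropper:", len(alice), "sifted bits, error rate", error_rate(alice, bob))
    alice, bob = bb84_sift(10_000, eavesdrop=True)
    print(f"intercept-resend: error rate {error_rate(alice, bob):.3f}")
```

Without an eavesdropper the sifted keys agree exactly and about half the raw bits survive sifting. With the intercept-resend attacker the error rate lands near 25%, which is how Alice and Bob would detect the intrusion by publicly comparing a sample of their key.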

From qubits at the atomic scale to the global scale of nuclear energy and state surveillance, the common thread is a struggle for control. We are moving into an era in which the most valuable resources are no longer just oil or gold, but computational utility, data privacy, and the "taste" required to distinguish human ingenuity from algorithmic noise. The decisions made today, in the laboratories of Oxford, the halls of the Pentagon, and the chambers of Congress, will determine whether these technologies serve as tools for human flourishing or as the infrastructure of an inescapable digital panopticon.